I want to show you a way of picturing and thinking about matrices. The topic for today is the square matrix, which we'll call A. I'm going to show you a way of graphing square matrices, although we'll have to limit ourselves to the 2 x 2 case. That will be, as they say, without loss of generality. The technique I'm about to show you could be used with 3 x 3 matrices if you had a better three-dimensional monitor, and as will be revealed, it could be used on 3 x 2 and 2 x 3 matrices, too. If you had more imagination, we could use the technique on 4 x 4, 5 x 5, and even higher-dimensional matrices.

But we'll limit ourselves to 2 x 2. A might be

From now on, I'll write matrices as

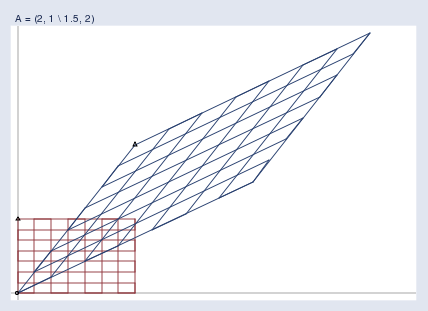

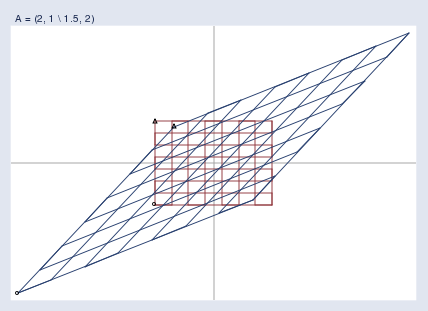

A = (2, 1 \ 1.5, 2)

where commas are used to separate elements on the same row and backslashes are used to separate the rows.

To graph A, I want you to think about

y = Ax

where

y: 2 x 1,

A: 2 x 2, and

x: 2 x 1.

That is, we're going to think about A in terms of its effect in transforming points in space from x to y. For instance, if we had the point

x = (0.75 \ 0.25)

then

y = (1.75 \ 1.625)

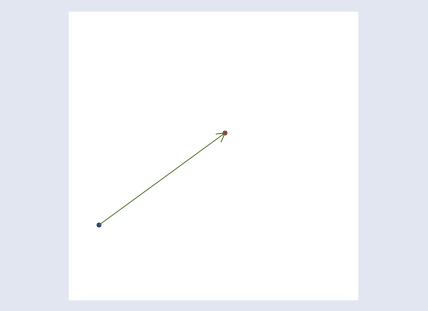

because by the rules of matrix multiplication y[1] = 0.75*2 + 0.25*1 = 1.75 and y[2] = 0.75*1.5 + 0.25*2 = 1.625. The matrix A transforms the point (0.75 \ 0.25) to (1.75 \ 1.625). We could graph that:

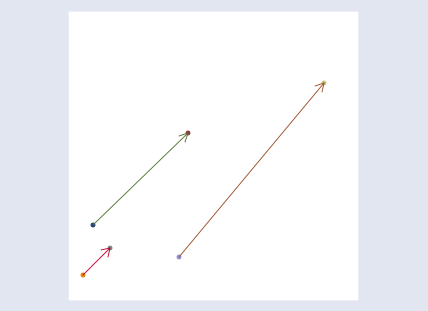
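The arithmetic for this point is easy to check by machine. Here is a minimal sketch, assuming Python with NumPy (my choice of tool for illustration, not something this post itself uses); the names `A`, `x`, and `y` follow the text.

```python
import numpy as np

# A = (2, 1 \ 1.5, 2) in the post's notation: backslashes separate rows
A = np.array([[2.0, 1.0],
              [1.5, 2.0]])
x = np.array([0.75, 0.25])   # the point x = (0.75 \ 0.25)

y = A @ x                    # matrix-vector product y = Ax
print(y.tolist())            # [1.75, 1.625]
```

The `@` operator performs the row-by-column sums written out above, so `y[0]` and `y[1]` here correspond to y[1] and y[2] in the text (Python counts from zero).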

To get a better understanding of how A transforms the space, we could graph more points:

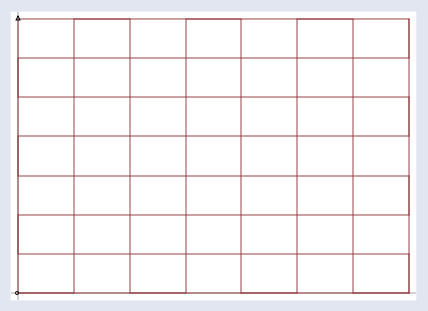

I don't want you to get lost among the individual points which A could transform, however. To focus better on A, we're going to graph y = Ax for all x. To do that, I'm first going to take a grid,

One at a time, I'm going to take every point on the grid, call the point x, and run it through the transform y = Ax. Then I'm going to graph the transformed points:

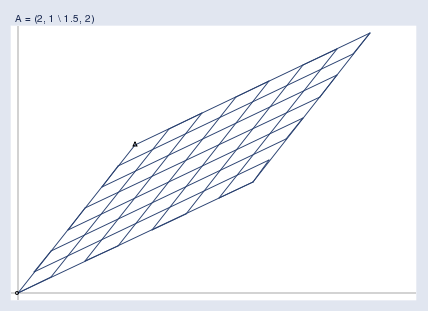

Finally, I'm going to superimpose the two graphs:

In this way, I can now see exactly what A = (2, 1 \ 1.5, 2) does. It stretches the space, and skews it.
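The grid procedure just described can be sketched in a few lines. This assumes Python with NumPy, and the grid itself (5 x 5 points on the unit square) is a hypothetical choice for illustration, not the grid behind the pictures.

```python
import numpy as np

A = np.array([[2.0, 1.0],
              [1.5, 2.0]])

# Hypothetical grid: 5 x 5 points covering the unit square in the first quadrant
ticks = np.linspace(0.0, 1.0, 5)
gx, gy = np.meshgrid(ticks, ticks)
grid = np.vstack([gx.ravel(), gy.ravel()])   # shape (2, 25); each column is one point x

transformed = A @ grid                       # applies y = Ax to every column at once

# The four corners of the unit square are enough to see the stretch and the skew
corners = np.array([[0.0, 1.0, 0.0, 1.0],
                    [0.0, 0.0, 1.0, 1.0]])
print((A @ corners).T.tolist())
# [[0.0, 0.0], [2.0, 1.5], [1.0, 2.0], [3.0, 3.5]]
```

The unit square becomes a larger, slanted parallelogram: the origin stays put, and the far corner (1 \ 1) moves out to (3 \ 3.5). Plotting `grid` and `transformed` on the same axes would give a picture like the superimposed graph.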

I want you to think about transforms like A as transforms of the space, not of the individual points. I used a grid above, but I could just as well have used a picture of the Eiffel Tower and, pixel by pixel, transformed it by using y = Ax. The result would be a distorted version of the original image, just as the grid above is a distorted version of the original grid. The distorted image might not be helpful in understanding the Eiffel Tower, but it is helpful in understanding the properties of A. So it is with the grids.

Notice that in the above image there are two small triangles and two small circles. I put a triangle and circle at the bottom left and top left of the original grid, and then again at the corresponding points on the transformed grid. They are there to help you orient the transformed grid relative to the original. They wouldn't be necessary had I transformed a picture of the Eiffel Tower.

I've suppressed the scale information in the graph, but the axes make it obvious that we are looking at the first quadrant in the graph above. I could just as well have transformed a wider area.

Regardless of the region graphed, you are supposed to imagine two infinite planes. I'll graph the region that makes it easiest to see the point I wish to make, but you must remember that whatever I'm showing you applies to the entire space.

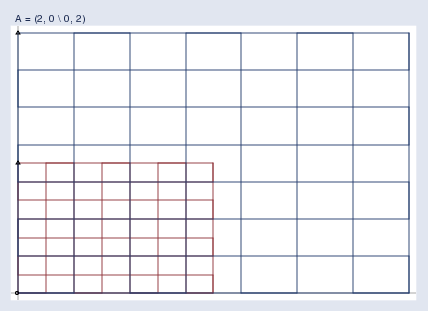

We need first to become familiar with pictures like this, so let's see some examples. Pure stretching looks like this:

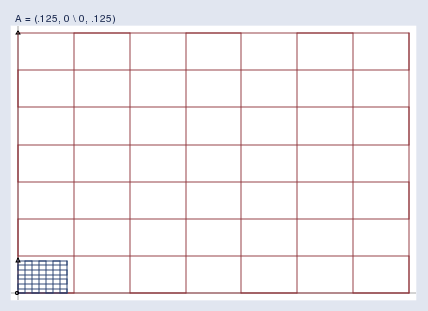

Pure compression looks like this:

Pay attention to the color of the grids. The original grid, I'm showing in red; the transformed grid is shown in blue.

A pure rotation looks like this:

Note the location of the triangle; this space was rotated around the origin.

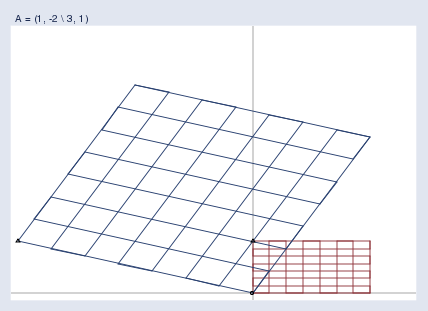

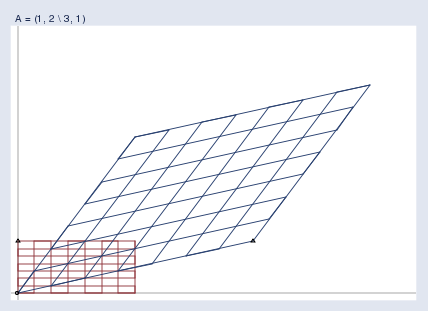
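For readers who want matrices to go with the stretching, compression, and rotation described above, here is a sketch assuming Python with NumPy. The three matrices are illustrative choices of mine; the post does not say which matrices produced its pictures.

```python
import numpy as np

# Assumed examples, not the matrices behind the pictures
stretch = np.array([[2.0, 0.0],
                    [0.0, 2.0]])     # doubles every coordinate
compress = np.array([[0.5, 0.0],
                     [0.0, 0.5]])    # halves every coordinate

theta = np.pi / 2                    # assumed angle: 90 degrees counterclockwise
rotate = np.array([[np.cos(theta), -np.sin(theta)],
                   [np.sin(theta),  np.cos(theta)]])

x = np.array([1.0, 0.0])             # a point on the horizontal axis
print(stretch @ x)                   # pushed farther out along the same axis
print(compress @ x)                  # pulled in toward the origin
print(np.round(rotate @ x, 12))      # swung onto the vertical axis, same length
```

Rounding the rotated point only hides floating-point dust from `cos(pi/2)`; a rotation leaves the length of `x` unchanged, which is what distinguishes it from the other two.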

Here's an interesting matrix that produces a surprising result: A = (1, 2 \ 3, 1).

This matrix flips the space! Notice the little triangles. In the original grid, the triangle is located at the top left. In the transformed space, the corresponding triangle ends up at the bottom right! A = (1, 2 \ 3, 1) appears to be an innocuous matrix (it doesn't even have a negative number in it) and yet somehow, it twisted the space horribly.
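One numeric way to detect a flip, which is an addition of mine rather than something the post uses, is the sign of the determinant: a negative determinant means the transform reverses orientation. A minimal sketch, assuming Python with NumPy:

```python
import numpy as np

A_flip = np.array([[1.0, 2.0],
                   [3.0, 1.0]])      # the matrix that flips the space
A_skew = np.array([[2.0, 1.0],
                   [1.5, 2.0]])      # the earlier stretch-and-skew matrix

# det = 1*1 - 2*3 = -5 for A_flip, and 2*2 - 1*1.5 = 2.5 for A_skew
print(np.linalg.det(A_flip) < 0)     # True: orientation is reversed
print(np.linalg.det(A_skew) < 0)     # False: orientation is preserved
```

So a matrix with no negative entries can still have a negative determinant, which is why the flip is not visible from the entries alone.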

So now you know what 2 x 2 matrices do. They skew, stretch, compress, rotate, and even flip 2-space. In a like manner, 3 x 3 matrices do the same to 3-space; 4 x 4 matrices, to 4-space; and so on.

Well, you are no doubt thinking, this is all very entertaining. Not really useful, but entertaining.

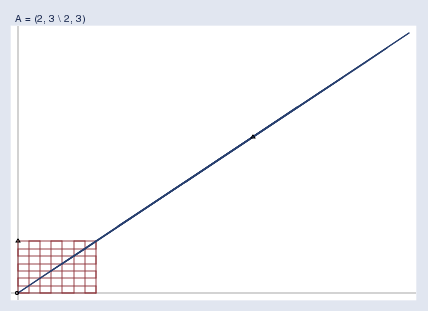

Okay, tell me what it means for a matrix to be singular. Better yet, I'll tell you. It means this:

A singular matrix A compresses the space so much that the poor space is squished until it is nothing more than a line. It is because the space is so squished after transformation by y = Ax that one cannot take the resulting y and get back the original x. Several different x values get squished into that same value of y. Actually, an infinite number do, and we don't know which you started with.

A = (2, 3 \ 2, 3) squished the space down to a line. The matrix A = (0, 0 \ 0, 0) would squish the space down to a point, namely (0 \ 0). In higher dimensions, say, k, singular matrices can squish space into k-1, k-2, …, or 0 dimensions. The number of dimensions is called the rank of the matrix.

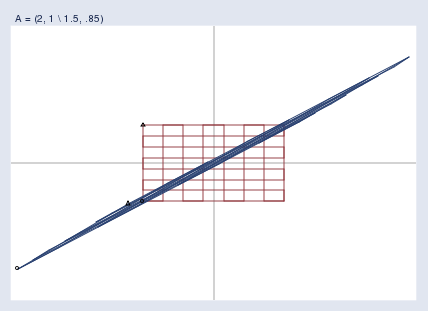
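The squishing and the rank can both be checked numerically. This is a sketch assuming Python with NumPy; the two points `x1` and `x2` are hypothetical examples chosen so that they collide.

```python
import numpy as np

A_line = np.array([[2.0, 3.0],
                   [2.0, 3.0]])          # squishes 2-space onto a line
A_point = np.zeros((2, 2))               # squishes 2-space onto the point (0 \ 0)

print(np.linalg.matrix_rank(A_line))     # 1
print(np.linalg.matrix_rank(A_point))    # 0

# Two different x values (hypothetical) that land on the same y
x1 = np.array([1.0, 0.0])
x2 = np.array([-0.5, 1.0])
print((A_line @ x1).tolist())            # [2.0, 2.0]
print((A_line @ x2).tolist())            # [2.0, 2.0]
```

Given only y = (2 \ 2), there is no way to tell whether we started from `x1`, `x2`, or any other point on the same line of preimages, which is exactly why the transform cannot be reversed.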

Singular matrices are an extreme case of nearly singular matrices, which are the bane of my existence here at StataCorp. Here is what it means for a matrix to be nearly singular:

Nearly singular matrices result in spaces that are heavily but not fully compressed. In nearly singular matrices, the mapping from x to y is still one-to-one, but x's that are far away from each other can end up having nearly equal y values. Nearly singular matrices cause finite-precision computers difficulty. Calculating y = Ax is easy enough, but to calculate the reverse transform x = A^(-1)y means taking small differences and blowing them back up, which can be a numeric disaster in the making.
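To make the "blowing back up" concrete, here is a sketch assuming Python with NumPy. The matrix `A_near` is a hypothetical example (the singular matrix (2, 3 \ 2, 3) with one element nudged), and the condition number at the end is a standard diagnostic that the post itself does not mention.

```python
import numpy as np

# Assumed example: a slightly nudged version of the singular matrix (2, 3 \ 2, 3)
A_near = np.array([[2.0, 3.0],
                   [2.0, 3.0001]])

# Two x's that are far apart ...
x1 = np.array([0.0, 0.0])
x2 = np.array([3.0, -2.0])
dist_x = np.linalg.norm(x2 - x1)                     # about 3.6
# ... land on nearly the same y
dist_y = np.linalg.norm(A_near @ x2 - A_near @ x1)   # about 0.0002
print(dist_x, dist_y)

# Going backward, a tiny change in y becomes a large change in x
y = A_near @ np.array([1.0, 1.0])
y_nudged = y + np.array([0.0, 1e-4])
x_back = np.linalg.solve(A_near, y)
x_back_nudged = np.linalg.solve(A_near, y_nudged)
print(x_back_nudged - x_back)                        # roughly (-1.5, 1.0)

print(np.linalg.cond(A_near))                        # large value flags near singularity
```

A change of 0.0001 in y moves the recovered x by more than a full unit. On a finite-precision computer, rounding error in y plays the role of that nudge.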

So much for the pictures illustrating that matrices transform and distort space; the message is that they do. This way of thinking can provide intuition and even deep insights. Here's one:

In the above graph of the fully singular matrix, I chose a matrix that not only squished the space but also skewed the space some. I didn't have to include the skew. Had I chosen matrix A = (1, 0 \ 0, 0), I could have compressed the space down onto the horizontal axis. And with that, we have a picture of nonsquare matrices. I didn't really need a 2 x 2 matrix to map 2-space onto one of its axes; a 2 x 1 vector would have been sufficient. The implication is that, in a very deep sense, nonsquare matrices are identical to square matrices with zero rows or columns added to make them square. You might remember that; it will serve you well.
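A small sketch of that equivalence, assuming Python with NumPy. One assumption to flag: so that y = Ax is conformable, the nonsquare version is written here as the 1 x 2 row that survives when the zero row of the square matrix is dropped.

```python
import numpy as np

A_square = np.array([[1.0, 0.0],
                     [0.0, 0.0]])    # compresses 2-space onto the horizontal axis
A_row = np.array([[1.0, 0.0]])       # assumed nonsquare form: the same matrix minus its zero row

x = np.array([0.75, 0.25])
print((A_square @ x).tolist())       # [0.75, 0.0]
print((A_row @ x).tolist())          # [0.75]
```

Both versions keep the first coordinate and discard the second; the square matrix merely carries along a coordinate that is always zero.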

Here's another insight:

In the linear regression formula b = (X'X)^(-1)X'y, (X'X)^(-1) is a square matrix, so we can think of it as transforming space. Let's try to understand it that way.

Begin by imagining a case where it just turns out that (X'X)^(-1) = I. In such a case, (X'X)^(-1) would have off-diagonal elements equal to zero, and diagonal elements all equal to one. The off-diagonal elements being equal to 0 means that the variables in the data are uncorrelated; the diagonal elements all being equal to 1 means that the sum of each squared variable would equal 1. That would be true if the variables each had mean 0 and variance 1/N. Such data may not be common, but I can imagine them.

If I had data like that, my formula for calculating b would be b = (X'X)^(-1)X'y = IX'y = X'y. When I first realized that, it surprised me because I would have expected the formula to be something like b = X^(-1)y. I expected that because we are finding a solution to y = Xb, and b = X^(-1)y is an obvious solution. In fact, that's just what we got, because it turns out that X^(-1)y = X'y when (X'X)^(-1) = I. They are equal because (X'X)^(-1) = I means that X'X = I, which means that X' = X^(-1). For this math to work out, we need a suitable definition of inverse for nonsquare matrices. But they do exist, and in fact, everything you need to work it out is right there in front of you.
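Data with X'X = I are easy to manufacture, so the claim can be tested. This is a sketch assuming Python with NumPy; the sizes, the seed, and the use of a QR decomposition to build X are my choices. Note that the columns built this way are orthonormal but not necessarily mean zero, which is all the algebra requires. The pseudoinverse from `np.linalg.pinv` stands in for the "suitable definition of inverse for nonsquare matrices".

```python
import numpy as np

rng = np.random.default_rng(0)                  # arbitrary seed, for reproducibility only
N, k = 50, 3                                    # assumed sizes
X, _ = np.linalg.qr(rng.normal(size=(N, k)))    # columns of X are orthonormal, so X'X = I
y = rng.normal(size=N)

b_full = np.linalg.solve(X.T @ X, X.T @ y)      # b = (X'X)^(-1) X'y
b_naive = X.T @ y                               # b = X'y

print(np.allclose(X.T @ X, np.eye(k)))          # True
print(np.allclose(b_full, b_naive))             # True
print(np.allclose(np.linalg.pinv(X), X.T))      # True: X' acts as the inverse of X
```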

Anyway, when correlations are zero and variables are appropriately normalized, the linear regression calculation formula reduces to b = X'y. That makes sense to me (now) and yet, it is still a very neat formula. It takes something that is N x k (the data) and makes k coefficients out of it. X'y is the heart of the linear regression formula.

Let's call b = X'y the naive formula because it is justified only under the assumption that (X'X)^(-1) = I, and real X'X inverses are not equal to I. (X'X)^(-1) is a square matrix and, as we have seen, that means it can be interpreted as compressing, expanding, and rotating space. (And even flipping space, although it turns out the positive-definite restriction on X'X rules out the flip.) In the formula (X'X)^(-1)X'y, (X'X)^(-1) is compressing, expanding, and skewing X'y, the naive regression coefficients. Thus (X'X)^(-1) is the corrective lens that translates the naive coefficients into the coefficients we seek. And that means X'X is the distortion caused by scale of the data and correlations of variables.

Thus I am entitled to describe linear regression as follows: I have data (y, X) to which I want to fit y = Xb. The naive calculation is b = X'y, which ignores the scale and correlations of the variables. The distortion caused by the scale and correlations of the variables is X'X. To correct for the distortion, I map the naive coefficients through (X'X)^(-1).
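That three-step description translates almost word for word into code. The sketch below assumes Python with NumPy and uses made-up data (two correlated variables on different scales, hypothetical true coefficients 1 and -2); `np.linalg.lstsq` serves only as an independent check.

```python
import numpy as np

rng = np.random.default_rng(1)                 # arbitrary seed
N = 200                                        # assumed sample size
x1 = rng.normal(size=N)
x2 = 0.8 * x1 + 10.0 * rng.normal(size=N)      # correlated with x1 and on a larger scale
X = np.column_stack([x1, x2])
y = X @ np.array([1.0, -2.0]) + rng.normal(size=N)   # hypothetical true coefficients

naive = X.T @ y                                # naive coefficients X'y
distortion = X.T @ X                           # distortion from scale and correlation
b = np.linalg.solve(distortion, naive)         # map the naive coefficients through (X'X)^(-1)

b_check, *_ = np.linalg.lstsq(X, y, rcond=None)
print(np.allclose(b, b_check))                 # True
print(naive, b)                                # naive values are far from b; corrected ones are near (1, -2)
```

Using `solve` rather than forming the inverse explicitly is a numerical nicety; it applies the same corrective lens.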

Intuition, like beauty, is in the eye of the beholder. When I learned that the variance matrix of the estimated coefficients was equal to s^2(X'X)^(-1), I immediately thought: s^2, there's the statistics. That single statistical value is then parceled out through the corrective lens that accounts for scale and correlation. If I had data that didn't need correcting, then the standard errors of all the coefficients would be the same and would be identical to s, the square root of the variance of the residuals.
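The no-correction case can be checked directly. This sketch assumes Python with NumPy, builds X with orthonormal columns via QR (so that X'X = I), and uses hypothetical coefficients; in that case every standard error comes out equal to s, the square root of the residual variance s^2.

```python
import numpy as np

rng = np.random.default_rng(2)                     # arbitrary seed
N, k = 50, 3                                       # assumed sizes
X, _ = np.linalg.qr(rng.normal(size=(N, k)))       # X'X = I: data that need no correcting
y = X @ np.array([1.0, 2.0, 3.0]) + rng.normal(size=N)   # hypothetical coefficients

b = X.T @ y                                        # the naive formula is exact here
resid = y - X @ b
s2 = resid @ resid / (N - k)                       # variance of the residuals
V = s2 * np.linalg.inv(X.T @ X)                    # variance matrix s^2 (X'X)^(-1)
se = np.sqrt(np.diag(V))                           # standard errors of the coefficients

print(np.allclose(se, np.sqrt(s2)))                # True: all standard errors equal s
```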

If you go through the derivation of s^2(X'X)^(-1), there's a temptation to think that s^2 is merely something factored out from the variance matrix, probably to emphasize the connection between the variance of the residuals and standard errors. One easily loses sight of the fact that s^2 is the heart of the matter, just as X'y is the heart of (X'X)^(-1)X'y. Obviously, one needs to view both s^2 and X'y through the same corrective lens.

I have more to say about this way of thinking about matrices. Look for part 2 in the near future. Update: part 2 of this posting, "Understanding matrices intuitively, part 2, eigenvalues and eigenvectors", may now be found at http://weblog.stata.com/2011/03/09/understanding-matrices-intuitively-part-2/.