Cisco Live US 2026 is just around the corner, and this year’s event promises to be bigger and better than ever. We’re bringing together an impressive lineup of 24 Cisco Networking App Marketplace partners who are ready to showcase cutting-edge solutions, share real-world expertise, and connect with the Cisco community.

Whether you’re looking to explore new integrations, discover AI-powered automation tools, or learn how leading organizations are transforming their networks, our marketplace partners have something for everyone.

Who’s Attending CLUS 2026?

Our ecosystem spans multiple solution areas:

Wireless & Network Optimization: Ekahau, Wyebot, 7SIGNAL, and Hamina are bringing Wi-Fi design precision, client-side intelligence, network visibility, and advanced wireless planning solutions to enhance performance and troubleshooting across Meraki and Catalyst Center environments.

Network Operations & Automation: Red Hat, NetBox, Auvik, NetOp, IP Fabric, Zabbix, ManageEngine, ElastiFlow, and Progress Software are demonstrating how to simplify operations through intelligent automation, network documentation, infrastructure intelligence, and comprehensive monitoring.

Security & Policy Management: ORDR, Claroty, BackBox, Firemon, AlgoSec, and Tufin are highlighting zero-trust architectures, AI-driven threat detection, policy automation, and network security management.

Infrastructure & Connectivity: Megaport, BlueCAT, and EfficientIP are presenting solutions for network infrastructure management, DNS/DHCP, service delivery, and network telemetry.

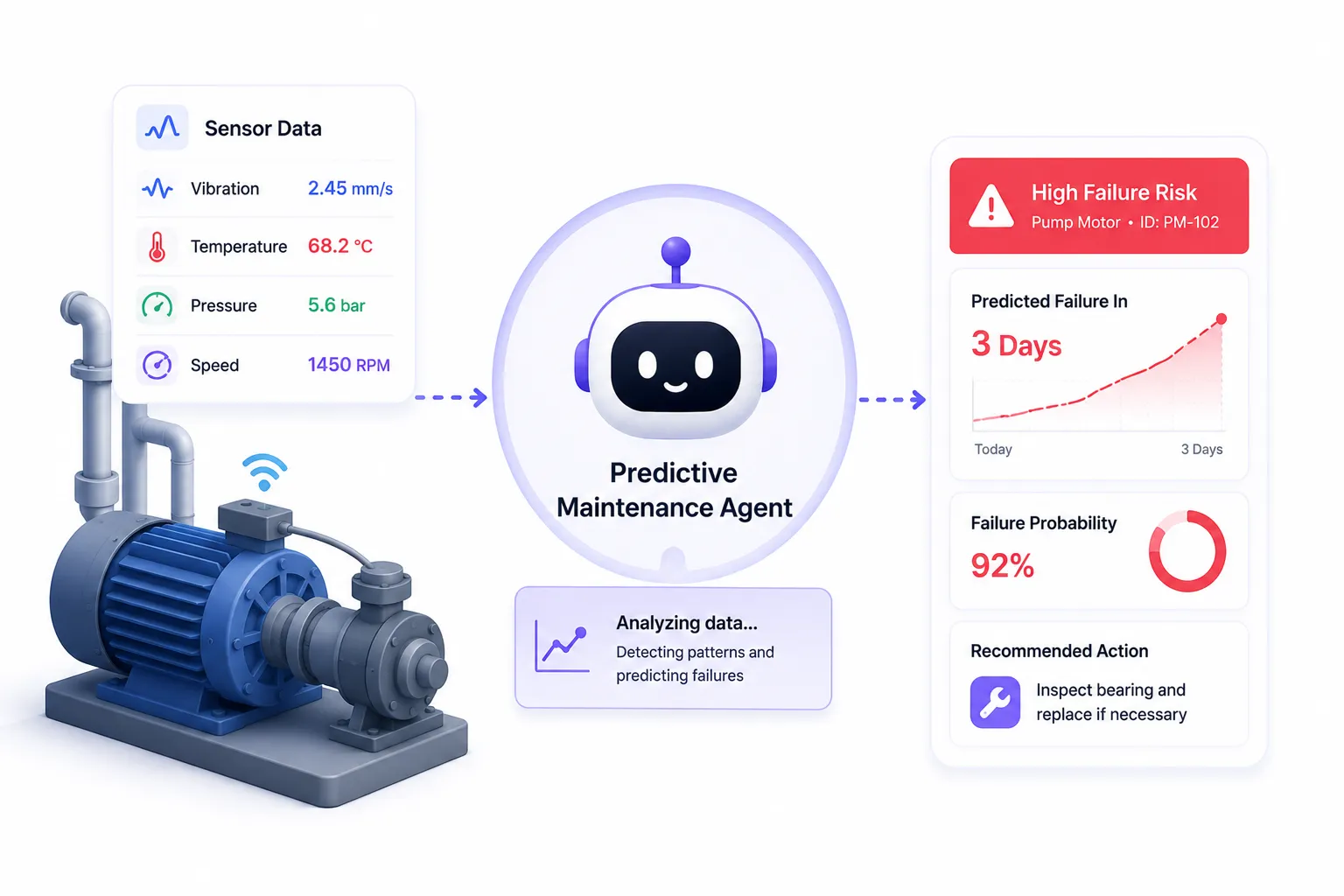

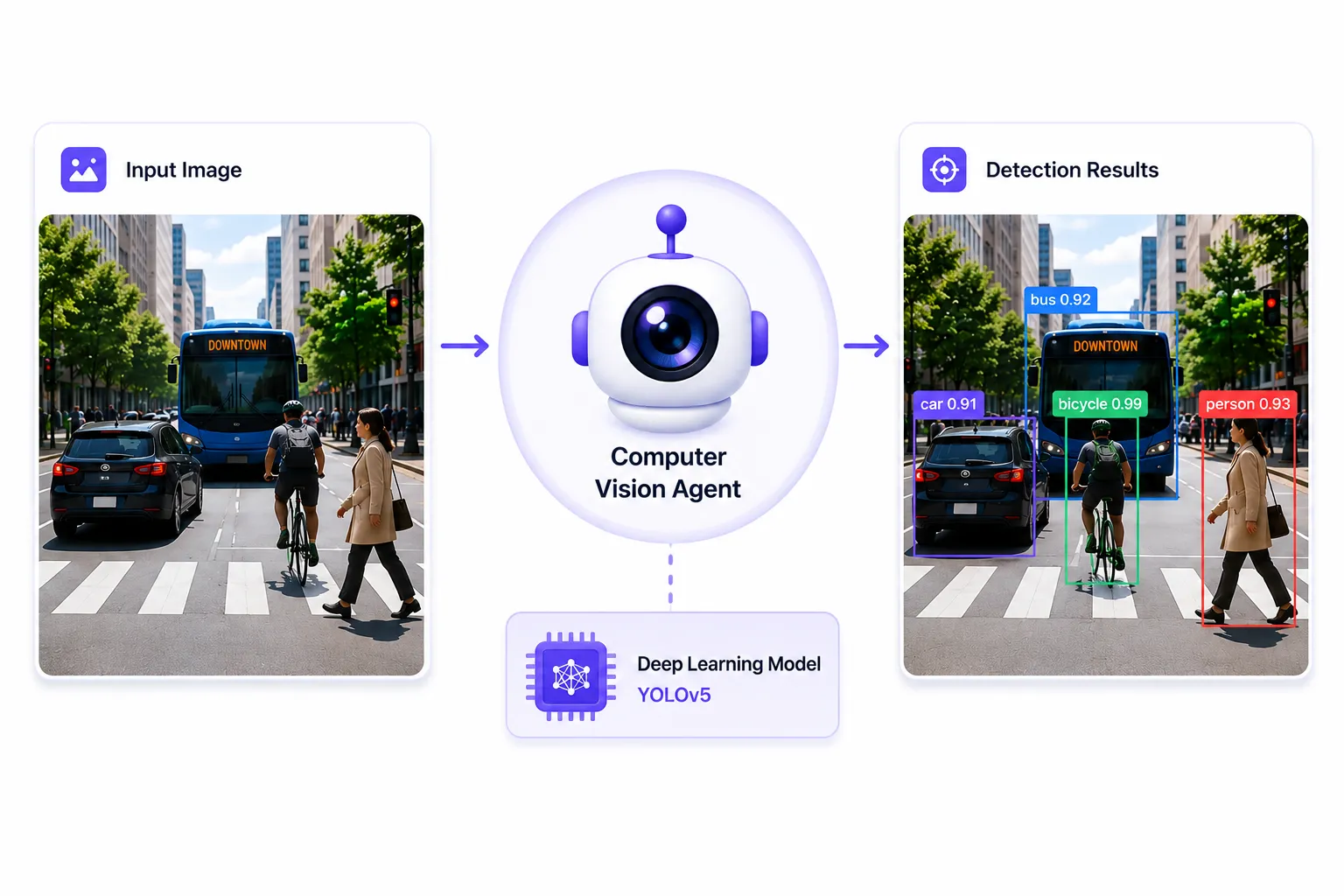

Specialized Solutions: SingleWire brings modern critical communication capabilities, while OnStak delivers computer vision and IoT management.

What to Expect On-Site

Live Demonstrations & Booth Experiences: Partners like Ekahau will run multiple daily demos with dedicated demo stations. Wyebot is bringing interactive booth experiences with giveaways and exclusive swag. Many partners are hosting private meeting suites for one-on-one conversations.

Expert-Led Speaking Sessions: Over 15 dedicated sessions featuring partner experts and Cisco customers discussing real-world use cases:

Monday, June 1

- 10:30 AM – 11:15 AM PDT: How Megaport Built the World’s Largest NaaS by Partnering with Cisco [CSSMSI-2000]

  Speakers: Alex Mitev (Cisco SE) & Cameron Daniel (Megaport CTO)

- 11:30 AM – 11:50 AM PDT: The Unified Edge: Orchestrating Cisco Meraki, Catalyst, and Intersight with Ansible [WOSPAR-2014]

  Speaker: Steve Fulmer, Product Manager, Red Hat

- 11:30 AM – 11:50 AM PDT: Building the Future: Scalable Wireless Designs for the Wi-Fi 7 Era [WOSPAR-1007]

  Speaker: Matt Starling, Sr. Director Product Marketing, Ekahau

- 2:30 PM – 2:50 PM PDT: Modern Network Operations: Staying in Control with AI-Guided Action from Auvik Aurora [WOSPAR-2036]

  Speaker: Hao Zhang, Manager Sales Engineering, Auvik

- 3:00 PM – 3:20 PM PDT: From AI-Driven Insights to Action: Automating Network Resilience at Scale [WOSPAR-2039]

  Speaker: Irfahn Khimji, Field CTO, BackBox

- 3:30 PM – 3:50 PM PDT: Beyond Observability: Agentic AI for Network Operations [WOSPAR-2050]

  Speaker: Bibi Rosenbach, CEO, NetOp CLD Ltd

Tuesday, June 2

- 11:00 AM – 11:20 AM PDT: ORDR AI for Total Cisco Visibility and Zero Trust Policy Automation [WOSPAR-2037]

  Speaker: Craig Hyps, Fellow Solutions Architect, ORDR

- 5:00 PM – 5:30 PM PDT: Backbone to the Future: Scaling AI with Megaport’s Global NaaS [CENSPG-1021]

  Speakers: Guru Shenoy (SVP Product Management, Cisco), Michael van Rooyen (EVP Global Innovation, Megaport), Bill Gartner (SVP/GM Optical Systems, Cisco)

Wednesday, June 3

- 11:00 AM – 11:20 AM PDT: Precision Performance: Navigating End-to-End Connectivity Troubleshooting [WOSPAR-1008]

  Speaker: Matt Starling, Sr. Director Product Marketing, Ekahau

- 11:00 AM – 11:20 AM PDT: Seconds Matter: Enhancing Safety with Cisco and Modern Critical Communication Solutions [WOSPAR-2006]

  Speaker: Ken Rosko, Channel Manager, SingleWire Software

- 11:30 AM – 11:45 AM PDT: Lead in 15 – The Visibility Imperative: Using Personal Branding to Unite Distributed Teams [ITLGEN-2503]

  Speaker: Alexis Bertholf, Megaport

- 12:30 PM – 12:50 PM PDT: Solving the Network Visibility Gap With AI-Driven Experience Insights [WOSPAR-2035]

  Speaker: Eric Camulli, 7SIGNAL, Inc.

- 1:00 PM – 1:45 PM PDT: Transforming Cisco Network Operations with Ansible Automation Platform: From Playbooks to Intelligent Automation [DEVNET-2838]

  Speaker: Sagar Paul, Senior Software Engineer, Red Hat

- 2:15 PM – 3:00 PM PDT: Authenticity in Action: How Adaptability and Advocacy Shape Tech Careers [CENLTF-1026]

  Speakers: Nicole Soloko (Director Strategy & Planning, Cisco), Marc Moffett (VP Solutions Engineering Americas, Cisco), Alex Sapiz (SVP Corporate Marketing, Cisco), Alexis Bertholf (Megaport)

- 3:30 PM – 3:50 PM PDT: The Small Wins That Save Millions: How Under Armour Uses IP Fabric to Keep Big Projects Moving [WOSPAR-2046]

  Speakers: Chris Dooly (Sr Lead Network Engineer, Under Armour), Lauren Malhoit (Global Director Solution Architecture, IP Fabric)

- 5:00 PM – 5:45 PM PDT: Simplifying Network Operations: Cisco Catalyst Center & Meraki Integrations with NetBox [CISCOU-2640]

  Speaker: Ben Bowling, Solutions Engineer, NetBox Labs

Full Partner Lineup at CLUS 2026

Gold Sponsors: Red Hat, Ekahau, BlueCAT

Bronze Sponsors: Hamina, AlgoSec, Wyebot, IP Fabric, EfficientIP, ManageEngine, Tufin, NetBox, Progress Software

Copper Sponsors: ElastiFlow, Zabbix, OnStak, Megaport

Village Sponsors: SingleWire (Collaboration Village), Auvik (Networking Village), 7SIGNAL (Networking Village), ORDR (Security Village), Claroty (Security Village), BackBox (Security Village), Firemon (Security Village), NetOp (Networking Village)

How to Connect

Find Your Partners: Look for our partners across the World of Solutions sponsorship areas, Security Village, Networking Village, and Collaboration Village. A visual guide will be available on-site to help you navigate.

Attend Sessions: Review the Cisco Live Session Catalog and add partner-hosted sessions to your schedule before you arrive.

Schedule Meetings: Many partners have dedicated meeting spaces available. Connect with them in advance to lock in time for deeper conversations about your specific needs.

Why Partner Solutions Matter

The Cisco Networking App Marketplace is home to over 350 applications purpose-built to extend Cisco’s capabilities. From AI-driven troubleshooting to automated compliance, from wireless optimization to infrastructure visibility, these solutions help organizations accelerate digital transformation and maximize their Cisco investments.

At CLUS 2026, you’ll see firsthand how these partnerships are driving innovation across industries.

Ready to explore? Register for Cisco Live US 2026

Learn more about the Cisco Networking App Marketplace

Connect with us on social: #CiscoLive #CiscoPartners
