Recent advances in large language models (LLMs) have enabled AI agents to perform increasingly complex tasks in web navigation. Despite this progress, effective use of such agents still depends on human involvement to correct misinterpretations or adjust outputs that diverge from user preferences. However, current agentic systems lack an understanding of when and why humans intervene. As a result, they may overlook user needs and proceed incorrectly, or interrupt users too frequently with unnecessary confirmation requests.

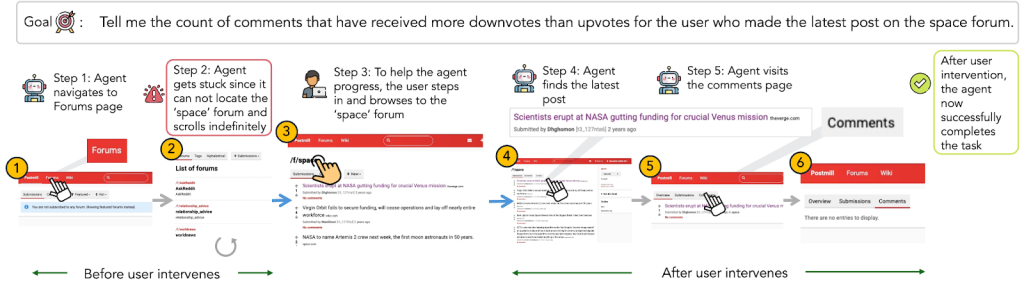

This blog post is based on our recent work, Modeling Distinct Human Interaction in Web Agents, where we shift the focus from autonomy to collaboration. Instead of optimizing agents solely for an end-to-end autonomous pipeline, we ask: can agents anticipate when humans are likely to intervene?

To formulate this task, we collect CowCorpus, a novel dataset of interleaved human and agent action trajectories. Compared to existing datasets, which contain either only agent trajectories or only human trajectories, CowCorpus captures collaborative task execution by a team of one human and one agent. In total, CowCorpus has:

- 400 real human–agent web sessions

- 4,200+ interleaved actions

- Step-level annotations of intervention moments

We curate CowCorpus from 20 real-world users using CowPilot, an open-source artifact by the same research team. CowPilot is built as a general-purpose Chrome extension that works on any website. It is also easy to install, which made the annotation process simpler for our participants. In CowPilot, we showed how collaboration works. In PlowPilot, we want to make it adaptive.

To ensure CowCorpus is consistent with established benchmarks and reflects individual user preferences, we include a mix of benchmark tasks and free-form tasks in our dataset:

- 10 standard tasks from the Mind2Web dataset (Deng et al., 2024): These help us understand how collaboration varies among participants under a fixed task setup.

- 10 free-form tasks of the participants' own choice: These help us understand what kinds of web tasks people want to automate.

In total, CowCorpus covers 9 task categories.

We analyze when human interventions occur during collaborative task execution and how such temporal patterns vary across users. Using participant-level measures, we cluster users by interaction behavior with 𝑘-means (𝑘=4). This analysis reveals four distinct and stable groups of users with qualitatively different patterns of intervention timing and control sharing. Based on cluster centroids and representative trajectories, we characterize the four groups as follows:

- Takeover: Users intervene infrequently and typically late in the task. When they do step in, they tend to retain control rather than returning it to the agent, resulting in low handback rates. These interventions often coincide with completing the task themselves rather than correcting the agent mid-execution.

- Hands-on: Users intervene frequently and with high intensity. Their interventions tend to occur relatively late in the trajectory, but unlike Takeover users, they repeatedly alternate control with the agent, leading to medium handback rates and sustained joint execution.

- Hands-off: Users rarely intervene throughout the task. They exhibit low intervention frequency and intensity, allowing the agent to execute most trajectories end-to-end with minimal human involvement.

- Collaborative: Users intervene selectively and consistently return control to the agent. This group is characterized by high handback rates and earlier intervention positions, reflecting targeted, short-lived interventions that support ongoing collaboration.

Overall, users exhibit systematic differences in when interventions occur, how much they intervene, and whether control is relinquished afterward. Such temporal intervention patterns are consistent across tasks and motivate modeling distinct human–agent interaction patterns.
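To make the clustering step concrete, here is a minimal sketch of 𝑘-means with 𝑘=4 over per-user feature vectors. It is an illustration under stated assumptions, not the pipeline used for CowCorpus: the three features (intervention rate, mean intervention position, handback rate), the eight synthetic users, and the deterministic farthest-first seeding are all choices made here for the example.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def farthest_first_init(points: List[Point], k: int) -> List[Point]:
    """Deterministic seeding (an assumption of this sketch): start from the
    first point, then repeatedly add the point farthest from all chosen seeds."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(sq_dist(p, c) for c in centroids))
        )
    return centroids


def kmeans(points: List[Point], k: int, max_iters: int = 100):
    """Plain Lloyd's algorithm: alternate assignment and centroid updates."""
    centroids = farthest_first_init(points, k)
    labels: List[int] = []
    for _ in range(max_iters):
        # Assignment step: each user goes to the nearest centroid.
        labels = [
            min(range(k), key=lambda c: sq_dist(p, centroids[c])) for p in points
        ]
        # Update step: each centroid becomes the mean of its members.
        updated = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                updated.append(tuple(sum(d) / len(members) for d in zip(*members)))
            else:
                updated.append(centroids[c])  # keep an empty cluster's centroid
        if updated == centroids:
            break  # converged
        centroids = updated
    return labels, centroids


# Synthetic users (NOT CowCorpus data). Hypothetical features per user:
# (intervention rate, mean intervention position in [0, 1], handback rate)
users: List[Point] = [
    (0.10, 0.85, 0.10), (0.12, 0.80, 0.15),  # rare, late, low handback
    (0.60, 0.70, 0.50), (0.55, 0.75, 0.45),  # frequent, late, medium handback
    (0.02, 0.50, 0.40), (0.03, 0.45, 0.45),  # almost never intervene
    (0.30, 0.30, 0.90), (0.28, 0.35, 0.95),  # selective, early, high handback
]

labels, centroids = kmeans(users, k=4)
for user, label in zip(users, labels):
    print(label, user)
```

In practice a library implementation with multiple random restarts and feature standardization would be a more robust choice; the hand-rolled version is used here only to keep the example dependency-free.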

We model human–agent collaboration as a Partially Observable Markov Decision Process (POMDP). Given a task instruction, the agent and the human take turns executing actions based on their policies, forming a trajectory over time. At each step, the system observes the current state as a multimodal input consisting of the webpage screenshot and accessibility tree. The agent proposes an action conditioned on the observation and past trajectory. The human may intervene at any step, represented as a binary variable.
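One way to picture an interleaved trajectory is as a list of steps, each tagged with who acted. The sketch below is illustrative only: the `Step` fields, and the convention that an intervention is an agent-to-human switch while a handback is a human-to-agent switch, are assumptions made for this example and not the CowCorpus schema.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Step:
    actor: str            # "agent" or "human"
    action: str           # the executed action, as text
    screenshot: str = ""  # path to the page screenshot (hypothetical field)
    axtree: str = ""      # serialized accessibility tree (hypothetical field)


def control_switches(steps: List[Step]) -> Tuple[List[int], List[int]]:
    """Return (intervention indices, handback indices).

    Assumed convention: step t is an intervention if the agent acted at t-1
    and the human acts at t; it is a handback if the roles are reversed.
    """
    interventions: List[int] = []
    handbacks: List[int] = []
    for t in range(1, len(steps)):
        prev, cur = steps[t - 1].actor, steps[t].actor
        if prev == "agent" and cur == "human":
            interventions.append(t)
        elif prev == "human" and cur == "agent":
            handbacks.append(t)
    return interventions, handbacks


def handback_rate(steps: List[Step]) -> float:
    """Fraction of interventions that are followed by a return to the agent."""
    interventions, handbacks = control_switches(steps)
    return len(handbacks) / len(interventions) if interventions else 0.0


# A made-up six-step session.
trajectory = [
    Step("agent", "click search box"),
    Step("agent", "type 'flights to Tokyo'"),
    Step("human", "fix the departure date"),
    Step("agent", "click search"),
    Step("human", "select a different fare"),
    Step("human", "confirm booking"),
]

interventions, handbacks = control_switches(trajectory)
print(interventions, handbacks, handback_rate(trajectory))
```

Under this convention the binary intervention variable at step t is simply whether t appears in the intervention list.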

We formulate intervention prediction as a step-wise binary classification problem that estimates the probability of human intervention given the current state, agent action, and history. To solve this, we use a large multimodal model trained via supervised fine-tuning. The model takes as input the trajectory history, current observation, and proposed action, and outputs a decision to either request human input or allow the agent to proceed.
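The formulation can be written compactly. The notation below is introduced here for illustration and may differ from the paper: $h_t$ is the trajectory history, $o_t$ the current observation (screenshot and accessibility tree), $a_t$ the proposed agent action, $y_t \in \{0, 1\}$ the intervention indicator, and $\tau$ a decision threshold. The threshold is an assumption of this sketch, since a fine-tuned model may emit the decision directly.

```latex
p_t = P_\theta\left(y_t = 1 \mid h_t,\, o_t,\, a_t\right),
\qquad
d_t =
\begin{cases}
  \text{request human input} & \text{if } p_t \ge \tau, \\
  \text{let the agent proceed} & \text{otherwise.}
\end{cases}
```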

We train (1) a general intervention-aware model using all training data and (2) style-conditioned models tailored to each interaction group using the corresponding subset of trajectories. To evaluate effectiveness, we compare these models against both prompting-based proprietary LMs and fine-tuned open-weight models on the Human Intervention Prediction task. Across all models, the main takeaways are:

- Proprietary models remain overly conservative: We evaluate three families of closed-source LMs (Claude 4 Sonnet, GPT-4o, and Gemini 2.5 Pro) using zero-shot prompting without reasoning. They struggle with the temporal dynamics necessary for accurate human intervention prediction. Notably, GPT-4o achieves high performance on non-intervention steps (Non-intervention F1: 0.846), but it fails on active interventions (Intervention F1: 0.198). This drastic F1 disparity indicates that generalist models are overly conservative and struggle to balance this dynamic with the need for proactive assistance.

- Fine-tuned open-weight models with specialized data beat scale: In contrast, fine-tuning open-weight models on CowCorpus yields the most significant performance gains, surpassing proprietary models. Our fine-tuned Gemma-27B (SFT) achieves the state-of-the-art PTS (0.303), outperforming Claude 4 Sonnet (0.293), while the smaller LLaVA-8B (SFT) achieves a competitive PTS (0.201), beating GPT-4o (0.147). These results demonstrate that fine-tuning on high-quality interaction traces effectively bridges the alignment gap, allowing smaller models to grasp the nuance of intervention timing where large generalist models fail.
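The gap between the two F1 scores is easier to read with a small worked example. The sketch below computes per-class F1 on made-up labels (not results from the evaluation above): a predictor that rarely flags an intervention still scores well on the majority class while missing half of the interventions.

```python
from typing import Sequence


def f1_for_class(y_true: Sequence[int], y_pred: Sequence[int], positive: int) -> float:
    """F1 score treating `positive` as the class of interest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0  # no correct positives: precision/recall are 0 or undefined
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy labels: 1 = human intervenes at this step, 0 = agent proceeds.
# Eight non-intervention steps and two interventions (illustrative only).
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
# A conservative predictor that catches only one of the two interventions.
y_pred = [0, 0, 0, 0, 0, 0, 0, 0, 1, 0]

non_intervention_f1 = f1_for_class(y_true, y_pred, positive=0)
intervention_f1 = f1_for_class(y_true, y_pred, positive=1)
print(round(non_intervention_f1, 3), round(intervention_f1, 3))
```

Because intervention steps are the minority, reporting F1 for each class separately exposes this failure mode, which a single accuracy number would hide.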

From Modeling to Deployment: PlowPilot

We integrated our intervention-aware model into a live web agent, PlowPilot. Instead of asking for confirmation at every step, the agent now: 1) predicts when intervention is likely; 2) prompts only at high-risk moments or where user confirmation is likely to occur; 3) proceeds automatically otherwise.
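The three-part behavior above amounts to a gate in front of each proposed action. The following is a minimal sketch, assuming the predictor exposes a per-step intervention probability and that a fixed threshold of 0.5 is used; the function names, the threshold, and the probabilities are hypothetical and do not describe PlowPilot's actual interface.

```python
from typing import List


def decide(p_intervene: float, threshold: float = 0.5) -> str:
    """Ask the user only when intervention looks likely; otherwise proceed."""
    return "ask" if p_intervene >= threshold else "proceed"


def gate_trajectory(probs: List[float], threshold: float = 0.5) -> List[str]:
    """Apply the gate to every step of a session."""
    return [decide(p, threshold) for p in probs]


# Hypothetical per-step intervention probabilities from a predictor.
step_probs = [0.05, 0.10, 0.80, 0.20, 0.65]

decisions = gate_trajectory(step_probs)
num_prompts = decisions.count("ask")
print(decisions)
print(f"{num_prompts} prompts instead of {len(step_probs)} with confirm-every-step")
```

Raising the threshold trades fewer interruptions for a higher chance of proceeding when the user would have wanted to step in, so it is a natural knob to tune per interaction style.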

We re-invited our annotators and asked them to rate our new system. On average, we observed a 26.5% increase in user-rated usefulness. The following figure highlights individual responses to each of the 8 questions asked of them. Importantly, the underlying execution agent remains unchanged from CowPilot; PlowPilot differs only by the addition of the intervention-aware module. The observed gains therefore arise solely from proactively modeling human intervention. These findings provide preliminary evidence that anticipating user intervention can substantially improve the effectiveness and usability of collaborative agent systems in practice.

Intervention is a signal of preference and collaboration style. If agents can model that signal, they become adaptive partners rather than just autonomous tools.

Rather than maximizing full autonomy, we advocate optimizing the human–agent boundary. Agents should learn not only to act, but also to defer, proactively handing control back when appropriate. This boundary should be adaptive, capturing user-specific interaction and intervention patterns. By learning when to involve the user, agents enable more efficient and personalized collaboration. Optimizing this adaptive handoff shifts the goal from autonomy to collaborative intelligence, reducing oversight while preserving control.

For more details: