# Introduction

During the last decade, Pandas has been the inspiration for information work in Python. For datasets that slot in reminiscence, it’s quick and acquainted sufficient that switching libraries not often crosses any programmer’s thoughts.

Nevertheless, when you begin working with thousands and thousands of rows, the failings begin to seem: groupby operations that take a number of seconds, intermediate copies that eat RAM, and window capabilities that run as Python-level loops slightly than vectorized C or Rust code.

Polars is a DataFrame library in-built Rust on high of Apache Arrow. It was designed with parallelism and lazy analysis as first-class options. Pandas executes every operation upfront and in sequence, whereas Polars can construct up a question plan and optimize it previous to executing, with most operations executing concurrently throughout all accessible CPU cores robotically.

On this article, we discover three actual information issues utilizing actual questions from the StrataScratch coding platform. For every drawback, we evaluate each libraries’ options and level out the place the efficiency distinction issues most.

# Utilizing rank() vs. with_row_count(): Exercise Rank

In this query, the aim is to search out the e-mail exercise rank for every person primarily based on the full variety of emails despatched. The person with essentially the most emails will get rank 1. Outcomes should be sorted by whole emails in descending order, utilizing alphabetical order as a tiebreaker, and every rank should be distinct, even when two customers have the identical e-mail depend.

// Knowledge View

The google_gmail_emails desk shops one row per e-mail despatched, with a sender ID (from_user), recipient ID (to_user), and the day the e-mail was despatched. Here’s a preview of the desk:

| id | from_user | to_user | day |

|---|---|---|---|

| 0 | 6edf0be4b2267df1fa | 75d295377a46f83236 | 10 |

| 1 | 6edf0be4b2267df1fa | 32ded68d89443e808 | 6 |

| 2 | 6edf0be4b2267df1fa | 55e60cfcc9dc49c17e | 10 |

| 3 | 6edf0be4b2267df1fa | e0e0defbb9ec47f6f7 | 6 |

| 4 | … | … | … |

| 314 | e6088004caf0c8cc51 | e6088004caf0c8cc51 | 5 |

Grain (what one output row means): one person, with their whole e-mail depend and distinctive exercise rank.

// Frequent Mistake

The query asks for a singular rank even when two customers have the identical e-mail depend. A typical mistake is to make use of the rank(technique='dense') technique in Pandas, which assigns the identical rank to tied customers. The proper technique is 'first', which breaks ties by place within the sorted body. Since we kind alphabetically by user_id earlier than rating, the ensuing ranks are distinctive and deterministic.

The Polars optimum answer avoids the rank operate completely. After sorting by ["total_emails", "user_id"] in descending and ascending order, respectively, the .with_row_count("activity_rank", offset=1) clause assigns sequential integers ranging from 1. No tie-breaking logic is required as a result of the type already dealt with it.

// Options

1. Pandas Answer

We rename from_user to user_id, group by person, depend emails, compute the primary rank, and kind by e-mail depend in descending order, with alphabetical tie-breaking.

import pandas as pd

import numpy as np

google_gmail_emails = google_gmail_emails.rename(columns={"from_user": "user_id"})

consequence = google_gmail_emails.groupby(

['user_id']).dimension().to_frame('total_emails').reset_index()

consequence['activity_rank'] = consequence['total_emails'].rank(technique='first', ascending=False)

consequence = consequence.sort_values(by=['total_emails', 'user_id'], ascending=[False, True])

2. Polars Answer

We use a lazy chain that renames, teams, types, and assigns row numbers in a single cross. Calling .gather() on the finish materializes the consequence.

import polars as pl

google_gmail_emails = google_gmail_emails.rename({"from_user": "user_id"})

consequence = (

google_gmail_emails.lazy()

.group_by("user_id")

.agg(total_emails = pl.depend())

.kind(

by=["total_emails", "user_id"],

descending=[True, False]

)

.with_row_count("activity_rank", offset=1)

.choose([

pl.col("user_id"),

"total_emails",

"activity_rank"

])

.gather()

)

// Efficiency Comparability

The Pandas answer iterates over the information twice after grouping: as soon as to compute sizes and as soon as to assign ranks. Internally, rank(technique='first') allocates a rank array, resolves ties through argsort, and writes again — which is significantly costlier than it appears to be like for a single column. The Polars group_by operate divides the workload throughout all accessible CPU cores, leading to considerably sooner aggregation for big tables. And for the reason that .with_row_count() clause is a single O(n) sequential cross after sorting, it replaces the rank operate with the most affordable potential operation. On a desk containing thousands and thousands of e-mail data, the usage of parallel aggregation and not using a rank operate can lead to a 5–10x enchancment in wall-clock time in comparison with the Pandas method.

Right here is the code output preview:

| user_id | total_emails | activity_rank |

|---|---|---|

| 32ded68d89443e808 | 19 | 1 |

| ef5fe98c6b9f313075 | 19 | 2 |

| 5b8754928306a18b68 | 18 | 3 |

| 55e60cfcc9dc49c17e | 16 | 4 |

| 91f59516cb9dee1e88 | 16 | 5 |

| … | … | … |

| e6088004caf0c8cc51 | 6 | 25 |

# Utilizing cumcount() + pivot() vs. over(): Discovering Person Purchases

In this query, we’re requested to establish returning lively customers — particularly, those that made a second buy inside 1 and seven days after their first. Purchases made on the identical day shouldn’t be included. The result’s merely a listing of qualifying user_id values.

// Knowledge View

The amazon_transactions desk has one row per buy, with user_id, merchandise, created_at date, and income.

Here’s a preview of the desk:

| id | user_id | merchandise | created_at | income |

|---|---|---|---|---|

| 1 | 109 | milk | 2020-03-03 | 123 |

| 2 | 139 | biscuit | 2020-03-18 | 421 |

| 3 | 120 | milk | 2020-03-18 | 176 |

| … | … | … | … | … |

| 100 | 117 | bread | 2020-03-10 | 209 |

Grain (what one output row means): one person ID that made a qualifying return buy inside 7 days of their first.

// Edge Case

Similar-day purchases must be ignored, that means the hole between first and second buy should exceed 0 days and be at most 7 days. A buyer who buys twice on the identical day doesn’t qualify.

// Options

Each options discover every person’s earliest buy date after which filter for subsequent purchases inside the 1- to 7-day timeframe. One factor to look at: if created_at has timestamps as a substitute of plain dates, you want to truncate to the date earlier than evaluating. In any other case, two purchases made at completely different occasions on the identical day would incorrectly cross the strict inequality.

1. Pandas Answer

In Pandas, the answer includes isolating distinctive buy dates per person, rating them with cumcount(), pivoting to get first and second dates aspect by aspect, and computing the day distinction.

import pandas as pd

amazon_transactions["purchase_date"] = pd.to_datetime(amazon_transactions["created_at"]).dt.date

day by day = amazon_transactions[["user_id", "purchase_date"]].drop_duplicates()

ranked = day by day.sort_values(["user_id", "purchase_date"])

ranked["rn"] = ranked.groupby("user_id").cumcount() + 1

first_two = (ranked[ranked["rn"] <= 2]

.pivot(index="user_id", columns="rn", values="purchase_date")

.reset_index()

.rename(columns={1: "first_date", 2: "second_date"}))

first_two = first_two.dropna(subset=["second_date"])

first_two["diff"] = (pd.to_datetime(first_two["second_date"]) - pd.to_datetime(first_two["first_date"])).dt.days

consequence = first_two[(first_two["diff"] >= 1) & (first_two["diff"] <= 7)][["user_id"]]

2. Polars Answer

The Polars answer includes computing the primary buy date per person as a window expression with .over("user_id"), filtering to purchases that match the time window, and returning a deduplicated user_id record.

import polars as pl

# returning lively customers: 2nd buy 1–7 days after the primary (ignore same-day)

returning_users = (

amazon_transactions

.lazy()

# first buy date per person (window so we keep away from .groupby on LazyFrame)

.with_columns(

pl.col("created_at").min().over("user_id").alias("first_purchase_date")

)

# maintain transactions strictly 1-7 days after that first buy

.filter(

(pl.col("created_at") > pl.col("first_purchase_date")) &

(pl.col("created_at") <= pl.col("first_purchase_date") + pl.period(days=7))

)

# distinct person record

.choose("user_id")

.distinctive()

.kind("user_id", descending=[False])

)

// Efficiency Comparability

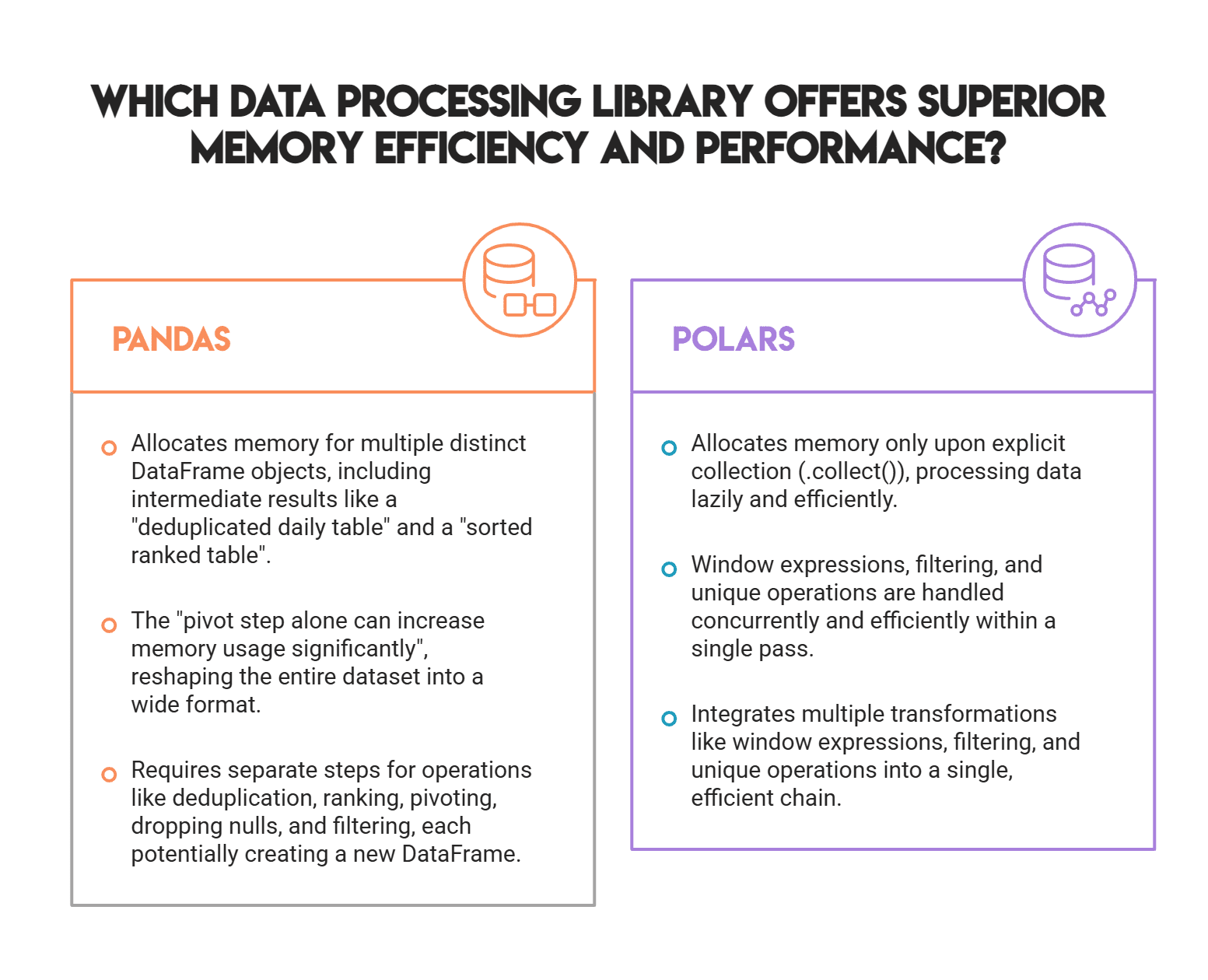

Discover the variety of distinct DataFrame allocations within the Pandas answer: the deduplicated day by day desk, the sorted ranked desk, the pivoted body, the dropna consequence, and the filtered output. These consist of 5 separate objects, every of which copies information into a brand new reminiscence block. On a big transactions desk, the pivot step alone can considerably improve reminiscence utilization, because it reshapes the complete dataset into a large format.

The Polars lazy chain doesn’t allocate any reminiscence till .gather(). The .over("user_id") window expression computes every person’s earliest buy date in a single cross, the .filter() applies instantly in the identical step, and .distinctive() runs concurrently throughout CPU cores. There isn’t any pivot, no intermediate sorted copy, and no separate date-casting step — Polars handles date arithmetic natively contained in the expression engine. This method consumes much less reminiscence and runs sooner, even on reasonably sized datasets.

Right here is the code output preview:

| user_id |

|---|

| 100 |

| 103 |

| 105 |

| … |

| 143 |

# Utilizing increasing().imply() vs. cum_mean(): Month-to-month Gross sales Rolling Common

In this query, we’re requested to find out a cumulative common for month-to-month e-book gross sales all through 2022. The typical grows every month utilizing all previous months: February averages January and February, March averages all three, and so forth. The output ought to embody the month, that month’s whole gross sales, and the cumulative common rounded to the closest complete quantity.

// Knowledge View

The amazon_books desk has one row per e-book and its unit value. The book_orders desk has one row per order, linking a e-book ID to a amount and an order date. Here’s a preview of the desk:

| book_id | book_title | unit_price |

|---|---|---|

| B001 | The Starvation Video games | 25 |

| B002 | The Outsiders | 50 |

| B003 | To Kill a Mockingbird | 100 |

| … | … | … |

| B020 | The Pillars of the Earth | 60 |

The book_orders desk has one row per e-book order, linking every order ID to an order date, e-book ID, and the amount ordered:

| order_id | order_date | book_id | amount |

|---|---|---|---|

| 1001 | 2022-01-10 | B001 | 1 |

| 1002 | 2022-01-10 | B009 | 1 |

| 1003 | 2022-01-15 | B012 | 2 |

| … | … | … | … |

| 1084 | 2023-02-01 | B009 | 1 |

Grain (what one output row means): one month in 2022, with whole gross sales for that month and a cumulative common of all month-to-month gross sales as much as and together with that month.

// Commerce-Offs

Utilizing Pandas, the .increasing().imply() clause is handy, however operates internally with a Python-level loop over rising window slices. For a 12-row month-to-month abstract, this price is negligible. For day by day or hourly information at scale (say, three years of hourly transactions), every increasing window slice provides overhead that compounds row by row.

Polars’ cum_mean() runs a single cross in Rust and is inherently sooner at scale. There’s one catch: the query requires rounding to the closest complete quantity, and Pandas makes use of banker’s rounding (spherical half to even) by default. The Polars answer makes use of NumPy’s cumsum with an specific flooring(x + 0.5) method to implement round-half-up conduct. When you want an actual match to the anticipated output, the NumPy technique is extra dependable than the built-in rounding in both library.

// Options

1. Pandas Answer

We merge books with orders, filter to 2022, mixture month-to-month gross sales, and apply .increasing().imply() to compute the cumulative common.

import pandas as pd

import numpy as np

import datetime as dt

merged = pd.merge(book_orders, amazon_books, on="book_id", how="internal")

merged["order_date"] = pd.to_datetime(merged["order_date"])

merged["order_month"] = merged["order_date"].dt.month

merged["year"] = merged["order_date"].dt.yr

merged["sales"] = merged["unit_price"] * merged["quantity"]

merged = merged.loc[(merged["year"] == 2022), :]

consequence = (

merged.groupby("order_month")["sales"]

.sum()

.to_frame("monthly_sales")

.sort_values(by="order_month")

.reset_index()

)

consequence["rolling_average"] = consequence["monthly_sales"].increasing().imply().spherical(0)

consequence

2. Polars: Constructing the Lazy Pipeline and Gathering

We be a part of the 2 tables inside a lazy chain, compute gross sales as unit_price * amount, filter to 2022, mixture by month, and name .gather() to modify to keen mode earlier than the NumPy rolling step.

import polars as pl

import numpy as np

# Step 1: Put together month-to-month gross sales (LazyFrame)

monthly_sales_lazy = (

book_orders.lazy()

.be a part of(amazon_books.lazy(), on="book_id", how="internal")

.with_columns([

(pl.col("unit_price") * pl.col("quantity")).alias("sales"),

pl.col("order_date").cast(pl.Datetime),

pl.col("order_date").dt.year().alias("year"),

pl.col("order_date").dt.month().alias("order_month")

])

.filter(pl.col("yr") == 2022)

.group_by("order_month")

.agg(pl.col("gross sales").sum().alias("monthly_sales"))

.kind("order_month")

)

# Step 2: Change to keen mode for rolling computation

monthly_sales = monthly_sales_lazy.gather()

3. Computing the Rolling Common and Finalizing

With the month-to-month gross sales as a NumPy array, we apply round-half-up rounding, add the consequence again to the Polars DataFrame, and choose the output columns.

# Step 3: Rolling common with round-half-up

sales_np = monthly_sales["monthly_sales"].to_numpy()

cumsum = np.cumsum(sales_np)

rolling_avg = np.flooring(cumsum / np.arange(1, len(cumsum)+1) + 0.5).astype(int)

# Step 4: Add again to Polars DataFrame

monthly_sales = monthly_sales.with_columns([

pl.Series("rolling_average", rolling_avg)

])

# Step 5: Remaining consequence with right column names

consequence = monthly_sales.choose(["order_month", "monthly_sales", "rolling_average"])

// Efficiency Comparability

This query has two operations that have an effect on efficiency essentially the most: the be a part of and the cumulative window. In Pandas, pd.merge joins all rows from each tables earlier than filtering for 2022. Because of this yearly’s value of orders is processed earlier than rows exterior the goal interval are discarded. Polars builds a lazy question plan and pushes the filter(yr == 2022) situation earlier than the be a part of executes, so it joins a smaller dataset from the beginning. That predicate pushdown occurs robotically, with no additional writing required.

Essentially the most noticeable distinction is the rolling common hole. Pandas’ .increasing().imply() grows its window one row at a time, calling into C for every phase whereas remaining managed by a Python loop. Polars’ cum_mean() computes the entire column in a single Rust loop with no Python overhead. Whereas the distinction could also be imperceptible with month-to-month information, for those who run this identical question on day by day information for 3 years (roughly 1,000 rows), the Polars model completes in microseconds whereas Pandas reveals measurable latency as a result of increasing window.

Right here is the code output preview:

| order_month | gross sales | rolling_average |

|---|---|---|

| 1 | 145 | 145 |

| 2 | 250 | 198 |

| 3 | 315 | 237 |

| … | … | … |

| 12 | 710 | 402 |

# Conclusion

Throughout all three issues, the Polars options comply with the identical sample: construct a lazy question plan, push as a lot computation as potential into the optimizer, and name .gather() solely if you want a concrete consequence.

The syntax takes some adjustment for those who, like most analysts, have years of Pandas habits, however the operations align carefully. .groupby() turns into .group_by(), .rename() takes a plain dict as a substitute of a columns= key phrase, and rating turns into a kind adopted by .with_row_count().

The true distinction reveals at scale. When coping with small datasets, each libraries return outcomes quick sufficient that the distinction isn’t noticeable. As row counts attain the thousands and thousands, Polars’ Rust-level parallelism and single-pass algorithms considerably outperform. When you’re encountering efficiency points with Pandas, these three challenges are an amazing place to begin for migration.

Nate Rosidi is a knowledge scientist and in product technique. He is additionally an adjunct professor educating analytics, and is the founding father of StrataScratch, a platform serving to information scientists put together for his or her interviews with actual interview questions from high corporations. Nate writes on the most recent tendencies within the profession market, offers interview recommendation, shares information science initiatives, and covers all the things SQL.