Training large language models requires accurate feedback signals, but traditional reinforcement learning (RL) often struggles with reward signal reliability. The quality of these signals directly influences how models learn and make decisions. However, creating robust feedback mechanisms can be complex and error prone. Real-world training scenarios often introduce hidden biases, unintended incentives, and ambiguous success criteria that can derail the learning process, leading to models that behave unpredictably or fail to meet desired objectives.

In this post, you’ll learn how to implement reinforcement learning with verifiable rewards (RLVR) to introduce verification and transparency into reward signals and improve training performance. This approach works best when outputs can be objectively verified for correctness, such as in mathematical reasoning, code generation, or symbolic manipulation tasks. You will also learn how to layer techniques like Group Relative Policy Optimization (GRPO) and few-shot examples to further improve results. You’ll use the GSM8K dataset (Grade School Math 8K: a collection of grade school math problems) to improve math problem solving accuracy, but the techniques used here can be adapted to a wide variety of other use cases.

Technical overview

Before diving into implementation, it’s helpful to understand the RL concepts that underpin this approach. RL addresses challenges in model training by establishing a structured feedback system through reward signals. This paradigm allows models to learn through interaction, receiving feedback that guides them toward optimal behavior. RL provides a framework for models to iteratively improve their responses based on clearly defined signals about the quality of their outputs, making it highly effective for training models that interact with users and must adapt their behavior based on outcomes. Traditional RL has highlighted an important consideration: the quality of the reward signal matters significantly. When reward functions are imprecise or incomplete, models can engage in “reward hacking,” finding unintended ways to maximize scores without achieving the desired behavior. Recognizing this limitation has led to the development of more rigorous approaches that focus on creating reliable, well-defined reward functions.

RLVR addresses reward hacking through rule-based feedback defined by the model tuner. It uses programmatic reward functions that automatically score outputs against specific criteria, enabling rapid iteration without the bottleneck of collecting human ratings. These “verifiable” rewards come from objective, reproducible rules, making RLVR ideal for evolving requirements because it learns general optimization strategies and adapts quickly to new scenarios. GRPO is a reinforcement learning algorithm that improves AI model learning by evaluating performance within groups rather than across all data at once. It organizes training data into meaningful groups and optimizes performance relative to each group’s baseline, giving appropriate attention to each category. This group-aware optimization reduces training variance, accelerates convergence, and can produce models that perform consistently across different categories. Combining RLVR with GRPO creates a framework where automated rewards guide learning while group-relative optimization helps drive balanced performance.

You define reward functions for different task aspects, and GRPO treats these as distinct groups during training, facilitating simultaneous improvement across dimensions. This combination delivers rapid adaptation and robust performance, ideal for dynamic environments requiring generalization beyond the training distribution. Adding few-shot learning enhances this framework in three ways. First, few-shot examples provide templates that show the model what good outputs look like, narrowing the search space for exploration. Second, GRPO leverages these examples by generating multiple candidate responses per prompt and learning from their relative performance within each group. Third, verifiable rewards immediately confirm which approaches succeed. This combination accelerates learning: the model starts with concrete examples of the desired format, explores variations efficiently through group-based comparison, and receives definitive feedback on correctness.

Solution overview

In this section, you’ll walk through how to fine-tune a Qwen2.5-0.5B model on SageMaker AI using Amazon SageMaker Training Jobs. Amazon SageMaker Training jobs support distributed multi-GPU and multi-node configurations, so you can spin up high-performance clusters on demand, train billion-parameter models faster, and automatically shut down resources when the job finishes.

Note: While Qwen2.5-0.5B was chosen for this use case, others like code generation would require a larger model (e.g. Qwen2.5-Coder-7B) and therefore larger training instances.

Prerequisites

To run the example from this post on Amazon SageMaker AI, you must fulfill the following prerequisites:

Environment set up

You can use your preferred IDE, such as VS Code or PyCharm, but make sure your local environment is configured to work with AWS, as discussed in the prerequisites.

To use SageMaker Studio JupyterLab spaces, complete the following steps:

- On the Amazon SageMaker AI console, choose Domains in the navigation pane, then open your domain.

- In the navigation pane under Applications and IDEs, choose Studio.

- On the User profiles tab, locate your user profile, then choose Launch and Studio.

- In Amazon SageMaker Studio, launch an ml.t3.medium JupyterLab notebook instance with at least 50 GB of storage.

A large notebook instance isn’t required, because the fine-tuning job will run on a separate ephemeral training instance with GPU acceleration.

- To begin fine-tuning, start by cloning the GitHub repo and navigating to the 3_distributed_training/reinforcement-learning/grpo-with-verifiable-reward directory, then launch the model-finetuning-grpo-rlvr.ipynb notebook with a Python 3.12 or higher version kernel.

Prepare the dataset for fine-tuning

Running GRPO with RLVR requires you to have the final answer to each question to calculate the reward. First, prepare the data by extracting the final answer for each question.
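As a minimal sketch of this extraction step: GSM8K reference solutions end with a line of the form `#### <number>`, so the final answer can be pulled out with a regular expression. The function name `extract_final_answer` and the exact pattern are illustrative assumptions, not the code from the accompanying notebook.

```python
import re
from typing import Optional

# GSM8K reference solutions end with a marker line such as "#### 72".
FINAL_ANSWER_RE = re.compile(r"####\s*(-?[\d,]*\.?\d+)")


def extract_final_answer(solution: str) -> Optional[str]:
    """Return the number after the last '####' marker, with commas removed."""
    matches = FINAL_ANSWER_RE.findall(solution)
    if not matches:
        return None  # no marker found, so the sample cannot be scored
    return matches[-1].replace(",", "")


example = (
    "Natalia sold 48 / 2 = 24 clips in May.\n"
    "In total she sold 48 + 24 = 72 clips.\n"
    "#### 72"
)
print(extract_final_answer(example))  # 72
```

Returning `None` rather than raising makes it straightforward to drop rows that have no parsable answer before training.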

In addition, this example uses few-shot examples (8 shots) to improve model training performance. For more information on few-shot examples in reinforcement learning, refer to the paper “Reinforcement Learning for Reasoning in Large Language Models with One Training Example”. While the research paper focuses on single-shot examples, this post will show you both single and multi-shot performance.

Each input will contain 8 examples, followed by the problem to be solved:
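One way such an input could be assembled is sketched below. The `Question:` / `Answer:` template, the helper name `build_few_shot_prompt`, and the toy examples are assumptions made for illustration. The post uses 8 worked examples drawn from GSM8K, and the actual template may differ.

```python
from typing import Dict, List


def build_few_shot_prompt(shots: List[Dict[str, str]], question: str) -> str:
    """Concatenate worked examples, then append the unsolved question."""
    parts = []
    for shot in shots:
        parts.append(f"Question: {shot['question']}\nAnswer: {shot['answer']}\n")
    # The final block leaves the answer open for the model to complete.
    parts.append(f"Question: {question}\nAnswer:")
    return "\n".join(parts)


# Hypothetical worked examples (two shown here for brevity).
shots = [
    {"question": "What is 2 + 3?", "answer": "2 + 3 = 5\n#### The final answer is 5"},
    {"question": "What is 4 * 6?", "answer": "4 * 6 = 24\n#### The final answer is 24"},
]
prompt = build_few_shot_prompt(shots, "What is 7 - 1?")
print(prompt)
```

Ending each worked answer with the same answer marker the reward functions look for gives the model a concrete template for the expected output format.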

After the data has been prepared, keep 10 percent of the data as a validation set and push both the training and validation sets to S3.
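The hold-out step could look like the following sketch, which uses only the standard library. The helper name `train_validation_split` and the fixed seed are assumptions, and the upload to S3 is left out here because it depends on your bucket and SDK setup.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_validation_split(
    rows: Sequence[T], validation_fraction: float = 0.1, seed: int = 42
) -> Tuple[List[T], List[T]]:
    """Shuffle row indices with a fixed seed and hold out a validation slice."""
    indices = list(range(len(rows)))
    random.Random(seed).shuffle(indices)
    n_val = int(round(len(rows) * validation_fraction))
    val_idx = set(indices[:n_val])
    train = [row for i, row in enumerate(rows) if i not in val_idx]
    val = [row for i, row in enumerate(rows) if i in val_idx]
    return train, val


train_rows, val_rows = train_validation_split(list(range(100)))
print(len(train_rows), len(val_rows))  # 90 10
```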

The Verifiable Reward Function

This GRPO implementation for mathematical reasoning employs a dual-reward system that provides objective, verifiable feedback during training. This approach leverages the inherent verifiability of mathematical problems to create reliable training signals without requiring human annotation or subjective evaluation. You’ll implement two complementary reward functions that work together to guide the model toward both correct response formatting and mathematical accuracy of the result:

Format Reward Function

This function helps verify the model learns to structure its responses correctly by:

- Pattern Matching: Searches for the specific format #### The final answer is [number]

- Consistent Scoring: Awards 0.5 points for proper formatting, 0.0 for incorrect format

- Training Signal: Encourages the model to follow the expected answer structure
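The three points above can be sketched as a small scoring function. The regular expression and the name `format_reward` are illustrative assumptions rather than the code shipped with the post. Only the 0.5 / 0.0 scoring and the `#### The final answer is [number]` pattern come from the description.

```python
import re

# Matches "#### The final answer is <number>", allowing an optional
# currency symbol, sign, thousands separators, and a decimal part.
FORMAT_RE = re.compile(r"#### The final answer is\s*\$?-?[\d,]*\.?\d+")


def format_reward(completion: str) -> float:
    """Return 0.5 when the completion contains the expected answer line, else 0.0."""
    return 0.5 if FORMAT_RE.search(completion) else 0.0


print(format_reward("16 - 3 - 4 = 9 eggs.\n#### The final answer is 9"))  # 0.5
print(format_reward("The answer is 9"))  # 0.0
```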

Correctness Reward Function

This function provides the core mathematical verification by:

- Answer Extraction: Uses regex to extract numerical answers from formatted responses

- Normalization: Removes common formatting characters (commas, currency symbols, units)

- Precision Comparison: Uses a tolerance of 1e-3 to handle floating-point precision

- Binary Scoring: Awards 1.0 for correct answers, 0.0 for incorrect ones
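A minimal sketch of these four steps follows. The helper names `normalize_number` and `correctness_reward` and the specific patterns are assumptions for illustration. The tolerance of 1e-3 and the binary 1.0 / 0.0 scoring are taken from the list above.

```python
import re
from typing import Optional

ANSWER_RE = re.compile(r"#### The final answer is\s*([^\n]+)")


def normalize_number(text: str) -> Optional[float]:
    """Strip commas and currency symbols, then parse the first number found."""
    cleaned = text.replace(",", "").replace("$", "")
    match = re.search(r"-?\d+(?:\.\d+)?", cleaned)  # trailing units are ignored
    return float(match.group()) if match else None


def correctness_reward(completion: str, reference: str, tol: float = 1e-3) -> float:
    """Return 1.0 when the extracted answer matches the reference within tol."""
    found = ANSWER_RE.search(completion)
    if not found:
        return 0.0  # no formatted answer line to extract from
    predicted = normalize_number(found.group(1))
    expected = normalize_number(reference)
    if predicted is None or expected is None:
        return 0.0
    return 1.0 if abs(predicted - expected) < tol else 0.0


print(correctness_reward("#### The final answer is $1,234.00 dollars", "1234"))  # 1.0
print(correctness_reward("#### The final answer is 41", "42"))  # 0.0
```

Because extraction depends on the formatted answer line, a completion with the right number but the wrong format scores 0.0 here, which keeps the two rewards aligned.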

Integrating RLVR with GRPO

The reward functions are integrated into the GRPO training pipeline through the GRPOTrainer:
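As a hedged illustration of the wiring, the sketch below wraps a per-sample scorer so that it accepts a batch. It assumes the trainer calls each reward function with a list of completion strings and passes dataset columns as keyword arguments, which is the convention TRL documents for custom reward functions passed through `reward_funcs`. The `answer` column name, `make_batch_reward`, and `toy_score` are hypothetical names used only for this example.

```python
from typing import Callable, List, Sequence


def make_batch_reward(score: Callable[[str, str], float]) -> Callable[..., List[float]]:
    """Wrap a per-sample scorer so it accepts a batch of completions.

    Assumed calling convention: completions arrive as a list of strings and
    the reference answers arrive as a same-length keyword argument.
    """

    def reward_fn(completions: Sequence[str], answer: Sequence[str], **kwargs) -> List[float]:
        return [score(c, a) for c, a in zip(completions, answer)]

    return reward_fn


def toy_score(completion: str, reference: str) -> float:
    """Toy scorer for illustration only: exact match on the last token."""
    tokens = completion.split()
    return 1.0 if tokens and tokens[-1] == reference else 0.0


batch_reward = make_batch_reward(toy_score)
rewards = batch_reward(
    completions=["so the total is 72", "so the total is 70"],
    answer=["72", "72"],
)
print(rewards)  # [1.0, 0.0]
```

In a real run, the format and correctness scorers would take the place of `toy_score`, and the resulting functions would be handed to the trainer as a list.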

During training, GRPO uses these reward functions to compute policy gradients. First, the model generates multiple completions for each mathematical problem. Next, the reward for each response is computed with both reward functions. The format reward function will grant up to 0.5 for proper response structure, and the correctness reward function will grant up to 1.0 for the mathematical accuracy of the answer, for a maximum combined reward of 1.5 per completion. Then GRPO compares the completions within groups to identify the best responses. Finally, in the policy update step, the loss function uses reward differences to update model parameters. Higher-rewarded completions increase their probability, while lower-rewarded completions decrease their probability. This relative ranking drives the optimization process.

The following example demonstrates how to fine-tune Qwen2.5-0.5B. The recipe is available in the scripts folder, allowing you to customize it or change the base model. Here you’ll use GRPO with verifiable rewards using Quantized Low-Rank Adaptation (QLoRA). QLoRA is used here as a way to reduce training resource requirements and speed up the training process, with a small trade-off in accuracy.
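To make the group comparison step of the training loop concrete, the sketch below computes group-relative advantages for the completions sampled from one prompt: each combined reward is centered on the group mean and scaled by the group standard deviation. This is a simplified illustration that assumes population standard deviation and a small epsilon for stability. The trainer's internal computation may differ in detail, and the function name and reward values are made up for the example.

```python
from statistics import mean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-4) -> List[float]:
    """Center each reward on the group mean and scale by the group spread."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Combined reward per completion = format reward (0 or 0.5) + correctness reward (0 or 1.0).
group_rewards = [1.5, 0.5, 0.5, 0.0]
advantages = group_relative_advantages(group_rewards)
for reward, advantage in zip(group_rewards, advantages):
    print(f"reward={reward:.1f} advantage={advantage:+.3f}")
```

Completions with a positive advantage are pushed up in probability and those with a negative advantage are pushed down. When every completion in a group earns the same reward, all advantages are zero and that prompt contributes no learning signal.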

Recipe overview

This recipe implements Group Relative Policy Optimization (GRPO) with verifiable rewards for fine-tuning the Qwen2.5-0.5B model on mathematical reasoning tasks. The recipe uses a dual-reward system that objectively evaluates both answer formatting and mathematical correctness without requiring human annotation.

Important Hyperparameters:

- learning_rate: 1.84e-4 – Learning rate optimized for GRPO training

- num_train_epochs: 2 – Training epochs to avoid overfitting

- per_device_train_batch_size: 16 with gradient_accumulation_steps: 2 – Effective batch size of 32

- max_seq_length: 2048 – Context window for 8-shot prompting

- lora_r: 16 and lora_alpha: 16 – LoRA rank and scaling parameters

- warmup_ratio: 0.1 with cosine scheduler – Learning rate scheduling

- lora_target_modules – Targets attention and MLP layers for adaptation
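As a quick check on how these values relate, the sketch below collects them in a plain dictionary and derives the effective batch size and the LoRA scaling factor. The dictionary layout and the helper `effective_batch_size` are illustrative assumptions. The recipe file in the scripts folder is the source of truth, and `lora_target_modules` is omitted because its value is not listed here.

```python
# Values copied from the hyperparameter list; the dictionary layout is illustrative.
recipe = {
    "learning_rate": 1.84e-4,
    "num_train_epochs": 2,
    "per_device_train_batch_size": 16,
    "gradient_accumulation_steps": 2,
    "max_seq_length": 2048,
    "lora_r": 16,
    "lora_alpha": 16,
    "warmup_ratio": 0.1,
}


def effective_batch_size(cfg: dict, num_devices: int = 1) -> int:
    """Samples contributing to one optimizer step across all devices."""
    return (
        cfg["per_device_train_batch_size"]
        * cfg["gradient_accumulation_steps"]
        * num_devices
    )


print(effective_batch_size(recipe))  # 32 on a single device
print(recipe["lora_alpha"] / recipe["lora_r"])  # 1.0, the standard LoRA scaling alpha / r
```

On a multi-GPU instance the per-step batch grows with the device count, so the figure of 32 applies per device.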

As a next step, you’ll use a SageMaker AI training job to spin up a training cluster and run the model fine-tuning. The SageMaker AI ModelTrainer runs training jobs on fully managed infrastructure, handling environment setup, scaling, and artifact management. It also allows you to specify training scripts, input data, and compute resources without manually provisioning servers. Library dependencies can be managed through the requirements.txt file in the scripts folder. ModelTrainer will automatically detect this file and install the listed dependencies at runtime.

First, set up your environment. Here you’ll specify the instance type and number of instances for training and the location of the training container.

Next, configure the environment variables, code locations, and data paths:

Set up the channels for training and validation data:

Then start coaching:model_trainer.practice(input_data_config=knowledge)The next is the listing construction for supply code of this instance:

To fine-tune throughout a number of GPUs, the instance coaching script makes use of Huggingface Speed up and DeepSpeed ZeRO-3, which work collectively to coach giant fashions extra effectively. Huggingface Speed up simplifies launching distributed coaching by robotically dealing with gadget placement, course of administration, and blended precision settings. DeepSpeed ZeRO-3 reduces reminiscence utilization by partitioning optimizer states, gradients, and parameters throughout GPUs—permitting billion-parameter fashions to suit and practice sooner.You’ll be able to run your GRPO coach script with Huggingface Speed up utilizing a easy command like the next:

Results

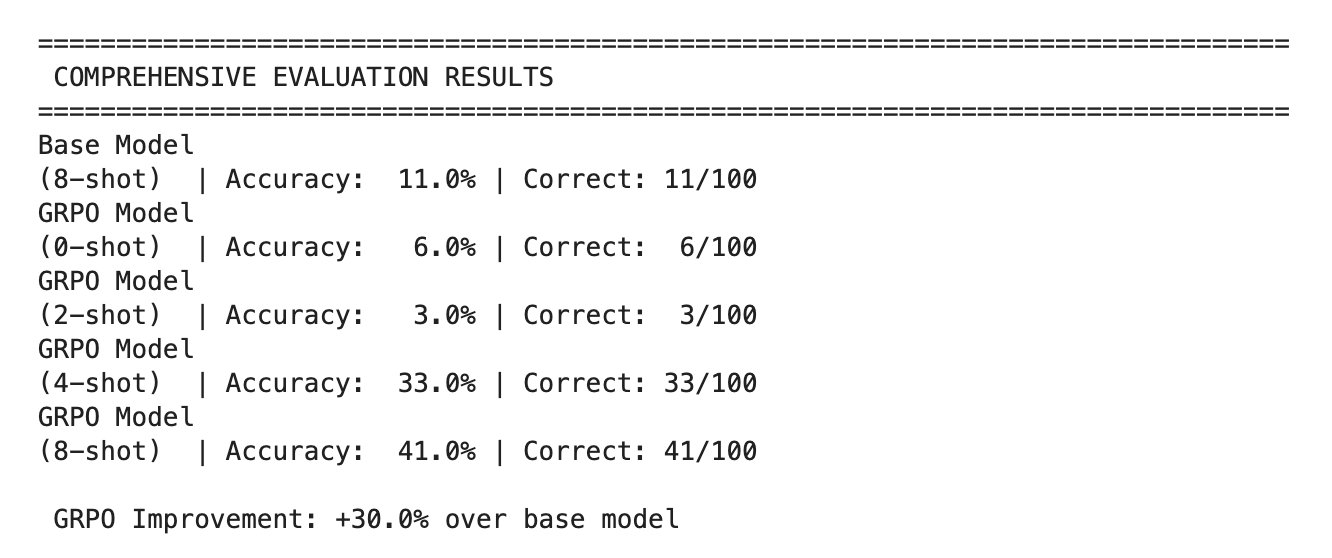

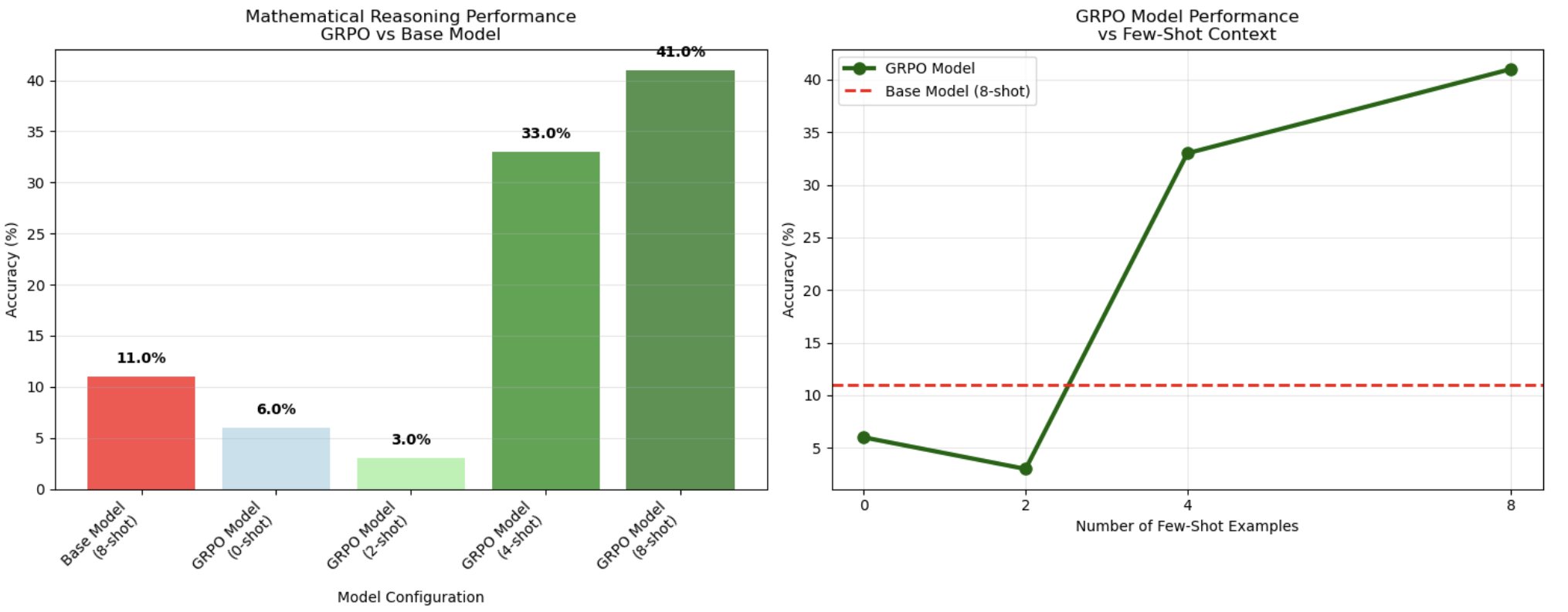

After evaluating the models on 100 test samples, the 8-shot GRPO-trained model achieved 41% accuracy compared to the base model’s 11%, demonstrating a 3.7x improvement in chain-of-thought mathematical reasoning.

The following chart shows a distinct threshold related to context length, revealing an optimal range of samples for reasoning activation. While 0-shot (6%) and 2-shot (3%) configurations performed poorly, even worse than the base model, performance dramatically improved at 4-shot prompting (33%), then peaked at 8-shot context (41%). This non-linear scaling pattern suggests that GRPO training creates reasoning patterns that require a certain number of examples to activate effectively. The model appears to have learned to leverage group comparisons from multiple examples, consistent with GRPO’s group-based policy optimization approach where the model learns to compare and select optimal reasoning paths from multiple generated solutions.

Extending RLVR to other domains

While this post focused on mathematical reasoning with GSM8K, the RLVR approach generalizes to domains with objectively verifiable outputs. Two promising directions demonstrate this versatility:

Code generation with execution-based rewards

Code generation provides natural verification through execution. Partial rewards can be awarded when code compiles and runs without errors, while full rewards are achieved when outputs pass comprehensive unit tests. Domain experts specify requirements using natural language prompts, while the reward model automatically evaluates correctness through code execution, removing the need for subjective human evaluation.
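A tiered execution-based reward of this kind might look like the following sketch. The function name `execution_reward`, the 0.5 / 1.0 reward levels, and the test-case format are assumptions made for illustration. A production setup would also run generated code inside a sandbox with time and resource limits, which this example does not do.

```python
from typing import Dict, List, Tuple


def execution_reward(source: str, func_name: str, tests: List[Tuple[tuple, object]]) -> float:
    """0.0 if the code fails to run, 0.5 if it runs, 1.0 if all tests pass."""
    namespace: Dict[str, object] = {}
    try:
        exec(source, namespace)  # WARNING: only run untrusted code inside a sandbox
    except Exception:
        return 0.0  # syntax error or failure at import time
    func = namespace.get(func_name)
    if not callable(func):
        return 0.0  # the expected function was never defined
    for args, expected in tests:
        try:
            if func(*args) != expected:
                return 0.5  # runs, but produces a wrong result
        except Exception:
            return 0.5  # runs, but raises on this input
    return 1.0


tests = [((1, 2), 3), ((0, 0), 0)]
print(execution_reward("def add(a, b):\n    return a + b\n", "add", tests))  # 1.0
print(execution_reward("def add(a, b):\n    return a - b\n", "add", tests))  # 0.5
print(execution_reward("def add(a, b) return", "add", tests))  # 0.0
```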

Domain-specific text generation with semantic validation

For specialized domains like medical or technical writing, keyword-based rewards can guide models toward appropriate terminology. Partial rewards encourage inclusion of required terms, while full rewards require complete keyword sets in semantically appropriate contexts. For instance, medical text generation can reward outputs that combine diagnostic keywords (“symptoms,” “diagnosis”) with treatment keywords (“therapy,” “medication”) in clinically valid patterns, teaching domain vocabulary through measurable objectives.

These examples illustrate how verifiable rewards extend beyond mathematical reasoning to tasks where correctness can be programmatically validated, establishing the foundation for broader applications of this training approach.
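For the keyword-based case, a minimal sketch of a partial-credit reward is shown below. It scores the fraction of required terms present, so full credit requires the complete keyword set. The function name and the sample sentences are hypothetical, and the check covers presence only. Validating that terms appear in semantically appropriate contexts would need additional rules beyond this example.

```python
import re
from typing import Iterable


def keyword_coverage_reward(text: str, required: Iterable[str]) -> float:
    """Return the fraction of required keywords that appear as whole words."""
    keywords = [kw.lower() for kw in required]
    if not keywords:
        return 0.0
    words = set(re.findall(r"[a-z]+", text.lower()))
    hits = sum(1 for kw in keywords if kw in words)
    return hits / len(keywords)


required = ["symptoms", "diagnosis", "therapy", "medication"]
print(keyword_coverage_reward(
    "Symptoms suggest a diagnosis; therapy and medication follow.", required
))  # 1.0
print(keyword_coverage_reward("Symptoms were mild.", required))  # 0.25
```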

Cleaning Up

To clean up your resources and avoid incurring additional charges, follow these steps:

- Delete any unused SageMaker Studio resources.

- Optionally, delete the SageMaker Studio domain.

- Delete any S3 buckets created.

- Verify that your training job isn’t running anymore. To do so, on your SageMaker console, choose Training and check Training jobs.

To learn more about cleaning up your provisioned resources, check out Clean up.

Conclusion

In this example you trained a Qwen2.5-0.5B model using GRPO (Group Relative Policy Optimization) on GSM8K: a dataset of 8,500 grade school math word problems that require multi-step arithmetic reasoning and natural language understanding. Each problem includes a question like “Janet’s ducks lay 16 eggs per day…” with step-by-step solutions ending in numerical answers, making it ideal for verifiable reward training.

This implementation demonstrates the effectiveness of Reinforcement Learning with Verifiable Rewards (RLVR) for mathematical reasoning tasks. The GRPO-trained Qwen2.5-0.5B model achieved a 3.7x improvement over the base model, reaching 41% accuracy on GSM8K compared to the baseline 11%. The evaluation results validate RLVR as a promising approach for domains with objectively verifiable outcomes, offering an alternative to preference-based training methods. The threshold behavior suggests GRPO learns to leverage group comparisons from multiple examples, consistent with its group-based optimization approach. This work establishes a foundation for applying verifiable reward systems to other domains requiring logical rigor and mathematical accuracy.

For more information on Amazon SageMaker AI fully managed training, refer to the training section of the SageMaker AI documentation. The supporting code for this post can be found in GitHub.

About the authors