Greater than a trillion {dollars} are misplaced yearly because of system failures. To resolve them, engineers should troubleshoot outages shortly.

An vital activity in incident response includes analyzing observability metrics, or time collection information that snapshot the well being of software program programs. For instance, an engineer for a service might use Datadog to reply questions like “When did latency begin growing?” and “What metrics exterior of latency are additionally behaving abnormally?” to localize the foundation explanation for the anomalous habits. These time collection question-answering (TSQA) duties are important for engineers, and current difficult and obligatory duties for SRE fashions and brokers to carry out. On this work, we discover the diploma to which AI fashions can carry out TSQA duties.

To this finish, we’re excited to introduce the Anomaly Reasoning Framework Benchmark (ARFBench), a TSQA benchmark derived from actual inside incidents at Datadog, utilizing Datadog’s personal inside telemetry (Determine 1). On this weblog submit, we’ll current three key takeaways from our benchmarking experiments:

- Present fashions battle: Main LLMs, vision-language fashions (VLMs), and time collection basis fashions (TSFMs) have substantial room for enchancment on ARFBench.

- Hybrid fashions assist: We introduce a brand new hybrid TSFM-VLM mannequin that yields comparable total efficiency to high frontier fashions on ARFBench, demonstrating promising new approaches to TSQA modeling.

- Human–AI complementarity: We observe markedly totally different error profiles between our high TSFM-VLM mannequin and human specialists on ARFBench. These outcomes recommend that their strengths are complementary. We introduce a mannequin–knowledgeable oracle that establishes a brand new superhuman frontier for LLMs, VLMs, and TSFMs.

ARFBench: Utilizing real-world incident information to create a TSQA benchmark

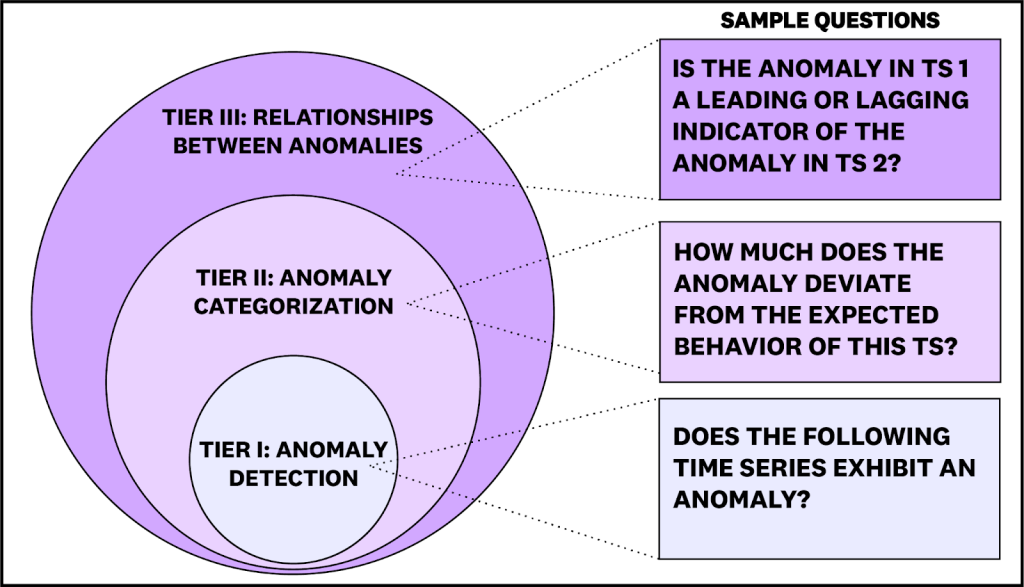

ARFBench is a TSQA benchmark primarily based on actual incidents inside to Datadog, utilizing our personal inside telemetry. In comparison with current benchmarks, ARFBench differs in three key features. First, it makes use of actual time collection information from manufacturing programs. Second, every question-answer (QA) instance is grounded in knowledgeable annotations and extra context. And third, duties are designed to check compositional reasoning: questions are organized into three tiers of accelerating problem, with higher-tier duties relying on right reasoning on decrease tiers (Determine 2).

ARFBench consists of 750 QA pairs drawn from 142 time collection and 63 incidents. Time collection in ARFBench have a most of 2283 variates (or dimensions) and 40k time steps, which current a difficult setting for context-limited fashions.

To create ARFBench, we constructed a VLM pipeline for extracting the time collection widgets from inside incident dialogue threads to assist generate and filter question-answer pairs. We then manually verified each generated query for correctness and privateness considerations, and threw away questions that we discovered unsuitable.

Reasoning about time collection and anomalies requires utilization of significant context throughout information modalities. ARFBench enriches time collection with two forms of context: time collection captions, which describe what the time collection symbolize, and multivariate groupings, which contextualize every channel relative to a bigger related assortment of time collection channels. For example, whereas it might not at all times matter {that a} single pod fails and restarts in a service, the mix of many pods failing and restarting concurrently might point out a major anomaly. This degree of complexity displays real-world situations that many current unimodal, artificial datasets fail to seize (Determine 3).

Main LLMs, VLMs, and TSFMs have substantial room for enchancment

We evaluated three classes of current fashions on ARFBench:

- LLMs, which take time collection as textual content enter

- VLMs, which take time collection plots as picture enter

- Time collection LLMs. which use a time collection encoder with an LLM spine.

We in contrast the fashions to 2 human baselines: observability specialists, and time collection researchers with out in depth observability expertise. The human specialists have been evaluated on a randomly sampled 25% subset of ARFBench.

Amongst current fashions, GPT-5 (VLM) yielded the highest efficiency at 62.7% accuracy and 51.9% F1 (Determine 4). That is a lot greater than the random alternative baseline at 22.5%, however nonetheless underperforms area specialists and is much beneath a model-expert oracle at 87.2% accuracy / 82.8% F1 (see beneath for additional dialogue). As anticipated, mannequin efficiency tends to worsen because the tier problem will increase.

We additionally observe a number of traits with our evaluations on ARFBench. Corroborating earlier works in time collection classification and QA similar to Daswani et al. 2024, we discover that VLMs outperform LLMs. The highest proprietary fashions and open-source fashions additionally confirmed a considerable hole in efficiency. Nonetheless, we discover that some open-source fashions carry out higher than many older proprietary fashions or fashions from the Claude household.

Hybrid TSFM-VLM fashions present promise for specialised TSQA modeling

Although VLMs yielded the best accuracy and F1 rating amongst current fashions, we discovered that plotting and enter illustration was a problem for each VLMs and LLMs. For instance, as a result of excessive variety of variates, we regularly couldn’t plot the time collection with out repeating colours for or occluding totally different variates. This motivated a local time collection method alongside the VLM mannequin by which we might make the most of time collection, plots, and textual content as joint enter.

To check this, we skilled a hybrid mannequin (Determine 5) by combining Toto, a state-of-the-art observability TSFM, with Qwen3-VL 32B, a number one open-source VLM. We used each artificial (Determine 6) and actual multimodal information in a multi-stage post-training pipeline incorporating each supervised fine-tuning (SFT) and reinforcement studying (RL). The ensuing mannequin, Toto-1.0-QA-Experimental, yielded the highest accuracy rating of 63.9%, and comparable F1 to high frontier fashions (48.9%). Within the Anomaly Identification activity class, the place a mannequin selects anomalous variates within the time collection, Toto-1.0-QA-Experimental outperforms all fashions by not less than 8.8 proportion factors in F1 and achieves greatest per-category accuracy, suggesting that TSFM-VLM modeling can extremely profit efficiency on specific duties. Moreover, Toto-1.0-QA-Experimental’s parameter depend is a number of magnitudes decrease than frontier fashions, thus offering potential effectivity positive aspects at inference time.

We refer readers to the paper for extra experimental particulars, error evaluation, and case research.

Area specialists complemented with fashions set a brand new superhuman frontier

The present mixture hole on ARFBench between the very best fashions (Toto-1.0-QA-Experimental & GPT-5) and the 2 human area specialists is barely 8.8 proportion factors in accuracy and 12.7 proportion factors in F1. Nonetheless, on the particular person query degree, we observe noticeably totally different habits between GPT-5 and the human specialists. GPT-5 solutions 48% of questions appropriately that each specialists get incorrect; on these questions, the human specialists are inclined to make errors in instruction-following or fine-grained notion. In the meantime, not less than one knowledgeable appropriately solutions 79% of questions that GPT-5 will get incorrect. On these units of questions, mannequin errors are inclined to contain hallucination and incorrect area data. We offer examples of each teams of errors within the paper.

Because of the giant distinction in error distribution, we hypothesize that when specialists are complemented with fashions, their joint functionality turns into a lot greater than any single knowledgeable or mannequin alone. To ascertain this, we compute a model-expert oracle, a best-of-2 metric the place an oracle completely chooses the very best reply between the mannequin and the knowledgeable, which yields 87.2% accuracy and 82.8% F1 on our information. That is far above current mannequin capabilities and units a brand new superhuman frontier for LLMs, VLMs, and TSFMs to attain.

What’s subsequent: time collection reasoning as a core part of brokers

Within the broader scope of incident response, ARFBench solely incorporates questions concentrating on analysis and reasoning. Nonetheless, we envision that robust analysis and reasoning skills will play a big half in end-to-end agentic programs (e.g., SRE or incident response brokers) that require time collection reasoning as a subroutine in understanding the incident. Whereas ARFBench can be utilized to judge time collection brokers, it isn’t at the moment a multi-turn benchmark. Nonetheless, we consider that future brokers and fashions that carry out properly on the single-turn ARFBench will finally carry out higher on end-to-end duties.

Getting began with ARFBench

If you’re considering testing your mannequin on ARFBench, you will discover the benchmark and leaderboard, and mannequin weights on Hugging Face, and the code on GitHub. To be taught extra, learn our paper.