Introduction

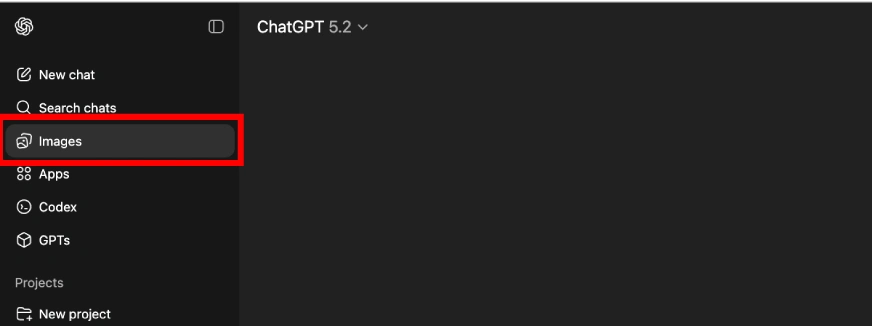

What makes Vercel and Netlify central to web development in 2026?

In the past decade, front-end cloud platforms transformed how developers ship web applications. The Jamstack movement and modern frameworks like React and Next.js separated the backend from the frontend, turning complex deploys into a single Git push. Platforms like Netlify, launched in 2014, and Vercel, founded in 2015, popularized this concept by offering global CDNs, serverless functions and automated builds. Today, millions of developers rely on them for hosting everything from personal blogs to production-grade SaaS applications. Choosing between them is no longer about whether you can deploy; it's about aligning your workflow, performance needs, pricing model, and long-term strategy.

After the introduction, we provide a Quick Digest summarizing the key differences and then dive deep into deployment workflows, framework support, compute models, pricing, performance, security, AI integration, use cases, migration, emerging trends, and FAQs.

Quick Digest

- Deployment Workflow: Netlify offers an intuitive drag-and-drop interface alongside Git-based deploys, making it ideal for static sites and Jamstack projects. Vercel tightly integrates with Git and creates preview URLs for every branch, which benefits teams working on Next.js apps.

- Framework Support: Netlify supports many static site generators and frameworks, including Gatsby, Hugo, Vue and Angular. Vercel is optimized for Next.js and React, offering seamless SSR, ISR and edge caching.

- Compute Models: Both platforms support serverless functions, but Netlify also includes edge functions, background tasks, scheduled functions and durable functions. Vercel offers serverless and edge functions but is stricter with execution limits and lacks durable functions.

- Pricing & Free Tiers: The free tiers include 100 GB of bandwidth and limited function invocations. Netlify's free plan allows commercial use, while Vercel's free tier is meant for hobby projects and prohibits monetization. Pro plans start around $20/user/month and scale with bandwidth and function usage.

- Performance & Scalability: Vercel's edge network provides a lower time-to-first-byte (≈70 ms) compared with Netlify's ~90 ms. Vercel also offers faster build times for medium Next.js apps (1–2 minutes vs. Netlify's 2–3 minutes).

- Security & Compliance: Both platforms are SOC 2 and GDPR compliant, offer automatic SSL certificates, and include DDoS protection. Netlify adds built-in form handling and identity services, while Vercel relies on external providers.

- AI Integration: Vercel's AI SDK and AI Gateway simplify building chatbots but are bound by serverless timeouts. Netlify includes AI development credits and tools like Agent Runners, while Vercel's AI Gateway requires paid usage. For production-grade AI workloads, specialized platforms like Clarifai offer compute orchestration, persistent inference and local runners.

The Rise of Front-End Cloud Platforms

Why are Vercel and Netlify important for modern web development?

Modern web development shifted from monolithic servers to decoupled architectures where the front end is served separately from the backend. Platforms like Netlify and Vercel catalyzed this change by offering instant global deployments through a CDN, automated builds from Git and serverless functions. Netlify, launched in 2014, pioneered the Jamstack movement, making static site deployment trivial. Vercel followed in 2015 with a focus on Next.js, offering seamless SSR and incremental static regeneration (ISR) for dynamic React applications. By 2026, these platforms power blogs, e-commerce sites, SaaS products and AI prototypes.

The choice between them reflects broader trends in web architecture. Developers no longer ask how to deploy; they choose which platform fits their workflow. Vercel's opinionated stack is optimized for performance and tight integration with Next.js, while Netlify champions an open, framework-agnostic ecosystem with built-in features like forms, identity and plugin automation. Both offer global edge networks (100+ locations) to ensure fast loading across continents.

Expert Insights

- Shift to serverless and edge: The adoption of Netlify and Vercel underscores a movement away from traditional hosting toward serverless and edge-native architectures. This change reduces infrastructure management and accelerates feature delivery.

- Philosophy matters: Vercel's focus on a Next.js-centric stack delivers exceptional DX for React developers but can constrain flexibility. Netlify's framework-agnostic approach appeals to agencies and teams who juggle multiple stacks.

- Future of deployment: As the complexity of front-end applications grows, the deployment platform must handle caching strategies, on-the-fly rendering and integration with AI services. Understanding each platform's philosophy is key to making a long-term decision.

Deployment Workflow & Developer Experience

How do deployment workflows and developer experience differ?

Both platforms minimize deployment friction, but they do so in distinct ways. Netlify provides an intuitive drag-and-drop UI for static sites and automatically builds from Git repositories, making it ideal for Jamstack projects. It also supports deploying multiple sites from a monorepo using build contexts and directory targeting. Netlify's CLI (netlify dev) emulates the production environment locally, allowing developers to test functions, redirects and environment variables before pushing code.

Vercel integrates deeply with Git and automatically generates preview deployments for each branch or pull request. Its CLI (vercel dev, vercel --prod) provides real-time feedback during builds and quickly spins up preview environments. Vercel's opinionated project structure (especially in Next.js) offers convention over configuration, which accelerates early development but can be restrictive for non-React frameworks.

Expert Insights

- Build times matter: For a medium Next.js app, Vercel completes builds in about 1–2 minutes, while Netlify takes 2–3 minutes. This difference can be significant in continuous deployment pipelines.

- Preview environments: Both platforms offer one-click rollbacks and per-branch previews, but Vercel's preview URLs are especially well integrated into Git workflows, improving collaboration across teams.

- Local parity: Netlify's local development environment closely mirrors production, reducing surprises at deployment time. Vercel's local emulation is strong for Next.js but may require configuration for other frameworks.

- Monorepo support: Netlify allows multiple sites from one repository via build contexts; Vercel supports monorepos but requires manual configuration and project linking.

Framework & Language Support

Which frameworks do Vercel and Netlify support best?

Netlify prides itself on being framework-agnostic. It supports static site generators and modern frameworks like Gatsby, Hugo, Vue, Angular, SvelteKit, Astro and Remix. Because Netlify's build system is decoupled from any particular framework, developers can run custom build commands and deploy a variety of technologies with minimal friction. Static sites and Jamstack applications run particularly well, but Netlify also delivers dynamic capabilities via serverless and edge functions.

Vercel offers deep integration with Next.js. It automatically configures server-side rendering, static generation and incremental static regeneration (ISR) for React applications. While other frameworks (Nuxt, SvelteKit, Astro) can deploy on Vercel, they may not benefit from the same level of built-in optimization. According to comparative tables, Vercel is rated highest for Next.js, while Netlify scores better for frameworks like Astro and Remix.

Expert Insights

- React/Next.js edge: If your application uses Next.js heavily, especially the App Router or server components, Vercel offers automatic configuration and performance benefits. Netlify achieves feature parity for production-ready Next.js features but lacks early access to experimental features.

- Diverse ecosystems: Netlify's broad framework support allows agencies and multi-team organizations to standardize on one deployment platform without being tied to a specific ecosystem.

- Nuxt and Astro: Developers using Vue/Nuxt or content-focused frameworks like Astro often prefer Netlify for its simpler configuration and consistent support across frameworks.

Edge Functions, Serverless & Compute Models

What serverless and edge capabilities distinguish the platforms?

Both platforms offer serverless functions that run code in response to HTTP requests. Netlify's compute palette is broader: it includes traditional serverless functions, edge functions, background functions for long-running tasks (up to 15 minutes), scheduled functions (cron jobs) and durable functions that persist across deployments. This flexibility allows developers to handle asynchronous workflows, time-based tasks and atomic operations without leaving the platform.

Vercel provides serverless and edge functions but lacks background or durable functions. Its edge functions run on V8 isolates and start up in milliseconds, resulting in very low time-to-first-byte for lightweight tasks. However, serverless functions on Vercel's Hobby plan are capped at 10 seconds, and Pro plans allow up to 5 minutes. Long-running or compute-intensive workloads may hit these limits quickly. Netlify's functions also have timeouts (10 seconds on the free tier) but support longer durations for background tasks.

Expert Insights

- Cold starts vs. edge: Vercel's edge functions eliminate cold starts for simple tasks and are ideal for streaming APIs or chat interfaces. Netlify's edge functions provide similar capabilities but may have slightly higher cold start latency.

- Asynchronous jobs: Netlify's background and scheduled functions make it easier to run reports, send emails or process queues without external services.

- Durability: Durable functions on Netlify persist state across deployments, enabling atomic operations and reducing duplication.

- AI workloads: Serverless limits can hinder complex AI reasoning. Teams often pair front-end deployments with dedicated AI orchestration platforms like Clarifai, which provide persistent compute and local runners for long-running inference tasks.

Pricing & Cost Structures

How do pricing models and free tiers differ?

Both platforms offer generous free tiers that include 100 GB of bandwidth and limited build minutes or function invocations. Netlify's free plan can be used for commercial projects, while Vercel's free tier prohibits monetization and is intended for hobby projects. Each platform moves to a credit- or seat-based Pro plan starting around $19-20 per member/month, with bandwidth and function limits scaling accordingly.

Vercel's pricing charges per user and per GB-hour of serverless execution, which can become expensive at scale. Netlify sells credits covering bandwidth, build minutes and compute, but costs for add-ons like forms or identity can make predicting your bill tricky. Both platforms offer enterprise plans with custom SLAs, SSO/SAML authentication and enhanced security features.

Expert Insights

- Commercial use: If you plan to monetize your product, be aware that Vercel expects you to upgrade from the free tier immediately. Netlify lets startups run low-traffic, revenue-generating sites on the free tier as long as they stay within limits.

- Predictability vs. flexibility: Vercel's model scales linearly with usage, making costs more predictable but often higher. Netlify's credit system is more flexible but can lead to unexpected overages when using paid add-ons.

- Function timeouts and cost: Because Vercel bills by GB-hour, long-running serverless functions can accrue costs quickly. Netlify's per-invocation pricing may be cheaper for simple tasks but lacks transparency beyond the free tier.
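To make the GB-hour point concrete, here is a rough sketch of the arithmetic in TypeScript. The `Workload` shape, the function names, the included allowance and the per-GB-hour rate are all illustrative assumptions, not published prices from either vendor; substitute the figures from your own plan.

```typescript
// Sketch: estimate serverless compute in GB-hours and the resulting overage.
// Every rate and workload figure below is a placeholder, not a real price.

interface Workload {
  invocationsPerMonth: number; // how many times the function runs
  avgDurationMs: number;       // average wall-clock time per run
  memoryMb: number;            // memory configured for the function
}

// GB-hours = invocations x duration (in hours) x memory (in GB)
function gbHours(w: Workload): number {
  const hoursPerInvocation = w.avgDurationMs / 1000 / 3600;
  const memoryGb = w.memoryMb / 1024;
  return w.invocationsPerMonth * hoursPerInvocation * memoryGb;
}

// Cost of usage beyond an included allowance, at a flat per-GB-hour rate.
function overageCost(
  usedGbHours: number,
  includedGbHours: number,
  ratePerGbHour: number,
): number {
  return Math.max(0, usedGbHours - includedGbHours) * ratePerGbHour;
}

// Hypothetical chat endpoint: 3.6M calls a month, 2 s each, 1 GB of memory.
const chatEndpoint: Workload = {
  invocationsPerMonth: 3_600_000,
  avgDurationMs: 2_000,
  memoryMb: 1024,
};

const usedGbHours = gbHours(chatEndpoint);
const monthlyOverage = overageCost(usedGbHours, 1_000, 0.5);
console.log(`GB-hours used: ${usedGbHours.toFixed(1)}, overage: ${monthlyOverage.toFixed(2)}`);
```

Because duration multiplies directly into the total, doubling the average run time of a function doubles its GB-hours, which is why long-running handlers dominate this kind of bill.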

Performance & Scalability

Which platform offers better performance and scalability?

Performance depends on both global delivery and build speed. Vercel's edge network delivers a Time-to-First-Byte (TTFB) of around 70 ms on average. Netlify clocks in at roughly 90 ms, and Cloudflare Pages (another competitor) reaches ~50 ms. For a medium Next.js app, Vercel's caching and build optimizations produce builds in 1–2 minutes, while Netlify takes 2–3 minutes.

Both platforms distribute content via a global CDN (100+ points of presence) and support incremental static regeneration (ISR) to revalidate pages on demand. Netlify provides an image CDN and granular cache control headers for fine-grained caching, while Vercel ties caching strategies to specific frameworks. Netlify's durable directive reduces function calls and improves performance across frameworks. Vercel's edge runtime excels at streaming dynamic content but is highly optimized for Next.js.
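As an illustration of header-based cache control, the sketch below assembles response headers for a function. It assumes Netlify's `Netlify-CDN-Cache-Control` header and its `durable` directive behave as described in Netlify's caching documentation; the `CachePolicy` type and `buildCacheHeaders` helper are hypothetical names invented for this example, so verify the exact directives before relying on them.

```typescript
// Sketch: build browser and CDN cache headers for a function response.
// The header name and `durable` directive are assumed from Netlify's docs;
// CachePolicy and buildCacheHeaders are illustrative names only.

interface CachePolicy {
  browserMaxAgeSec: number;         // how long browsers may reuse the response
  cdnMaxAgeSec: number;             // how long the CDN may reuse the response
  staleWhileRevalidateSec?: number; // serve stale content while refreshing
  durable?: boolean;                // request a shared, longer-lived CDN copy
}

function buildCacheHeaders(policy: CachePolicy): Record<string, string> {
  const cdnDirectives: string[] = ["public"];
  if (policy.durable) {
    cdnDirectives.push("durable");
  }
  cdnDirectives.push(`max-age=${policy.cdnMaxAgeSec}`);
  if (policy.staleWhileRevalidateSec !== undefined) {
    cdnDirectives.push(`stale-while-revalidate=${policy.staleWhileRevalidateSec}`);
  }
  return {
    // Browsers revalidate on each request; the CDN holds the cached copy.
    "Cache-Control": `public, max-age=${policy.browserMaxAgeSec}, must-revalidate`,
    "Netlify-CDN-Cache-Control": cdnDirectives.join(", "),
  };
}

const productPageHeaders = buildCacheHeaders({
  browserMaxAgeSec: 0,
  cdnMaxAgeSec: 3600,
  staleWhileRevalidateSec: 60,
  durable: true,
});
console.log(productPageHeaders);
```

Splitting the browser policy from the CDN policy lets the CDN absorb repeat traffic while browsers still check for fresh content, which is the mechanism by which fewer requests reach the function.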

Expert Insights

- Edge network parity: Both platforms operate on extensive edge networks; differences in TTFB are minor for most applications.

- Build optimizations: Vercel's Turbopack (in beta) promises even faster builds, while Netlify continues to expand its build plugin ecosystem.

- Cache control: Netlify's explicit cache headers and cache debugging tools offer developers more control over CDN behavior.

- Scaling beyond static: For highly dynamic sites requiring heavy API interactions, serverless cold starts and concurrency limits may become bottlenecks. Using dedicated backends or compute orchestration platforms can mitigate these constraints.

Security, Compliance & Data Storage

How do the platforms handle security and data management?

Both Vercel and Netlify adhere to SOC 2 Type 2 and GDPR standards and provide automatic SSL certificates and DDoS protection. They allow custom firewall rules and rapid global rate limiting. Vercel includes built-in bot challenges (CAPTCHAs) on all plans, while Netlify relies on third-party integrations for advanced bot management.

Netlify provides built-in forms handling and identity services, enabling simple authentication flows without external providers. Vercel lacks native form or authentication services, pushing teams to third-party services. For data storage, both offer object storage and key-value stores; Vercel's Edge Config provides low-latency feature flags, while Netlify's Cache API supports key-value caching. Databases on both platforms are supported via partners.

Expert Insights

- Enterprise security: Advanced features such as single sign-on (SSO), SCIM provisioning and web application firewalls are available on enterprise plans; evaluate whether you need them early to avoid surprises.

- Built-in identity vs. external providers: Netlify's native identity service simplifies user management for simple sites. Vercel teams typically integrate Auth0, Clerk or custom auth providers.

- Compliance beyond SOC 2: If you require HIPAA or FedRAMP compliance, verify with each vendor or consider hosting sensitive backend services separately.

AI Integration & Developer Tools

How do AI features and developer tools compare?

AI integration is an emerging differentiator. Vercel's AI SDK allows developers to build streaming chat interfaces quickly; during internal tests, connecting a Next.js frontend to an OpenAI backend required fewer than 20 lines of code. The SDK abstracts streaming protocols, backpressure and provider switching. Edge execution further improves time-to-first-byte, eliminating container cold starts for lightweight inference tasks. Vercel's AI Gateway provides a unified endpoint for multiple models but charges per request and lacks AI-specific observability metrics.

Netlify includes AI development credits in all plans, supports AI agent workflows through tools like Agent Runners, and lets teams rate-limit and manage token usage through a unified gateway. However, Netlify does not yet match Vercel's deep Next.js integration for streaming.

Both platforms share limitations inherent to serverless environments: functions are capped at a few minutes, and edge functions restrict the time between request and first byte. These constraints make complex AI reasoning or long-running research agents difficult to host directly on Vercel or Netlify. Clarifai addresses this gap by offering compute orchestration, model inference and local runners that run on persistent infrastructure. Developers can deploy their web front end on Netlify or Vercel and connect to Clarifai's backend to handle heavy AI workloads, benefiting from features like asynchronous job queues, persistent API endpoints and integrated data labeling.

Expert Insights

- Rapid prototyping vs. production: Vercel's AI SDK and Netlify's AI tools excel for prototypes and low-latency chat experiences. For production-grade AI pipelines, dedicated orchestration platforms provide persistent runtime environments and scalable GPUs.

- Timeout awareness: Be mindful of execution limits; asynchronous or streaming tasks may need to split into shorter operations or offload heavy processing to separate services.

- Observability: Neither platform currently offers built-in token-level observability for LLMs. Use external monitoring tools or Clarifai's integrated dashboard to track latency, throughput and cost.
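One way to act on the timeout advice is to process work against a time budget and hand back a cursor so a later invocation can resume. The sketch below is a generic pattern, not an API from Vercel or Netlify; `processWithinBudget`, `BatchResult` and the injectable clock are hypothetical names, and the budget should be set comfortably below your plan's actual limit.

```typescript
// Sketch: process items until a time budget is spent, then return a cursor
// so a follow-up invocation can resume. All names here are hypothetical.

interface BatchResult<R> {
  results: R[];
  nextIndex: number | null; // null means every item was processed
}

function processWithinBudget<T, R>(
  items: T[],
  startIndex: number,
  budgetMs: number,
  work: (item: T) => R,
  now: () => number = Date.now, // injectable clock, useful in tests
): BatchResult<R> {
  const deadline = now() + budgetMs;
  const results: R[] = [];
  let index = startIndex;
  while (index < items.length) {
    // Stop before starting another item once the budget is used up.
    if (now() >= deadline) {
      return { results, nextIndex: index };
    }
    results.push(work(items[index]));
    index++;
  }
  return { results, nextIndex: null };
}

// Usage: a fake clock advances 4 ms per item, with a 10 ms budget per call.
let fakeTimeMs = 0;
const double = (n: number): number => {
  fakeTimeMs += 4;
  return n * 2;
};
const firstPass = processWithinBudget([1, 2, 3, 4, 5], 0, 10, double, () => fakeTimeMs);
const secondPass = processWithinBudget([1, 2, 3, 4, 5], firstPass.nextIndex ?? 0, 10, double, () => fakeTimeMs);
console.log(firstPass, secondPass);
```

The check runs before each item rather than during it, so a single slow item can still overrun the budget; work that cannot be split this finely is the case for offloading to a separate service.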

Use Cases & Target Audiences

When should you choose Vercel or Netlify?

- Static and marketing sites: Netlify shines for simple sites, blogs, documentation and marketing pages. Its drag-and-drop deploys, built-in forms and identity service suit marketers and content teams.

- Multi-framework applications: Agencies and organizations that work with diverse frameworks benefit from Netlify's broad compatibility and plugin ecosystem.

- Dynamic Next.js apps: Vercel is the default choice for React/Next.js projects requiring ISR, SSR or streaming. It provides automatic preview URLs for every pull request.

- Early-stage SaaS and demos: Both platforms are excellent for MVPs and prototypes. Netlify's generous free tier allows commercial use, while Vercel's free tier is ideal for personal demos.

- AI-heavy applications: When your product relies on large-language-model inference or agentic workflows, deploy the UI on Netlify or Vercel and connect to a specialized AI platform like Clarifai to handle long-running inference and compute orchestration.

Expert Insights

- Think ahead: Choose a platform aligned with your anticipated growth; migrating later can be time-consuming.

- Integrate with AI early: Even if AI isn't a core part of the product today, designing your architecture to connect with dedicated AI services makes future integration easier.

- Consider team size: Pricing scales per user on Vercel and per team member on Netlify; large teams may find Netlify more cost-effective initially.

Migration & Vendor Lock-In Considerations

What should you know about migrating between platforms and avoiding lock-in?

Migrating from Vercel to Netlify is generally straightforward for Next.js applications; many projects can switch in under an hour, and Netlify automatically detects most Next.js settings. Moving in the opposite direction requires removing Netlify-specific configurations, such as redirect rules and forms, and updating environment variable references. The primary challenges arise from platform-specific features like ISR caching, edge middleware or build plugins.

Vendor lock-in stems from deeper architectural dependencies. Vercel's edge middleware runs on a proprietary runtime that doesn't support native Node APIs; code written for it may require rewrites when migrating to standard servers. Netlify's plugin system and identity service also create dependencies, but these are easier to remove. Legal restrictions also matter: Vercel's free tier forbids commercial use, so teams planning to monetize should budget for a paid plan from day one.

Expert Insights

- Abstract your logic: Keep business logic in reusable modules or separate services so that deployment configuration is the only platform-specific part.

- Avoid proprietary middleware: Limit reliance on platform-specific middleware or invest time in writing portable fallbacks.

- Read the fine print: Understand free-tier limitations and terms of service before launching; commercial use on Vercel's free plan violates its policy.
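The "abstract your logic" advice can be sketched as a platform-neutral handler plus a thin adapter. The request, response and event shapes below are simplified stand-ins invented for this example; they are not the real Netlify or Vercel handler signatures, which you would map onto these types in a small per-platform file.

```typescript
// Sketch: keep business logic platform-neutral and confine platform details
// to thin adapters. All types here are simplified, hypothetical stand-ins.

interface PortableRequest {
  method: string;
  path: string;
  query: Record<string, string | undefined>;
}

interface PortableResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}

// Pure business logic: no platform imports, so it moves between hosts as-is.
function greet(req: PortableRequest): PortableResponse {
  if (req.method !== "GET") {
    return { status: 405, headers: { Allow: "GET" }, body: "Method Not Allowed" };
  }
  const who = req.query["name"] ?? "world";
  return {
    status: 200,
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ message: `Hello, ${who}!` }),
  };
}

// Hypothetical event shape for some hosting platform.
interface HypotheticalEvent {
  httpMethod: string;
  rawPath: string;
  queryStringParameters?: Record<string, string | undefined>;
}

// Adapter: the only code that would change when switching platforms.
function fromEvent(event: HypotheticalEvent): PortableRequest {
  return {
    method: event.httpMethod.toUpperCase(),
    path: event.rawPath,
    query: event.queryStringParameters ?? {},
  };
}

const greeting = greet(
  fromEvent({ httpMethod: "get", rawPath: "/api/hello", queryStringParameters: { name: "Ada" } }),
);
console.log(greeting.status, greeting.body);
```

With this split, a migration touches the adapter and the deployment configuration, while `greet` and its tests stay unchanged.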

Future Outlook & Emerging Trends

What does the future hold for these platforms?

Industry roadmaps suggest rapid innovation. Vercel's 2025–26 focus includes AI-powered development tools (v0), enhanced observability, Turbopack for faster builds, edge storage (KV and Postgres at the edge), and advanced caching strategies. Netlify plans to expand its composable architecture, build plugins ecosystem, monorepo support, more powerful edge handlers and AI integration features. Both platforms continue investing in AI capabilities, edge computing and developer experience improvements.

Beyond official roadmaps, emerging trends include edge AI inference, where models run close to users to minimize latency; multi-cloud deployment, allowing teams to spread workloads across providers; and improved observability to monitor performance and cost at fine granularity. Specialized AI platforms like Clarifai will increasingly play a role in orchestrating model training, deployment and inference, complementing front-end deployment platforms.

Expert Insights

- AI at the edge: As edge runtimes mature, expect to see small models deployed directly on CDNs for ultra-low latency responses. Larger models will still require dedicated GPU backends.

- Composable architectures: Netlify's investments in composable infrastructure suggest greater flexibility in chaining services and customizing build pipelines.

- Observability: Detailed metrics for build performance, cost and AI inference will become table stakes, helping teams optimize deployments.

Conclusion & FAQs

Choosing between Vercel and Netlify in 2026 depends on your framework, use case, scale expectations and whether AI workloads play a role. Vercel offers exceptional support for Next.js, faster builds and edge streaming, but its pricing and free-tier restrictions may deter some teams. Netlify provides broad framework support, built-in features like forms and identity, and a commercial-friendly free plan, but its performance is slightly slower and some advanced features require add-ons. For AI-heavy applications or long-running tasks, coupling either platform with a specialized AI service such as Clarifai delivers the scalability and observability necessary for production.

FAQs

- Which platform is best for my project? If you're building a dynamic Next.js app with server components, Vercel's tight integration will save time. For static sites, Jamstack apps or multi-framework projects, Netlify's flexibility and built-in features make it a strong choice.

- Are Netlify and Vercel really free? Both offer free tiers, but Netlify's free plan allows commercial use. Vercel's free tier is for hobby projects and prohibits monetization.

- How do serverless limits affect AI workloads? Serverless functions on both platforms have strict timeouts (10 seconds on hobby plans and up to 5 minutes on paid plans). Complex AI reasoning or long-running inference exceeds these limits; using dedicated AI platforms like Clarifai solves this challenge.

- Can I migrate between platforms? Yes. Moving from Vercel to Netlify is usually straightforward and can be done in under an hour for Next.js apps. Migrating the other way requires removing Netlify-specific configurations and understanding Vercel's opinionated structure.

- Do I need both a deployment platform and an AI platform? If your application involves advanced AI tasks, you likely will. Use Netlify or Vercel for front-end deployment and Clarifai for model hosting, inference and compute orchestration to ensure scalability and observability.

Bishesh Adhikari

Hin Yee Liu

Palash Choudhury