This blog post focuses on new features and improvements. For a comprehensive list, including bug fixes, please see the release notes.

Building Production-Ready Agentic AI at Scale

Agentic AI systems are moving from research prototypes to production workloads. These systems don’t just generate responses. They reason over multi-step tasks, call external tools, interact with APIs, and execute long-running workflows autonomously.

But production agentic AI requires more than powerful models. It requires infrastructure that can deploy agents reliably, manage the tools they depend on, handle state across complex workflows, and scale across cloud, on-prem, or hybrid environments without vendor lock-in.

Clarifai’s Compute Orchestration was built for this. It provides the infrastructure layer to deploy any model on any compute, at any scale, with built-in autoscaling, multi-environment support, and centralized control. This release extends those capabilities specifically for agentic workloads, making it easier to build, deploy, and manage production agentic AI systems.

With Clarifai 12.1, you can now deploy public MCP (Model Context Protocol) servers directly on the platform, giving agentic models access to browsing capabilities, real-time data, and developer tools without managing server infrastructure. Combined with support for custom MCP servers and agentic model uploads, Clarifai provides a complete orchestration layer for agentic AI, from development to production deployment.

This release also introduces Artifacts, a versioned storage system for files produced by pipelines, and Pipeline UI improvements that streamline monitoring and control of long-running workflows.

Let’s walk through what’s new and how to get started.

Deploying Public MCP Servers for Agentic AI

Agentic AI systems break when models can’t access the tools they need. A reasoning model might know how to browse the web, execute code, or query a database, but without the infrastructure to actually call those tools, it is limited to generating text.

Model Context Protocol (MCP) servers solve this. They’re specialized web services that expose tools, data sources, and APIs to LLMs in a standardized way. An MCP server acts as the bridge between a model’s reasoning capabilities and real-world actions, like fetching live weather data, navigating web pages, or interacting with external systems.

Clarifai already supports custom MCP servers, allowing teams to build their own tool servers and run them on the platform using Compute Orchestration. This gives full control over what tools agents can access, but it requires writing and maintaining custom server code.

With 12.1, we’re making it easier to get started by adding support for public MCP servers. These are open-source, community-maintained MCP servers that you can deploy on Clarifai with a simple configuration, without writing or hosting the server yourself.

How Public MCP Servers Work

Public MCP servers are deployed as models on the Clarifai platform. Once deployed, they run as managed API endpoints on Compute Orchestration infrastructure, handling tool execution and returning results to agentic models during inference.

Here’s what the workflow looks like:

- Deploy a public MCP server as a model on Clarifai using the CLI or SDK

- Connect it to an agentic model that supports tool calling and MCP integration

- The model discovers available tools from the MCP server during inference

- The model calls tools as needed, and the MCP server executes them and returns results

- The model uses those results to continue reasoning or complete the task

The entire flow is managed by Compute Orchestration. The MCP server runs as a containerized deployment, scales based on demand, and can be deployed across any compute environment (cloud, on-prem, or hybrid) just like any other model on the platform.
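To make the discover, call, and continue loop concrete, here is a minimal in-process sketch. It does not use the Clarifai SDK or a real MCP client: `ToyMCPServer`, `Tool`, `run_agent`, and the `get_weather` tool are hypothetical names invented for illustration, and the "model" is a scripted function rather than an LLM.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    """A tool exposed by the simulated server (hypothetical shape)."""
    name: str
    description: str
    handler: Callable[..., Any]


class ToyMCPServer:
    """In-process stand-in for a deployed MCP server (assumption, not a real client)."""

    def __init__(self, tools: List[Tool]) -> None:
        self._tools = {tool.name: tool for tool in tools}

    def list_tools(self) -> List[Dict[str, str]]:
        # Discovery: advertise the available tools to the model.
        return [{"name": t.name, "description": t.description} for t in self._tools.values()]

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Any:
        # Execution: run the requested tool and hand back its result.
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].handler(**arguments)


def run_agent(server: ToyMCPServer, city: str, max_steps: int = 5) -> str:
    """Scripted 'model': uses a weather tool if one is advertised, then answers."""
    available = {tool["name"] for tool in server.list_tools()}
    observations: List[str] = []
    for _ in range(max_steps):
        if "get_weather" in available and not observations:
            observations.append(server.call_tool("get_weather", {"city": city}))
            continue  # feed the result back and keep reasoning
        break  # nothing left to call: finish the task
    if not observations:
        return f"I have no tool to look up the weather in {city}."
    return f"Weather in {city}: {observations[0]}"


def fake_weather(city: str) -> str:
    # Canned data; a real server would query an external weather API.
    return {"Lisbon": "sunny, 24 C"}.get(city, "unknown")


server = ToyMCPServer([Tool("get_weather", "Look up current weather by city", fake_weather)])
print(run_agent(server, "Lisbon"))  # Weather in Lisbon: sunny, 24 C
```

In a real deployment the discovery and execution calls cross the network to the managed endpoint, and the model decides which tool to call; the control flow stays the same.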

Available Public MCP Servers

We’ve published several open-source MCP servers on the Clarifai Community that you can deploy today:

Browser MCP Server

Gives agentic models the ability to navigate web pages, extract content, take screenshots, and interact with web forms. Useful for research tasks, data gathering, or any workflow that requires real-time web interaction.

Weather MCP Server

Provides real-time weather data lookup by location. A simple example of how MCP servers can connect models to external APIs without requiring the model to handle authentication or API-specific logic.

These servers are already deployed and running on the platform. You can use them directly with any agentic model, or reference them as examples when deploying your own public MCP servers.

Deploying Your Own Public MCP Server

If you want to deploy an open-source MCP server from the community, the process is straightforward. You provide a configuration pointing to the MCP server repository, and Clarifai handles containerization, deployment, and scaling.

Here’s an example of deploying the Browser MCP server using the same workflow as uploading a custom model. The full example is available in the Clarifai runners-examples repository.

The configuration follows the same structure as any other model upload on Clarifai. You define the server’s runtime, dependencies, and compute requirements, then upload it using the CLI:

clarifai model upload

Once deployed, the MCP server becomes a callable API endpoint.

Using MCP Servers with Agentic Models

Several models on the Clarifai platform natively support agentic capabilities and can integrate with MCP servers during inference. These models are built with tool calling and iterative reasoning, allowing them to discover, call, and process results from MCP servers without additional configuration.

Models with agentic MCP support include:

When you call one of these models through the Clarifai API, you can specify which MCP servers it should have access to. The model handles tool discovery and execution during inference, iterating until the task is complete.

You can also upload your own agentic models with MCP support using the AgenticModelClass. This extends the standard model upload workflow with built-in support for tool discovery and execution. A complete example is available in the agentic-gpt-oss-20b repository, showing how to upload an agentic reasoning model that integrates with MCP servers.

Why This Matters for Production Agentic AI

Deploying MCP servers on Compute Orchestration means you get the same infrastructure benefits as any other workload on the platform:

- Deploy anywhere: MCP servers can run on Clarifai’s shared compute, dedicated instances, or your own infrastructure (VPC, on-prem, air-gapped)

- Autoscaling: Servers scale up or down based on demand, with support for scale-to-zero when idle

- Centralized control: Monitor performance, manage costs, and control access through the Clarifai Control Center

- No vendor lock-in: Run the same MCP servers across different environments without reconfiguration

This is production-grade orchestration for agentic AI. MCP servers aren’t just running locally or on a single cloud provider. They’re deployed as managed services with the same reliability, scaling, and control you’d expect from any enterprise AI infrastructure.

For a step-by-step guide on deploying public MCP servers, connecting them to agentic models, and building your own tool-enabled workflows, check out the Clarifai MCP documentation and the examples in the runners-examples repository.

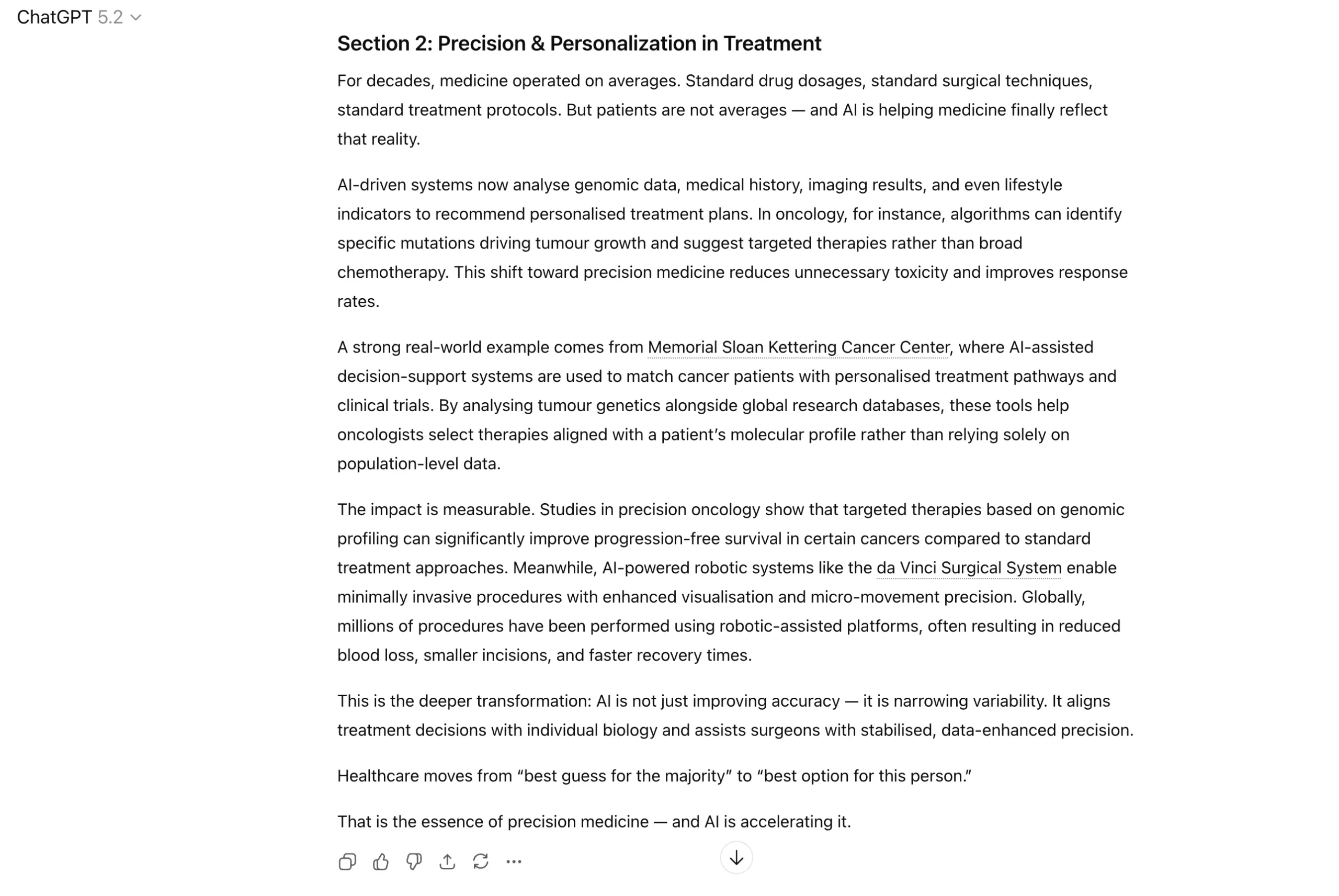

Artifacts: Versioned Storage for Pipeline Outputs

Clarifai Pipelines, introduced in 12.0, let you define and execute long-running, multi-step AI workflows directly on the platform. These workflows handle tasks like model training, batch processing, evaluations, and data preprocessing as containerized steps that run asynchronously on Clarifai’s infrastructure.

Pipelines are currently in Public Preview as we continue iterating based on user feedback.

Pipelines produce files: model checkpoints, training logs, evaluation metrics, preprocessed datasets, configuration files. These outputs are valuable, but until now, there was no standardized way to store, version, and retrieve them across the platform.

With 12.1, we’re introducing Artifacts, a versioned storage system designed specifically for files produced by pipelines or user workloads.

What Are Artifacts

An Artifact is a container for any binary or structured file. Each Artifact can have multiple ArtifactVersions, capturing distinct snapshots over time. Every version is immutable and references the exact file stored in object storage, while metadata like timestamps, descriptions, and visibility settings is tracked in the control plane.

This separation keeps lookups fast and storage costs low.
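The Artifact and ArtifactVersion relationship described above can be pictured with a small data model. This is a sketch under assumptions, not the platform’s implementation: `ToyArtifactStore`, its method names, and the `storage_key` field are hypothetical, a plain dict stands in for object storage, and the dataclasses stand in for control-plane metadata.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class ArtifactVersion:
    """Immutable metadata record; the bytes themselves live in the blob store."""
    version: int
    storage_key: str  # pointer into object storage, not the data
    description: str = ""


@dataclass
class Artifact:
    artifact_id: str
    versions: List[ArtifactVersion] = field(default_factory=list)


class ToyArtifactStore:
    """Hypothetical store: a dict plays object storage, Artifact objects play metadata."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}
        self._artifacts: Dict[str, Artifact] = {}

    def upload(self, artifact_id: str, data: bytes, description: str = "") -> ArtifactVersion:
        artifact = self._artifacts.setdefault(artifact_id, Artifact(artifact_id))
        number = len(artifact.versions) + 1
        key = f"{artifact_id}/v{number}/{hashlib.sha256(data).hexdigest()[:12]}"
        self._blobs[key] = data
        version = ArtifactVersion(number, key, description)
        artifact.versions.append(version)  # earlier versions are never rewritten
        return version

    def list_versions(self, artifact_id: str) -> List[ArtifactVersion]:
        # Answered from metadata only; no blob is read.
        return list(self._artifacts[artifact_id].versions)

    def download(self, artifact_id: str, version: int = 0) -> bytes:
        # version=0 means "latest" in this sketch.
        versions = self._artifacts[artifact_id].versions
        chosen = versions[-1] if version == 0 else versions[version - 1]
        return self._blobs[chosen.storage_key]


store = ToyArtifactStore()
store.upload("checkpoint", b"epoch-1")
store.upload("checkpoint", b"epoch-2", description="after epoch 2")
print(len(store.list_versions("checkpoint")), store.download("checkpoint", version=1))
```

Listing versions touches only the small metadata records, which is the intuition behind keeping lookups fast while the large files sit in cheaper object storage.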

Why Artifacts Matter

Reproducibility: Save the exact files (weights, checkpoints, configs, logs) that produced results, making experiments reproducible and auditable.

Resume and checkpointing: Pipelines can resume from saved checkpoints instead of recomputing, saving time and cost on long-running jobs.

Version control: Track how model checkpoints evolve over time or compare outputs across different pipeline runs.

Using Artifacts with the CLI

The Clarifai CLI provides a simple interface for managing artifacts, modeled after familiar commands like cp for upload and download.

Upload a file as an artifact:

Upload with description and visibility:

Download the latest version:

Download a specific version:

List all artifacts in an app:

List versions of a specific artifact:

The CLI handles multipart uploads for large files automatically, ensuring efficient transfers even for multi-gigabyte checkpoints.
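For readers unfamiliar with multipart transfers, the general idea is to stream a large file in fixed-size parts so that no single request has to carry the whole payload. The sketch below illustrates only that idea; it is not how the Clarifai CLI is implemented, and `iter_parts` and its per-part checksum are assumptions made for the example.

```python
import hashlib
import io
from typing import BinaryIO, Iterator, Tuple


def iter_parts(stream: BinaryIO, part_size: int) -> Iterator[Tuple[int, bytes, str]]:
    """Yield (part_number, chunk, sha256_hex) without loading the whole file."""
    if part_size <= 0:
        raise ValueError("part_size must be positive")
    part_number = 1
    while True:
        chunk = stream.read(part_size)
        if not chunk:
            break  # end of stream
        yield part_number, chunk, hashlib.sha256(chunk).hexdigest()
        part_number += 1


# A 25-byte in-memory payload stands in for a multi-gigabyte checkpoint file.
payload = io.BytesIO(b"x" * 25)
parts = list(iter_parts(payload, part_size=10))
print([(number, len(chunk)) for number, chunk, _ in parts])  # [(1, 10), (2, 10), (3, 5)]
```

Each part can then be sent, verified, and retried independently, which is what makes this approach practical for very large files.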

Using Artifacts with the Python SDK

The SDK provides programmatic access to artifact management, useful for integrating artifact uploads and downloads directly into training scripts or pipeline steps.

Upload a file:

Download a specific version:

List all versions of an artifact:

Artifact Use Cases

Model training workflows: Upload model checkpoints after each training epoch. If training is interrupted, resume from the last saved checkpoint instead of restarting from scratch.

Pipeline outputs: Store evaluation metrics, preprocessed embeddings, or serialized configurations produced by pipeline steps. Reference these artifacts in downstream steps or share them across teams.

Experiment tracking: Version control for all outputs related to an experiment. Track how model performance evolves across training runs or compare artifacts produced by different hyperparameter configurations.

Artifacts are scoped to apps, just like Pipelines and Models. This means access control, versioning, and lifecycle policies follow the same patterns you’re already using for other Clarifai resources.
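The model training use case above follows a common save-then-resume pattern, sketched here with local JSON files in a temporary directory standing in for artifact versions. The function names, file naming scheme, and the halving "loss" are all hypothetical; in a real pipeline step, the save and load points are where artifact uploads and downloads would go.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


def latest_checkpoint(directory: Path) -> Optional[Path]:
    """Return the checkpoint with the highest epoch number, if any exist."""
    checkpoints = sorted(
        directory.glob("epoch_*.json"),
        key=lambda p: int(p.stem.split("_")[1]),
    )
    return checkpoints[-1] if checkpoints else None


def train(directory: Path, total_epochs: int, stop_after: Optional[int] = None) -> int:
    """Run (or resume) a toy training loop; returns the last completed epoch."""
    checkpoint = latest_checkpoint(directory)
    state = json.loads(checkpoint.read_text()) if checkpoint else {"epoch": 0, "loss": 1.0}
    for epoch in range(state["epoch"] + 1, total_epochs + 1):
        state = {"epoch": epoch, "loss": state["loss"] * 0.5}  # stand-in for real work
        # This file is what a pipeline step would upload as a new artifact version.
        (directory / f"epoch_{epoch}.json").write_text(json.dumps(state))
        if stop_after is not None and epoch == stop_after:
            break  # simulate an interruption
    return state["epoch"]


with tempfile.TemporaryDirectory() as tmp:
    workdir = Path(tmp)
    first = train(workdir, total_epochs=5, stop_after=2)  # interrupted after epoch 2
    second = train(workdir, total_epochs=5)               # resumes at epoch 3
    saved = len(list(workdir.glob("epoch_*.json")))
    print(first, second, saved)  # 2 5 5
```

The second call does not repeat epochs 1 and 2, which is the time and cost saving that checkpointing is meant to deliver.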

Pipeline UI Improvements

Managing long-running workflows requires visibility into what’s running, what’s queued, and what failed. With this release, we’ve added several UI improvements to make it easier to monitor and control pipeline execution directly from the platform.

What’s New

Pipelines List

View all pipelines in your app from a single interface. You can see pipeline metadata, creation dates, and quickly navigate to specific pipelines without needing to use the CLI or API.

Pipeline Versions List

Each pipeline can have multiple versions, representing different configurations or iterations of the workflow. The new Versions view lets you browse all versions of a pipeline, compare configurations, and select which version to run.

Pipeline Version Runs View

This is where you monitor active and completed runs. The Runs view shows execution status, timestamps, and logs for each run, making it easier to debug failures or track progress on long-running jobs.

Quick switching between pipelines and versions

Navigate between pipelines, their versions, and individual runs without leaving the UI. This makes it faster to compare results across different pipeline configurations or troubleshoot specific runs.

Start / Pause / Cancel Runs

You can now start, pause, or cancel pipeline runs directly from the UI. Previously, this required CLI or API calls. Now, you can stop a run that’s consuming resources unnecessarily or pause execution to inspect intermediate state.

View run logs

Logs are streamed directly into the UI, so you can monitor execution in real time. This is especially useful for debugging failures or understanding what happened during a specific step in a multi-step workflow.

These improvements make pipelines more accessible for teams that prefer working through the UI rather than solely through the CLI or SDK. You still have full programmatic access through the API, but now you can also manage and monitor workflows visually.

Pipelines remain in Public Preview. We’re actively iterating based on feedback, so if you’re using pipelines and have suggestions for how the UI or execution model could be improved, we’d love to hear from you.

For a step-by-step guide on defining, uploading, and running pipelines, check out the Pipelines documentation.

Additional Changes

Cessation of the Community Plan

We’ve retired the Community Plan and migrated all users to our new Pay-As-You-Go plan, which provides a more sustainable and competitive pricing model.

All users who verify their phone number receive a $5 free welcome bonus to get started. The Pay-As-You-Go plan has no monthly minimums and far fewer feature gates, making it easier to test and scale AI workloads without upfront commitments.

For more details on the new pricing structure, see our recent announcement on Pay-As-You-Go credits.

Python SDK Updates

We’ve made several updates to the Python SDK to improve reliability, developer experience, and compatibility with agentic workflows.

- Added the load_concepts_from_config() method to VisualDetectorClass and VisualClassifierClass to load concepts from config.yaml.

- Added a Dockerfile template that conditionally installs packages required for video streaming.

- Fixed deployment cleanup logic to ensure it targets only failed model deployments.

- Implemented an automatic retry mechanism for OpenAI API calls to gracefully handle transient httpx.ConnectError exceptions.

- Fixed attribute access for OpenAI response objects in agentic transport by using hasattr() checks instead of dictionary .get() methods.
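The last two items in this list describe general patterns that are easy to show in isolation. The sketch below is not the SDK’s code: `call_with_retry` and `read_field` are hypothetical helpers, and Python’s built-in `ConnectionError` stands in for `httpx.ConnectError` so the example runs without third-party packages. With httpx installed, you would pass `retry_on=(httpx.ConnectError,)` instead.

```python
import time
from types import SimpleNamespace
from typing import Any, Callable, Tuple, Type


def call_with_retry(
    func: Callable[[], Any],
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError,),
    attempts: int = 3,
    base_delay: float = 0.01,
) -> Any:
    """Call func, retrying transient errors with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except retry_on:
            if attempt == attempts:
                raise  # out of retries: surface the original error
            time.sleep(base_delay * 2 ** (attempt - 1))


def read_field(obj: Any, name: str, default: Any = None) -> Any:
    """Read a field from either a dict or an attribute-style response object."""
    if isinstance(obj, dict):
        return obj.get(name, default)
    # Response objects expose attributes and have no .get() method.
    return getattr(obj, name) if hasattr(obj, name) else default


calls = {"count": 0}


def flaky() -> str:
    # Fails twice, then succeeds, to mimic a transient connection problem.
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


result = call_with_retry(flaky)
response = SimpleNamespace(content="hello")  # attribute-style object, not a dict
print(result, calls["count"], read_field(response, "content"), read_field(response, "tool_calls"))
# ok 3 hello None
```

Calling `.get()` on an attribute-style object raises `AttributeError`, which is the class of failure the `hasattr()` check avoids.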

For a complete list of SDK updates, see the Python SDK changelog.

Ready to Start Building?

You can start deploying public MCP servers today to give agentic models access to browsing capabilities, real-time data, and developer tools. Deploy them on Clarifai’s shared compute, dedicated instances, or your own infrastructure using the same orchestration layer as your models.

If you’re running long-running workflows, use Artifacts to store and version files produced by pipelines. Upload checkpoints, logs, and outputs directly through the CLI or SDK, and resume execution from saved state when needed.

For teams managing complex pipelines, the new UI improvements make it easier to monitor runs, view logs, and control execution without leaving the platform.

Pipelines and public MCP server support are available in Public Preview. We’d love your feedback as you build.

Sign up here to get started with Clarifai, or check out the documentation. If you have questions or need help while building, join us on Discord. Our community and team are there to help.
