Today, out of an estimated 1 trillion species on Earth, 99.999 percent are considered microbial: bacteria, archaea, viruses, and single-celled eukaryotes. For much of our planet’s history, microbes ruled the Earth, able to live and thrive in the most extreme of environments. Researchers have only begun in the last few decades to contend with the diversity of microbes; it’s estimated that less than 1 percent of known genes have laboratory-validated functions. Computational approaches offer researchers the opportunity to strategically parse this truly astounding amount of information.

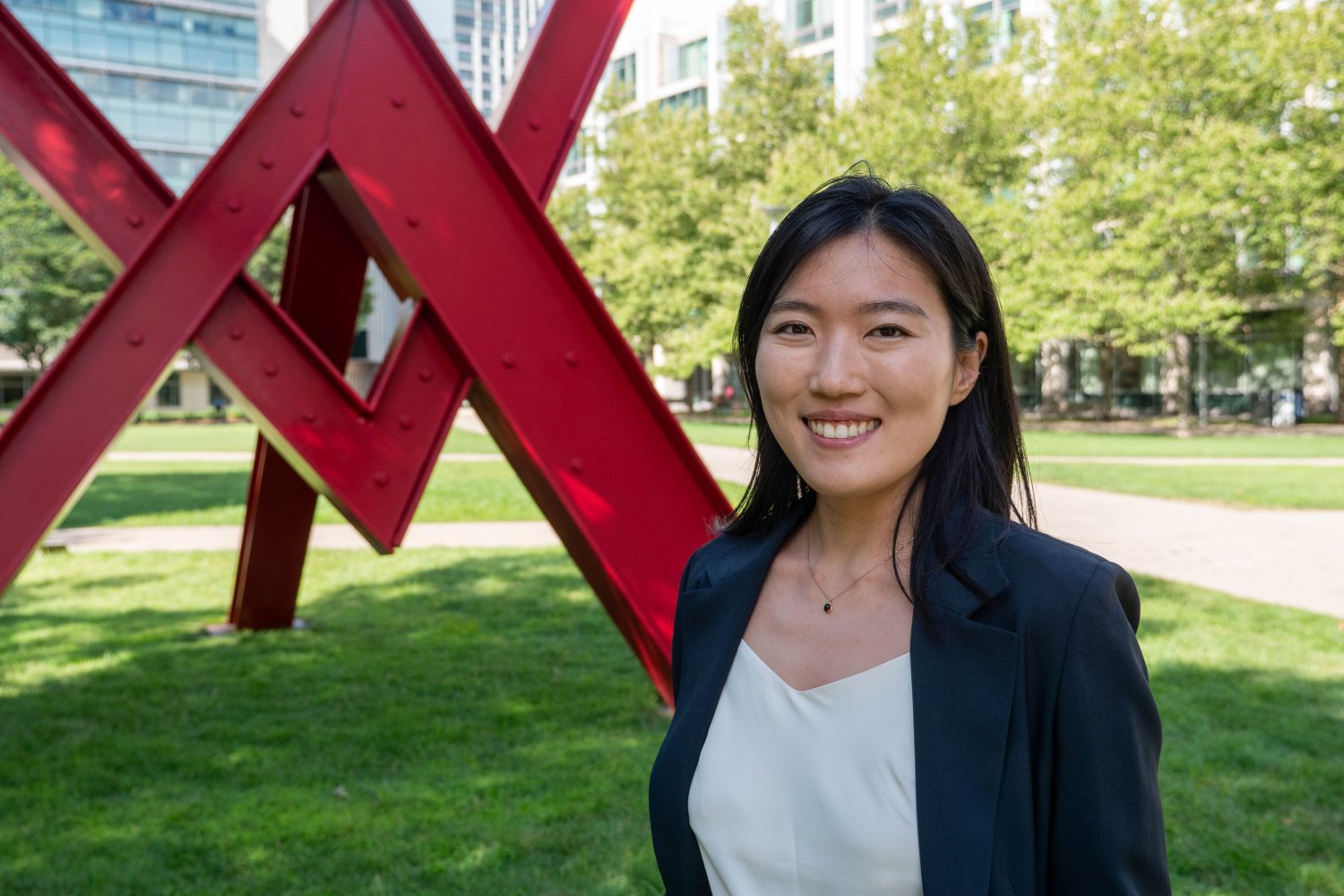

An environmental microbiologist and computer scientist by training, new MIT faculty member Yunha Hwang is interested in the novel biology revealed by the most diverse and prolific life form on Earth. In a shared faculty position as the Samuel A. Goldblith Career Development Professor in the Department of Biology, as well as an assistant professor in the Department of Electrical Engineering and Computer Science and the MIT Schwarzman College of Computing, Hwang is exploring the intersection of computation and biology.

Q: What drew you to research microbes in extreme environments, and what are the challenges in studying them?

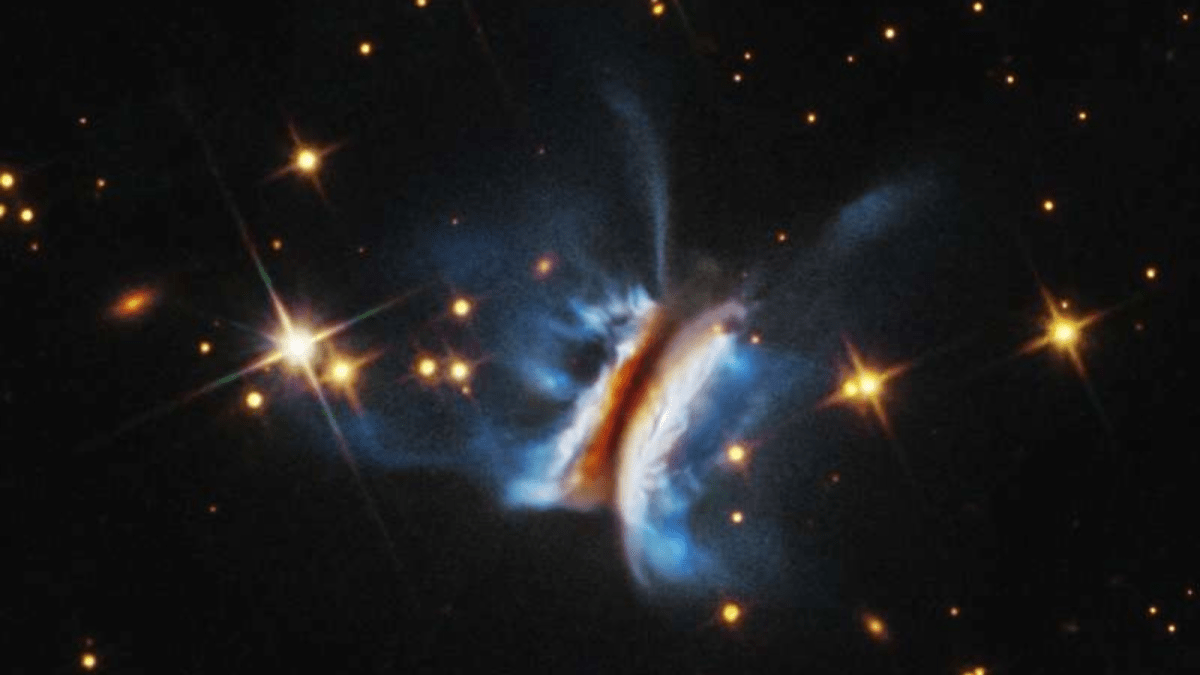

A: Extreme environments are great places to look for interesting biology. I wanted to be an astronaut growing up, and the closest thing to astrobiology is examining extreme environments on Earth. And the only things that live in those extreme environments are microbes. During a sampling expedition that I took part in off the coast of Mexico, we discovered a colorful microbial mat about 2 kilometers underwater that flourished because the bacteria breathed sulfur instead of oxygen, but none of the microbes I was hoping to study would grow in the lab.

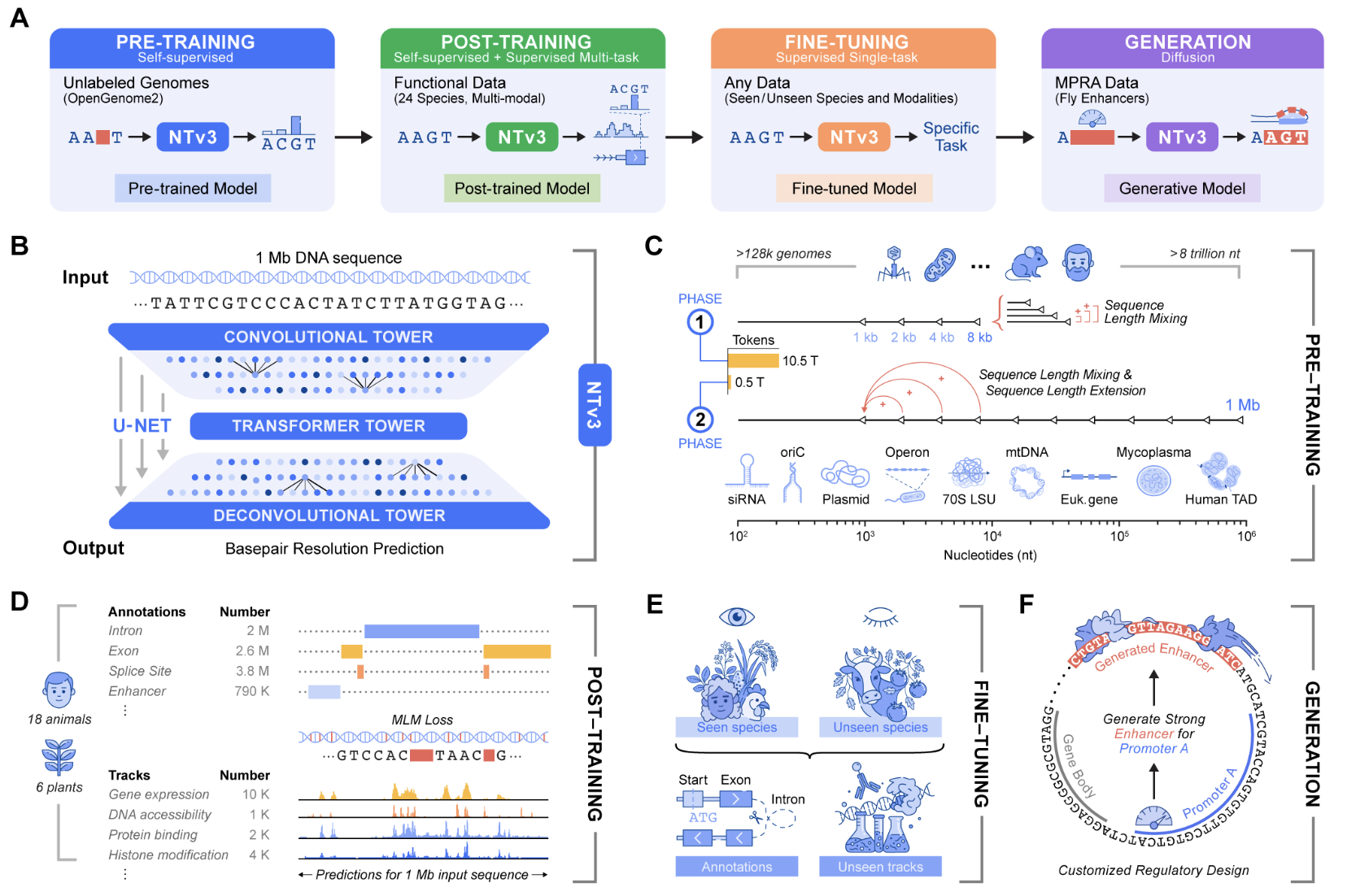

The biggest challenge in studying microbes is that a majority of them cannot be cultivated, which means that the only way to study their biology is through a method called metagenomics. My latest work is genomic language modeling. We’re hoping to develop a computational system so we can probe the organism as much as possible “in silico,” just using sequence data. A genomic language model is technically a large language model, except the language is DNA as opposed to human language. It’s trained in a similar way, just in biological language as opposed to English or French. If our objective is to learn the language of biology, we should leverage the diversity of microbial genomes. Even though we have a lot of data, and even as more samples become available, we’ve just scratched the surface of microbial diversity.

Q: Given how diverse microbes are and how little we understand about them, how can studying microbes in silico, using genomic language modeling, advance our understanding of the microbial genome?

A: A genome is many millions of letters. A human cannot possibly look at that and make sense of it. We can program a machine, though, to segment data into pieces that are useful. That’s sort of how bioinformatics works with a single genome. But if you’re looking at a gram of soil, which can contain thousands of unique genomes, that’s just too much data to work with; a human and a computer together are necessary in order to grapple with that data.

During my PhD and master’s degree, we were only just discovering new genomes and new lineages that were so different from anything that had been characterized or grown in the lab. These were things that we just called “microbial dark matter.” When there are a lot of uncharacterized things, that’s where machine learning can be really useful, because we’re just looking for patterns, but that’s not the end goal. What we hope to do is to map these patterns to evolutionary relationships between each genome, each microbe, and each instance of life.

Previously, we’ve been thinking about proteins as standalone entities. That gets us to a decent degree of information because proteins are related by homology, and therefore things that are evolutionarily related might have a similar function.

What is known about microbiology is that proteins are encoded in genomes, and the context in which a protein is bounded (what regions come before and after it) is evolutionarily conserved, especially if there is a functional coupling. This makes total sense, because when you have three proteins that need to be expressed together because they form a unit, then you might want them located right next to each other.

What I want to do is incorporate more of that genomic context in the way that we search for and annotate proteins and understand protein function, so that we can go beyond sequence or structural similarity and add contextual information to how we understand proteins and hypothesize about their functions.
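To make the idea of genomic context concrete, here is a minimal Python sketch. It is an illustration only, not Hwang’s method: it assumes each contig is already annotated as an ordered list of gene-family labels, and the labels (`famA`, `target`, and so on) and the helper names `context_window` and `context_similarity` are hypothetical. It collects the genes flanking a protein of interest and scores how similar two such neighborhoods are using Jaccard similarity.

```python
from typing import List, Set


def context_window(genes: List[str], index: int, flank: int = 2) -> Set[str]:
    """Return the gene families within `flank` positions of genes[index],
    excluding the gene at `index` itself."""
    start = max(0, index - flank)
    end = min(len(genes), index + flank + 1)
    return {g for i, g in enumerate(genes[start:end], start=start) if i != index}


def context_similarity(a: Set[str], b: Set[str]) -> float:
    """Jaccard similarity between two neighborhoods (0.0 if both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical contigs: each entry is a gene-family label in genomic order.
contig_1 = ["famA", "famB", "target", "famC", "famD"]
contig_2 = ["famX", "famB", "target", "famC", "famY"]

# Compare the immediate neighbors (one gene on each side) of the target.
win_1 = context_window(contig_1, contig_1.index("target"), flank=1)
win_2 = context_window(contig_2, contig_2.index("target"), flank=1)
score = context_similarity(win_1, win_2)
```

In this toy case the immediate neighbors are identical in both contigs, so the score is 1.0. Widening the window to `flank=2` brings in the non-shared families and lowers the score. A genomic language model would learn such contextual signals from data rather than from a hand-written rule like this one.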

Q: How can your research be applied to harnessing the functional potential of microbes?

A: Microbes are possibly the world’s best chemists. Leveraging microbial metabolism and biochemistry will lead to more sustainable and more efficient methods for producing new materials, new therapeutics, and new types of polymers.

But it’s not just about efficiency: microbes are doing chemistry we don’t even know how to think about. Understanding how microbes work, and being able to understand their genomic makeup and their functional capacity, will also be really important as we think about how our world and climate are changing. A majority of carbon sequestration and nutrient cycling is undertaken by microbes; if we don’t understand how a given microbe is able to fix nitrogen or carbon, then we will face difficulties in modeling the nutrient fluxes of the Earth.

On the more therapeutic side, infectious diseases are a real and growing threat. Understanding how microbes behave in diverse environments relative to the rest of our microbiome is really important as we think about the future and combating microbial pathogens.
