The artificial intelligence models that turn text into images are also useful for generating new materials. Over the last few years, generative materials models from companies like Google, Microsoft, and Meta have drawn on their training data to help researchers design tens of millions of new materials.

But when it comes to designing materials with exotic quantum properties like superconductivity or unique magnetic states, those models struggle. That’s too bad, because humans could use the help. For example, after a decade of research into a class of materials that could revolutionize quantum computing, called quantum spin liquids, only a dozen material candidates have been identified. The bottleneck means there are fewer materials to serve as the basis for technological breakthroughs.

Now, MIT researchers have developed a technique that lets popular generative materials models create promising quantum materials by following specific design rules. The rules, or constraints, steer models to create materials with unique structures that give rise to quantum properties.

“The models from these large companies generate materials optimized for stability,” says Mingda Li, MIT’s Class of 1947 Career Development Professor. “Our perspective is that’s not usually how materials science advances. We don’t need 10 million new materials to change the world. We just need one really good material.”

The approach is described today in a paper published by Nature Materials. The researchers applied their technique to generate millions of candidate materials consisting of geometric lattice structures associated with quantum properties. From that pool, they synthesized two actual materials with exotic magnetic traits.

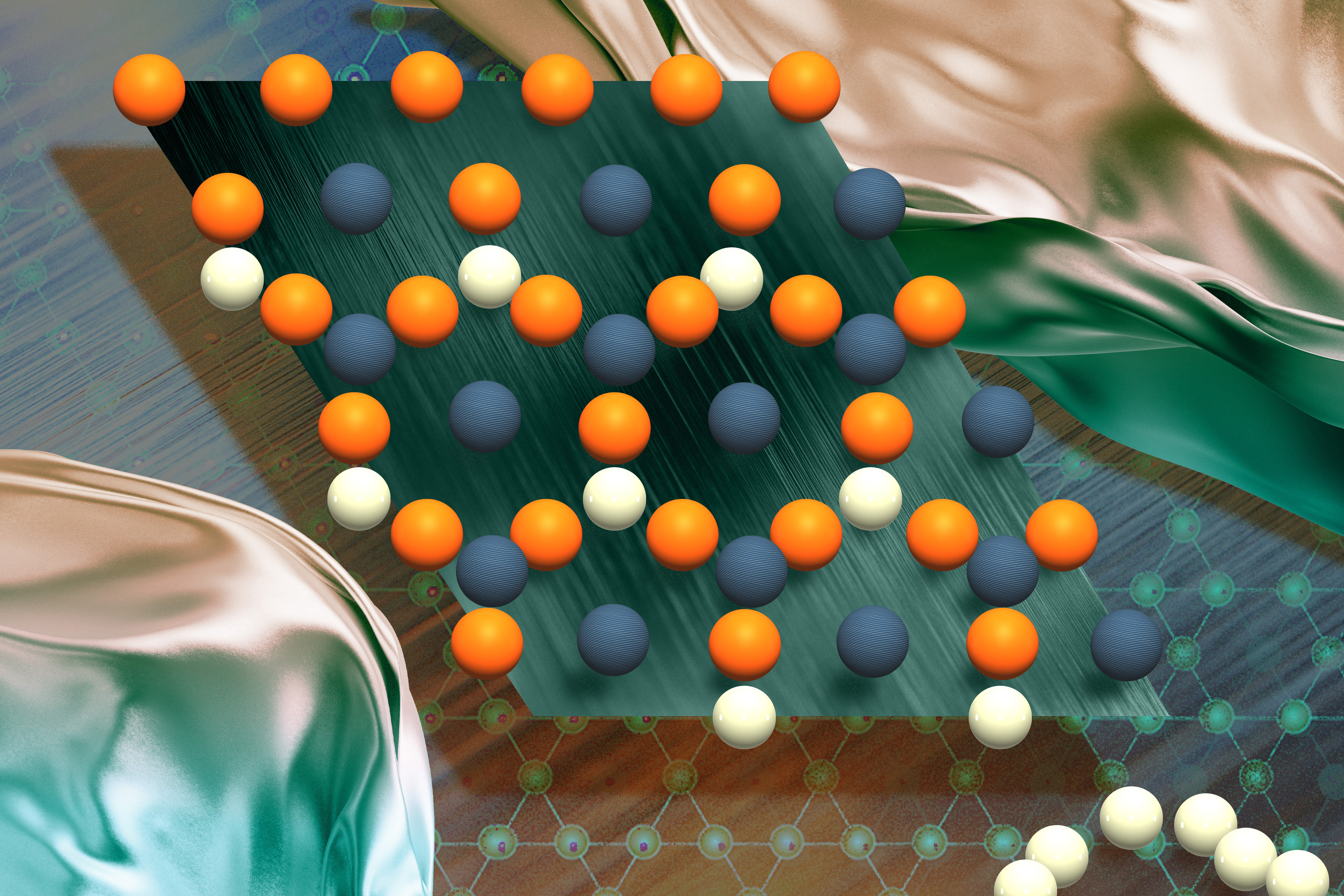

“People in the quantum community really care about these geometric constraints, like the Kagome lattices that are two overlapping, upside-down triangles. We created materials with Kagome lattices because those materials can mimic the behavior of rare earth elements, so they are of high technical importance,” Li says.

Li is the senior author of the paper. His MIT co-authors include PhD students Ryotaro Okabe, Mouyang Cheng, Abhijatmedhi Chotrattanapituk, and Denisse Cordova Carrizales; postdoc Manasi Mandal; undergraduate researchers Kiran Mak and Bowen Yu; visiting scholar Nguyen Tuan Hung; Xiang Fu ’22, PhD ’24; and professor of electrical engineering and computer science Tommi Jaakkola, who is an affiliate of the Computer Science and Artificial Intelligence Laboratory (CSAIL) and Institute for Data, Systems, and Society. Additional co-authors include Yao Wang of Emory University, Weiwei Xie of Michigan State University, YQ Cheng of Oak Ridge National Laboratory, and Robert Cava of Princeton University.

Steering models toward impact

A fabric’s properties are decided by its construction, and quantum supplies aren’t any completely different. Sure atomic constructions usually tend to give rise to unique quantum properties than others. As an example, sq. lattices can function a platform for high-temperature superconductors, whereas different shapes often called Kagome and Lieb lattices can help the creation of supplies that might be helpful for quantum computing.

To help a popular class of generative models known as diffusion models produce materials that conform to particular geometric patterns, the researchers created SCIGEN (short for Structural Constraint Integration in GENerative model). SCIGEN is a computer code that ensures diffusion models adhere to user-defined constraints at each iterative generation step. With SCIGEN, users can give any generative AI diffusion model geometric structural rules to follow as it generates materials.

AI diffusion models work by sampling from their training dataset to generate structures that reflect the distribution of structures found in the dataset. SCIGEN blocks generations that don’t align with the structural rules.
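To make the idea of enforcing a constraint at every generation step concrete, here is a minimal toy sketch in Python. It is not the SCIGEN code: the "denoising" update is a hypothetical placeholder that only perturbs coordinates, and the masking scheme (overwriting a pinned subset of atoms with a target motif at each step) is one common way such constraints can be imposed, assumed here for illustration. The names `toy_reverse_step` and `constrained_sample` are invented for this example.

```python
import numpy as np

# Fractional (x, y) coordinates of the three kagome sites in a hexagonal cell.
KAGOME_SITES = np.array([[0.5, 0.0], [0.0, 0.5], [0.5, 0.5]])


def toy_reverse_step(coords, sigma, rng):
    """Hypothetical stand-in for one learned denoising update (not a real model)."""
    return (coords + sigma * rng.normal(size=coords.shape)) % 1.0


def constrained_sample(n_atoms, n_steps=50, seed=0):
    """Generate fractional coordinates while pinning the first atoms to a motif."""
    n_fixed = len(KAGOME_SITES)
    if n_atoms < n_fixed:
        raise ValueError("need at least as many atoms as constrained sites")
    rng = np.random.default_rng(seed)
    mask = np.zeros(n_atoms, dtype=bool)
    mask[:n_fixed] = True              # atoms that must follow the structural rule
    coords = rng.random((n_atoms, 2))  # start from pure noise
    for step in range(n_steps, 0, -1):
        sigma = 0.1 * (step - 1) / n_steps  # noise level reaches 0 at the last step
        coords = toy_reverse_step(coords, sigma, rng)
        # Re-impose the constraint: overwrite the pinned atoms with the motif,
        # noised to the current level so they stay consistent with the free atoms.
        pinned = KAGOME_SITES + sigma * rng.normal(size=KAGOME_SITES.shape)
        coords[mask] = pinned % 1.0
    return coords, mask


coords, mask = constrained_sample(n_atoms=6)
print(coords[mask])   # the pinned atoms sit on the kagome sites
print(coords[~mask])  # the remaining atoms are left to the (toy) model
```

Because the constraint is reapplied after every update, the final structure contains the requested motif regardless of what the underlying model would have produced on its own.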

To test SCIGEN, the researchers applied it to a popular AI materials generation model known as DiffCSP. They had the SCIGEN-equipped model generate materials with unique geometric patterns known as Archimedean lattices, which are collections of 2D lattice tilings of different polygons. Archimedean lattices can lead to a range of quantum phenomena and have been the focus of much research.
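As a concrete illustration of one such pattern, the Kagome lattice is an Archimedean tiling of triangles and hexagons in which every site has exactly four nearest neighbors. The short sketch below builds a small patch of Kagome sites and checks that property. The function names and the choice of unit-length lattice vectors are assumptions made for this example, not part of the researchers' code.

```python
import numpy as np


def kagome_points(n_cells):
    """Return Cartesian coordinates of kagome sites in an n_cells x n_cells patch."""
    a1 = np.array([1.0, 0.0])               # hexagonal lattice vectors (unit length)
    a2 = np.array([0.5, np.sqrt(3) / 2])
    basis = [(0.5, 0.0), (0.0, 0.5), (0.5, 0.5)]  # three sites per cell
    pts = []
    for i in range(n_cells):
        for j in range(n_cells):
            for u, v in basis:
                pts.append((i + u) * a1 + (j + v) * a2)
    return np.array(pts)


def coordination_numbers(points, bond_length=0.5, tol=1e-6):
    """Count, for each site, the neighbors sitting exactly one bond length away."""
    diffs = points[:, None, :] - points[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    return (np.abs(dists - bond_length) < tol).sum(axis=1)


pts = kagome_points(5)
cn = coordination_numbers(pts)
print(cn.max())  # interior sites have four nearest neighbors; edge sites have fewer
```

Sites on the boundary of the finite patch have fewer neighbors only because the patch is cut off; in a periodic crystal every site would have four.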

“Archimedean lattices give rise to quantum spin liquids and so-called flat bands, which can mimic the properties of rare earths without rare earth elements, so they are extremely important,” says Cheng, a co-corresponding author of the work. “Other Archimedean lattice materials have large pores that could be used for carbon capture and other applications, so it’s a collection of special materials. In some cases, there are no known materials with that lattice, so I think it will be really interesting to find the first material that fits in that lattice.”

The model generated over 10 million material candidates with Archimedean lattices. One million of those materials survived a screening for stability. Using the supercomputers in Oak Ridge National Laboratory, the researchers then took a smaller sample of 26,000 materials and ran detailed simulations to understand how the materials’ underlying atoms behaved. The researchers found magnetism in 41 percent of those structures.
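The arithmetic behind that screening funnel can be worked through in a few lines. The figures are the rounded ones quoted above (the article says "over 10 million," so the pass rate is approximate), and the variable names are illustrative only.

```python
# Rounded figures from the screening funnel described above.
generated = 10_000_000     # candidates with Archimedean lattices ("over 10 million")
stable = 1_000_000         # survived the stability screening
simulated = 26_000         # sample given detailed simulations
magnetic_fraction = 0.41   # share of simulated structures showing magnetism

stable_rate = stable / generated                       # roughly 1 in 10 pass
magnetic_count = round(simulated * magnetic_fraction)  # structures with magnetism

print(f"stability pass rate: about {stable_rate:.0%}")
print(f"magnetic structures in the sample: about {magnetic_count:,}")
```

In other words, roughly one candidate in ten passed the stability screen, and about 10,660 of the 26,000 simulated structures showed magnetism.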

From that subset, the researchers synthesized two previously undiscovered compounds, TiPdBi and TiPbSb, at Xie and Cava’s labs. Subsequent experiments showed the AI model’s predictions largely aligned with the actual material’s properties.

“We wanted to discover new materials that could have a huge potential impact by incorporating these structures that have been known to give rise to quantum properties,” says Okabe, the paper’s first author. “We already know that these materials with specific geometric patterns are interesting, so it’s natural to start with them.”

Accelerating material breakthroughs

Quantum spin liquids could unlock quantum computing by enabling stable, error-resistant qubits that serve as the basis of quantum operations. But no quantum spin liquid materials have been confirmed. Xie and Cava believe SCIGEN could accelerate the search for these materials.

“There’s a big search for quantum computer materials and topological superconductors, and these are all related to the geometric patterns of materials,” Xie says. “But experimental progress has been very, very slow,” Cava adds. “Many of these quantum spin liquid materials are subject to constraints: They have to be in a triangular lattice or a Kagome lattice. If the materials satisfy those constraints, the quantum researchers get excited; it’s a necessary but not sufficient condition. So, by generating many, many materials like that, it immediately gives experimentalists hundreds or thousands more candidates to play with to accelerate quantum computer materials research.”

“This work presents a new tool, leveraging machine learning, that can predict which materials will have specific elements in a desired geometric pattern,” says Drexel University Professor Steve May, who was not involved in the research. “This should speed up the development of previously unexplored materials for applications in next-generation electronic, magnetic, or optical technologies.”

The researchers stress that experimentation is still critical to assess whether AI-generated materials can be synthesized and how their actual properties compare with model predictions. Future work on SCIGEN could incorporate additional design rules into generative models, including chemical and functional constraints.

“People who want to change the world care about material properties more than the stability and structure of materials,” Okabe says. “With our approach, the ratio of stable materials goes down, but it opens the door to generate a whole bunch of promising materials.”

The work was supported, in part, by the U.S. Department of Energy, the National Energy Research Scientific Computing Center, the National Science Foundation, and Oak Ridge National Laboratory.

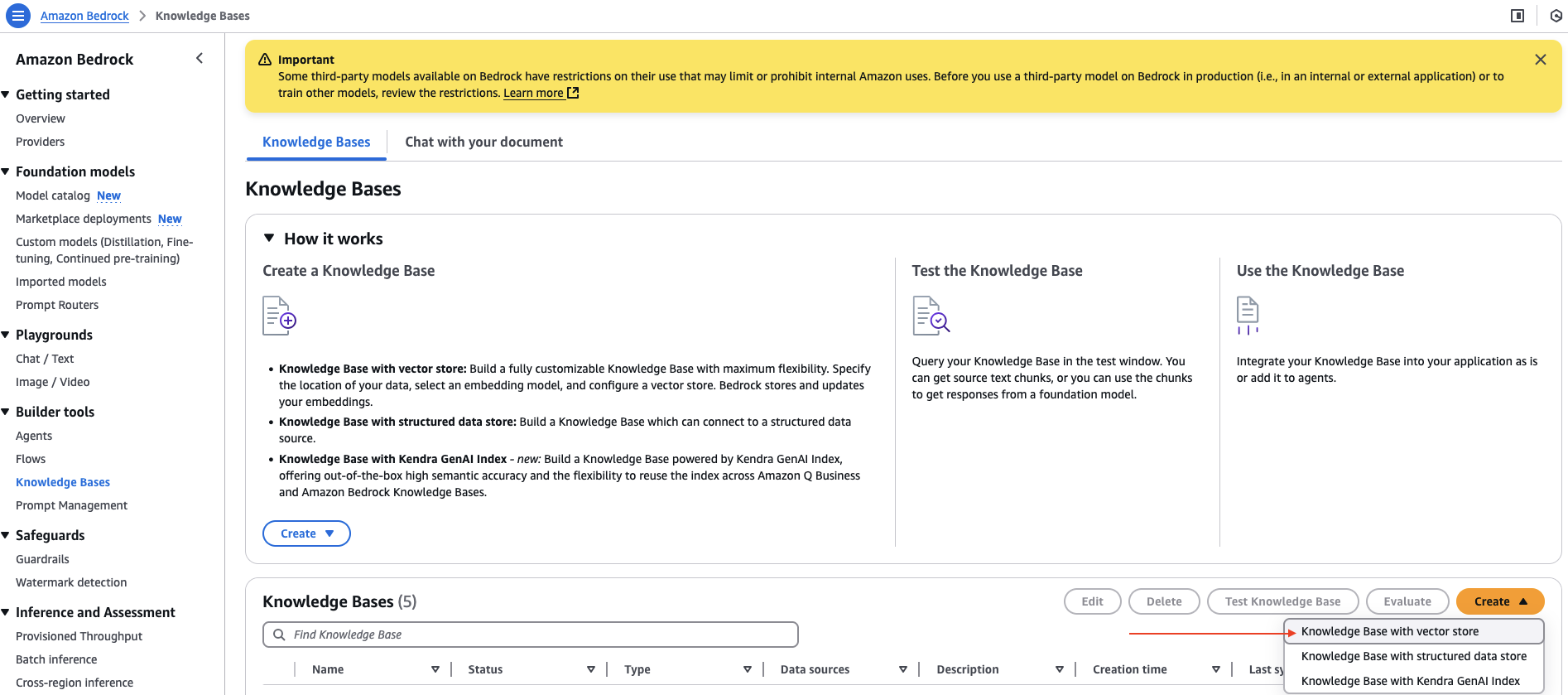

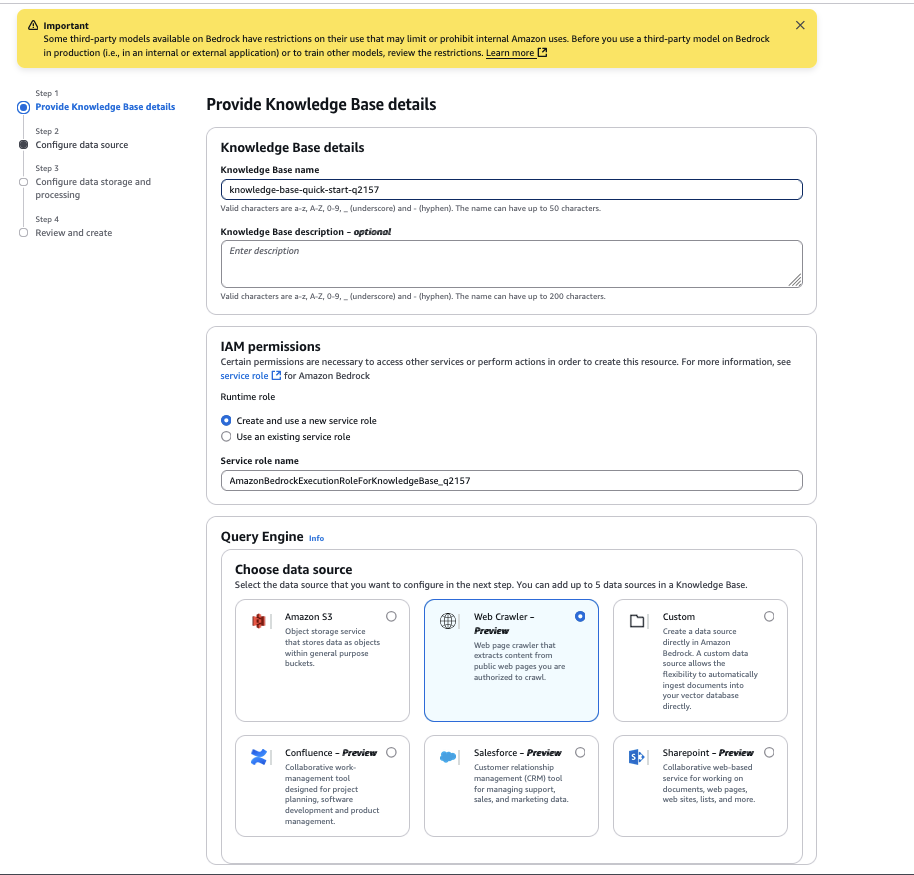

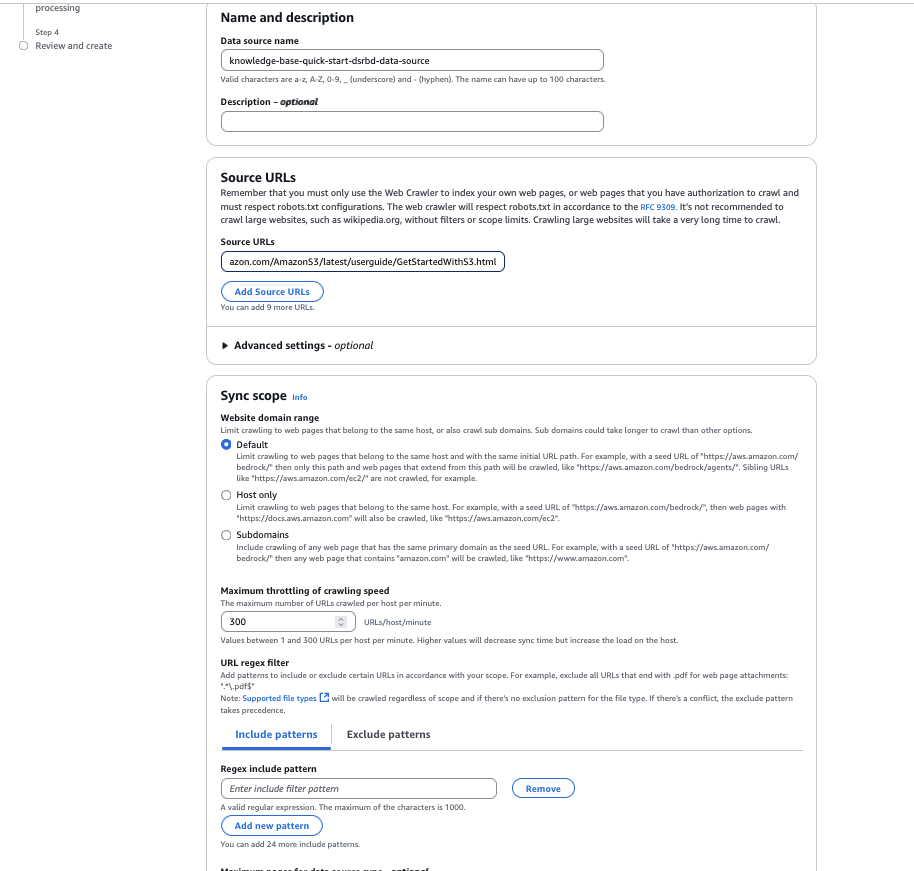

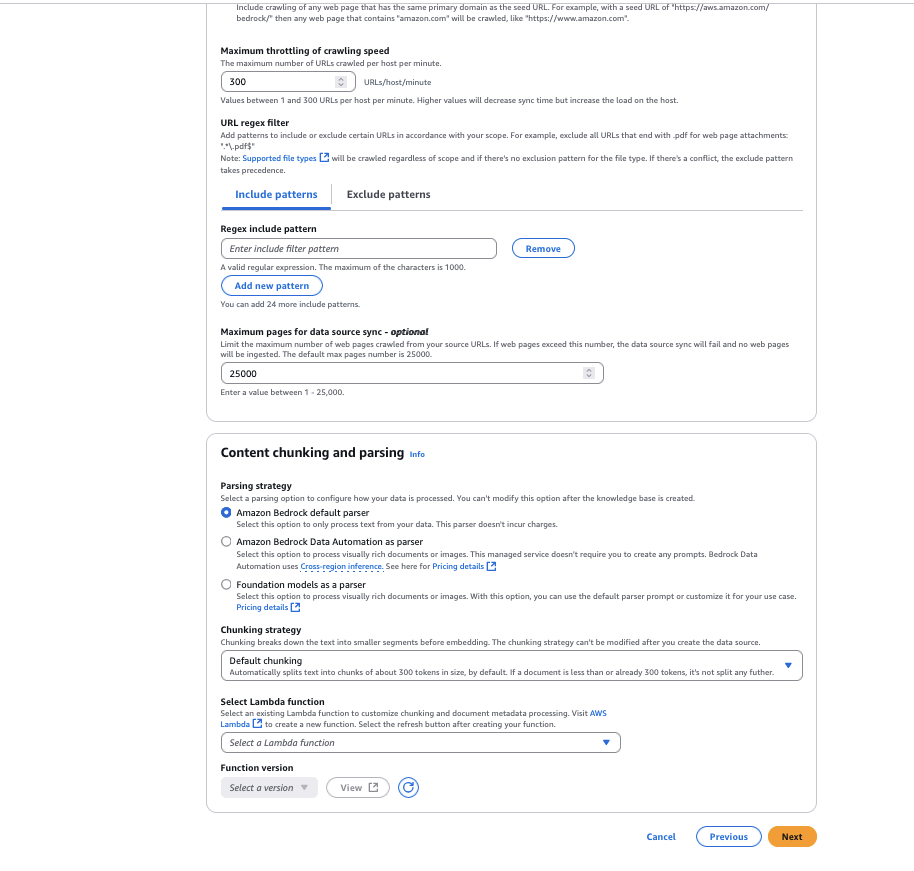

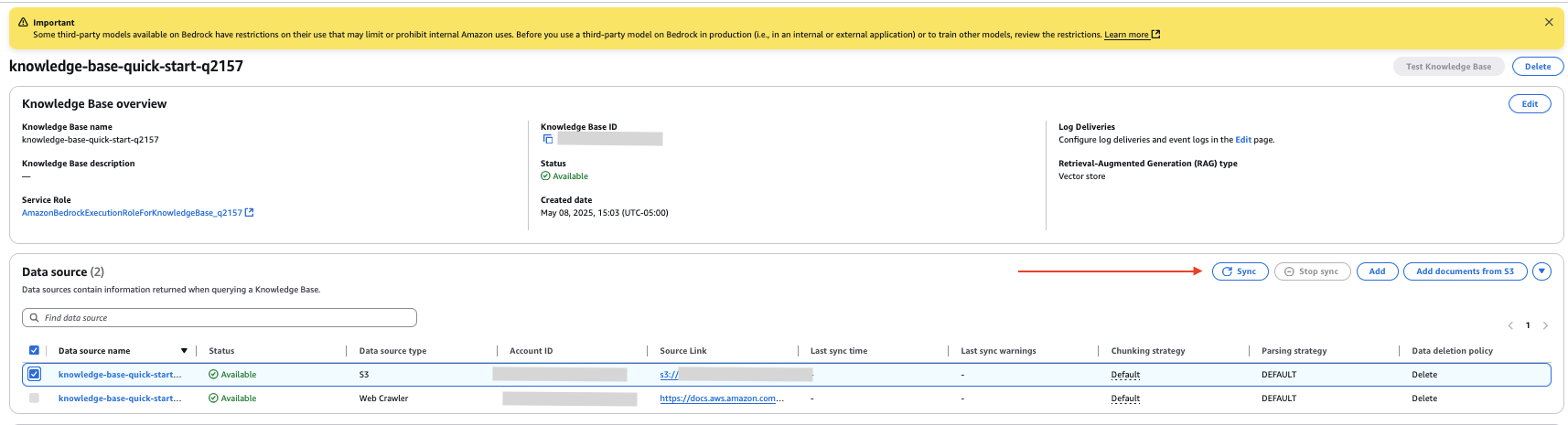

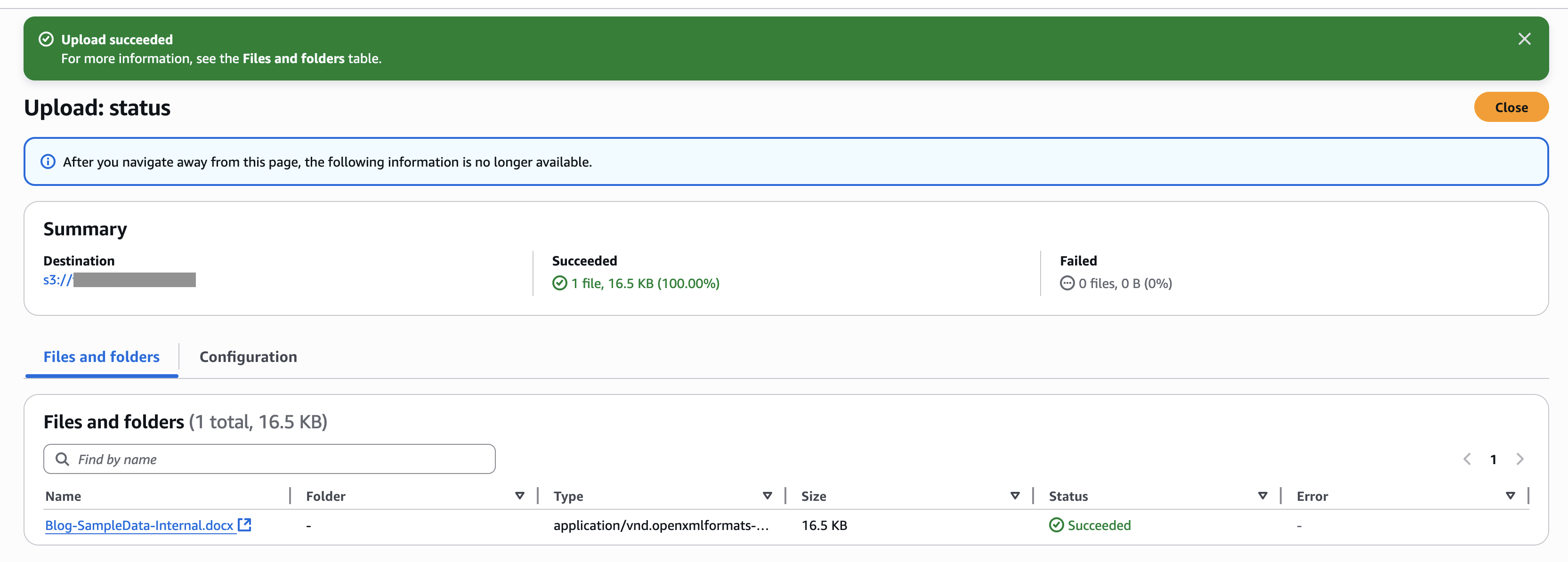

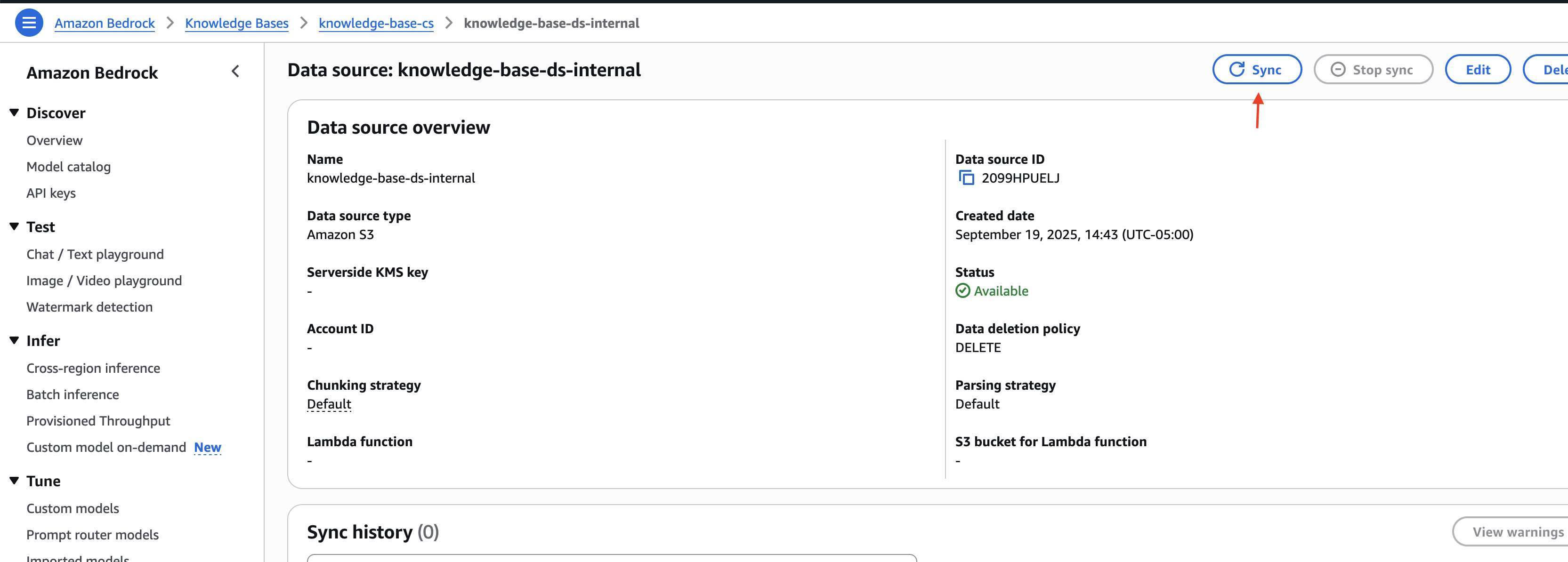

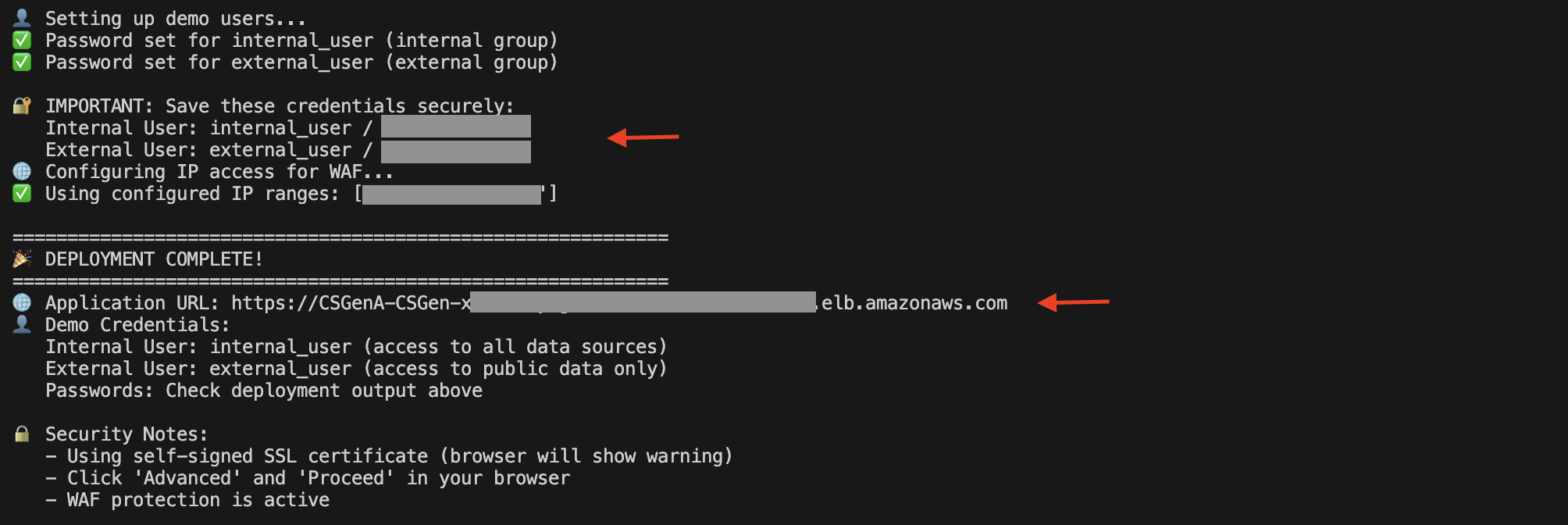

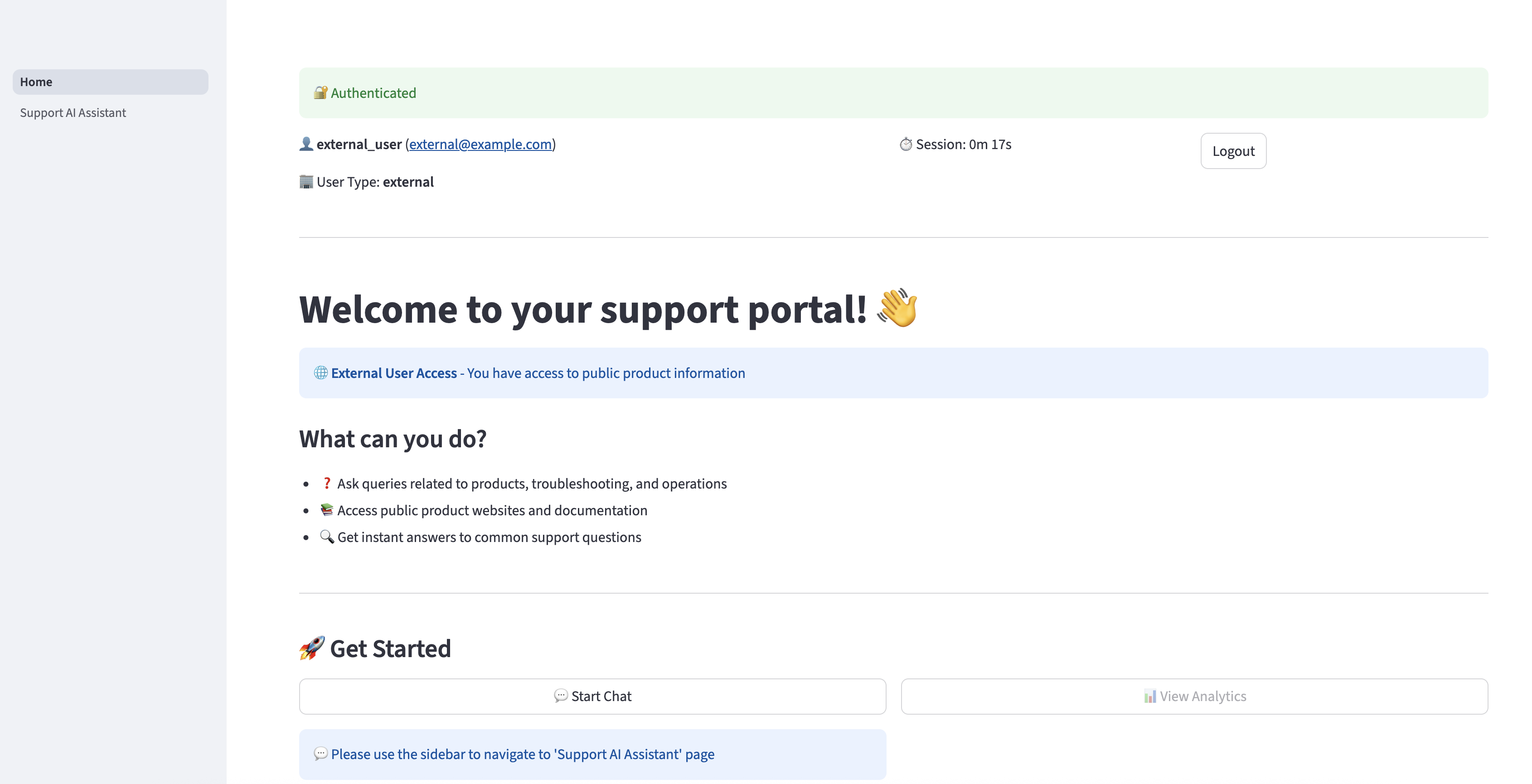

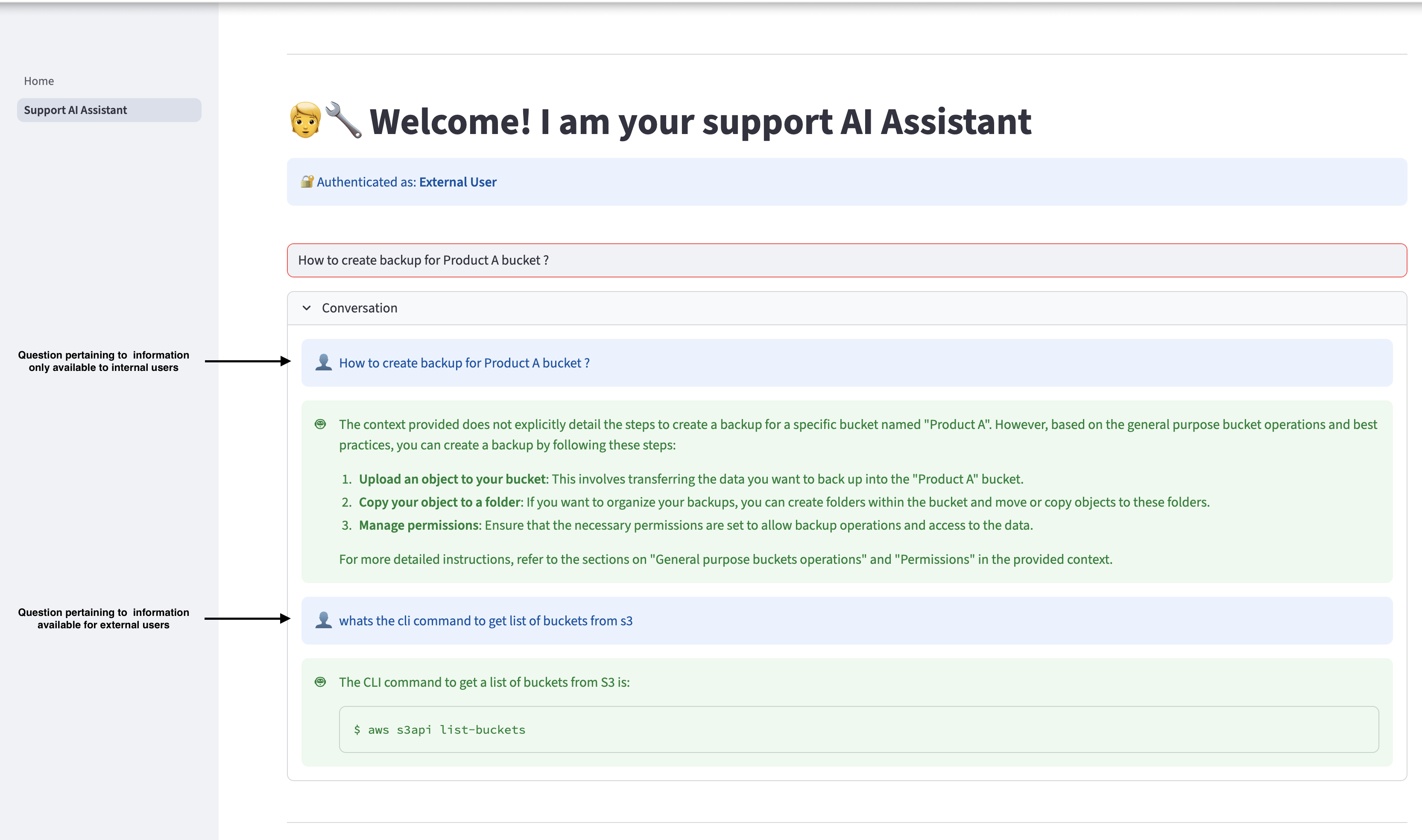

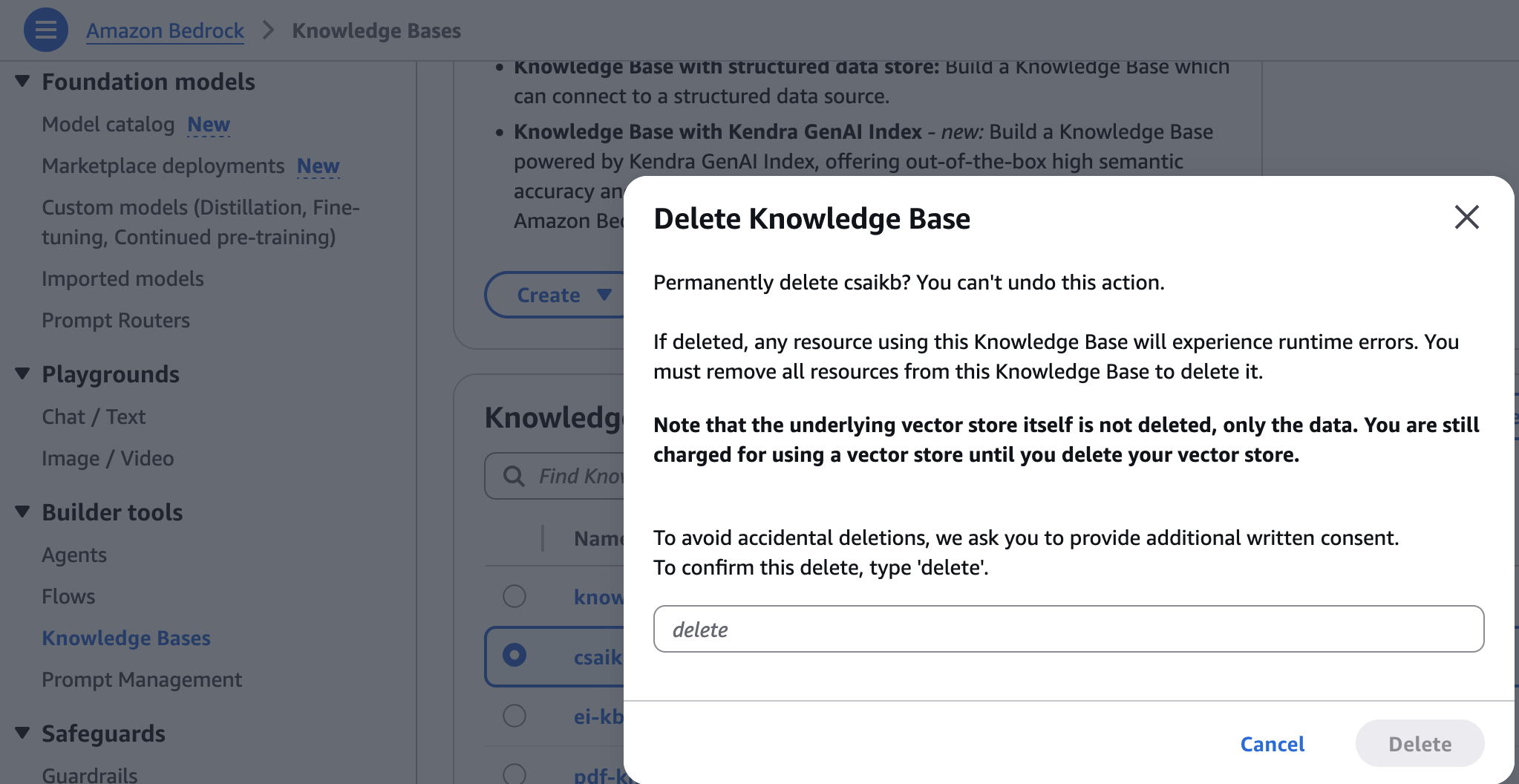

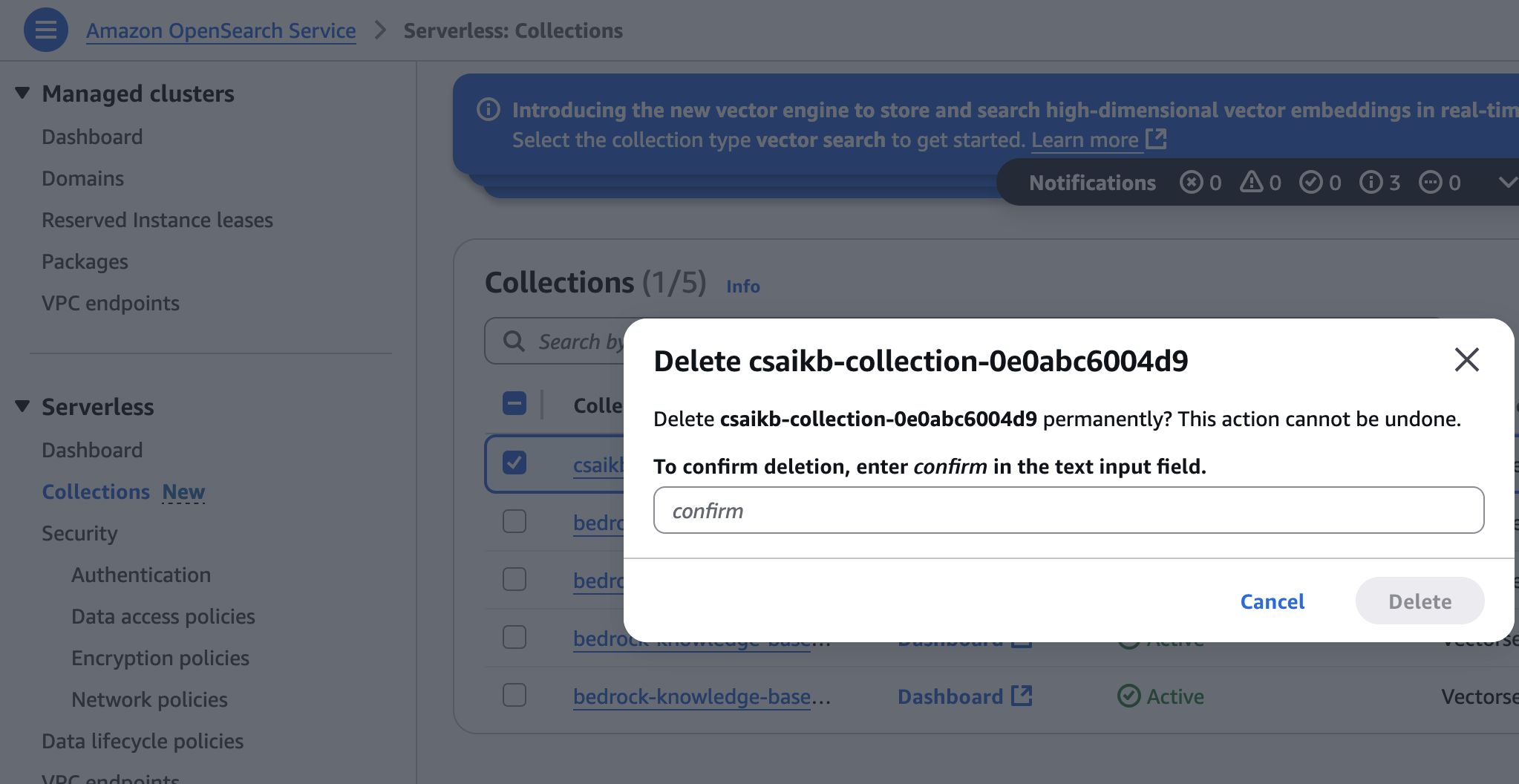
