ImageNet (Deng et al. 2009) is an image database organized according to the WordNet (Miller 1995) hierarchy which, historically, has been used in computer vision benchmarks and research. However, it was not until AlexNet (Krizhevsky, Sutskever, and Hinton 2012) demonstrated the efficiency of deep learning using convolutional neural networks on GPUs that the computer-vision discipline turned to deep learning to achieve state-of-the-art models that revolutionized the field. Given the importance of ImageNet and AlexNet, this post introduces tools and techniques to consider when training ImageNet and other large-scale datasets with R.

Now, in order to process ImageNet, we will first have to divide and conquer, partitioning the dataset into several manageable subsets. Afterwards, we will train ImageNet using AlexNet across multiple GPUs and compute instances. Preprocessing ImageNet and distributed training are the two topics that this post will present and discuss, starting with preprocessing ImageNet.

Preprocessing ImageNet

When dealing with large datasets, even simple tasks like downloading or reading a dataset can be much harder than you would expect. For instance, since ImageNet is roughly 300GB in size, you will need at least 600GB of free space to leave some room for download and decompression. But no worries, you can always borrow computers with huge disk drives from your favorite cloud provider. While you are at it, you should also request compute instances with multiple GPUs, Solid State Drives (SSDs), and a reasonable amount of CPUs and memory. If you want to use the exact configuration we used, take a look at the mlverse/imagenet repo, which contains a Docker image and the configuration commands required to provision reasonable computing resources for this task. In summary, make sure you have access to sufficient compute resources.

Now that we have resources capable of working with ImageNet, we need to find a place to download ImageNet from. The easiest way is to use a variation of ImageNet used in the ImageNet Large Scale Visual Recognition Challenge (ILSVRC), which contains a subset of about 250GB of data and can be easily downloaded from many Kaggle competitions, like the ImageNet Object Localization Challenge.

If you've read some of our previous posts, you might already be thinking of using the pins package, which you can use to cache, discover and share resources from many services, including Kaggle. You can learn more about data retrieval from Kaggle in the Using Kaggle Boards article; in the meantime, let's assume you are already familiar with this package.

All we need to do now is register the Kaggle board, retrieve ImageNet as a pin, and decompress this file. Warning: the following code requires you to stare at a progress bar for, potentially, over an hour.

library(pins)
board_register("kaggle", token = "kaggle.json")

pin_get("c/imagenet-object-localization-challenge", board = "kaggle")[1] %>%
  untar(exdir = "/localssd/imagenet/")

If we are going to be training this model over and over using multiple GPUs and even multiple compute instances, we want to make sure we don't waste too much time downloading ImageNet every single time.

The first improvement to consider is getting a faster hard drive. In our case, we locally mounted an array of SSDs into the /localssd path. We then used /localssd to extract ImageNet and configured R's temp path and pins cache to use the SSDs as well. Consult your cloud provider's documentation to configure SSDs, or take a look at mlverse/imagenet.

Next, a well-known approach we can follow is to partition ImageNet into chunks that can be individually downloaded to perform distributed training later on.

In addition, it is also faster to download ImageNet from a nearby location, ideally from a URL stored within the same data center where our cloud instance is located. For this, we can also use pins to register a board with our cloud provider and then re-upload each partition. Since ImageNet is already partitioned by category, we can easily split ImageNet into multiple zip files and re-upload them to our closest data center as follows. Make sure the storage bucket is created in the same region as your computing instances.

# Replace the empty first argument with the board type for your cloud provider.
board_register("", name = "imagenet", bucket = "r-imagenet")

train_path <- "/localssd/imagenet/ILSVRC/Data/CLS-LOC/train/"
for (path in dir(train_path, full.names = TRUE)) {
  # One zipped pin per WordNet category folder
  dir(path, full.names = TRUE) %>%
    pin(name = basename(path), board = "imagenet", zip = TRUE)
}

We can now retrieve a subset of ImageNet quite efficiently. If you are motivated to do so and have about one gigabyte to spare, feel free to follow along executing this code. Notice that ImageNet contains lots of JPEG images for each WordNet category.

board_register("https://storage.googleapis.com/r-imagenet/", "imagenet")

categories <- pin_get("categories", board = "imagenet")
pin_get(categories$id[1], board = "imagenet", extract = TRUE) %>%
  tibble::as_tibble()

# A tibble: 1,300 x 1
   value
 1 /localssd/pins/storage/n01440764/n01440764_10026.JPEG
 2 /localssd/pins/storage/n01440764/n01440764_10027.JPEG
 3 /localssd/pins/storage/n01440764/n01440764_10029.JPEG
 4 /localssd/pins/storage/n01440764/n01440764_10040.JPEG
 5 /localssd/pins/storage/n01440764/n01440764_10042.JPEG
 6 /localssd/pins/storage/n01440764/n01440764_10043.JPEG
 7 /localssd/pins/storage/n01440764/n01440764_10048.JPEG
 8 /localssd/pins/storage/n01440764/n01440764_10066.JPEG
 9 /localssd/pins/storage/n01440764/n01440764_10074.JPEG
10 /localssd/pins/storage/n01440764/n01440764_1009.JPEG
# … with 1,290 more rows
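Each extracted path carries its WordNet category in the name of its parent folder, so labels can be recovered from the paths alone. A minimal base-R sketch, reusing three of the paths printed above; `category_of()` is a hypothetical helper, not part of pins:

```r
# Hypothetical helper: the WordNet id is the name of the folder holding the image.
category_of <- function(paths) basename(dirname(paths))

paths <- c(
  "/localssd/pins/storage/n01440764/n01440764_10026.JPEG",
  "/localssd/pins/storage/n01440764/n01440764_10027.JPEG",
  "/localssd/pins/storage/n01440764/n01440764_10029.JPEG"
)

labels <- category_of(paths)
table(labels)  # number of images per category
```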

When doing distributed training over ImageNet, we can now let a single compute instance process a partition of ImageNet with ease. Say, 1/16 of ImageNet can be retrieved and extracted, in under a minute, using parallel downloads with the callr package:

categories <- pin_get("categories", board = "imagenet")
categories <- categories$id[1:(length(categories$id) / 16)]

# Download and extract each category in its own background R process
procs <- lapply(categories, function(cat)
  callr::r_bg(function(cat) {
    library(pins)
    board_register("https://storage.googleapis.com/r-imagenet/", "imagenet")

    pin_get(cat, board = "imagenet", extract = TRUE)
  }, args = list(cat))
)

# Wait until every background process has finished
while (any(sapply(procs, function(p) p$is_alive()))) Sys.sleep(1)
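The snippet above takes only the first sixteenth of the categories. To hand a different slice to each compute instance, one option is to select slices by a zero-based partition number. The helper below is an illustrative base-R sketch; `partition_ids()` and its arguments are assumptions, not functions from pins or alexnet, and the ids are generated placeholders:

```r
# Hypothetical helper: return the ids that belong to one of `total` contiguous slices.
partition_ids <- function(ids, partition, total = 16) {
  stopifnot(partition >= 0, partition < total)
  # Assign every id to one of `total` near-equal groups, numbered 1..total.
  group <- ceiling(seq_along(ids) * total / length(ids))
  ids[group == partition + 1]
}

ids <- sprintf("n%08d", 1:1000)   # placeholder ids standing in for categories$id
first <- partition_ids(ids, 0)    # slice for the first instance
last  <- partition_ids(ids, 15)   # slice for the sixteenth instance
length(first)
```

Because every id lands in exactly one group, the slices are disjoint and together cover the whole dataset, even when the number of categories is not a multiple of 16.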

We can wrap this partition up in a list containing a map of images and categories, which we will later use in our AlexNet model through tfdatasets.

data <- list(
  # Paths to the already-extracted images, one entry per image
  image = unlist(lapply(categories, function(cat) {
    pin_get(cat, board = "imagenet", download = FALSE)
  })),
  # Category id repeated once per image, parallel to `image`
  category = unlist(lapply(categories, function(cat) {
    rep(cat, length(pin_get(cat, board = "imagenet", download = FALSE)))
  })),
  categories = categories
)
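Training code generally expects numeric labels rather than WordNet ids. Assuming a list shaped like the one built above (an image vector, a parallel category vector, and the unique categories), zero-based integer labels can be derived with `match()`. The toy values below are made up for illustration:

```r
# Toy stand-in for the list built above; paths and ids are invented.
toy <- list(
  image      = c("a/n01/x.JPEG", "a/n01/y.JPEG", "a/n02/z.JPEG"),
  category   = c("n01", "n01", "n02"),
  categories = c("n01", "n02")
)

# Position of each image's category within the unique categories, shifted to start at 0.
labels <- match(toy$category, toy$categories) - 1L
labels
```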

Great! We are halfway to training ImageNet. The next section will focus on introducing distributed training using multiple GPUs.

Distributed Training

Now that we have broken down ImageNet into manageable parts, we can forget for a second about the size of ImageNet and focus on training a deep learning model for this dataset. However, any model we choose is likely to require a GPU, even for a 1/16 subset of ImageNet. So make sure your GPUs are properly configured by running is_gpu_available(). If you need help getting a GPU configured, the Using GPUs with TensorFlow and Docker video can help you get up to speed.

[1] TRUE

We could now decide which deep learning model would be best suited for ImageNet classification tasks. Instead, for this post, we will go back in time to the glory days of AlexNet and use the r-tensorflow/alexnet repo. This repo contains a port of AlexNet to R, but please notice that this port has not been tested and is not ready for any real use cases. In fact, we would appreciate PRs to improve it if someone feels inclined to do so. Regardless, the focus of this post is on workflows and tools, not on achieving state-of-the-art image classification scores. So by all means, feel free to use more appropriate models.

Once we've chosen a model, we will want to make sure that it properly trains on a subset of ImageNet:

remotes::install_github("r-tensorflow/alexnet")
alexnet::alexnet_train(data = data)

Epoch 1/2

103/2269 [>...............] - ETA: 5:52 - loss: 72306.4531 - accuracy: 0.9748

So far so good! However, this post is about enabling large-scale training across multiple GPUs, so we want to make sure we are using as many as we can. Unfortunately, running nvidia-smi will show that only one GPU is currently being used:

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 418.152.00   Driver Version: 418.152.00   CUDA Version: 10.1     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  Tesla K80           Off  | 00000000:00:05.0 Off |                    0 |
| N/A   48C    P0    89W / 149W |  10935MiB / 11441MiB |     28%      Default |
+-------------------------------+----------------------+----------------------+
|   1  Tesla K80           Off  | 00000000:00:06.0 Off |                    0 |
| N/A   74C    P0    74W / 149W |     71MiB / 11441MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID   Type   Process name                             Usage      |
|=============================================================================|
+-----------------------------------------------------------------------------+
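To check utilization from R rather than by eye, nvidia-smi can also emit CSV through its `--query-gpu` and `--format` options. The sketch below parses that output; the sample lines reuse the figures from the table above so that it runs without a GPU, and `parse_gpu_csv()` is a hypothetical helper:

```r
# Hypothetical helper: turn "index, utilization, memory" CSV lines into a data frame.
parse_gpu_csv <- function(lines) {
  gpus <- read.csv(text = lines, header = FALSE, strip.white = TRUE,
                   col.names = c("index", "util_pct", "mem_used_mib"))
  gpus$busy <- gpus$util_pct > 0
  gpus
}

# On a GPU machine, the lines would come from:
# system(paste("nvidia-smi --query-gpu=index,utilization.gpu,memory.used",
#              "--format=csv,noheader,nounits"), intern = TRUE)
sample_lines <- c("0, 28, 10935", "1, 0, 71")

gpus <- parse_gpu_csv(sample_lines)
sum(gpus$busy)  # number of GPUs doing work
```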

In order to train across multiple GPUs, we need to define a distributed-processing strategy. If this is a new concept, it might be a good time to take a look at the Distributed Training with Keras tutorial and the distributed training with TensorFlow docs. Or, if you allow us to oversimplify the process, all you have to do is define and compile your model under the right scope. A step-by-step explanation is available in the Distributed Deep Learning with TensorFlow and R video. In this case, the alexnet model already supports a strategy parameter, so all we have to do is pass it along.

library(tensorflow)
strategy <- tf$distribute$MirroredStrategy(
  cross_device_ops = tf$distribute$ReductionToOneDevice())

alexnet::alexnet_train(data = data, strategy = strategy, parallel = 6)

Notice also parallel = 6, which configures tfdatasets to make use of multiple CPUs when loading data into our GPUs; see Parallel Mapping for details.

We can now re-run nvidia-smi to validate that all our GPUs are being used:
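For models that do not expose a strategy parameter, the "define and compile under the right scope" step usually looks like the sketch below. It assumes the keras and tensorflow R packages with a working TensorFlow installation; the small dense model is a placeholder for illustration, not the AlexNet port:

```r
library(tensorflow)
library(keras)

strategy <- tf$distribute$MirroredStrategy()

# Variables created inside the scope are mirrored across the available devices.
with(strategy$scope(), {
  model <- keras_model_sequential() %>%
    layer_dense(units = 64, activation = "relu", input_shape = c(32)) %>%
    layer_dense(units = 10, activation = "softmax")

  model %>% compile(
    optimizer = "adam",
    loss = "sparse_categorical_crossentropy",
    metrics = "accuracy"
  )
})

strategy$num_replicas_in_sync  # number of devices the strategy will use
```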

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 418.152.00   Driver Version: 418.152.00   CUDA Version: 10.1     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  Tesla K80           Off  | 00000000:00:05.0 Off |                    0 |
| N/A   49C    P0    94W / 149W |  10936MiB / 11441MiB |     53%      Default |
+-------------------------------+----------------------+----------------------+
|   1  Tesla K80           Off  | 00000000:00:06.0 Off |                    0 |
| N/A   76C    P0   114W / 149W |  10936MiB / 11441MiB |     26%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID   Type   Process name                             Usage      |
|=============================================================================|
+-----------------------------------------------------------------------------+

The MirroredStrategy can help us scale up to about 8 GPUs per compute instance; however, we are likely to need 16 instances with 8 GPUs each to train ImageNet in a reasonable time (see Jeremy Howard's post on Training Imagenet in 18 Minutes). So where do we go from here?

Welcome to MultiWorkerMirroredStrategy: this strategy can use not only multiple GPUs, but also multiple GPUs across multiple computers. To configure them, all we have to do is define a TF_CONFIG environment variable with the right addresses and run the exact same code in each compute instance.

library(tensorflow)

# Zero-based index of this compute instance within the cluster
partition <- 0
Sys.setenv(TF_CONFIG = jsonlite::toJSON(list(
  cluster = list(
    worker = c("10.100.10.100:10090", "10.100.10.101:10090")
  ),
  task = list(type = 'worker', index = partition)
), auto_unbox = TRUE))

strategy <- tf$distribute$MultiWorkerMirroredStrategy(
  cross_device_ops = tf$distribute$ReductionToOneDevice())

alexnet::imagenet_partition(partition = partition) %>%
  alexnet::alexnet_train(strategy = strategy, parallel = 6)

Please note that partition must change for each compute instance to uniquely identify it, and that the IP addresses also need to be adjusted. In addition, data should point to a different partition of ImageNet, which we can retrieve with pins; although, for convenience, alexnet contains similar code under alexnet::imagenet_partition(). Other than that, the code that you need to run in each compute instance is exactly the same.

However, if we were to use 16 machines with 8 GPUs each to train ImageNet, it would be quite time-consuming and error-prone to manually run code in each R session. So instead, we should think of making use of cluster-computing frameworks, like Apache Spark with barrier execution. If you are new to Spark, there are many resources available at sparklyr.ai. To learn just about running Spark and TensorFlow together, watch our Deep Learning with Spark, TensorFlow and R video.

Putting it all together, training ImageNet in R with TensorFlow and Spark looks as follows:
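Since only the task index differs between instances, the TF_CONFIG value can be produced by a small helper. This is a sketch using jsonlite; `make_tf_config()` is a hypothetical name, and the addresses are the example ones from above. Wrapping the workers in `I()` keeps them a JSON array even when there is a single worker, which `auto_unbox = TRUE` would otherwise collapse to a plain string:

```r
library(jsonlite)

# Hypothetical helper: build the TF_CONFIG JSON for the worker at a zero-based index.
make_tf_config <- function(workers, index) {
  toJSON(list(
    cluster = list(worker = I(workers)),
    task = list(type = "worker", index = index)
  ), auto_unbox = TRUE)
}

workers <- c("10.100.10.100:10090", "10.100.10.101:10090")

# One configuration string per compute instance
configs <- vapply(seq_along(workers) - 1, function(i) {
  as.character(make_tf_config(workers, i))
}, character(1))
configs

# On instance i (zero-based): Sys.setenv(TF_CONFIG = configs[i + 1])
```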

library(sparklyr)
sc <- spark_connect("yarn|mesos|etc", config = list("sparklyr.shell.num-executors" = 16))

sdf_len(sc, 16, repartition = 16) %>%
  spark_apply(function(df, barrier) {
    library(tensorflow)

    # Build the worker list from the barrier addresses, one port per executor
    Sys.setenv(TF_CONFIG = jsonlite::toJSON(list(
      cluster = list(
        worker = paste(
          gsub(":[0-9]+$", "", barrier$address),
          8000 + seq_along(barrier$address), sep = ":")),
      task = list(type = 'worker', index = barrier$partition)
    ), auto_unbox = TRUE))

    if (is.null(tf_version())) install_tensorflow()

    strategy <- tf$distribute$MultiWorkerMirroredStrategy()

    result <- alexnet::imagenet_partition(partition = barrier$partition) %>%
      alexnet::alexnet_train(strategy = strategy, epochs = 10, parallel = 6)

    result$metrics$accuracy
  }, barrier = TRUE, columns = c(accuracy = "numeric"))
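The worker list in the Spark example is derived from the barrier addresses by dropping each executor's port and assigning a fresh one. The same expression can be tried in isolation; the addresses below are made up for illustration:

```r
# Invented executor addresses, in the host:port form the expression expects.
address <- c("10.0.0.1:45123", "10.0.0.2:45124", "10.0.0.3:45125")

# Strip the trailing ":port" and append 8001, 8002, ... in executor order.
workers <- paste(gsub(":[0-9]+$", "", address), 8000 + seq_along(address), sep = ":")
workers
```

Because the new port depends on the executor's position, two executors that share a host still receive distinct ports.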

We hope this post gave you a reasonable overview of what training large datasets in R looks like. Thanks for reading along!

Deng, Jia, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. 2009. “ImageNet: A Large-Scale Hierarchical Image Database.” In 2009 IEEE Conference on Computer Vision and Pattern Recognition, 248–55. IEEE.

Krizhevsky, Alex, Ilya Sutskever, and Geoffrey E. Hinton. 2012. “ImageNet Classification with Deep Convolutional Neural Networks.” In Advances in Neural Information Processing Systems, 1097–1105.

Miller, George A. 1995. “WordNet: A Lexical Database for English.” Communications of the ACM 38 (11): 39–41.

Take pleasure in this weblog? Get notified of recent posts by electronic mail:

Posts additionally accessible at r-bloggers

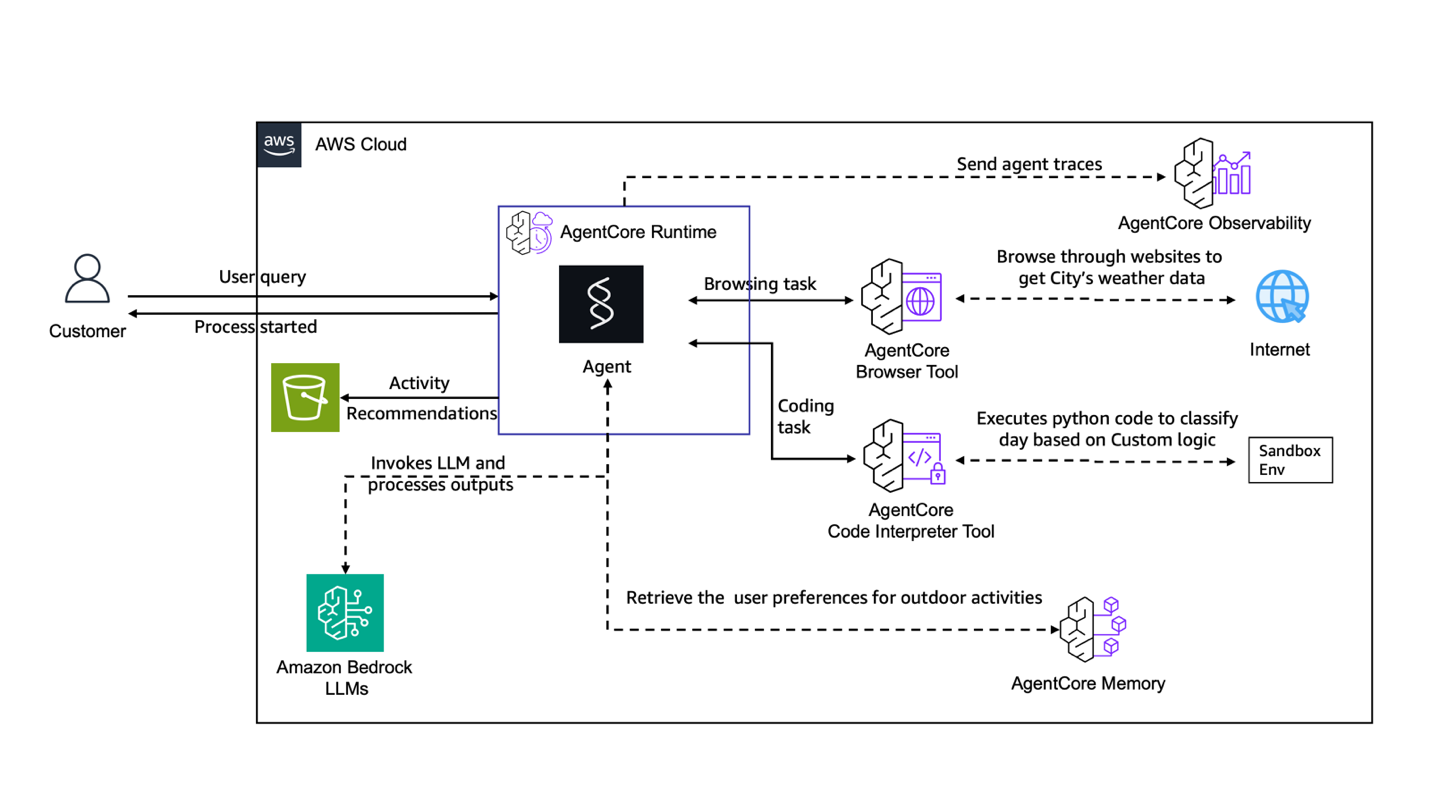

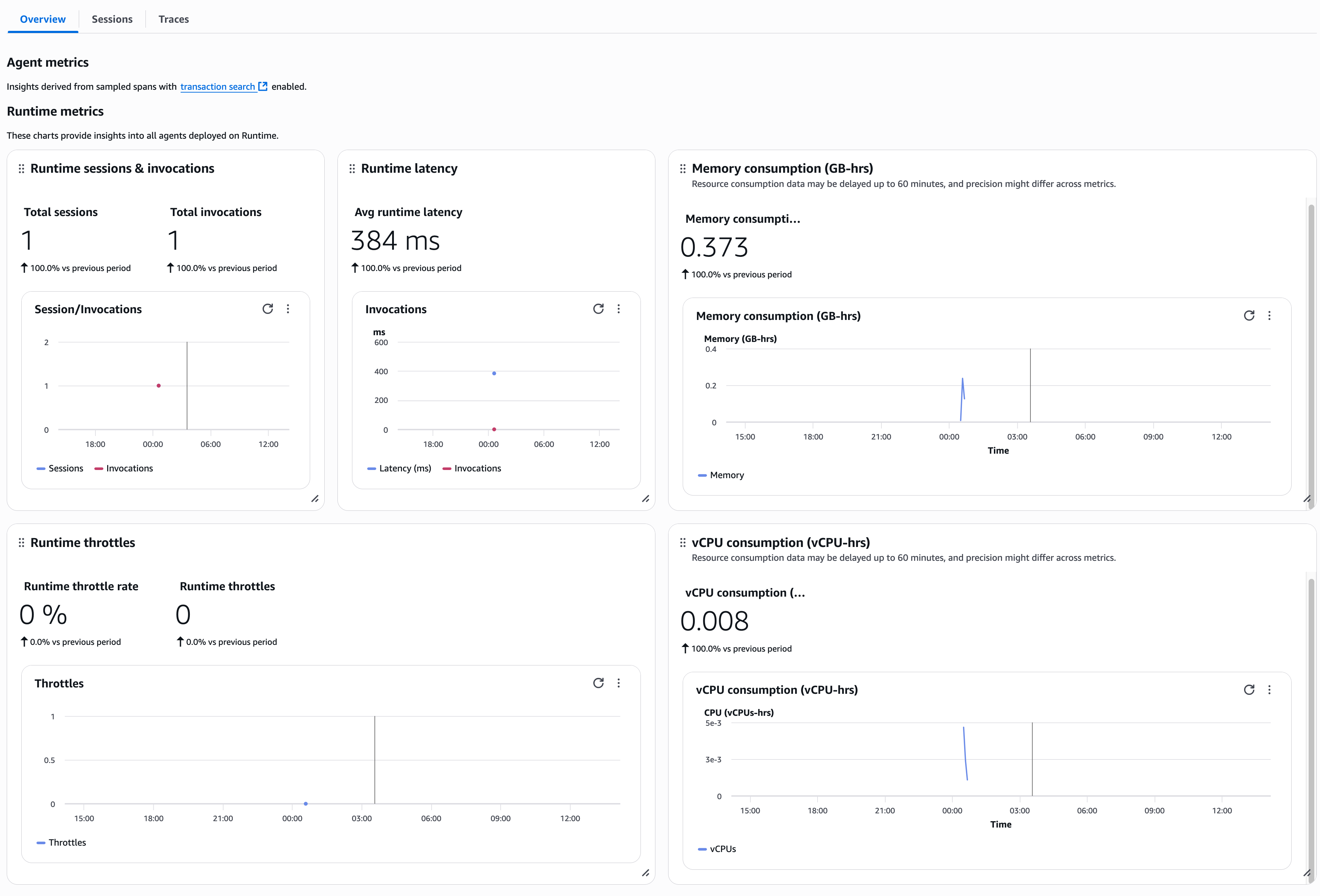

Chintan Patel is a Senior Resolution Architect at AWS with in depth expertise in answer design and growth. He helps organizations throughout numerous industries to modernize their infrastructure, demystify Generative AI applied sciences, and optimize their cloud investments. Outdoors of labor, he enjoys spending time along with his youngsters, taking part in pickleball, and experimenting with AI instruments.

Chintan Patel is a Senior Resolution Architect at AWS with in depth expertise in answer design and growth. He helps organizations throughout numerous industries to modernize their infrastructure, demystify Generative AI applied sciences, and optimize their cloud investments. Outdoors of labor, he enjoys spending time along with his youngsters, taking part in pickleball, and experimenting with AI instruments. Shreyas Subramanian is a Principal Information Scientist and helps prospects through the use of Generative AI and deep studying to resolve their enterprise challenges utilizing AWS companies like Amazon Bedrock and AgentCore. Dr. Subramanian contributes to cutting-edge analysis in deep studying, Agentic AI, basis fashions and optimization methods with a number of books, papers and patents to his identify. In his present function at Amazon, Dr. Subramanian works with numerous science leaders and analysis groups inside and out of doors Amazon, serving to to information prospects to greatest leverage state-of-the-art algorithms and methods to resolve enterprise important issues. Outdoors AWS, Dr. Subramanian is a consultant reviewer for AI papers and funding through organizations like Neurips, ICML, ICLR, NASA and NSF.

Shreyas Subramanian is a Principal Information Scientist and helps prospects through the use of Generative AI and deep studying to resolve their enterprise challenges utilizing AWS companies like Amazon Bedrock and AgentCore. Dr. Subramanian contributes to cutting-edge analysis in deep studying, Agentic AI, basis fashions and optimization methods with a number of books, papers and patents to his identify. In his present function at Amazon, Dr. Subramanian works with numerous science leaders and analysis groups inside and out of doors Amazon, serving to to information prospects to greatest leverage state-of-the-art algorithms and methods to resolve enterprise important issues. Outdoors AWS, Dr. Subramanian is a consultant reviewer for AI papers and funding through organizations like Neurips, ICML, ICLR, NASA and NSF. Kosti Vasilakakis is a Principal PM at AWS on the Agentic AI group, the place he has led the design and growth of a number of Bedrock AgentCore companies from the bottom up, together with Runtime. He beforehand labored on Amazon SageMaker since its early days, launching AI/ML capabilities now utilized by hundreds of corporations worldwide. Earlier in his profession, Kosti was an information scientist. Outdoors of labor, he builds private productiveness automations, performs tennis, and explores the wilderness along with his household.

Kosti Vasilakakis is a Principal PM at AWS on the Agentic AI group, the place he has led the design and growth of a number of Bedrock AgentCore companies from the bottom up, together with Runtime. He beforehand labored on Amazon SageMaker since its early days, launching AI/ML capabilities now utilized by hundreds of corporations worldwide. Earlier in his profession, Kosti was an information scientist. Outdoors of labor, he builds private productiveness automations, performs tennis, and explores the wilderness along with his household.