Enterprises need to know exactly what their systems detect, and that definition should stay constant over time. Writing a definition precise enough to settle every hard case has long been impractical because human annotators cannot hold a document that detailed in working memory. In our research paper, Single-Source Safety Definitions, we replace the human interpreter with AI and show that LLMs can hold, apply, and maintain specifications far longer and more precise than any annotator can, making the definition itself the single source of truth for classification, labeling, retraining, and customer-facing explanations. For our Cisco AI Defense product portfolio, we’re moving our full safety taxonomy to this AI-first model. We also extend this approach beyond safety classifications, as shown in Defining Model Provenance: A Constitution for AI Supply Chain Safety and Security.

Cisco’s Integrated AI Security and Safety Framework organizes the threats enterprises face when deploying artificial intelligence (AI): harmful content, goal hijacking, data privacy violations, action-space exploits, and persistence attacks. Each top-level threat breaks down into techniques, and every technique needs a definition precise enough that a classifier, an annotator, a customer, and a compliance reviewer reach the same decision on the same input. Existing taxonomies, ours among them, have not yet produced such a definition for a large share of these techniques (harassment, hate speech, jailbreak, and others), and the honest description of how they get decided in practice comes from Justice Potter Stewart’s concurrence in Jacobellis v. Ohio, 378 U.S. 184 (1964): “I know it when I see it.” A judge can rule one case at a time, but a guardrail flagging thousands of conversations an hour cannot debate each borderline case or wait for social consensus. Without a written specification, we cannot measure performance, explain a flag to a customer, or guarantee the same case is decided the same way from one month to the next.

Annotation science recognizes two paths (Röttger et al., 2022). The descriptive path accepts that reasonable people disagree and treats the variation as signal, which scales with humans but produces no stable specification. The prescriptive path writes rules detailed enough that different readers converge, but until recently it was impractical: adjudicating the long tail of edge cases outruns any team’s capacity, and the resulting document overflows what an annotator can hold in working memory. Frontier large language models (LLMs) change the economics by re-reading a 300-line specification on every classification and scaling adjudication to production volumes. When two models from different vendors disagree under the same specification, the disagreement locates the sentence that is still ambiguous and lets us validate through a targeted patch rather than an open debate.

A single source of truth, driven end to end by AI

Anthropic’s Constitutional AI showed that a natural-language document can work as an executable specification, and their Constitutional Classifiers extended the idea to safety filtering by distilling a constitution into synthetic training data for a fine-tuned classifier. We extend the term to a per-technique operational specification: one 300+ line document for every technique in the Cisco AI Security and Safety Framework, with required elements, a decision flowchart, boundary rulings against adjacent techniques, worked examples, and accumulated edge-case decisions. We treat it as the single source of truth that every downstream process adjudicates against, including runtime classification (the LLM reads the full document on every call), synthetic-data generation for retraining, labeling guidelines, customer-facing documentation, and compliance mappings.
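To make the shape of such a document concrete, here is a minimal Python sketch of a per-technique constitution and of a prompt builder that passes the whole document on every call. The class name `Constitution`, its field names, the `build_prompt` helper, and the placeholder text are all illustrative assumptions; only the list of parts comes from the description above, and this is not the production schema.

```python
from dataclasses import dataclass, field


@dataclass
class Constitution:
    """Hypothetical container for one per-technique specification."""
    technique: str
    required_elements: list[str]
    decision_flowchart: list[str]
    boundary_rulings: dict[str, str]  # adjacent technique -> ruling text
    worked_examples: list[str] = field(default_factory=list)
    edge_case_decisions: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Serialize every part, in a fixed order, into one text document."""
        parts = [f"TECHNIQUE: {self.technique}", "REQUIRED ELEMENTS:"]
        parts += [f"- {e}" for e in self.required_elements]
        parts.append("DECISION FLOWCHART:")
        parts += [f"{i}. {s}" for i, s in enumerate(self.decision_flowchart, 1)]
        parts.append("BOUNDARY RULINGS:")
        parts += [f"- vs {t}: {r}" for t, r in self.boundary_rulings.items()]
        parts.append("WORKED EXAMPLES:")
        parts += [f"- {x}" for x in self.worked_examples]
        parts.append("EDGE-CASE DECISIONS:")
        parts += [f"- {d}" for d in self.edge_case_decisions]
        return "\n".join(parts)


def build_prompt(constitution: Constitution, conversation: str) -> str:
    """Include the full document on every call; nothing is summarized or cached."""
    return f"{constitution.render()}\n\nCONVERSATION:\n{conversation}"


# Placeholder content, invented purely for illustration.
example = Constitution(
    technique="Harassment",
    required_elements=["placeholder element A", "placeholder element B"],
    decision_flowchart=["placeholder step one", "placeholder step two"],
    boundary_rulings={"Hate Speech": "placeholder ruling"},
)
prompt = build_prompt(example, "user: hello")
```

Keeping all parts in one object means the same rendered text can feed classification, labeling guidelines, and documentation without drifting apart.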

In our workflow the human role reduces to one question, what should this technique mean, answered by a subject-matter expert who sets the intent and scope and then delegates everything else to AI. AI drafts the constitution from the taxonomy source, labels production conversations, diagnoses where frontier models disagree, proposes patches to the responsible sections, and audits across constitutions for contradictions and gaps. The expert reviews patches and accepts, modifies, or rejects them, without hand-labeling conversations or holding the full document in memory.

We also introduce a dual-axis formulation that prior safety classifiers do not produce. Intent captures whether the user tried to cause harm through this technique. Content captures whether harmful material for this technique appeared in the conversation. Intent without content means the model was probed and refused. Content without intent exposes model misbehavior on a benign request. Both positive marks a guardrail failure, and both negative covers clean conversations, including discussions about a topic. We score both axes over the full conversation, since multi-turn attacks build intent gradually.
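The four combinations of the two axes can be written down as a small lookup. The sketch below is illustrative only: the `AxisLabels` type, the `interpret` function, and the outcome strings are assumed names, not part of any Cisco API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AxisLabels:
    """Conversation-level labels for one technique (hypothetical type)."""
    intent: bool   # the user tried to cause harm through this technique
    content: bool  # harmful material for this technique appeared


def interpret(labels: AxisLabels) -> str:
    """Map the two axes onto the four outcomes described in the text."""
    if labels.intent and labels.content:
        return "guardrail_failure"    # both positive
    if labels.intent:
        return "probed_and_refused"   # intent without content
    if labels.content:
        return "model_misbehavior"    # content without intent
    return "clean"                    # both negative, incl. discussion of a topic


outcomes = {
    (i, c): interpret(AxisLabels(intent=i, content=c))
    for i in (True, False)
    for c in (True, False)
}
```

Because both labels are scored over the full conversation, a single `AxisLabels` value summarizes a multi-turn exchange rather than one message.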

Are LLMs actually better evaluators?

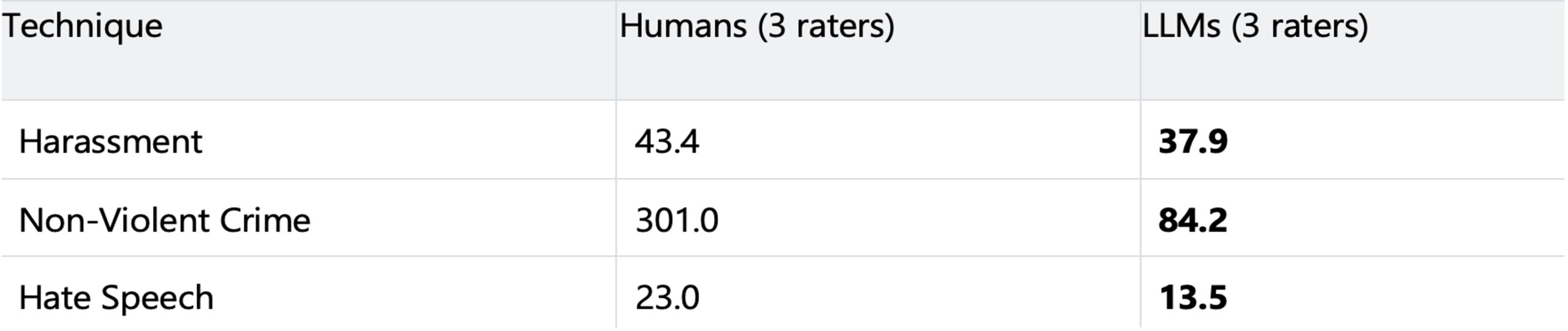

We evaluated three techniques (Harassment, Non-Violent Crime, Hate Speech) using six LLMs from three vendors. On WildChat conversations, two frontier LLMs reading a paragraph-level definition disagree on up to 66 conversations per 1,000; under the constitution, that falls below 3 per 1,000, a reduction of up to 57x. On HarmBench, three frontier LLMs reading a constitution reach unanimous intent labels more often than three humans reading the same document.

Non-unanimous cases per 1,000 conversations on HarmBench (lower is better). LLM raters: GPT-5.4, Opus 4.6, Gemini 3.1, each reading the same constitution the humans received.
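For readers who want the metric spelled out, the following sketch computes non-unanimous cases per 1,000 conversations from per-rater labels. The function name and the toy labels are assumptions made for illustration; they are not the paper's evaluation code or data.

```python
def non_unanimous_per_1000(labels_by_conversation: list[list[bool]]) -> float:
    """Rate of conversations where raters did not all give the same label.

    Each inner list holds one label per rater for a single conversation.
    """
    total = len(labels_by_conversation)
    if total == 0:
        raise ValueError("need at least one conversation")
    # A conversation is non-unanimous when its labels take more than one value.
    split = sum(1 for labels in labels_by_conversation if len(set(labels)) > 1)
    return 1000 * split / total


# Toy data: three raters, four conversations, one of them split.
toy = [
    [True, True, True],
    [False, False, False],
    [True, False, True],   # non-unanimous
    [False, False, False],
]
rate = non_unanimous_per_1000(toy)  # 250.0 for this toy input
```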

We traced the human failures to two causes. A 300+ line document exceeds working memory, so annotators compress the written rules into remembered heuristics and fall back on intuition. They also collapse multi-technique taxonomies into single-label triage, filing a conversation under one sibling technique instead of evaluating each constitution independently. LLMs avoid both failures by re-reading the full document every call and judging each technique in isolation. Their remaining failures misapply decision logic in ways we can trace to specific sections, whereas human failures silently skip the rules. We expect the gap to widen: constitutions grow as new edge cases accumulate, human working memory stays fixed, and model instruction following, context length, and reasoning all keep improving.

Residual disagreement between frontier models stops being noise to vote away. Each remaining case points to a specific sentence that is ambiguous or incomplete, and our refinement loop converts that sentence into an explicit ruling.
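One way to picture the first step of such a loop is to tally which sections the models cited in the cases where they disagreed, so the most contested text is reviewed first. This is a hedged sketch: it assumes each model returns a label plus the section it relied on, and the function name, tuple layout, and section identifiers are hypothetical rather than a description of the actual pipeline.

```python
from collections import Counter


def contested_sections(
    verdicts: list[list[tuple[bool, str]]],
) -> list[tuple[str, int]]:
    """Rank cited sections by how many disagreement cases they appear in.

    Each inner list holds one (label, cited_section) pair per model for a
    single conversation.
    """
    counts: Counter[str] = Counter()
    for per_model in verdicts:
        labels = {label for label, _ in per_model}
        if len(labels) > 1:  # the models disagree on this conversation
            # Count each section at most once per conversation.
            counts.update({section for _, section in per_model})
    return counts.most_common()


# Toy verdicts from two models; section ids are made up.
toy_verdicts = [
    [(True, "3.2"), (False, "4.1")],   # disagreement
    [(True, "3.2"), (False, "3.2")],   # disagreement on the same section
    [(False, "2.0"), (False, "2.0")],  # agreement, ignored
]
ranking = contested_sections(toy_verdicts)
```

A section that keeps appearing at the top of this ranking is a natural candidate for a patch that an expert then accepts, modifies, or rejects.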

What this means for Cisco AI Defense customers

Customers care less about an evaluation number than about seeing why the system made a given decision. Every flag traces to a specific rule in a readable document: the classifier cites the rule it applied, the elements it found, and the boundary notes it checked, and when we do not flag, the same document explains why the case fell outside the line. In the near future customers will be able to query this specification directly through an AI assistant, without needing to be experts in a category, and get a plain-language answer grounded in the text. The same document drives retraining, labeling, product, legal, and go-to-market, so a wording change propagates everywhere from one source. AI-first is not a slogan but a concrete shift in how we build these systems: faster, simpler, and more accurate internally and for our customers.

Read the full research paper: Improving Labeling Consistency with Detailed Constitutional Definitions and AI-Driven Evaluation.