Deep Agents can plan, use tools, manage state, and handle long multi-step tasks. But their real-world performance depends on context engineering. Poor instructions, messy memory, or too much raw input quickly degrade results, while clean, structured context makes agents more reliable, cheaper, and easier to scale.

This is why the system is organized into five layers: input context, runtime context, compression, isolation, and long-term memory. In this article, you'll see how each layer works, when to use it, and how to implement it using the create_deep_agent(...) Python interface.

What context engineering means in Deep Agents

Context in Deep Agents is more than the chat history. Some context is loaded into the system prompt at startup. Some is passed in at invocation time. Some is automatically compressed when the agent's working set grows too large. Some is confined inside subagents. And some is carried across conversations using the virtual filesystem and store-backed memory. The documentation is clear that these are separate mechanisms with separate scopes, and that separation is what makes Deep Agents usable in production.

The five layers are:

- Input context: fixed, startup-time information assembled into the system prompt.
- Runtime context: per-run, dynamic configuration passed at invocation.
- Context compression: automatic memory management through offloading and summarization.
- Context isolation with subagents: delegating tasks to subagents with fresh context windows.
- Long-term memory: durable knowledge that persists between sessions.

Let's build each one properly.

Prerequisites

You'll need Python 3.10 or later, the deepagents package, and a supported model provider. If you want to use live web search or hosted tools, configure the provider API keys in your environment. The official quickstart covers provider setups for Anthropic, OpenAI, Google, OpenRouter, Fireworks, Baseten, and Ollama.

!pip install -U deepagents langchain langgraph

Layer 1: Input context

Input context is everything the agent sees at startup as part of its assembled system prompt. According to the Deep Agents docs, that includes your custom system prompt, memory files such as AGENTS.md, skills loaded from SKILL.md, and tool prompts from built-in or custom tools. The docs also show that the full assembled system prompt contains built-in planning guidance, filesystem tool guidance, subagent guidance, and optional middleware prompts. In other words, your custom prompt is only one part of what the model receives.

That design matters. It means you do not need to hand-concatenate your agent prompt, memory file, skills file, and tool help into a single large string. Deep Agents already knows how to assemble that structure. Your job is to put the right content in the right channel.

Use system_prompt for identity and behavior

Use the system prompt for the agent's role, tone, boundaries, and top-level priorities. The documentation indicates that the system prompt is static. If you need it to vary by user or request, use dynamic prompt middleware rather than editing prompt strings directly.

Use memory for always-relevant rules

Memory files such as AGENTS.md are always loaded when configured. The docs suggest using memory for stable conventions, user preferences, or critical instructions that should apply across all conversations. Because memory is always injected, it must stay short and high-signal.
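Because memory is injected on every run, it can help to check its size before shipping it. The sketch below is illustrative only: the `estimate_tokens` and `check_memory_budget` helpers, the 4-characters-per-token heuristic, and the 500-token budget are all assumptions made for this example, not part of Deep Agents.

```python
# Hypothetical helper: rough size check for an always-loaded memory file.
# The 4-chars-per-token ratio is a crude heuristic, not a real tokenizer.

def estimate_tokens(text: str) -> int:
    """Approximate token count as ceil(len(text) / 4)."""
    return (len(text) + 3) // 4


def check_memory_budget(text: str, budget_tokens: int = 500) -> bool:
    """Return True if the memory text fits within the assumed budget."""
    return estimate_tokens(text) <= budget_tokens


agents_md = """## Role
You are a project manager agent for Acme Corp.

## Conventions
- Always reference tasks by task ID, such as TASK-42
- Never expose internal cost data to external stakeholders
"""

within_budget = check_memory_budget(agents_md)
```

A check like this could run in CI so that a growing memory file is noticed before it inflates every prompt.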

Use skills for workflows

Skills are reusable workflows that apply only some of the time. Deep Agents loads the skill frontmatter at startup and loads the full skill body only when it determines the skill applies. This progressive disclosure pattern is one of the simplest ways to reduce token waste without sacrificing capability.
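The idea of progressive disclosure can be sketched in a few lines of plain Python. This is not the Deep Agents loader: `split_skill` and `load_skill_body` are hypothetical names, and the frontmatter parsing is deliberately simplistic (one `key: value` pair per line).

```python
# Sketch of progressive disclosure for skills (not the Deep Agents loader).
# Only the frontmatter is read up front; the body is returned on demand.

SKILL_MD = """---
name: weekly-report
description: Use this skill when the user asks for a weekly update or status report.
---
# Weekly report workflow
1. Pull all tasks updated in the last 7 days.
2. Group them by status.
"""


def split_skill(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md string into (frontmatter dict, body)."""
    _, header, body = text.split("---\n", 2)
    meta = {}
    for line in header.strip().splitlines():
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta, body


meta, body = split_skill(SKILL_MD)

# What is always in the prompt: a one-line summary per skill.
startup_entry = f"{meta['name']}: {meta['description']}"


def load_skill_body(skill_applies: bool) -> str:
    """Return the full body only when the skill is judged relevant."""
    return body if skill_applies else ""
```

The startup entry stays a single line no matter how long the workflow grows, which is where the token savings come from.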

Use tool descriptions as operational guidance

Tool metadata is included in the prompt the model reasons over. The docs suggest naming tools in clear language, writing descriptions that say when to use them, and documenting arguments in a way the agent can understand, so that it can pick the right tool.
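As a rough illustration of what "tool metadata" means, the sketch below uses only the standard library to pull a name, description, and parameter list from a documented function. The `project_id` and `limit` parameters are hypothetical, and the exact metadata a framework extracts may differ from this.

```python
import inspect


def get_blocked_tasks(project_id: str, limit: int = 10) -> str:
    """Return blocked tasks for one project.

    Use this when the user asks what is stuck, blocked, or waiting.

    Args:
        project_id: Project key such as "ACME-WEB".
        limit: Maximum number of tasks to return.
    """
    return f"No blocked tasks found for {project_id} (limit {limit})"


# Roughly the metadata a framework can derive from the function itself.
tool_name = get_blocked_tasks.__name__
tool_description = inspect.getdoc(get_blocked_tasks)
tool_params = list(inspect.signature(get_blocked_tasks).parameters)
```

The docstring does double duty here: it documents the code for humans and tells the model when the tool is the right choice.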

Hands-on Lab 1: Build a project manager agent with layered input context

The first lab builds a simple but realistic project manager agent. It has a fixed role, a fixed memory file of conventions, and a skill for weekly reporting.

Project structure

project/
├── AGENTS.md
├── skills/
│   └── weekly-report/
│       └── SKILL.md
└── agent_setup.py

AGENTS.md

## Role

You are a project manager agent for Acme Corp.

## Conventions

- Always reference tasks by task ID, such as TASK-42
- Summarize status in three phrases or fewer
- Never expose internal cost data to external stakeholders

skills/weekly-report/SKILL.md

---
name: weekly-report
description: Use this skill when the user asks for a weekly update or status report.
---

# Weekly report workflow

1. Pull all tasks updated in the last 7 days.
2. Group them by status: Done, In Progress, Blocked.
3. Format the result as a markdown table with owner and task ID.
4. Add a short executive summary at the top.

agent_setup.py

from pathlib import Path

from IPython.core.show import Markdown

from deepagents import create_deep_agent

from deepagents.backends import FilesystemBackend

from langchain.instruments import software

ROOT = Path.cwd().resolve().mum or dad

@software

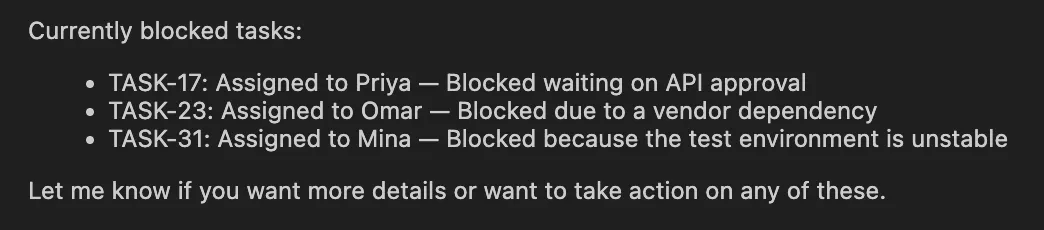

def get_blocked_tasks() -> str:

"""Return blocked duties for the present mission."""

return """

TASK-17 | Blocked | Priya | Ready on API approval

TASK-23 | Blocked | Omar | Vendor dependency

TASK-31 | Blocked | Mina | Check surroundings unstable

""".strip()

agent = create_deep_agent(

mannequin="openai:gpt-4.1",

system_prompt="You're Acme Corp's mission supervisor agent.",

instruments=[get_blocked_tasks],

reminiscence=["./AGENTS.md"],

expertise=["./skills/"],

backend=FilesystemBackend(root_dir=str(ROOT), virtual_mode=True),

)

consequence = agent.invoke(

{

"messages": [

{"role": "user", "content": "What tasks are currently blocked?"}

]

}

)

Markdown(consequence["messages"][-1].content material[0]["text"])Output:

This version follows the documented Deep Agents pattern. Memory is declared with memory=…, skills with skills=…, and a backend gives the agent access to those files. The agent will always see the contents of AGENTS.md, but it will load the full SKILL.md only when it needs to, i.e. when the weekly-report workflow is relevant.

The lesson is simple. Put lasting rules in memory. Put reusable but occasional workflows in skills. Keep the system prompt focused on behavior and identity. That single separation already prevents a great deal of prompt bloat.

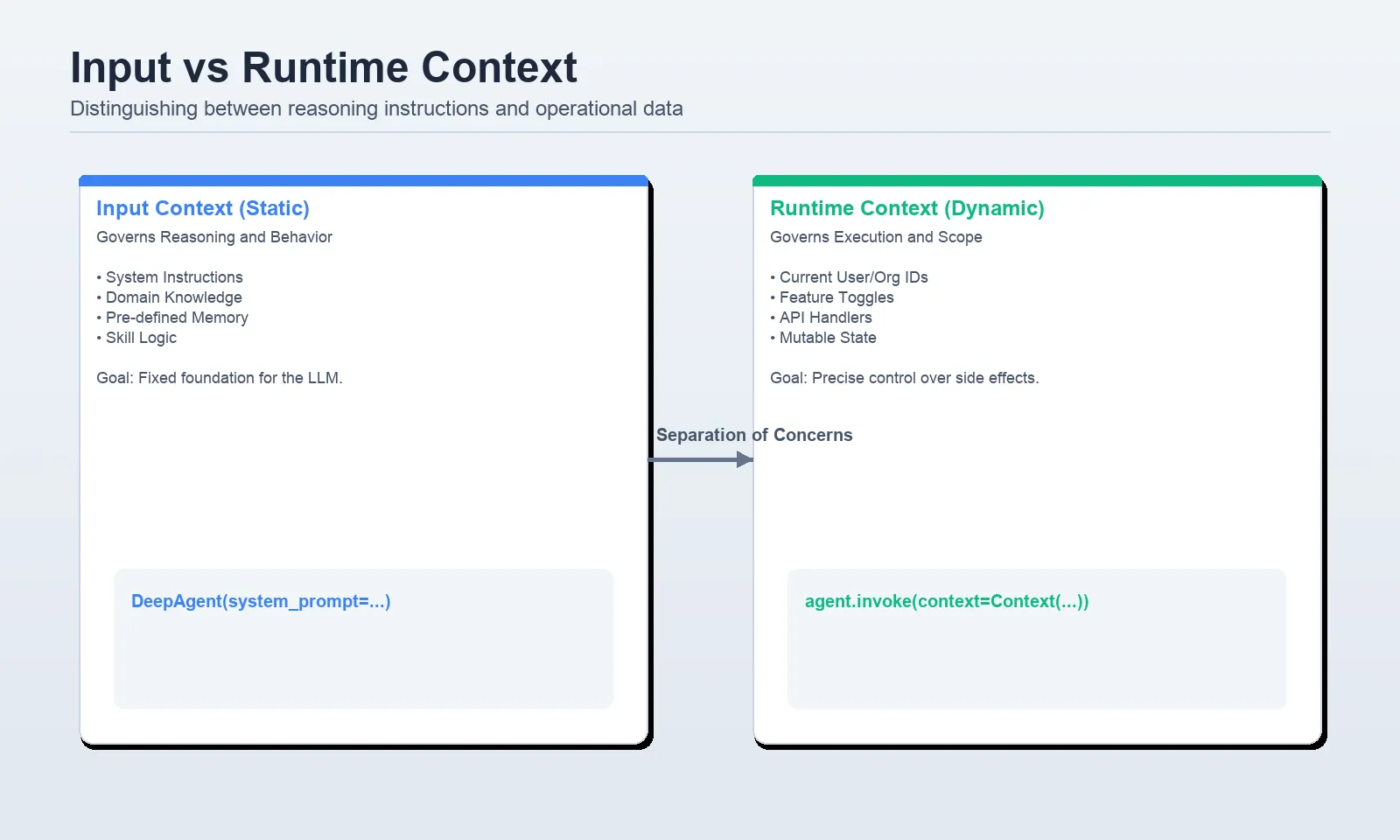

Layer 2: Runtime context

Runtime context is the data you pass at invocation time. An important point the docs make very clear is that runtime context is not automatically shown to the model. It becomes visible only if tools or middleware explicitly read it and surface it. That makes it the right place for user IDs, roles, feature flags, database handles, API keys, or anything else that is operational but does not belong in a prompt.

The currently recommended pattern is to define a context_schema, invoke the agent with context=…, and access those values inside tools through ToolRuntime. The LangChain tools docs also describe runtime as the right injection point for execution information, context, store access, and other relevant metadata.

Arms-on Lab 2: Go runtime context with out polluting the immediate

from openai import api_key

from dataclasses import dataclass

import os

from IPython.core.show import Markdown

from deepagents import create_deep_agent

from langchain.instruments import software, ToolRuntime

@dataclass

class Context:

user_id: str

org_id: str

db_connection_string: str

weekly_report_enabled: bool

@software

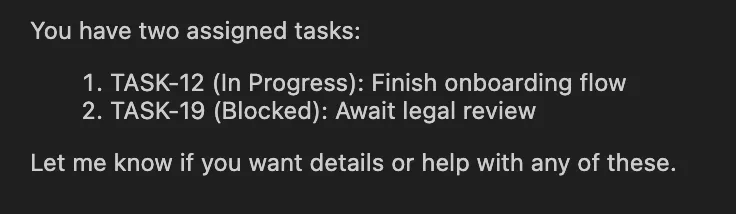

def get_my_tasks(runtime: ToolRuntime[Context]) -> str:

"""Return duties assigned to the present consumer."""

user_id = runtime.context.user_id

org_id = runtime.context.org_id

# Substitute this stub with an actual question in manufacturing.

return (

f"Duties for consumer={user_id} in org={org_id}n"

"- TASK-12 | In Progress | End onboarding flown"

"- TASK-19 | Blocked | Await authorized reviewn"

)

agent = create_deep_agent(

mannequin="openai:gpt-4.1",

instruments=[get_my_tasks],

context_schema=Context,

)

consequence = agent.invoke(

{

"messages": [

{"role": "user", "content": "What tasks are assigned to me?"}

]

},

context=Context(

user_id="usr_8821",

org_id="acme-corp",

db_connection_string="postgresql://localhost/acme",

weekly_report_enabled=True,

),

)

Markdown(consequence["messages"][-1].content material[0]["text"])Output:

This is the clean separation you want in production. The model can call get_my_tasks, but the actual user_id and org_id stay in the runtime context instead of being pushed into the system prompt or chat history. That is much safer, and easier to reason about when debugging permissions and data flow.

One rule of thumb: if the model needs to reason about a fact directly, put it in prompt space. If your tools need it as operational state, leave it in runtime context.
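One way to see the split is to exercise a tool body without any model involved. This is a minimal sketch under stated assumptions: `get_my_tasks_impl` is a hypothetical plain-function version of a tool, and the `SimpleNamespace` stand-in models only a `.context` attribute, not the real ToolRuntime.

```python
from dataclasses import dataclass
from types import SimpleNamespace


@dataclass
class Context:
    user_id: str
    org_id: str


def get_my_tasks_impl(runtime) -> str:
    """Plain-function version of a tool body that reads runtime context."""
    ctx = runtime.context
    return f"Tasks for user={ctx.user_id} in org={ctx.org_id}"


# Stand-in for the runtime object; only the .context attribute is modeled.
fake_runtime = SimpleNamespace(
    context=Context(user_id="usr_8821", org_id="acme-corp")
)

# What the model sees: the user message only, with no IDs in it.
messages = [{"role": "user", "content": "What tasks are assigned to me?"}]

tool_output = get_my_tasks_impl(fake_runtime)
```

The identifiers reach the tool through the runtime object, while the message list that forms the prompt never contains them.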

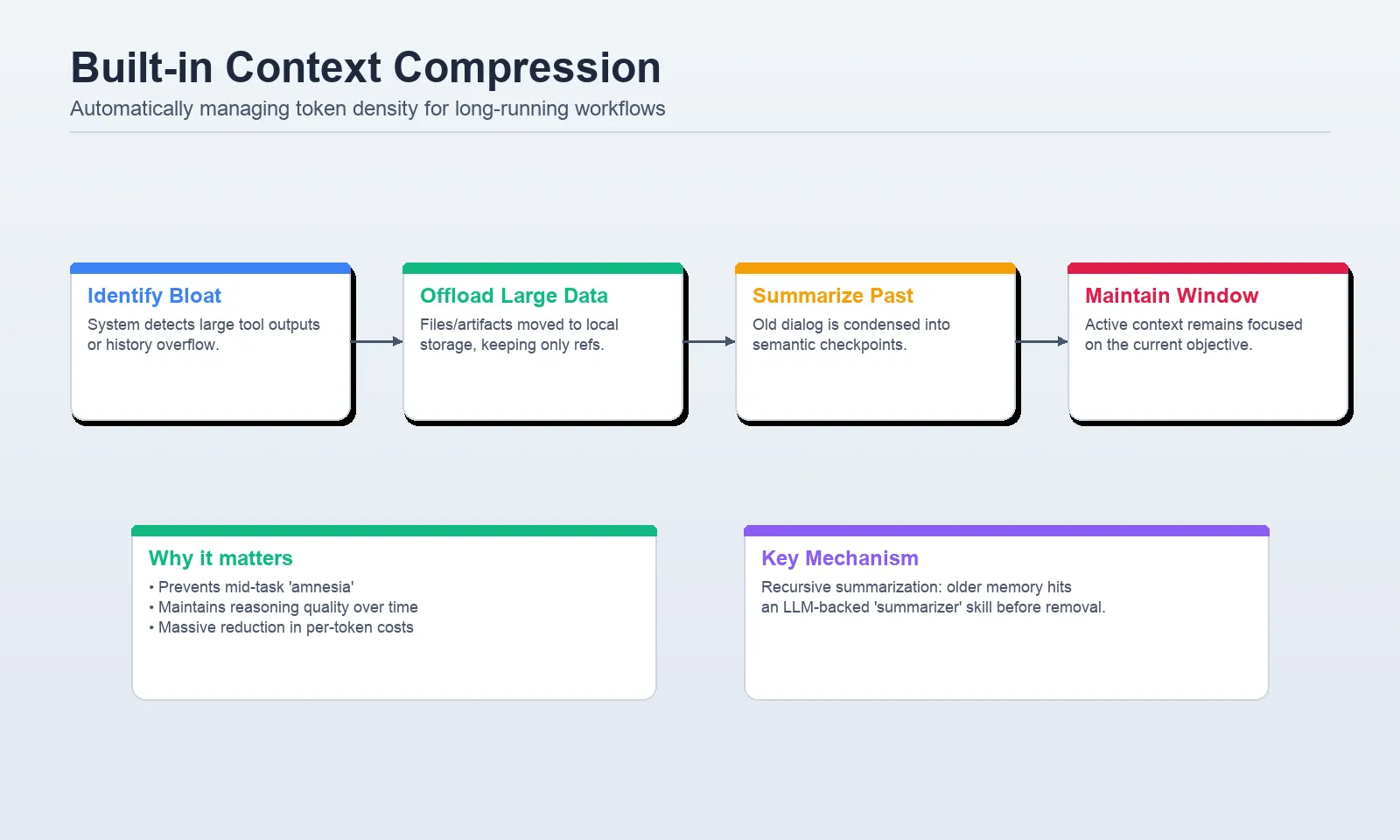

Layer 3: Context compression

Long-running tasks quickly create two problems: huge tool outputs and long histories. Deep Agents handles both with built-in context compression. The docs describe two native mechanisms, offloading and summarization. Offloading stores large tool inputs and outputs in the filesystem and replaces them with references. Summarization reduces the size of older messages as the agent approaches the model's context limit.

Offloading

According to the context engineering docs, content offloading occurs when tool call inputs or outputs exceed a token threshold, with a default of 20,000 tokens. Large historical tool data is replaced with references to files that have been persisted, so the agent can access it later when needed.
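The offloading idea can be sketched in plain Python. This is a simplified illustration, not the framework's implementation: the token estimate, the `/offloaded/` path scheme, and the reference message format are assumptions; only the 20,000-token default comes from the docs as cited above.

```python
# Illustrative offloading logic; Deep Agents does this for you.
# Token estimate and file naming are assumptions made for the sketch.

OFFLOAD_THRESHOLD_TOKENS = 20_000  # default threshold cited in the docs


def estimate_tokens(text: str) -> int:
    """Crude estimate: about 4 characters per token."""
    return (len(text) + 3) // 4


def maybe_offload(tool_name: str, output: str, files: dict[str, str]) -> str:
    """Store oversized output in `files` and return a short reference."""
    if estimate_tokens(output) <= OFFLOAD_THRESHOLD_TOKENS:
        return output
    path = f"/offloaded/{tool_name}_{len(files)}.txt"
    files[path] = output
    return f"[output offloaded to {path}; read the file if needed]"


files: dict[str, str] = {}
small = maybe_offload("search", "three short lines", files)
large = maybe_offload("report", "x" * 100_000, files)
```

Small outputs pass through unchanged, while the oversized one is replaced in context by a one-line pointer and remains fully recoverable from the file store.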

Summarization

When the active context grows too large, Deep Agents summarizes older parts of the conversation so the task can continue without exceeding the model's window. There is also an optional summarization tool middleware, which lets the agent summarize at more meaningful boundaries, e.g. between task phases, rather than only at the automatic threshold.
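A threshold-triggered summarizer can be sketched as follows. Everything here is an assumption for illustration: the `compact` function, the 0.8 trigger ratio, the number of recent messages kept, and the placeholder summary text. A real system would call a model to write the summary.

```python
# Illustrative only: a threshold-triggered summarizer over a message list.
# The window size, trigger ratio, and summary text are assumptions.

def estimate_tokens(text: str) -> int:
    """Crude estimate: about 4 characters per token."""
    return (len(text) + 3) // 4


def compact(messages: list[dict], window: int, trigger: float = 0.8,
            keep_recent: int = 2) -> list[dict]:
    """Collapse older messages into one summary once usage nears the window."""
    used = sum(estimate_tokens(m["content"]) for m in messages)
    if used < window * trigger or len(messages) <= keep_recent:
        return messages
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    # A real system would call a model here; this placeholder just counts.
    summary = {"role": "system",
               "content": f"Summary of {len(older)} earlier messages."}
    return [summary, *recent]


history = [{"role": "user", "content": "a" * 400} for _ in range(10)]
compacted = compact(history, window=1000)
untouched = compact(history, window=100_000)
```

With a small window the ten messages collapse to a summary plus the two most recent turns; with a large window the history is returned as-is.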

Hands-on Lab 3: Use built-in compression the right way

from deepagents import create_deep_agent
from IPython.core.display import Markdown


def generate_large_report(topic: str) -> str:
    """Generate a very detailed report on vector database tradeoffs."""
    # Simulate a large tool result
    return ("Detailed report about " + topic + "\n") * 5000


agent = create_deep_agent(
    model="openai:gpt-4.1-mini",
    tools=[generate_large_report],
)

result = agent.invoke(
    {
        "messages": [
            {
                "role": "user",
                "content": "Generate a very detailed report on vector database tradeoffs.",
            }
        ]
    }
)

Markdown(result["messages"][-1].content[0]["text"])

Output:

In a setup like this, Deep Agents handles the heavy lifting. If the tool output becomes large enough, the framework can offload it to the filesystem and keep only the relevant reference in active context. That means you should start with the built-in behavior before inventing your own middleware.

If you want proactive summarization between stages, use the documented middleware:

from deepagents import create_deep_agent
from deepagents.backends import StateBackend
from deepagents.middleware.summarization import create_summarization_tool_middleware

agent = create_deep_agent(
    model="openai:gpt-4.1",
    middleware=[
        create_summarization_tool_middleware("openai:gpt-4.1", StateBackend),
    ],
)

That adds an optional summarization tool so the agent can compress context at logical checkpoints instead of waiting until the window is nearly full.

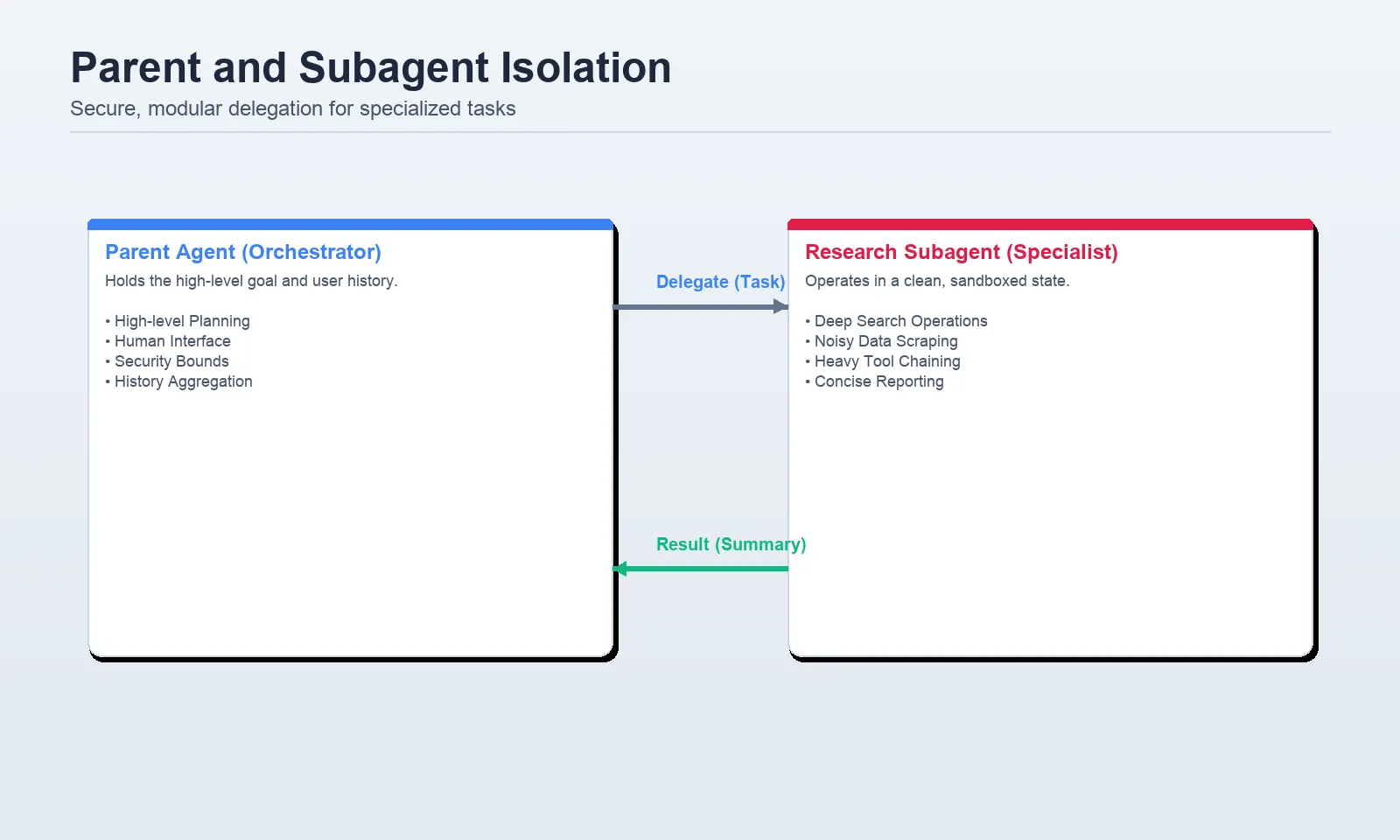

Layer 4: Context isolation with subagents

Subagents exist to keep the main agent clean. The docs recommend them for multi-step work that would otherwise clutter the parent context, for specialized domains, and for work that needs a different toolset or model. They explicitly advise against using them for single-step tasks or for tasks where the parent still needs the intermediate reasoning in scope.

The current Deep Agents pattern is to declare subagents with the subagents= parameter. In most applications, each subagent can be represented as a dictionary with a name, description, system prompt, tools, and an optional model override.

Hands-on Lab 4: Delegate research to an isolated subagent

from deepagents import create_deep_agent
from IPython.core.display import Markdown


def internet_search(query: str, max_results: int = 5) -> str:
    """Run a web search for the given query."""
    # Stub result; swap in a real search client in production.
    return f"Search results for: {query} (top {max_results})"


research_subagent = {
    "name": "research-agent",
    "description": "Use for deep research and evidence gathering.",
    "system_prompt": (
        "You are a research specialist. "
        "Research thoroughly, but return only a concise summary. "
        "Do not return raw search results, long excerpts, or tool logs."
    ),
    "tools": [internet_search],
    "model": "openai:gpt-4.1",
}

agent = create_deep_agent(
    model="openai:gpt-4.1",
    system_prompt="You coordinate work and delegate deep research when needed.",
    subagents=[research_subagent],
)

result = agent.invoke(
    {
        "messages": [
            {
                "role": "user",
                "content": "Research best practices for retrieval evaluation and summarize them.",
            }
        ]
    }
)

Markdown(result["messages"][-1].content[0]["text"])

Output:

Delegation is not the key to good subagent design. Containment is. The subagent should return a concise answer, not the raw data. Otherwise, you pay all the overhead of isolation without getting any context savings.
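Containment can be shown with a toy model of two separate message lists. This is a conceptual sketch, not how Deep Agents implements subagents: `fake_search`, `run_research_subagent`, and the summary format are hypothetical placeholders.

```python
# Conceptual sketch of context isolation; not how Deep Agents is implemented.
# `fake_search` and the summary format are hypothetical placeholders.

def fake_search(query: str) -> str:
    """Stand-in for a noisy tool that returns a lot of raw text."""
    return f"raw result for {query}\n" * 200


def run_research_subagent(task: str) -> str:
    """Do the noisy work in a private message list; return only a summary."""
    private_context = [{"role": "user", "content": task}]
    for query in (task, f"{task} pitfalls"):
        private_context.append({"role": "tool", "content": fake_search(query)})
    # Only this short string crosses back into the parent's context.
    return f"Reviewed {len(private_context) - 1} search results for: {task}"


parent_context = [{"role": "user", "content": "Research retrieval evaluation."}]
summary = run_research_subagent("retrieval evaluation")
parent_context.append({"role": "tool", "content": summary})

parent_chars = sum(len(m["content"]) for m in parent_context)
```

The raw search text lives and dies inside the subagent's private list, so the parent's context grows by one short line rather than thousands of characters.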

Another noteworthy point from the docs is that runtime context propagates to subagents. If the parent has a current user, org, or role in its runtime context, the subagent inherits it as well. That makes subagents much more convenient in real systems, because you do not need to re-enter the same data manually everywhere.

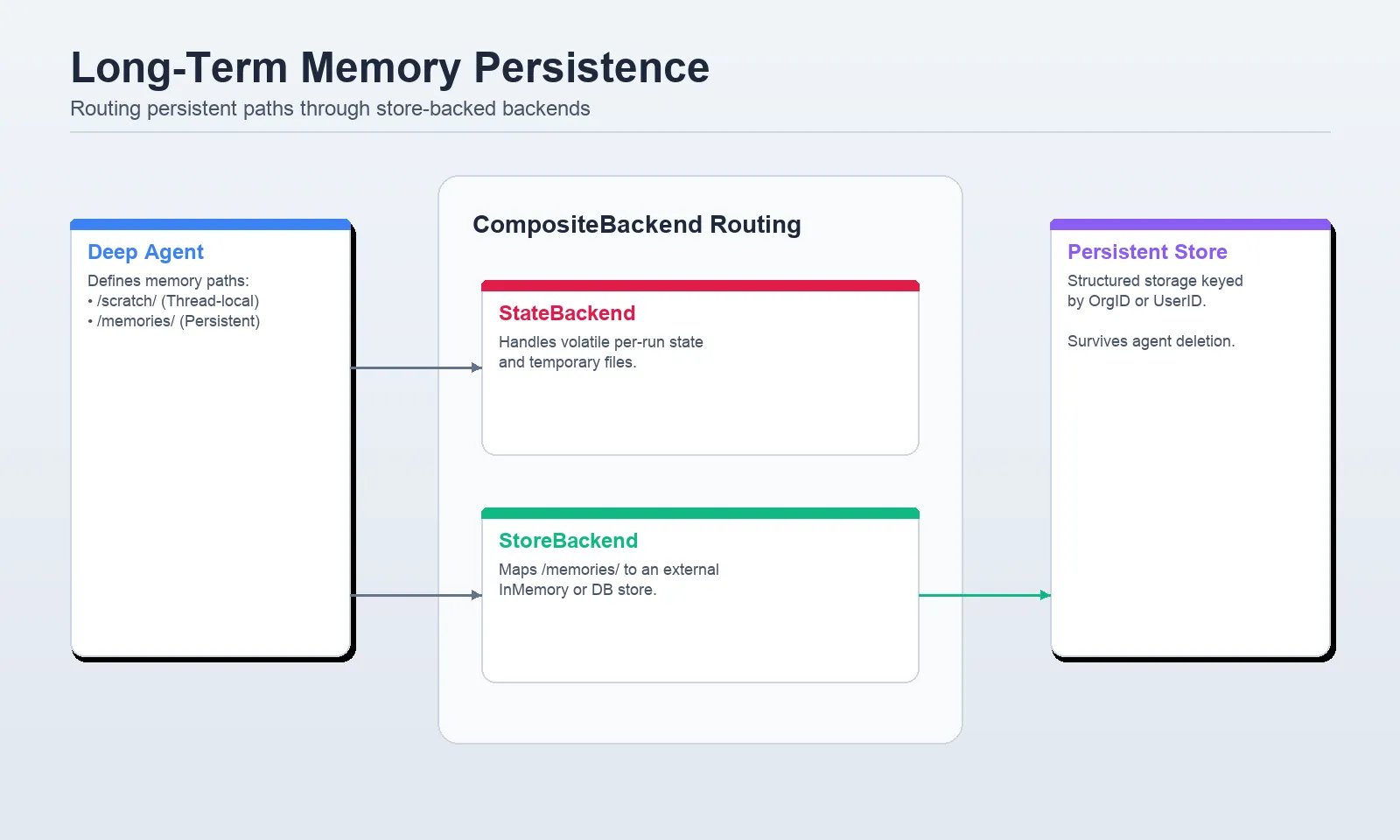

Layer 5: Long-term memory

Long-term memory is where Deep Agents becomes much more than a fancy prompt wrapper. The docs describe memory as persistent storage across threads via the virtual filesystem, usually routed with StoreBackend and often combined with CompositeBackend so that different filesystem paths can have different storage behavior.

This is what many examples get wrong. Memory should be routed to a backend such as StoreBackend, not to a raw store object. The store itself is passed to create_deep_agent(...). The paths of the memory files are defined in memory=[…], and those files are then loaded automatically into the system prompt.

The memory docs further clarify that memory has dimensions beyond storage. You need to consider duration, information type, scope, and update strategy. In practice, the most important choice is scope: should the memory be per-user, per-agent, or organization-wide?

Use CompositeBackend to route scratch data to StateBackend and /memories/ paths to StoreBackend.

Hands-on Lab 5: Add user-scoped cross-session memory

from dataclasses import dataclass

from IPython.core.show import Markdown

from deepagents import create_deep_agent

from deepagents.backends import CompositeBackend, StateBackend, StoreBackend

from deepagents.backends.utils import create_file_data

from langgraph.retailer.reminiscence import InMemoryStore

from langchain_core.utils.uuid import uuid7

@dataclass

class Context:

user_id: str

retailer = InMemoryStore()

# Seed reminiscence for one consumer

retailer.put(

("user-alice",),

"/reminiscences/preferences.md",

create_file_data("""## Preferences

- Maintain responses concise

- Choose Python examples

"""),

)

agent = create_deep_agent(

mannequin="openai:gpt-4.1-mini",

reminiscence=["/memories/preferences.md"],

context_schema=Context,

backend=lambda rt: CompositeBackend(

default=StateBackend(rt),

routes={

"/reminiscences/": StoreBackend(

rt,

namespace=lambda ctx: (ctx.runtime.context.user_id,),

),

},

),

retailer=retailer,

system_prompt=(

"You're a useful assistant. "

"Use reminiscence information to personalize your solutions when related."

),

)

consequence = agent.invoke(

{

"messages": [

{"role": "user", "content": "How do I read a CSV file in Python?"}

]

},

config={"configurable": {"thread_id": str(uuid7())}},

context=Context(user_id="user-alice"),

)

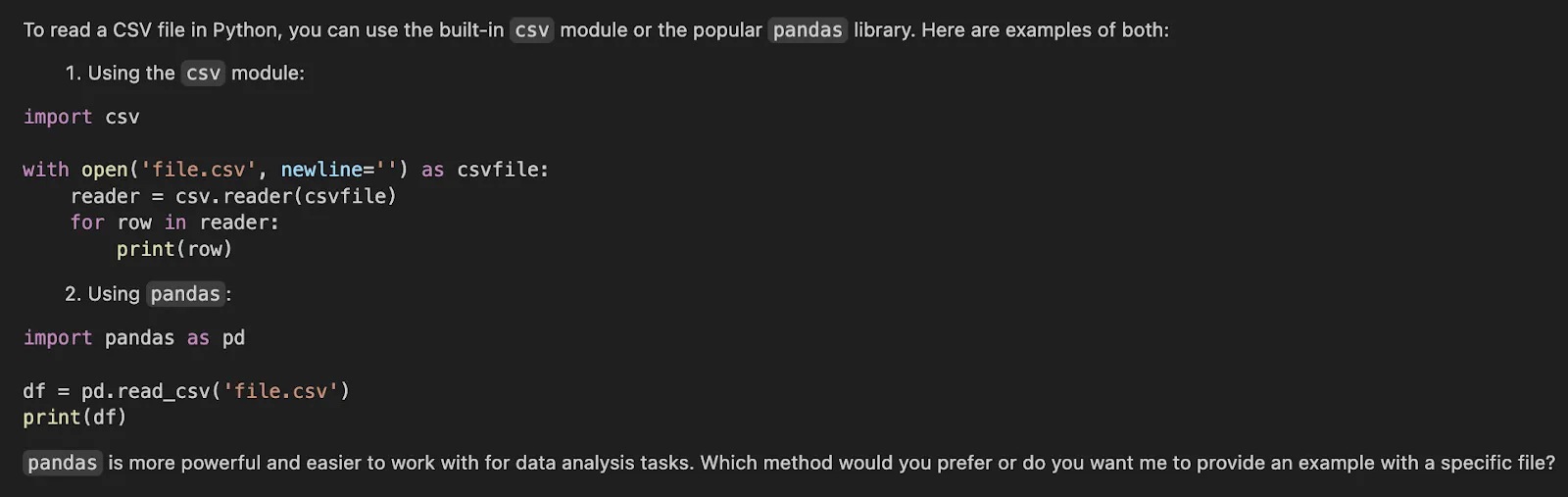

Markdown(consequence["messages"][-1].content material[0]["text"])Output:

This setup does three important things. It loads a memory file into the agent. It routes /memories/ to persistent store-backed storage. And it isolates the namespace per user by using user_id as the namespace. That is the right default for most multi-user systems because it prevents memory from leaking between users.

If you need shared organizational memory, use a different namespace and often a different path such as /policies or /org-memory. If you need agent-level shared procedural memory, use an agent-specific namespace. For user preferences and personalized behavior, though, user scope is the safest starting point.
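The scope decision can be made explicit with a small helper. This is a hypothetical sketch: `memory_namespace`, the scope names, and the tuple layout are assumptions for illustration, and a plain dict stands in for the real store.

```python
# Hypothetical helper for choosing a store namespace by memory scope.
# The scope names and tuple layout are assumptions for illustration.

def memory_namespace(scope: str, *, user_id: str = "", agent_id: str = "",
                     org_id: str = "") -> tuple[str, ...]:
    """Map a memory scope to a namespace tuple."""
    if scope == "user":
        return ("users", user_id)
    if scope == "agent":
        return ("agents", agent_id)
    if scope == "org":
        return ("orgs", org_id)
    raise ValueError(f"unknown scope: {scope}")


# A plain dict stands in for the store: (namespace, key) -> value.
store: dict[tuple[tuple[str, ...], str], str] = {}

alice = memory_namespace("user", user_id="user-alice")
bob = memory_namespace("user", user_id="user-bob")
store[(alice, "/preferences.md")] = "- Prefer Python examples"

alice_prefs = store.get((alice, "/preferences.md"))
bob_prefs = store.get((bob, "/preferences.md"))
```

Because the namespace is part of the lookup key, Bob's read of the same path returns nothing even though Alice's preferences are stored.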

Common mistakes to avoid

The current documentation implicitly warns against some common pitfalls, and it does not hurt to make them explicit.

- Do not overload the system prompt. Always-loaded prompt space is expensive and hard to maintain. Be deliberate about what goes into memory and skills.
- Do not pass runtime-only information through chat messages. IDs, permissions, feature flags, and connection details belong in runtime context.
- Do not reimplement offloading and summarization until you have measured an actual gap in the built-ins.
- Do not have subagents handle trivial single-step tasks. The docs clearly say to reserve them for context-intensive or specialized work.
- Do not store all long-term memory in a single shared namespace by default. Decide who owns the memory: the user, the agent, or the organization.

Conclusion

Deep Agents are not powerful because they have long prompts. They are powerful because they let you separate context by role and lifecycle. Cross-thread memory, per-run state, startup instructions, compressed history, and delegated work are all different things. The Deep Agents framework gives you a clean abstraction for each. When you use those abstractions directly instead of working around them, your agents are simpler to debug, cheaper to run, and more reliable in real workloads.

That is the real art of context engineering. It is not about providing more context. It is about giving the agent exactly the context it needs, exactly where it needs it.

Frequently Asked Questions

A. It is the practice of giving AI agents the right information, in the right format and at the right time. It guides their actions and helps them complete their tasks.

A. Context matters because it helps agents stay focused and avoid irrelevant information. It also ensures they have the data they need, which leads to efficient and reliable task performance.

A. Subagents isolate context. They handle complex, output-heavy jobs inside their own separate context. This keeps the main agent's memory clean and focused on its primary goals.
