xtmixed was built from the ground up for dealing with multilevel random effects; that is its raison d'être. sem was built for multivariate outcomes, for handling latent variables, and for estimating structural equations (also called simultaneous systems or models with endogeneity). Can sem also handle multilevel random effects (REs)? Do we care?

This would be a short entry if either answer were "no", so let's get after the first question.

Can sem handle multilevel REs?

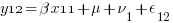

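A good place to start is to simulate some multilevel RE data. Let's create data for the 3-level regression model

$$y_{ijk} = \beta x_{ijk} + \mu_k + \nu_{jk} + \epsilon_{ijk}$$

where the classical multilevel regression assumption holds that $\mu_k$, $\nu_{jk}$, and $\epsilon_{ijk}$ are each distributed i.i.d. normal and are uncorrelated with one another:

$$\mu_k \sim N(0, \sigma^2_\mu), \qquad \nu_{jk} \sim N(0, \sigma^2_\nu), \qquad \epsilon_{ijk} \sim N(0, \sigma^2_\epsilon)$$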

This represents a model of $i$ nested within $j$ nested within $k$. An example would be students nested within schools nested within counties. We have random intercepts at the 2nd and 3rd levels, $\nu_{jk}$ and $\mu_k$. Because these are random effects, we need to estimate only the variances of $\mu_k$, $\nu_{jk}$, and $\epsilon_{ijk}$.
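Because the three effects are independent and enter the model additively, they imply a simple covariance pattern for any two observations, conditional on x. Writing $\sigma^2_\mu$, $\sigma^2_\nu$, and $\sigma^2_\epsilon$ for the variances of $\mu_k$, $\nu_{jk}$, and $\epsilon_{ijk}$, and $\mathbf{1}[\cdot]$ for an indicator (notation used here for illustration), the implied covariance is:

$$
\operatorname{Cov}(y_{ijk},\, y_{i'j'k'} \mid x) =
\sigma^2_\mu\,\mathbf{1}[k = k']
+ \sigma^2_\nu\,\mathbf{1}[j = j',\, k = k']
+ \sigma^2_\epsilon\,\mathbf{1}[i = i',\, j = j',\, k = k']
$$

Observations in different 3rd-level groups are uncorrelated, which is what allows each 3rd-level group to become a single, independent row of a wide dataset.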

For our simulated data, let's assume there are 3 groups at the 3rd level, 2 groups at the 2nd level within each 3rd-level group, and 2 individuals within each 2nd-level group. Or, $K = 3$, $J = 2$, and $I = 2$. Having only 3 groups at the 3rd level is silly. It gives us only 3 observations to estimate the variance of $\mu_k$. But with only $K \times J \times I = 12$ observations, we can easily see our entire dataset, and the concepts scale to any number of 3rd-level groups.

First, create our 3rd-level random effects, $\mu_k$.

. set obs 3
. gen k = _n
. gen Uk = rnormal()

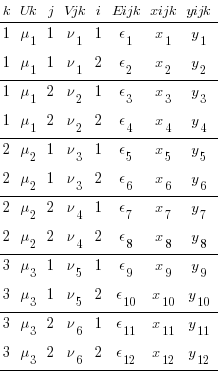

There are only 3 values of $\mu_k$ in our dataset.

I am showing the effects symbolically in the table rather than showing numeric values. It is the pattern of unique effects that will become interesting, not their actual values.

Now, create our 2nd-level random effects, $\nu_{jk}$, by doubling this data and creating 2nd-level effects.

. expand 2
. by k, sort: gen j = _n
. gen Vjk = rnormal()

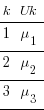

We now have 6 unique values of our 2nd-level effects and the same 3 unique values of our 3rd-level effects. Our original 3rd-level effects just appear twice each.

Now, create our 1st-level random effects, $\epsilon_{ijk}$, which we typically just call errors.

. expand 2
. by k j, sort: gen i = _n
. gen Eijk = rnormal()

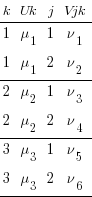

There are still only 3 unique $\mu_k$ in our dataset, and only 6 unique $\nu_{jk}$.

Finally, we create our regression data, using $\beta = 2$,

. gen xijk = runiform()
. gen yijk = 2 * xijk + Uk + Vjk + Eijk
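For readers who want to check this structure outside Stata, here is a rough Python/NumPy analogue of the simulation steps above. It is a sketch, not a translation of Stata's internals: the helper name `simulate_three_level` is hypothetical, `np.repeat` plus `np.tile` stand in for `expand` followed by `by ..., sort: gen ... = _n`, and the row order and random draws will not match Stata's.

```python
import numpy as np


def simulate_three_level(K=3, J=2, I=2, beta=2.0, seed=None):
    """Hypothetical NumPy analogue of the Stata simulation above."""
    rng = np.random.default_rng(seed)

    # 3rd level (set obs K; gen k = _n; gen Uk = rnormal())
    k = np.arange(1, K + 1)
    U = rng.standard_normal(K)

    # 2nd level (expand J; by k, sort: gen j = _n; gen Vjk = rnormal())
    k, U = np.repeat(k, J), np.repeat(U, J)
    j = np.tile(np.arange(1, J + 1), K)
    V = rng.standard_normal(K * J)

    # 1st level (expand I; by k j, sort: gen i = _n; gen Eijk = rnormal())
    k, j, U, V = (np.repeat(a, I) for a in (k, j, U, V))
    i = np.tile(np.arange(1, I + 1), K * J)
    E = rng.standard_normal(K * J * I)

    # regression data; beta defaults to 2 as in the text
    x = rng.uniform(size=K * J * I)
    y = beta * x + U + V + E
    return {"k": k, "j": j, "i": i, "U": U, "V": V, "E": E, "x": x, "y": y}


d = simulate_three_level(seed=1)
print(len(d["y"]), len(np.unique(d["U"])), len(np.unique(d["V"])))  # 12 3 6
```

With the defaults this yields 12 observations, 3 distinct 3rd-level effects, and 6 distinct 2nd-level effects, the same pattern described above.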

We could estimate our multilevel RE model on this data by typing,

. xtmixed yijk xijk || k: || j:

xtmixed uses the index variables k and j to deeply understand the multilevel structure of our data. sem has no such understanding of multilevel data. What it does have is an understanding of multivariate data and a comfortable willingness to apply constraints.

Let's restructure our data so that sem can be made to understand its multilevel structure.

First, some renaming so that the results of our restructuring will be easier to interpret.

. rename Uk U
. rename Vjk V
. rename Eijk E
. rename xijk x
. rename yijk y

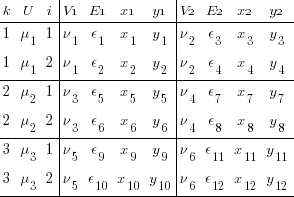

We reshape to turn our multilevel data into multivariate data that sem has a chance of understanding. First, we reshape wide on our 2nd-level identifier j. Before that, we use egen to create a unique identifier for each observation of the two groups identified by j.

. egen ik = group(i k)
. reshape wide y x E V, i(ik) j(j)

We now have a y variable for each group in j (y1 and y2). Likewise, we have two x variables, two residuals, and, most importantly, two 2nd-level random effects, V1 and V2. This is the same data; we have merely created a set of variables for every level of j. We have gone from multilevel to multivariate.

We still have a multilevel component. There are still two levels of i in our dataset. We must reshape wide again to remove any remnant of multilevel structure.

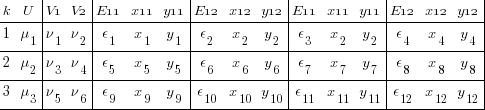

. drop ik
. reshape wide y* x* E*, i(k) j(i)

I admit that is a microscopic font, but it is the structure that is important, not the values. We now have 4 y's, one for each combination of the 2nd- and 1st-level identifiers, j and i. Likewise for the x's and E's.
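The two reshapes can be mimicked in a few lines of plain Python, using symbolic values in the spirit of the tables above. This is a hypothetical helper (`reshape_to_wide`), not how Stata's reshape is implemented; it assumes balanced data and single-digit j and i, so that names like y21 are unambiguous.

```python
def reshape_to_wide(rows):
    """Hypothetical stand-in for the two 'reshape wide' steps."""
    wide = {}
    for r in rows:
        k, j, i = r["k"], r["j"], r["i"]
        # one wide record per 3rd-level group k; U is constant within k
        rec = wide.setdefault(k, {"k": k, "U": r["U"]})
        # V is constant within (j, k): one column per j (V1, V2, ...)
        rec[f"V{j}"] = r["V"]
        # y, x, and E vary with (j, i): one column per pair (y11, y12, ...)
        for name in ("y", "x", "E"):
            rec[f"{name}{j}{i}"] = r[name]
    return [wide[k] for k in sorted(wide)]


# Symbolic long data: 3 counties x 2 schools x 2 students, as in the tables
long_rows = [
    {"k": k, "j": j, "i": i,
     "U": f"U{k}", "V": f"V{j}{k}", "E": f"E{i}{j}{k}",
     "x": f"x{i}{j}{k}", "y": f"y{i}{j}{k}"}
    for k in (1, 2, 3) for j in (1, 2) for i in (1, 2)
]
wide = reshape_to_wide(long_rows)
print(len(wide), wide[0]["y21"], wide[0]["V2"])  # 3 y121 V21
```

Column y21 of the first county holds $y_{121}$ (i = 1, j = 2, k = 1) and V2 holds $\nu_{21}$; U is carried along unchanged because it is constant within k.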

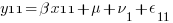

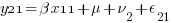

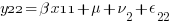

We can think of each xji yji pair of columns as representing a regression for a specific combination of j and i: y11 on x11, y12 on x12, y21 on x21, and y22 on x22. Or, more explicitly,

$$
\begin{aligned}
y_{11} &= \beta x_{11} + \mu + \nu_1 + \epsilon_{11} \\
y_{12} &= \beta x_{12} + \mu + \nu_1 + \epsilon_{12} \\
y_{21} &= \beta x_{21} + \mu + \nu_2 + \epsilon_{21} \\
y_{22} &= \beta x_{22} + \mu + \nu_2 + \epsilon_{22}
\end{aligned}
$$

where, within each row (that is, each 3rd-level group k), $\mu$ is U, $\nu_j$ is Vj, and $\epsilon_{ji}$ is Eji.

So, rather than a univariate multilevel regression with 4 nested observation sets, $(J = 2) \times (I = 2)$, we now have 4 regressions which are all related through $\mu$, and each of the two pairs is related through $\nu_j$. Oh, and all share the same coefficient $\beta$. Oh, and the $\epsilon_{ji}$ all have identical variances. Oh, and the $\nu_j$ also have identical variances. Luckily both the sem command and the SEM Builder (the GUI for sem) make setting constraints easy.
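Those constraints pin down the covariance of the four outcomes within each row, conditional on x. As a sketch in Python with NumPy (the function name `implied_cov` is hypothetical), this is the matrix that both xtmixed and the constrained sem must reproduce; rows and columns are ordered y11, y12, y21, y22.

```python
import numpy as np


def implied_cov(var_u, var_v, var_e, J=2, I=2):
    """Hypothetical helper: covariance of the J*I wide outcomes given x.

    Outcomes are ordered y11, y12, ..., yJI (j varies slowest).
    """
    labels = [(j, i) for j in range(1, J + 1) for i in range(1, I + 1)]
    n = len(labels)
    S = np.full((n, n), float(var_u))  # U enters every equation with loading 1
    for a, (ja, _) in enumerate(labels):
        for b, (jb, _) in enumerate(labels):
            if ja == jb:
                S[a, b] += var_v  # outcomes sharing the same V_j
        S[a, a] += var_e  # each outcome's own residual
    return S


# Variance estimates from the xtmixed output reported below, rounded
S = implied_cov(2.469, 1.859, 0.914)
print(np.round(S, 3))
```

With those inputs the diagonal is 5.242, two outcomes sharing a $\nu_j$ covary at 4.328, and outcomes sharing only $\mu$ covary at 2.469.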

There is one other thing we haven't addressed. xtmixed understands random effects. Does sem? Random effects are just unobserved (latent) variables, and sem clearly understands those. So, yes, sem does understand random effects.

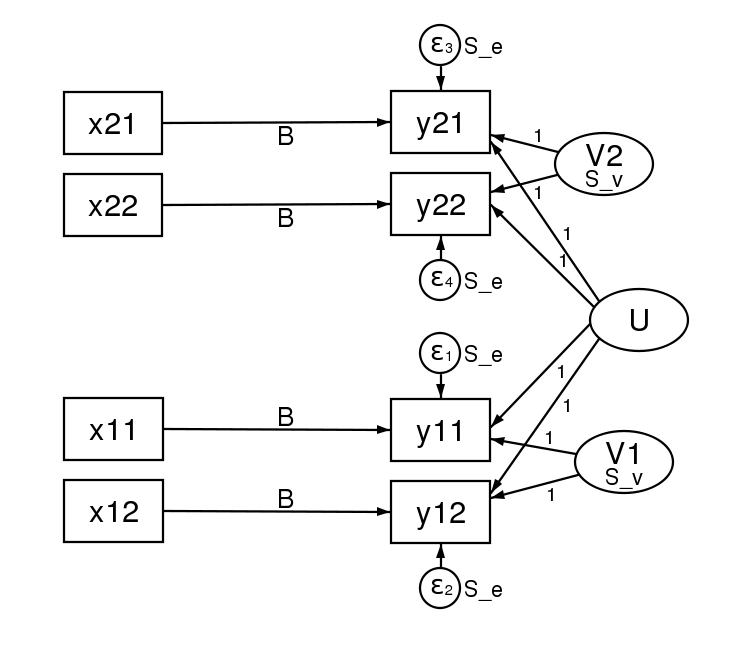

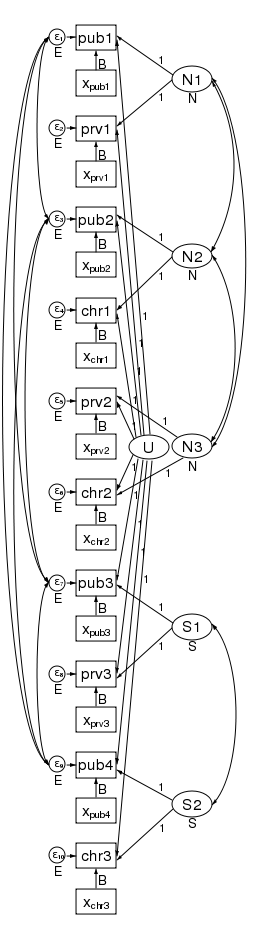

Many SEMers would represent this model by drawing a path diagram.

There is a lot of information in that diagram. Each regression is represented by one of the x boxes being connected by a path to a y box. That each of the four paths is labeled with $\beta$ means that we have constrained the regressions to have the same coefficient. All four y boxes receive input from the latent variable U (our 3rd-level random effect). The y21 and y22 boxes also receive input from the random latent variable V2 (representing our 2nd-level random effects). The other two y boxes receive input from V1 (also our 2nd-level random effects). For this to match how xtmixed handles random effects, V1 and V2 must be constrained to have the same variance. This was done in the path diagram by "locking" them to have the same variance, S_v. To match xtmixed, each of the four residuals must also have the same variance, shown in the diagram as S_e. The residuals and random-effect variables also have their paths constrained to 1. That is to say, they do not have coefficients.

We do not need any of the U, V, or E variables. We kept them only to explain how the multilevel data was restructured into multivariate data. We might "follow the money" in a criminal investigation, but with simulated multilevel data it is best to "follow the effects". Seeing how these effects were distributed in our reshaped data made it clear how they entered our multivariate model.

Just to prove that this all works, here are the results from a simulated dataset ($K = 100$ rather than the 3 that we have been using). The xtmixed results are,

. xtmixed yijk xijk || k: || j: , mle var

  (log omitted)

Mixed-effects ML regression                     Number of obs      =       400

-----------------------------------------------------------
                |   No. of       Observations per Group
 Group Variable |   Groups    Minimum    Average    Maximum
----------------+------------------------------------------
              k |      100          4        4.0          4
              j |      200          2        2.0          2
-----------------------------------------------------------

                                                Wald chi2(1)       =     61.84
Log likelihood = -768.96733                     Prob > chi2        =    0.0000

------------------------------------------------------------------------------
        yijk |      Coef.   Std. Err.      z    P>|z|     [95% Conf. Interval]
-------------+----------------------------------------------------------------
        xijk |   1.792529   .2279392     7.86   0.000     1.345776    2.239282
       _cons |    .460124   .2242677     2.05   0.040     .0205673    .8996807
------------------------------------------------------------------------------

------------------------------------------------------------------------------
  Random-effects Parameters  |   Estimate   Std. Err.     [95% Conf. Interval]
-----------------------------+------------------------------------------------
k: Identity                  |
                  var(_cons) |   2.469012   .5386108      1.610034    3.786268
-----------------------------+------------------------------------------------
j: Identity                  |
                  var(_cons) |   1.858889    .332251      1.309522    2.638725
-----------------------------+------------------------------------------------
               var(Residual) |   .9140237   .0915914      .7510369    1.112381
------------------------------------------------------------------------------
LR test vs. linear regression:       chi2(2) =   259.16   Prob > chi2 = 0.0000

Note: LR test is conservative and provided only for reference.

The sem results are,

. sem (y11 <- x11@bx _cons@c V1@1 U@1)                     ///
      (y12 <- x12@bx _cons@c V1@1 U@1)                     ///
      (y21 <- x21@bx _cons@c V2@1 U@1)                     ///
      (y22 <- x22@bx _cons@c V2@1 U@1) ,                   ///
        covstruct(_lexog, diagonal) cov(_lexog*_oexog@0)   ///
        cov( V1@S_v V2@S_v e.y11@S_e e.y12@S_e e.y21@S_e e.y22@S_e)

  (notes omitted)

Endogenous variables

Observed:  y11 y12 y21 y22

Exogenous variables

Observed:  x11 x12 x21 x22
Latent:    V1 U V2

  (iteration log omitted)

Structural equation model                       Number of obs      =       100
Estimation method  = ml
Log likelihood     = -826.63615

  (constraint listing omitted)
------------------------------------------------------------------------------
             |                 OIM
             |      Coef.   Std. Err.      z    P>|z|     [95% Conf. Interval]
-------------+----------------------------------------------------------------
Structural   |
  y11 <-     |
         x11 |   1.792529   .2356323     7.61   0.000     1.330698     2.25436
          V1 |          1   7.68e-17  1.3e+16   0.000            1           1
           U |          1   2.22e-18  4.5e+17   0.000            1           1
       _cons |    .460124    .226404     2.03   0.042     .0163802    .9038677
  -----------+----------------------------------------------------------------
  y12 <-     |
         x12 |   1.792529   .2356323     7.61   0.000     1.330698     2.25436
          V1 |          1   2.00e-22  5.0e+21   0.000            1           1
           U |          1   5.03e-17  2.0e+16   0.000            1           1
       _cons |    .460124    .226404     2.03   0.042     .0163802    .9038677
  -----------+----------------------------------------------------------------
  y21 <-     |
         x21 |   1.792529   .2356323     7.61   0.000     1.330698     2.25436
           U |          1   5.70e-46  1.8e+45   0.000            1           1
          V2 |          1   5.06e-45  2.0e+44   0.000            1           1
       _cons |    .460124    .226404     2.03   0.042     .0163802    .9038677
  -----------+----------------------------------------------------------------
  y22 <-     |
         x22 |   1.792529   .2356323     7.61   0.000     1.330698     2.25436
           U |          1  (constrained)
          V2 |          1  (constrained)
       _cons |    .460124    .226404     2.03   0.042     .0163802    .9038677
-------------+----------------------------------------------------------------
Variance     |
       e.y11 |   .9140239    .091602                        .75102    1.112407
       e.y12 |   .9140239    .091602                        .75102    1.112407
       e.y21 |   .9140239    .091602                        .75102    1.112407
       e.y22 |   .9140239    .091602                        .75102    1.112407
          V1 |   1.858889   .3323379                      1.309402    2.638967
           U |   2.469011   .5386202                      1.610021    3.786296
          V2 |   1.858889   .3323379                      1.309402    2.638967
-------------+----------------------------------------------------------------
Covariance   |
  x11        |
          V1 |          0  (constrained)
           U |          0  (constrained)
          V2 |          0  (constrained)
  -----------+----------------------------------------------------------------
  x12        |
          V1 |          0  (constrained)
           U |          0  (constrained)
          V2 |          0  (constrained)
  -----------+----------------------------------------------------------------
  x21        |
          V1 |          0  (constrained)
           U |          0  (constrained)
          V2 |          0  (constrained)
  -----------+----------------------------------------------------------------
  x22        |
          V1 |          0  (constrained)
           U |          0  (constrained)
          V2 |          0  (constrained)
  -----------+----------------------------------------------------------------
  V1         |
           U |          0  (constrained)
          V2 |          0  (constrained)
  -----------+----------------------------------------------------------------
  U          |
          V2 |          0  (constrained)
------------------------------------------------------------------------------
LR test of model vs. saturated: chi2(25)  =     22.43, Prob > chi2 = 0.6110

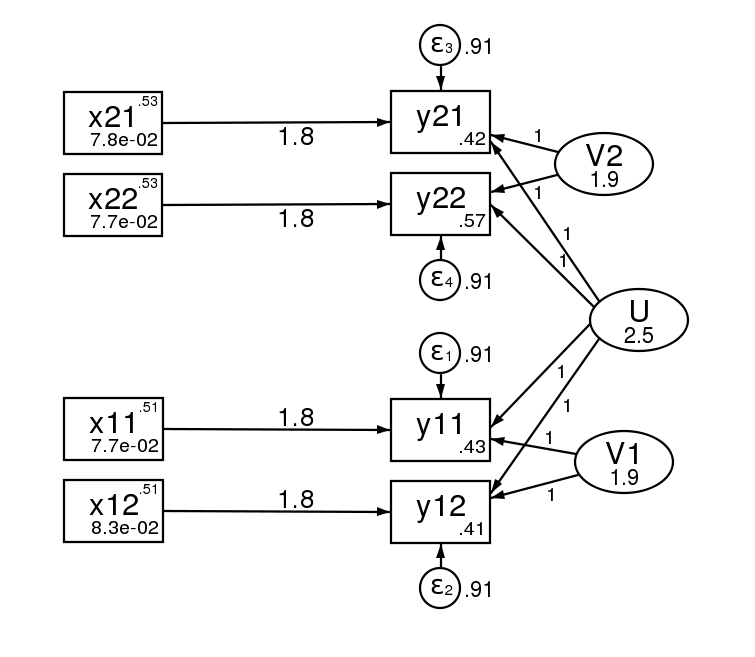

And here is the path diagram after estimation.

The standard errors from the two estimation methods are asymptotically equivalent but will differ in finite samples, as the small differences between the two outputs above show.

Sidenote: Those familiar with multilevel modeling will be wondering whether sem can handle unbalanced data, that is, a different number of observations or subgroups within groups. It can. Simply let reshape create missing values where it will, and then add the method(mlmv) option to your sem command. mlmv stands for maximum likelihood with missing values. And, as strange as it may seem, with this option the multivariate sem representation and the multilevel xtmixed representation are equivalent.
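To see what "let reshape create missing values" means, here is a small Python sketch. The helper `reshape_unbalanced` is hypothetical, and `None` stands in for Stata's missing value (.). Unlike Stata's reshape, it assumes the full J x I set of columns up front. The second county below has no observation for j = 2, i = 2, so its y22 and x22 cells come out missing; those are the gaps that, per the sidenote, method(mlmv) is meant to handle.

```python
def reshape_unbalanced(rows, J, I):
    """Hypothetical wide reshape for possibly unbalanced data.

    Every k gets a cell for each (j, i) pair in 1..J x 1..I; combinations
    that do not occur for that k stay None (Stata would store '.').
    """
    wide = {}
    for r in rows:
        rec = wide.setdefault(
            r["k"],
            {f"{v}{j}{i}": None for v in ("y", "x")
             for j in range(1, J + 1) for i in range(1, I + 1)},
        )
        for v in ("y", "x"):
            rec[f"{v}{r['j']}{r['i']}"] = r[v]
    return wide


# County 1 is complete; county 2 is missing the (j=2, i=2) observation.
rows = [
    {"k": 1, "j": 1, "i": 1, "y": 1.0, "x": 0.1},
    {"k": 1, "j": 1, "i": 2, "y": 2.0, "x": 0.2},
    {"k": 1, "j": 2, "i": 1, "y": 3.0, "x": 0.3},
    {"k": 1, "j": 2, "i": 2, "y": 4.0, "x": 0.4},
    {"k": 2, "j": 1, "i": 1, "y": 5.0, "x": 0.5},
    {"k": 2, "j": 1, "i": 2, "y": 6.0, "x": 0.6},
    {"k": 2, "j": 2, "i": 1, "y": 7.0, "x": 0.7},
]
wide = reshape_unbalanced(rows, J=2, I=2)
print(wide[2]["y22"], wide[2]["x22"])  # None None
```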

Do we care?

You may have noticed that the sem command was, well, really long. (I wrote a little loop to get all the constraints right.) You will also have noticed that there is a lot of redundant output because our SEM has so many constraints. Why would anyone go to all this trouble to do something that is so simple with xtmixed? The answer lies in all of those constraints. With sem we can relax any of those constraints we wish!
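The loop itself is not shown. As a rough stand-in, here is a hypothetical Python function (`build_sem_command`) that assembles the same sem syntax for any number of 2nd-level groups J and 1st-level units I, following the variable names produced by the two reshapes. It only builds a string of Stata syntax, and it assumes J and I are at most 9 so that names such as y21 stay unambiguous.

```python
def build_sem_command(J, I):
    """Hypothetical helper that writes the constrained sem syntax as one string."""
    if J > 9 or I > 9:
        raise ValueError("names like y{j}{i} are ambiguous for J or I > 9")
    equations, residuals = [], []
    for j in range(1, J + 1):
        for i in range(1, I + 1):
            # common slope (bx) and constant (c); V_j and U enter with loading 1
            equations.append(f"(y{j}{i} <- x{j}{i}@bx _cons@c V{j}@1 U@1)")
            # all residual variances share the label S_e
            residuals.append(f"e.y{j}{i}@S_e")
    # all 2nd-level effects share the variance label S_v
    v_terms = [f"V{j}@S_v" for j in range(1, J + 1)]
    return ("sem " + " ".join(equations)
            + ", covstruct(_lexog, diagonal) cov(_lexog*_oexog@0)"
            + " cov(" + " ".join(v_terms + residuals) + ")")


print(build_sem_command(2, 2))
```

For J = 2 and I = 2 it reproduces the command shown earlier, up to whitespace.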

Relax the constraint that the V# have the same variance, and you introduce heteroskedasticity in the 2nd-level effects. That seems a little silly when there are only two 2nd-level groups, but imagine there were 10.

Add a covariance between the V#, and you introduce correlation between the 2nd-level groups within each 3rd-level group.
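In sem terms, relaxing these constraints means no longer giving V1 and V2 the shared S_v label and no longer forcing their covariance to zero. The Python sketch below (hypothetical helper `implied_cov_relaxed`) shows only the within-row covariance this implies, written in the usual SEM form of U's contribution plus loadings times the covariance of the V's times loadings transposed plus residual variance; it is not sem syntax.

```python
import numpy as np


def implied_cov_relaxed(var_u, cov_v, var_e, I=2):
    """Hypothetical helper: implied covariance when the V_j may have unequal
    variances and nonzero covariances.

    cov_v is the J x J covariance matrix of (V1, ..., VJ). Outcomes are ordered
    y11, y12, ..., yJI. With cov_v = var_v * identity this reduces to the fully
    constrained (xtmixed-style) model.
    """
    cov_v = np.asarray(cov_v, dtype=float)
    J = cov_v.shape[0]
    n = J * I
    # Loading matrix: outcome y{j}{i} loads 1 on V_j and 0 on the other V's
    L = np.zeros((n, J))
    for j in range(J):
        L[j * I:(j + 1) * I, j] = 1.0
    # U loads 1 on every outcome; residuals are independent with common variance
    return var_u * np.ones((n, n)) + L @ cov_v @ L.T + var_e * np.eye(n)


# Unequal 2nd-level variances (2.0 and 1.0) plus a covariance of 0.5
S = implied_cov_relaxed(1.0, [[2.0, 0.5], [0.5, 1.0]], 1.0)
print(S)
```

Outcomes under V1 now have variance 4.0 while those under V2 have 3.0, and outcomes in different 2nd-level groups covary at 1.5 instead of only through U.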

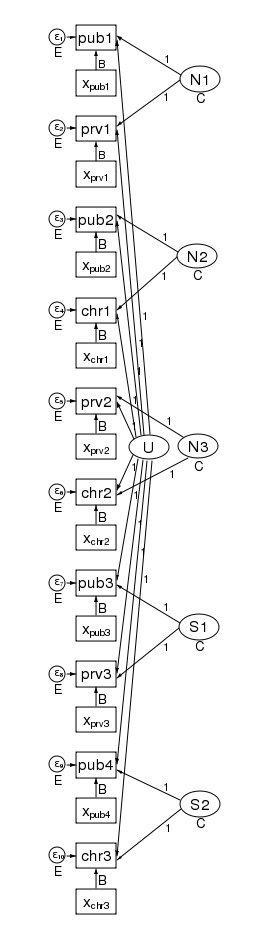

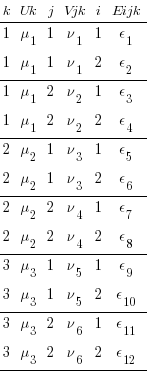

What's more, the pattern of heteroskedasticity and correlation can be arbitrary. Here is our path diagram redrawn to represent children within schools within counties, with more groups at the 2nd level.

We have 5 counties at the 3rd level and two schools within each county at the 2nd level, for a total of 10 dimensions in our multivariate regression. The diagram does not change based on the number of children drawn from each school.

Our regression paths have been arranged horizontally down the center of the diagram to leave room along the left and right for the random effects. Taken as a multilevel model, we have only a single covariate, x. Just to be clear, we could generalize this to multiple covariates by adding more boxes with covariates for each dependent variable in the diagram.

The labels are chosen carefully. The 3rd-level (county) effects N1, N2, and N3 are for northern counties, and the remaining county effects, S1 and S2, are for southern counties. There is a separate dependent variable and associated error for each school. We have 4 public schools (pub1, pub2, pub3, and pub4); 3 private schools (prv1, prv2, and prv3); and 3 church-sponsored schools (chr1, chr2, and chr3).

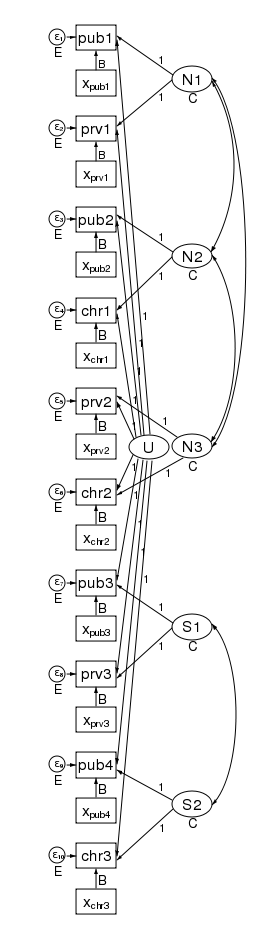

The multivariate structure seen in the diagram makes it clear that we can relax some constraints that the multilevel model imposes. Because the sem representation of the model breaks the county-level effect into a separate effect for each county, we can apply a structure to those effects. Consider the path diagram below.

We have correlated the effects for the three northern counties by drawing curved lines between them. We have also correlated the effects of the two southern counties. xtmixed does not allow these types of correlations. Had we wished, we could have constrained the correlations among the three northern counties to be the same.

We could also have allowed the northern and southern counties to have different variances. We did just that in the diagram below by constraining the northern counties' variances to be N and the southern counties' variances to be S.

In this diagram we have also correlated the errors for the four public schools. As drawn, each correlation is free to take on its own value, but we could just as easily constrain each public school to be equally correlated with all other public schools. Likewise, to keep the diagram readable, we did not correlate the private schools with each other or the church schools with each other. We could have done that.

There is one thing that xtmixed can do that sem cannot: it can put a structure on the residual correlations within the 2nd-level groups. xtmixed has a special option, residuals(), for just this purpose.

With both xtmixed and sem you get

- robust and cluster-robust SEs
- survey data

With sem you also get

- endogenous covariates
- estimation by GMM
- missing data, MAR (also called missing on observables)
- heteroskedastic effects at any level
- correlated effects at any level
- easy score tests using estat scoretests
  - are the $\beta$ coefficients truly the same across all equations/levels?
  - are effects or sets of effects uncorrelated?
  - are effects within a grouping homoskedastic?
  - …

Whether you view this rethinking of multilevel random-effects models as multivariate structural equation models (SEMs) as interesting, or merely an academic exercise, depends on whether your model calls for any of the items in the second list.