Residual connections are one of many least questioned components of contemporary Transformer design. In PreNorm architectures, every layer provides its output again right into a operating hidden state, which retains optimization secure and permits deep fashions to coach. Moonshot AI researchers argue that this normal mechanism additionally introduces a structural downside: all prior layer outputs are gathered with fastened unit weights, which causes hidden-state magnitude to develop with depth and progressively weakens the contribution of any single layer.

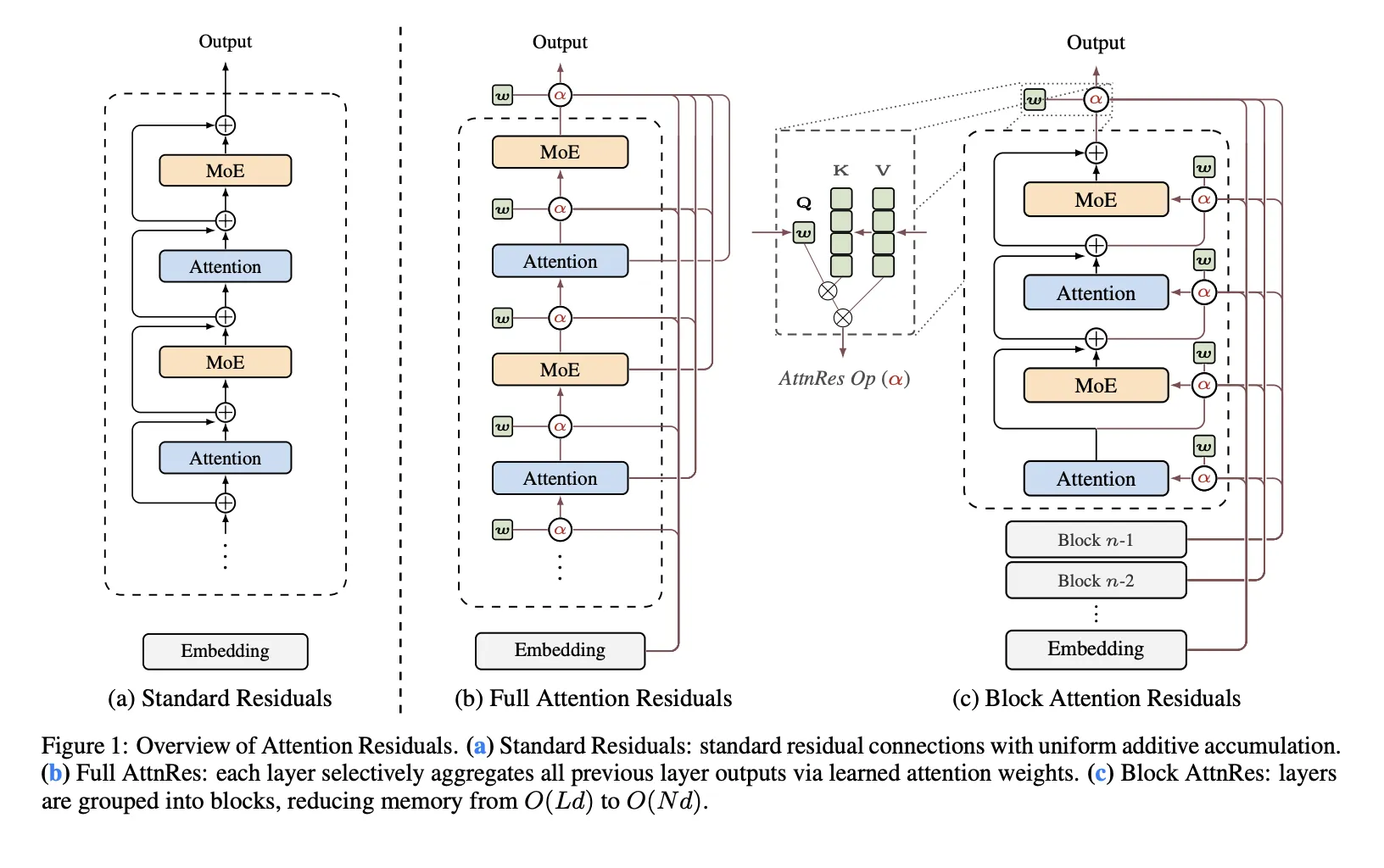

The analysis workforce proposes Consideration Residuals (AttnRes) as a drop-in alternative for traditional residual accumulation. As an alternative of forcing each layer to eat the identical uniformly blended residual stream, AttnRes lets every layer mixture earlier representations utilizing softmax consideration over depth. The enter to layer (l) is a weighted sum of the token embedding and former layer outputs, the place the weights are computed over prior depth positions fairly than over sequence positions. The core thought is easy: if consideration improved sequence modeling by changing fastened recurrence over time, the same thought might be utilized to the depth dimension of a community.

Why Normal Residuals Turn into a Bottleneck

The analysis workforce recognized three points with normal residual accumulation. First, there may be no selective entry: all layers obtain the identical aggregated state though consideration layers and feed-forward or MoE layers might profit from completely different mixtures of earlier data. Second, there may be irreversible loss: as soon as data is mixed right into a single residual stream, later layers can not selectively recuperate particular earlier representations. Third, there may be output progress: deeper layers have a tendency to supply bigger outputs to stay influential inside an ever-growing gathered state, which might destabilize coaching.

That is the analysis workforce’s primary framing: normal residuals behave like a compressed recurrence over layers. AttnRes replaces that fastened recurrence with express consideration over earlier layer outputs.

Full AttnRes: Consideration Over All Earlier Layers

In Full AttnRes, every layer computes consideration weights over all previous depth sources. The default design does not use an input-conditioned question. As an alternative, every layer has a realized layer-specific pseudo-query vector wl ∈ Rd, whereas keys and values come from the token embedding and former layer outputs after RMSNorm. The RMSNorm step is vital as a result of it prevents large-magnitude layer outputs from dominating the depth-wise consideration weights.

Full AttnRes is simple, however it will increase price. Per token, it requires O(L2 d) arithmetic and (O(Ld)) reminiscence to retailer layer outputs. In normal coaching this reminiscence largely overlaps with activations already wanted for backpropagation, however underneath activation re-computation and pipeline parallelism the overhead turns into extra vital as a result of these earlier outputs should stay out there and will should be transmitted throughout phases.

Block AttnRes: A Sensible Variant for Giant Fashions

To make the tactic usable at scale, Moonshot AI analysis workforce introduces Block AttnRes. As an alternative of attending over each earlier layer output, the mannequin partitions layers into N blocks. Inside every block, outputs are gathered right into a single block illustration, and a focus is utilized solely over these block-level representations plus the token embedding. This reduces reminiscence and communication overhead from O(Ld) to O(Nd).

The analysis workforce describes cache-based pipeline communication and a two-phase computation technique that make Block AttnRes sensible in distributed coaching and inference. This ends in lower than 4% coaching overhead underneath pipeline parallelism, whereas the repository experiences lower than 2% inference latency overhead on typical workloads.

Scaling Outcomes

The analysis workforce evaluates 5 mannequin sizes and compares three variants at every dimension: a PreNorm baseline, Full AttnRes, and Block AttnRes with about eight blocks. All variants inside every dimension group share the identical hyperparameters chosen underneath the baseline, which the analysis workforce notice makes the comparability conservative. The fitted scaling legal guidelines are reported as:

Baseline: L = 1.891 x C-0.057

Block AttnRes: L = 1.870 x C-0.058

Full AttnRes: L = 1.865 x C-0.057

The sensible implication is that AttnRes achieves decrease validation loss throughout the examined compute vary, and the Block AttnRes matches the lack of a baseline skilled with about 1.25× extra compute.

Integration into Kimi Linear

Moonshot AI additionally integrates AttnRes into Kimi Linear, its MoE structure with 48B whole parameters and 3B activated parameters, and pre-trains it on 1.4T tokens. Based on the analysis paper, AttnRes mitigates PreNorm dilution by preserving output magnitudes extra bounded throughout depth and distributing gradients extra uniformly throughout layers. One other implementation element is that each one pseudo-query vectors are initialized to zero so the preliminary consideration weights are uniform throughout supply layers, successfully lowering AttnRes to equal-weight averaging in the beginning of coaching and avoiding early instability.

On downstream analysis, the reported features are constant throughout all listed duties. It experiences enhancements from 73.5 to 74.6 on MMLU, 36.9 to 44.4 on GPQA-Diamond, 76.3 to 78.0 on BBH, 53.5 to 57.1 on Math, 59.1 to 62.2 on HumanEval, 72.0 to 73.9 on MBPP, 82.0 to 82.9 on CMMLU, and 79.6 to 82.5 on C-Eval.

Key Takeaways

- Consideration Residuals replaces fastened residual accumulation with softmax consideration over earlier layers.

- The default AttnRes design makes use of a realized layer-specific pseudo-query, not an input-conditioned question.

- Block AttnRes makes the tactic sensible by lowering depth-wise reminiscence and communication from O(Ld) to O(Nd).

- Moonshot analysis teamreports decrease scaling loss than the PreNorm baseline, with Block AttnRes matching about 1.25× extra baseline compute.

- In Kimi Linear, AttnRes improves outcomes throughout reasoning, coding, and analysis benchmarks with restricted overhead.

Try Paper and Repo. Additionally, be at liberty to observe us on Twitter and don’t neglect to affix our 120k+ ML SubReddit and Subscribe to our Publication. Wait! are you on telegram? now you may be a part of us on telegram as effectively.