Neither Chrome, Safari, nor Firefox has shipped new features in the last couple of weeks, but fear not, because leading this issue of What’s !important are some of the web development industry’s best educators with, frankly, some killer content.

Maintaining video state across different pages using view transitions

Chris Coyier demonstrates how to maintain a video’s state across different pages using CSS view transitions. He notes that this is fairly easy to do with same-page view transitions, but with multi-page view transitions you’ll need to leverage JavaScript’s pageswap event to save information about the video’s state in sessionStorage as a JSON string (works with audio and iframes too), and then use that information to restore the state on pagereveal. Yes, there’s a tiiiiny bit of audio stutter because we’re technically faking it, but it’s still super neat.
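To make the save-and-restore idea a bit more concrete, here’s a minimal sketch of how it could look. This isn’t Chris’s actual code: the storage key and the `saveVideoState` and `restoreVideoState` helpers are hypothetical names of my own, and I’m assuming one video per page and that only the playback position and paused flag matter.

```javascript
// Hypothetical storage key; not taken from the original demo.
const STORAGE_KEY = "video-state";

// Save the parts of the video's state we care about as a JSON string.
function saveVideoState(video, storage) {
  const state = { currentTime: video.currentTime, paused: video.paused };
  storage.setItem(STORAGE_KEY, JSON.stringify(state));
}

// Read the JSON string back and apply it to the video on the new page.
// Returns false when there is nothing to restore.
function restoreVideoState(video, storage) {
  const raw = storage.getItem(STORAGE_KEY);
  if (raw === null) return false;

  const state = JSON.parse(raw);
  video.currentTime = state.currentTime;
  if (!state.paused) {
    // play() returns a promise that can reject if autoplay is blocked.
    Promise.resolve(video.play()).catch(() => {});
  }
  return true;
}

// Only wire up the events when running in a browser.
if (typeof window !== "undefined") {
  // Assumption: a single <video> element per page.
  window.addEventListener("pageswap", () => {
    const video = document.querySelector("video");
    if (video) saveVideoState(video, sessionStorage);
  });

  window.addEventListener("pagereveal", () => {
    const video = document.querySelector("video");
    if (video) restoreVideoState(video, sessionStorage);
  });
}
```

Passing the storage object in as an argument keeps the two helpers easy to try out with a stand-in object, and the same pattern should carry over to audio elements.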

Also, CodePen, which I’m sure you already know was founded by Chris, announced a private beta of CodePen 2.0, which you can request to be a part of. One of the benefits of CodePen 2.0 is that you can create actual projects with multiple files, which means that you can create view transitions in CodePen. Pretty cool!

How to ‘name’ media queries

Kevin Powell shows us how to leverage CSS cascade layers to ‘name’ media queries. This technique isn’t as effective as @custom-media (or even container style queries, as one commenter suggested), but until those are supported in all web browsers, Kevin’s trick is pretty creative.

Adam Argyle reminded us last week that @custom-media is being trialed in Firefox Nightly (no word on container style queries yet), but if you get up to speed on CSS cascade layers, you can utilize Kevin’s trick in the meantime.

Vale’s CSS reset

I do love a good CSS reset. It doesn’t matter how many of them I read, I always discover something awesome and add it to my own reset. From Vale’s CSS reset I stole svg:not([fill]) { fill: currentColor; }, but there’s much more to take away from it than that!

How browsers work

If you’ve ever wondered how web browsers actually work (how they get IP addresses, make HTTP requests, parse HTML, build DOM trees, render layouts, and paint), the recently-shipped How Browsers Work by Dmytro Krasun is an incredibly interesting, interactive read. It really makes you wonder about the bottlenecks of web development languages and why certain HTML, CSS, and JavaScript features are the way they are.

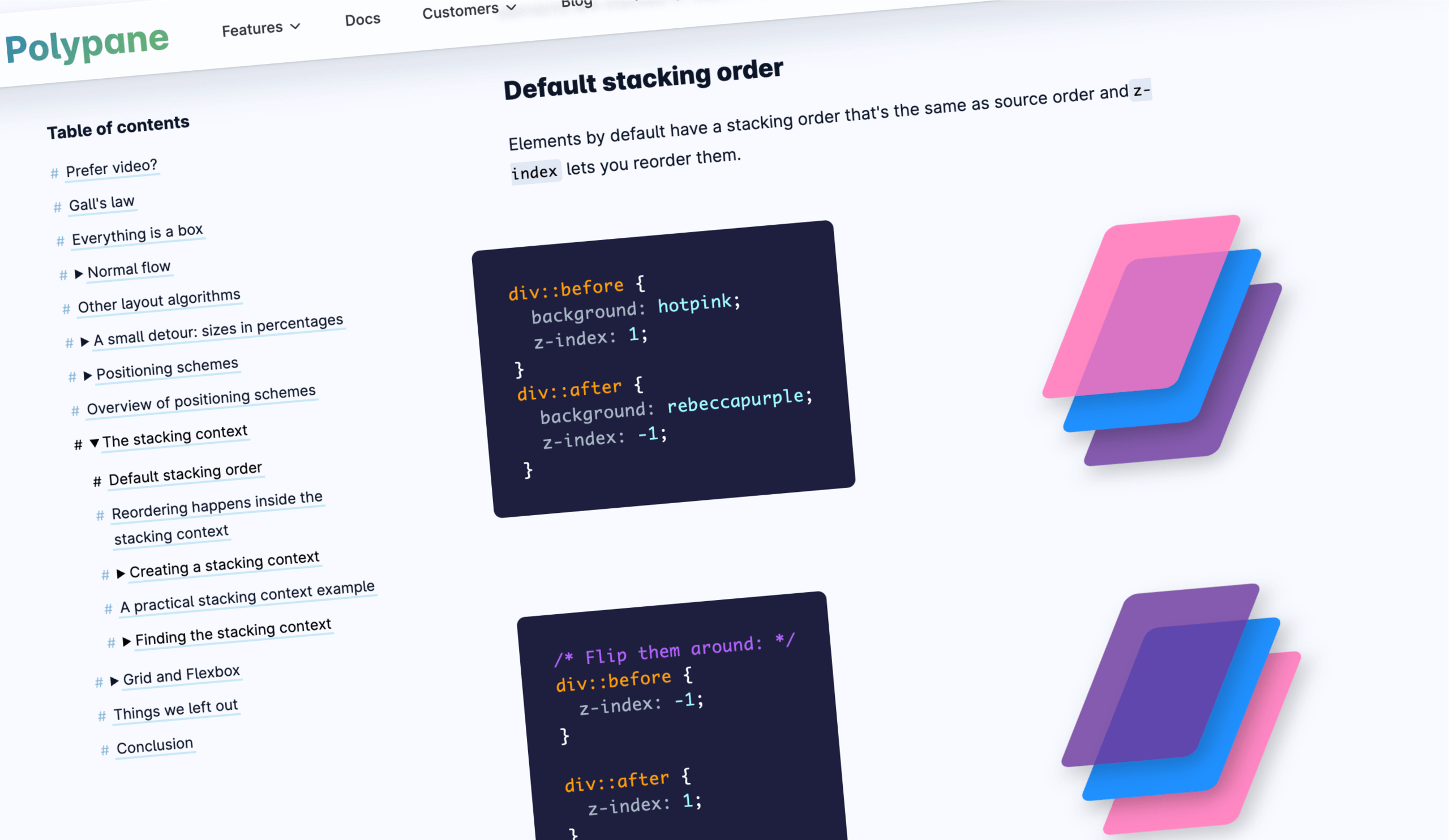

How CSS layout works

In addition, Polypane explains the fundamentals of CSS layout, including the box model, lines and baselines, positioning schemes, the stacking context, grid layout, and flexbox. If you’re new to CSS, I think these explanations will really help you to click with it. If you’re an old-timer (like me), I still think it’s important to learn how these foundational concepts apply to newer CSS features, especially since CSS is evolving exponentially these days.

CSS masonry is (probably) just around the corner

Speaking of layouts, Jen Simmons clarifies when we’ll be able to use display: grid-lanes, otherwise known as CSS masonry. While it’s not supported in any web browser yet, Firefox, Safari, and Chrome/Edge are all trialing it, so that could change pretty quickly. Jen provides some polyfills, anyway!

If you want to get ahead of the curve, you can let Sunkanmi Fafowora walk you through display: grid-lanes.

Theming animations using relative color syntax

If you’re obsessed with design systems and organization, and you tend to think of illustration and animation as impressive but messy art forms, Andy Clarke’s article on theming animations using CSS relative color syntax will really help you to bridge the gap between art and logic. If CSS variables are your jam, then this article is definitely for you.

Modals vs. pages (and everything in-between)

Modals? Pages? Lightboxes? Dialogs? Tooltips? Understanding the different types of overlays and knowing when to use each one is still pretty confusing, especially since newer CSS features like popovers and interest invokers, while incredibly useful, are making the landscape more cloudy. In short, Ryan Neufeld clears up the whole modal vs. page thing and even provides a framework for deciding which type of overlay to use.

Text scaling support is being trialed in Chrome Canary

You know when you’re dealing with text that’s been increased or decreased at the OS-level? Well…if you’re a web developer, maybe you don’t. After all, this feature doesn’t work on the web! However, Josh Tumath tells us that Chrome Canary is trialing a meta tag that makes web browsers respect this OS setting. If you’re curious, it’s , but Josh goes into more detail and it’s worth a read.

See you next time!