Image by Author

# Introduction

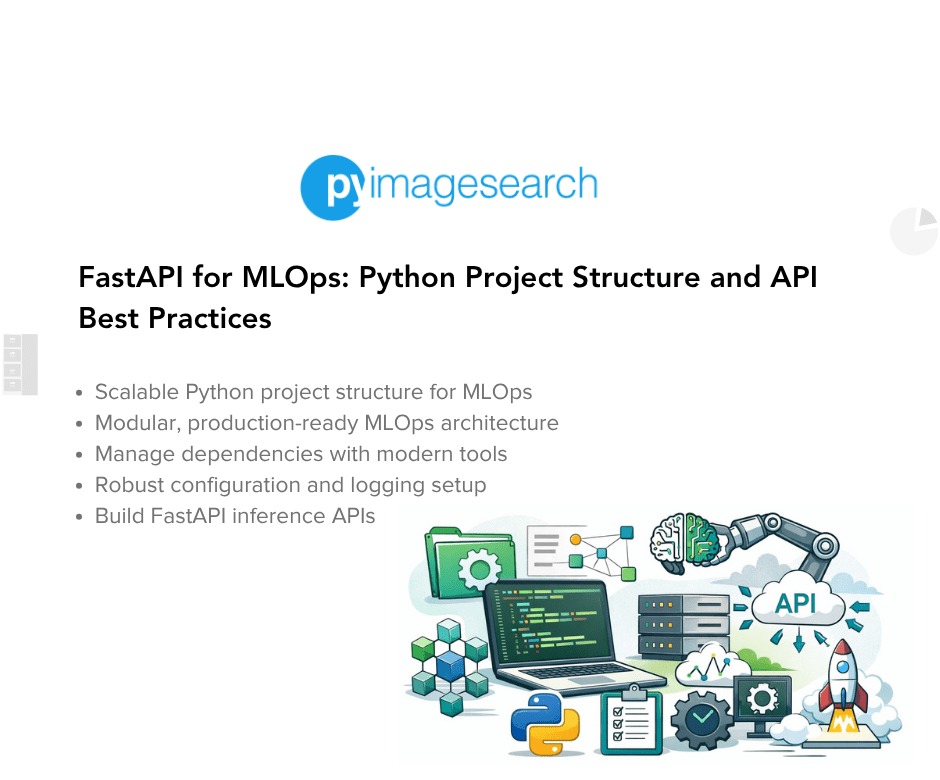

When we work with data scientists preparing for interviews, we see this constantly: prompt in, answer out, move on. No one ever reviews anything, and no one ever thinks about why.

What about the companies shipping the most innovative projects? They’ve found a new way to collaborate. They’ve built environments in which people and AI collaborate on decisions. AI generates options, surfaces patterns, and flags what needs attention. It shows its work so you can verify. Humans review, add context, and make the final call. Neither party simply gives orders to the other.

# Observing Real-World Applications

This isn’t just theory; it’s happening now.

// Transforming Scientific Research and Healthcare

AlphaFold generated protein structure predictions that would otherwise require years of laboratory research. However, working out what those predictions mean, why they matter, and which experiments to run next still requires human expertise.

The biotech company Insilico Medicine took it even further. Traditional drug development takes four to five years just to identify a promising compound. Insilico Medicine built an AI platform that generates and screens thousands of potential drug molecules, predicting which ones are most likely to work. Next, medicinal chemists review the best candidates, refine the structure, and design experiments to validate them. The results were significant: the time required to discover a lead compound decreased by roughly 75%, from four or five years to just 18 months.

The same pattern exists in pathology. PathAI analyzes tissue samples to diagnose diseases like cancer. Pathologists then review the AI findings and add their own clinical experience to make a diagnosis. According to a Beth Israel Deaconess Medical Center study, the result was 99.5% accurate cancer detection, compared to 96% when the pathologist reviewed the slides independently. Additionally, the time required to review slides decreased significantly. AI catches patterns missed due to fatigue; humans provide clinical context.

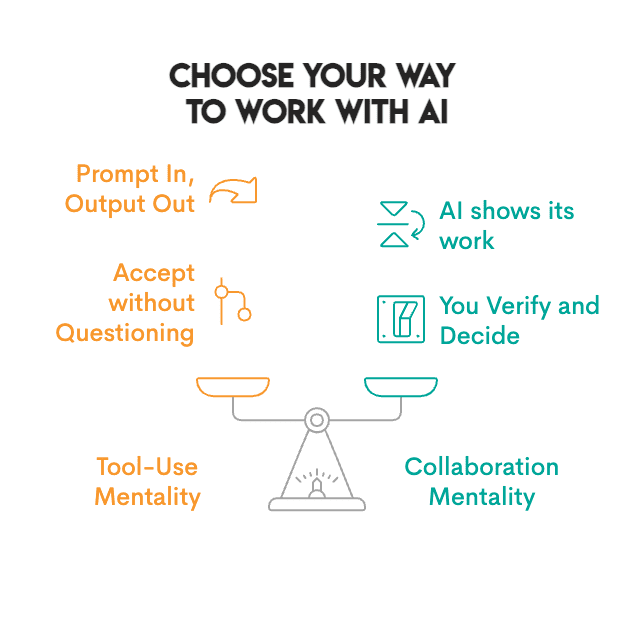

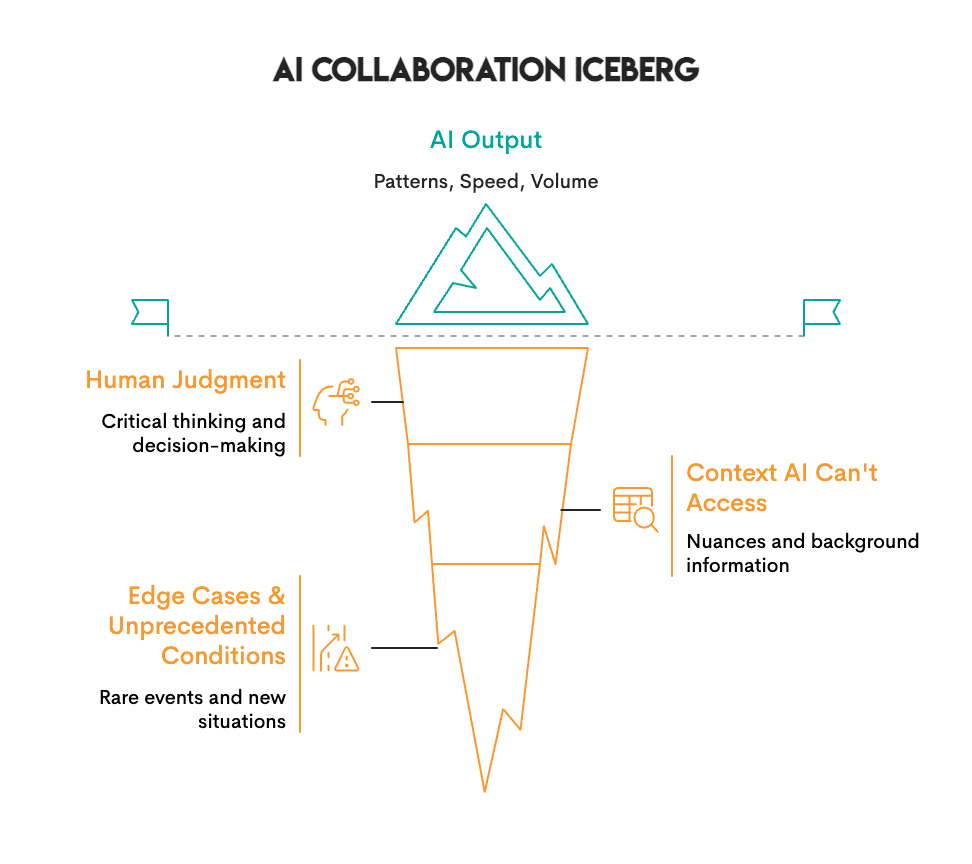

What we have learned is that AI finds patterns; it excels at volume and speed. People excel at judgment and context; they decide whether those patterns matter.

AlphaFold predicted protein structures in hours that would take labs years, but scientists still decide what those structures mean and which experiments to run next. Insilico’s AI generated thousands of drug molecules, but chemists decided which ones were worth synthesizing. PathAI flags suspicious cells at scale, but pathologists add the clinical context that determines the diagnosis.

In each case, neither AI nor people alone achieved the result. The combination did.

// Enhancing Business Decisions

AI can accomplish in hours what took teams weeks: reviewing thousands of contracts, analyzing risk across global markets, and identifying patterns in usage data. All of this can be done quickly, but deciding what to do with that information remains a human responsibility.

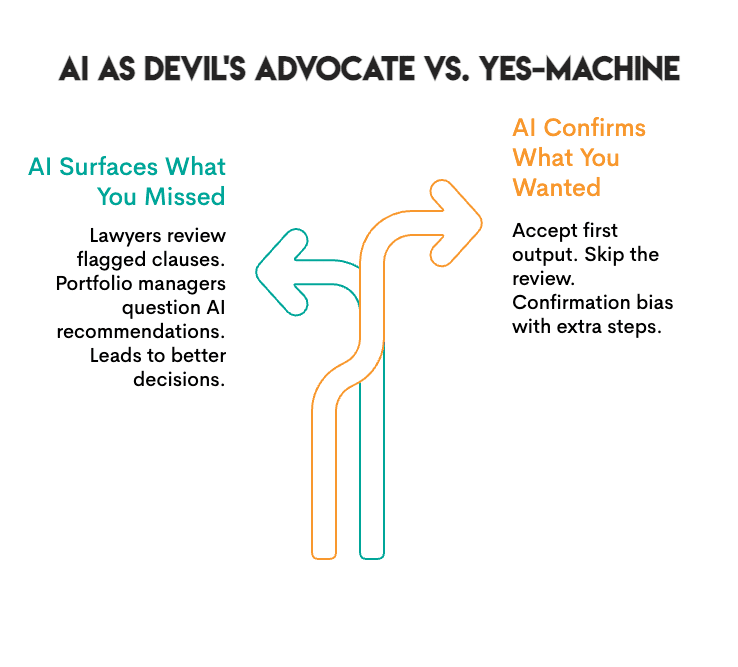

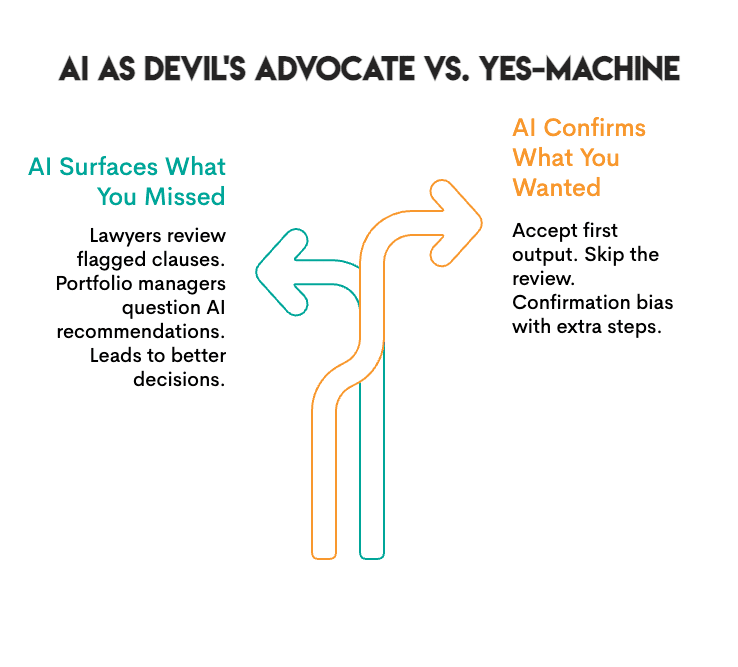

For example, JPMorgan Chase’s legal teams spent 360,000 hours each year manually reviewing contracts, a process that was slow, costly, and prone to errors. They created a solution called COiN, an artificial intelligence platform designed to read legal documents using natural language processing (NLP) and machine learning. COiN can extract key points from legal documents, identify unusual or questionable clauses, and categorize provisions within seconds. However, lawyers still review the items flagged by the system. As a result, JPMorgan can process contracts much faster than before, has reduced its compliance errors by 80%, and lets its lawyers spend their time negotiating and developing strategy rather than repeatedly reading contracts.

Another example is BlackRock, the world’s largest asset manager, which controls assets worth a total of $21.6 trillion for institutional clients and individual investors. At this scale, BlackRock must analyze millions of risk scenarios across multiple global markets, which cannot be done by hand. To solve this problem, BlackRock developed Aladdin (Asset, Liability, Debt and Derivative Investment Network), an AI-based platform that collects and processes large amounts of market data and identifies potential risks before they occur. There is still a human component: BlackRock portfolio managers review Aladdin’s analytics and then make all allocations. The results show that risk analysis that previously took days is now performed in real time. Additionally, BlackRock’s portfolios built using Aladdin’s analytics, combined with human judgment, outperformed both pure algorithmic and pure human approaches. Currently, over 200 financial institutions license the Aladdin platform for their own operations.

The pattern is clear: AI surfaces options and information at scale. But it will not tell you when you’re wrong; you’ll have to figure that out yourself. JPMorgan’s lawyers still review what COiN flags, and BlackRock’s portfolio managers still make the final decisions.

# Reviewing Collaborative AI Tools

Not all AI tools are built for collaboration. Some deliver an output as a “black box,” while others were created to collaborate with you. The list below highlights tools that support collaboration:

// Using General-Purpose Assistants

- Claude / ChatGPT: These are conversational AIs that give feedback on your reasoning, flag ambiguity, and will tell you when they are unsure. They are the closest tools to actual back-and-forth collaboration.

// Conducting Research and Analysis

- Elicit: This tool searches academic papers and extracts findings, showing you the evidence behind claims so you can decide whether to accept the information.

- Consensus: This platform synthesizes scientific literature and displays areas of agreement and disagreement among researchers so that you can see all sides of a debate.

- Perplexity: This provides search results with citations. Each claim links to a verifiable source.

// Optimizing Coding and Development

- GitHub Copilot: This tool suggests code completions. You review, accept, or modify; nothing runs unless you approve it.

- Cursor: This is an AI-native code editor. It displays diffs of proposed changes so you see exactly what the AI wants to change before it happens.

- Replit: This provides explanations for code, suggests fixes, and assists with debugging. You remain in control of what is deployed.

// Advancing Data Science Workflows

- Julius: This tool analyzes data and creates visualizations. It displays the code that was used to create the visualization so you can audit the methodology.

- Hex: This is a collaborative data workspace with AI assistance. It was created for teams where humans and AI work together on analysis.

- DataRobot: This is an automated machine learning (AutoML) platform that provides explanations of model decisions. It displays feature importance and prediction confidence so you understand the underlying logic.

// Enhancing Writing and Communication

- Notion AI: This tool is integrated into your workspace for drafts, summaries, and brainstorms, but you choose what stays.

- Grammarly: This provides suggested edits with explanations. You either accept or reject each individual edit.

What makes these tools collaborative is that they show their work. They let you verify their findings and don’t demand that you accept their output. That’s the difference between a tool and a collaborator.

# Measuring Collaborative Success

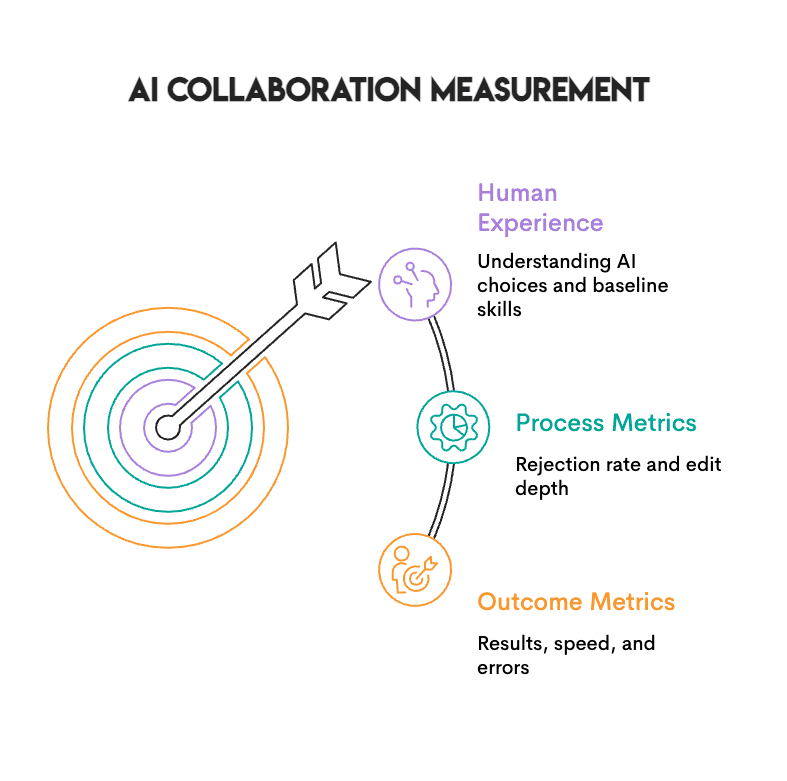

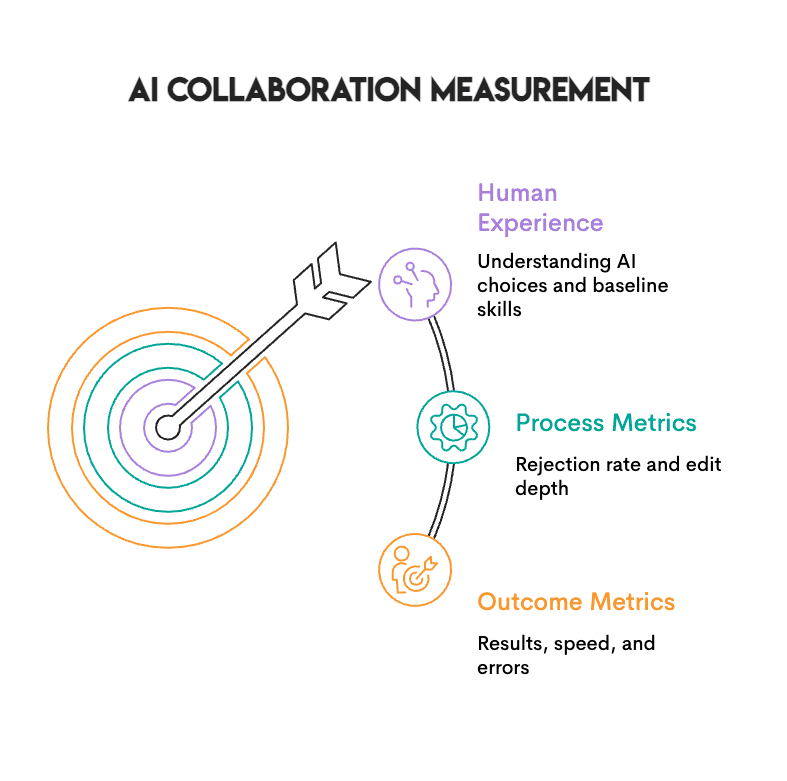

Three types of metrics help you evaluate whether human-AI collaboration is actually working:

- Outcome metrics are easy to track. Are you seeing better results? Faster turnaround? Fewer errors? You should track these.

- Process metrics are even more important. If you are never rejecting AI outputs, that’s not a sign of high-quality AI; it’s a sign that you have stopped thinking.

- Human skill matters as well. Can you produce these results without AI? Do you really understand why the AI chose what it did, or are you just going along with it because it sounds intelligent?

A good test: if you are always accepting the first output, that’s closer to rubber-stamping than collaborating. Working without AI occasionally helps you keep a baseline, so you know what is your work and what is the tool’s.
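
One way to make the process metrics concrete is to log each review decision and compute a few rates from the log. The snippet below is a minimal sketch under stated assumptions: the `Review` record, the decision labels, and the 0.95 threshold are hypothetical choices made for illustration, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """Hypothetical log entry: one AI output that a person reviewed."""
    decision: str       # assumed labels: "accepted", "modified", "rejected"
    first_output: bool  # True if the decision was made on the AI's first attempt


def process_metrics(reviews: list[Review]) -> dict[str, float]:
    """Compute rejection, modification, and first-output acceptance rates."""
    total = len(reviews)
    if total == 0:
        # No reviews logged yet, so every rate is reported as zero.
        return {
            "rejection_rate": 0.0,
            "modification_rate": 0.0,
            "first_output_acceptance_rate": 0.0,
        }
    rejected = sum(r.decision == "rejected" for r in reviews)
    modified = sum(r.decision == "modified" for r in reviews)
    first_accepted = sum(
        r.decision == "accepted" and r.first_output for r in reviews
    )
    return {
        "rejection_rate": rejected / total,
        "modification_rate": modified / total,
        "first_output_acceptance_rate": first_accepted / total,
    }


def looks_like_rubber_stamping(metrics: dict[str, float],
                               threshold: float = 0.95) -> bool:
    """Flag a log where nearly every first output is accepted unchanged.

    The threshold is an arbitrary illustrative value, not a recommendation.
    """
    return metrics["first_output_acceptance_rate"] >= threshold


if __name__ == "__main__":
    log = [
        Review("accepted", True),
        Review("accepted", True),
        Review("modified", True),
        Review("rejected", True),
    ]
    metrics = process_metrics(log)
    print(metrics)
    print(looks_like_rubber_stamping(metrics))
```

A log like this only covers the process side. Outcome metrics and your unassisted baseline still need to be tracked separately.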

# Implementing Efficient Practices

Teams that get this right tend to follow a few common practices:

- Establish clear roles: Decide what role you play and what role the AI plays. One common setup has the AI generate options while you choose the best one. This lets you use AI’s ability to explore many possibilities while keeping the final decision with you.

- Build in checkpoints: Don’t let AI outputs proceed directly to the next phase without a brief pause. You don’t need formal approval, but you should take a minute to think about why the AI chose what it did. If you can’t articulate the reason, don’t accept the output.

- Demand transparency: Use tools that show their work, including the code they generated, the sources they used, and the changes they proposed. If you can’t see how the AI reached its output, you can’t verify it.

- Stay sharp: Periodically work without AI. This is not a statement of resistance, but rather a baseline to compare against. You want to know what your unassisted work looks like, and you want to be able to perform if the tools fail.
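
The checkpoint and transparency practices can be expressed as a small gate in a workflow. This is only a sketch: `AIOutput`, `shows_work`, and `checkpoint` are hypothetical names introduced here for illustration, and a real team would adapt the rule to its own process.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIOutput:
    """Hypothetical container for something an AI tool produced."""
    content: str
    shows_work: bool  # True if sources, code, or a diff came with the output


def checkpoint(output: AIOutput, rationale: Optional[str]) -> bool:
    """Return True only if the output may move on to the next phase.

    Two conditions from the practices above are checked:
    1. The tool showed its work, so the output can be verified.
    2. The reviewer wrote down why the AI chose what it did.
    """
    if not output.shows_work:
        return False
    if rationale is None or not rationale.strip():
        return False
    return True


if __name__ == "__main__":
    draft = AIOutput(content="Proposed query rewrite", shows_work=True)
    print(checkpoint(draft, "Removes a redundant join on the orders table"))
    print(checkpoint(draft, ""))
```

The gate does not judge whether the rationale is correct. It only enforces the pause, which is the point of a checkpoint.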

# Concluding Thoughts

Human-AI teaming represents a real shift. We are learning to interact with systems that provide input, rather than just executing commands.

Making it work requires new skills, such as knowing when to rely on AI and when to question it. It involves evaluating processes to know whether they produce results or simply feel productive. Most importantly, it requires staying sharp enough to catch errors when they happen.

Teams that develop ways to collaborate with AI produce better results. They identify errors sooner and consider options they might not otherwise have thought of. Teams that don’t develop these skills tend either to use AI in such a limited way that they miss the potential benefits, or to become so dependent that they cannot function without it.

# Answering Common Questions

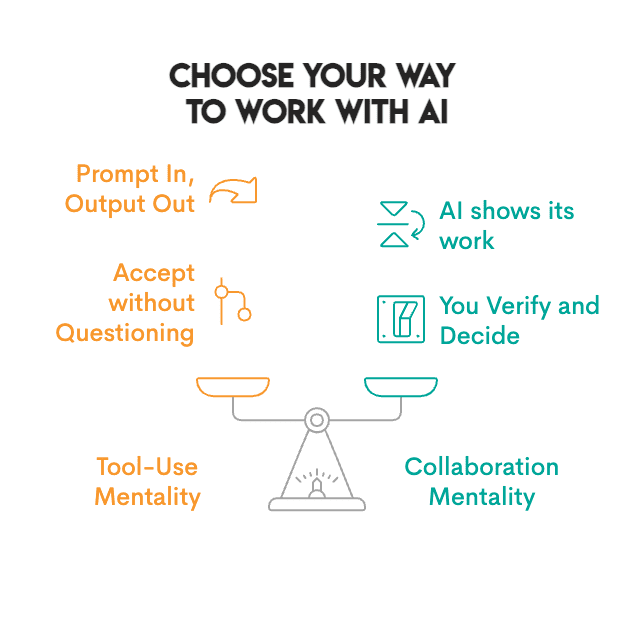

// What’s the difference between using AI as a tool versus collaborating with it?

Tool use involves giving a command to the AI, which it executes while you accept the output. Collaboration involves the AI showing its work so you can verify and decide. You can see the sources, the code, and the reasoning, and then choose whether to accept, modify, or reject the output. If you can’t see how the AI reached its conclusion, you can’t truly collaborate.

// How can I avoid becoming too reliant on AI?

Periodically work without AI and notice whether you can articulate why the AI produced the output it did. If you find that you are routinely accepting the first output provided, or if your performance suffers significantly when working without AI, you are likely overly reliant on it.

// Are companies evaluating this in interviews?

Yes. Interviewers now watch how candidates interact with AI. Those who accept every suggestion without questioning show poor judgment, while those who review, question, and modify AI outputs show good judgment.

Nate Rosidi is a data scientist and works in product strategy. He’s also an adjunct professor teaching analytics, and is the founder of StrataScratch, a platform helping data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.