We’ve all used single AI models, whether it’s a bot answering questions or an algorithm working seamlessly in the background. But can you imagine what happens when many AI systems come together to boost efficiency? That’s what a multi-agent system in AI does.

A multi-agent system in AI, also called MAS, is an artificial intelligence system that consists of many agents interacting with each other and with their environment to achieve their individual or collective goals. In contrast to single-agent systems, where one primary agent makes the decisions, multi-agent systems let agents work through cooperation, competition, and coordination with each other.

While multi-agent systems are complicated to build, they provide a significant edge to individual entrepreneurs who may be struggling to compete with larger organizations. The key, then, is to simplify the system so it works for you, exactly the way you want it. This article discusses how to do that, along with the benefits and challenges of multi-agent AI. Read on!


How Does Multi-Agent Intelligence Work?

According to Roots Analysis, AI agent applications in customer service and virtual assistants are predicted to account for 78.65% of the market share by 2035. Worth a deep dive, don’t you think?

Now that we have established what multi-agent AI systems are, let’s dive into their makeup and how they work.

The foundation of MAS is artificial intelligence agents. These, in essence, are systems or programs that can autonomously perform tasks requested by the user or another system.

How do they function? Large language models (LLMs) are the powerhouses behind them. Agents tap into natural language processing techniques to understand and respond to user inputs, and they follow a strategic, step-by-step process to solve problems. When they determine that an external tool is needed, they alert the user so the required action can be taken.
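The step-by-step behavior described above can be sketched roughly as follows. This is a toy illustration, not a real agent framework: `SimpleAgent`, `Step`, and the `order_db` tool name are hypothetical, and a hard-coded plan stands in for what an LLM would normally produce.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    description: str
    needs_tool: Optional[str] = None  # name of an external tool, if any (hypothetical)


@dataclass
class SimpleAgent:
    """Toy agent: a fixed plan stands in for LLM-driven reasoning."""
    name: str
    log: List[str] = field(default_factory=list)

    def plan(self, request: str) -> List[Step]:
        # Assumption: a real agent would ask an LLM to generate this plan.
        return [
            Step(f"understand request: {request}"),
            Step("look up order status", needs_tool="order_db"),
            Step("draft reply"),
        ]

    def run(self, request: str) -> List[str]:
        for step in self.plan(request):
            if step.needs_tool:
                # Flag the tool need to the user instead of acting silently.
                self.log.append(
                    f"ALERT user: '{step.needs_tool}' needed to {step.description}"
                )
            else:
                self.log.append(f"done: {step.description}")
        return self.log
```

Calling `SimpleAgent("helper").run("where is my order?")` returns three log entries, the second of which is the alert about the `order_db` tool.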

Broken down into its pieces, multi-agent intelligence consists of four main components:

- Agents: As discussed earlier, these are individual parts of the system that have their own abilities, knowledge, and goals. Agents can range from simple assistant bots to advanced robots that can learn and adapt. Agents are the blood that courses through the veins of MAS.

- Shared Environment: This is the space in which the agents operate. It could be a physical place, like a factory, or a virtual place, like a digital platform. Either way, the environment determines how the agents act and interact.

- Interactions: Once the right agents are placed in the most appropriate environment, they interact with each other through various methods, such as collaboration or competition. These exchanges are essential for the system’s functioning and improvement.

- Communication: Agents often need to communicate to share information, negotiate, and coordinate their actions.
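To make the four components concrete, here is a minimal sketch under simplifying assumptions. The `Environment` and `Agent` classes, the message format, and the warehouse-style goal names are all invented for illustration; real systems use far richer state and messaging.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Shared space: world state plus a message board every agent can read."""
    state: dict = field(default_factory=dict)
    messages: list = field(default_factory=list)

    def post(self, sender: str, content: str) -> None:
        self.messages.append((sender, content))


@dataclass
class Agent:
    name: str
    goal: str  # key in the environment state this agent wants set to True

    def act(self, env: Environment) -> None:
        # Interaction: skip work another agent has already announced as done.
        if any(content == f"done:{self.goal}" for _, content in env.messages):
            return
        env.state[self.goal] = True
        # Communication: tell the other agents what was done.
        env.post(self.name, f"done:{self.goal}")


env = Environment()
agents = [
    Agent("packer", "order_packed"),
    Agent("backup_packer", "order_packed"),
    Agent("shipper", "order_shipped"),
]
for agent in agents:
    agent.act(env)
```

After the loop, `env.state` holds both goals as `True`, and only `packer` and `shipper` have posted messages: `backup_packer` read the shared board and stood down.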

The two most important behaviors of multi-agent intelligence are:

- Flocking: Here, agents have a single aim and some organization or supervisor to coordinate their behavior.

- Swarming: This is where the simple, decentralized interactions of simple AI agents come together collectively. Shared context is the crux of this complex collaboration.
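The contrast between the two behaviors is mostly about who decides. The sketch below is a sequential toy simulation, with hypothetical function names `flock` and `swarm`: in the first a supervisor hands out tasks, while in the second each worker applies one local rule ("claim the next open task") against a shared context.

```python
def flock(tasks, workers):
    """Centralized: a supervisor assigns each task round-robin."""
    assignment = {w: [] for w in workers}
    for i, task in enumerate(tasks):
        assignment[workers[i % len(workers)]].append(task)
    return assignment


def swarm(tasks, workers):
    """Decentralized: workers claim tasks themselves from shared context."""
    shared_context = {"open": list(tasks), "claimed": {}}
    while shared_context["open"]:
        for w in workers:
            if not shared_context["open"]:
                break
            # Local rule: take the next open task; no supervisor involved.
            task = shared_context["open"].pop(0)
            shared_context["claimed"].setdefault(w, []).append(task)
    return shared_context["claimed"]
```

With tasks `["a", "b", "c"]` and workers `["x", "y"]`, both functions end up with the same split in this toy case. The difference lies in the control structure: `flock` needs the coordinator, while `swarm` only needs the shared context.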

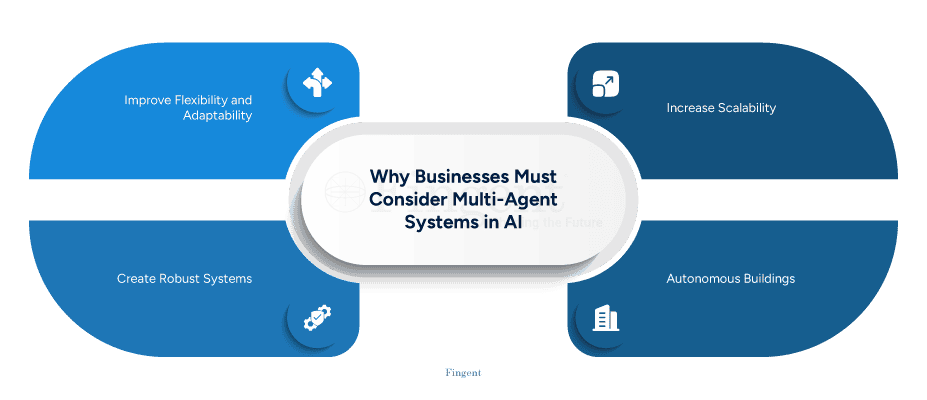

Business Benefits of Multi-Agent Systems

Hands down, multi-agent AI systems can solve, and have solved, many intricate real-world tasks, with ease and efficiency at that. At its root, the main benefit of MAS is that it makes complex processes more intelligent and efficient. Here are some reasons why multi-agent systems work so well for businesses.

1. Adds flexibility and adaptability

Research indicates that, thanks to AI, 81% of companies react faster to market shifts. MAS can add to this benefit because it can easily adapt to business models, needs, and goals.

2. Extra hands to increase scalability

If the complexity of a problem increases, additional AI agents can be seamlessly introduced to take on new tasks or responsibilities. This level of scalability makes MAS suitable for a wide range of applications and dynamic environments.

3. Creates a robust system

Multi-agent systems improve fault tolerance. This means that if one AI component fails or malfunctions, another takes over without missing a beat. This ensures continuity for the MAS, which can be vital for industries like healthcare and finance.
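One simple way to picture this takeover is a failover loop, sketched below. The helper `run_with_failover` and the two example agents are hypothetical, and the primary's failure is simulated; production systems would add health checks, retries, and monitoring.

```python
from typing import Callable, Sequence


def run_with_failover(task: str, agents: Sequence[Callable[[str], str]]) -> str:
    """Try each agent in order and return the first successful result."""
    errors = []
    for agent in agents:
        try:
            return agent(task)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{getattr(agent, '__name__', 'agent')}: {exc}")
    raise RuntimeError("all agents failed: " + "; ".join(errors))


def primary_agent(task: str) -> str:
    raise TimeoutError("primary unavailable")  # simulated malfunction


def standby_agent(task: str) -> str:
    return f"standby handled '{task}'"


result = run_with_failover(
    "triage patient intake form", [primary_agent, standby_agent]
)
```

Here `result` is `"standby handled 'triage patient intake form'"`: the primary raised, and the standby picked up the same task. If every agent fails, the function raises a `RuntimeError` listing each failure.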

4. Domain specialization

The key ingredient in the efficiency of multi-agent systems is delegation. Each agent is assigned a specific domain of expertise. In contrast, single-agent systems need one agent to multitask and handle tasks across various domains. In multi-agent systems, each agent focuses on its own distinct task. Focus means more efficiency and a reduced risk of manual errors.
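Delegation of this kind can be pictured as a small router that hands each task to a specialist. The sketch below assumes a naive keyword match; the `SPECIALISTS` table, the `KEYWORDS` mapping, and the `route` function are illustrative names only, and a real system would more likely use a classifier or an LLM to pick the domain.

```python
# Hypothetical specialists: each handles exactly one domain.
SPECIALISTS = {
    "billing": lambda task: f"[billing agent] resolved: {task}",
    "shipping": lambda task: f"[shipping agent] resolved: {task}",
}

# Assumption: simple keyword-to-domain mapping stands in for real intent detection.
KEYWORDS = {"invoice": "billing", "refund": "billing", "delivery": "shipping"}


def route(task: str) -> str:
    """Delegate a task to the specialist whose keyword appears in it."""
    lowered = task.lower()
    for keyword, domain in KEYWORDS.items():
        if keyword in lowered:
            return SPECIALISTS[domain](task)
    return f"[unrouted] no specialist for: {task}"
```

For example, `route("Refund for order 12")` goes to the billing agent and `route("Late delivery")` to the shipping agent, while a task with no matching keyword is reported as unrouted.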


Challenges of Multi-Agent Systems

Just as every aspect of artificial intelligence has its fair share of challenges, there are several hurdles in designing and implementing multi-agent intelligence, including:

1. Agent malfunctions

Foundation models are a type of artificial intelligence model trained on massive, diverse datasets to perform a wide range of general tasks. They are then adapted to specific uses through techniques like fine-tuning, prompting, and transfer learning. Multi-agent systems built on the same foundation model can share the same weaknesses. This can cause a system-wide failure of all agents involved. It also leaves the system vulnerable to adversarial attacks.

2. Coordination complexity

This is perhaps the greatest challenge in developing multi-agent systems: the complexity of creating agents that can coordinate and negotiate with one another. This cooperation is vital for a multi-agent system to function at full potential.

3. Unpredictable behavior

Some agents that are set to perform autonomously and independently in decentralized networks can exhibit conflicting or unpredictable behavior. This can make it difficult to detect and manage issues.
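As a rough illustration of what detecting such conflicts might involve, the sketch below flags resources on which agents propose different actions. The function `find_conflicts` and the `(agent, resource, action)` proposal format are assumptions made for this example; real conflicts are often subtler than a direct disagreement over one resource.

```python
from collections import defaultdict


def find_conflicts(proposals):
    """Return resources for which agents proposed more than one distinct action.

    proposals: iterable of (agent, resource, action) tuples (hypothetical format).
    """
    actions_by_resource = defaultdict(set)
    for _agent, resource, action in proposals:
        actions_by_resource[resource].add(action)
    return sorted(r for r, actions in actions_by_resource.items() if len(actions) > 1)


proposals = [
    ("pricing_agent", "sku-1", "raise_price"),
    ("promo_agent", "sku-1", "lower_price"),
    ("stock_agent", "sku-2", "reorder"),
]
conflicts = find_conflicts(proposals)
```

In this example `conflicts` is `["sku-1"]`, since the pricing and promotion agents disagree about the same product. A check like this could run before any action is executed, with flagged resources sent for review.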

How do you deal with these challenges?

Fingent Can Help!

Fingent can help organizations implement multi-agent systems by offering custom AI software development, cloud solutions, and expertise in designing and deploying intricate AI systems. Fingent’s expertise in AI can help businesses create specialized, distinct, and autonomous AI agents that are programmed to collaborate and solve complex problems. These agents also manage workflows and automate processes at scale.

Fingent designs and implements workflows for AI agents to ensure harmonious collaboration and efficient execution of tasks. We incorporate human oversight and intervention for critical workflows. We also help create the necessary infrastructure, such as MCP servers, to connect and manage AI agents and their interactions. Finally, Fingent uses multi-agent systems to automate and optimize complex business processes, leading to greater efficiency and cost savings.