Banks have been using AI for a while now: flagging fraud, crunching credit scores, and personalizing offers. But Agentic AI in Financial Services? That’s a whole new game. It doesn’t just follow instructions. It sets its own goals, makes strategic decisions, and adjusts dynamically, like a financial analyst with intuition, speed, and zero downtime.

If traditional AI is your calculator, Agentic AI is your CFO.

In a sector defined by risk, regulation, and razor-thin margins, the emergence of agentic systems marks a turning point. We’re not talking about marginal gains or fancy dashboards. We’re talking about structural change across asset management, compliance, customer engagement, and credit decisioning.

But here’s a hot take: If you’re still using AI just to automate workflows, you’re already behind. The leaders aren’t just automating; they’re delegating.

So ask yourself: would you trust an AI to make a million-dollar lending decision on your behalf?


What Is Agentic AI, and How Is It Transforming the Financial Services Industry?

Agentic AI refers to artificial intelligence systems capable of autonomous decision-making, goal-setting, and adaptation without needing constant human supervision. That’s a big deal in financial services, because it means AI isn’t just supporting back-office processes. It’s beginning to run critical processes with context-awareness and real-time optimization, all at exceptional speed.

A report by McKinsey highlights that agentic systems could improve productivity by up to 30%, especially in areas like customer onboarding, risk assessment, and portfolio management.

Here’s how it’s already transforming the industry:

- In asset management, agentic AI acts like your sharpest portfolio manager, minus the coffee breaks. No manual hustle. Just smart, automated moves.

- In lending, decisions that used to take hours now take milliseconds. Agentic systems crunch structured and unstructured data (credit history, bank statements, even sentiment), then deliver faster, fairer loan outcomes. It’s speed without bias.

- In compliance, it’s like having a 24/7 watchdog with a law degree. Agentic AI tracks regulation shifts, flags suspicious patterns, and adapts to new policies before your compliance team even hits refresh. No more scrambling when auditors show up.

This isn’t experimental anymore. If your bank still relies on manual decision chains, the real risk might not be in adopting Agentic AI; it’s in ignoring it.


What Are the Benefits of Agentic AI in the Financial Sector?

The biggest advantage of Agentic AI in financial services is simple: better decisions, made faster, with less human drag.

Agentic AI doesn’t just process data; it interprets intent, adapts to new signals, and takes initiative. That’s a huge leap in a world where timing and trust are everything.

By 2028, Deloitte says AI could slash software investment costs by 20% to 40%. Do it right, and banks could save up to $1.1 million per engineer.

- Faster decisions, zero drama: Fraud alerts? Loan approvals? Agentic systems handle them in real time. No more batch queues or red tape.

- Personalization on autopilot: These AIs know what customers want before they do. Dynamic offers, tailored nudges, frictionless onboarding, all done.

- Compliance that never clocks out: Agentic AI in banking and finance can watch regulations 24/7. It spots policy shifts and stops breaches before they happen.

- Costs down, speed up: What takes a human hours, agents do in seconds. Now scale that across thousands of tasks. That’s efficiency with a capital E.

- AI with a strategy hat: This isn’t reactive AI. It thinks ahead, optimizing portfolios and forecasting liquidity. Basically, your tireless junior strategist, minus the all-nighters.

Here’s the question: Are your human teams spending hours making decisions that an AI agent could resolve in seconds? Because in finance, slow decisions are expensive decisions.

Top Use Cases

Here are the top five real-world applications of AI-powered financial services driven by agentic systems:

1. Autonomous Portfolio Rebalancing

Robo-advisors powered by agentic AI are now able to make micro-adjustments to portfolios based on market swings, client sentiment, and long-term goals, without waiting for human review. Platforms like Wealthfront use AI to keep investment portfolios in check.
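To make the idea of automated micro-adjustments concrete, here is a minimal sketch of drift-based rebalancing. It is an illustration only, not how Wealthfront or any specific platform works. The function name `rebalance_orders`, the 5% `drift_threshold`, and the assumption that target weights sum to 1 are all hypothetical choices made for this example.

```python
from typing import Dict


def rebalance_orders(
    holdings: Dict[str, float],
    targets: Dict[str, float],
    drift_threshold: float = 0.05,  # hypothetical tolerance: 5 percentage points
) -> Dict[str, float]:
    """Return buy (+) / sell (-) amounts per asset, or {} if no trade is needed.

    holdings: current market value per asset.
    targets:  desired portfolio weight per asset (assumed to sum to 1).
    """
    total = sum(holdings.values())
    if total <= 0:
        return {}

    # Drift = current weight minus target weight for every targeted asset.
    drifts = {
        asset: holdings.get(asset, 0.0) / total - weight
        for asset, weight in targets.items()
    }

    # Stay put while every asset is within tolerance.
    if max(abs(d) for d in drifts.values()) <= drift_threshold:
        return {}

    # Otherwise trade each asset back to its target weight.
    return {asset: round(-d * total, 2) for asset, d in drifts.items()}


if __name__ == "__main__":
    orders = rebalance_orders(
        {"stocks": 7000.0, "bonds": 3000.0},
        {"stocks": 0.6, "bonds": 0.4},
    )
    print(orders)
```

A production agent would also weigh taxes, trading costs, and client goals before acting; this sketch covers only the threshold check that triggers a rebalance.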

2. Dynamic Fraud Detection

Rather than flagging predefined red flags, agentic AI in banking and finance learns the user’s behavioral fingerprint. It can detect anomalous activity in seconds, even if it’s never seen that pattern before.
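As a rough illustration of a behavioral fingerprint, the sketch below compares a new transaction with the same customer's own spending history instead of a fixed rule. It uses a simple z-score on one feature (the amount), a deliberately simplified stand-in for the multi-signal models a real system would use. The function name `is_anomalous` and the `z_limit` of 3 are assumptions made for this example.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(
    history: Sequence[float],
    amount: float,
    z_limit: float = 3.0,  # hypothetical cut-off: 3 standard deviations
) -> bool:
    """Flag a transaction that deviates strongly from this user's own history."""
    if len(history) < 2:
        # Not enough data to learn a baseline; do not flag.
        return False

    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # The user always spends the same amount; any change is unusual.
        return amount != baseline

    z_score = abs(amount - baseline) / spread
    return z_score > z_limit


if __name__ == "__main__":
    past_amounts = [20.0, 25.0, 22.0, 30.0, 18.0, 27.0]
    print(is_anomalous(past_amounts, 26.0))
    print(is_anomalous(past_amounts, 500.0))
```

Because the baseline is computed per customer, the same amount can be routine for one account and suspicious for another, which is the point of profiling behavior rather than relying on predefined thresholds.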

3. AI-Driven Credit Underwriting

Traditional scoring models use fixed criteria. Agentic systems combine traditional and alternative data (like transaction history, geolocation, and even tone in customer communication) to build nuanced borrower profiles.

4. Regulatory Change Management

Instead of manually interpreting thousands of pages of new compliance updates, agentic systems ingest and act on them automatically: triggering workflows, updating documentation, and training staff through personalized AI tutors.

5. Personalized AI Agents for HNIs

Some private banks are offering bespoke AI financial agents that act on behalf of high-net-worth individuals: handling alerts, rebalancing portfolios, generating reports, and even booking meetings with human advisors. These agents learn preferences and adjust strategies over time, just like a human relationship manager would.


Common FAQs

Q: How is Agentic AI transforming financial services?

A: Agentic AI doesn’t just predict risks; it anticipates them. It doesn’t just personalize; it preempts. McKinsey says the prize is massive: $1 trillion in annual value for global banking, thanks to sharper decisions, smarter workflows, and leaner operations.

This isn’t an “innovation lab” experiment anymore. It’s core strategy. It’s in underwriting, fraud detection, investment strategies, and compliance monitoring, and it’s changing how banks think, operate, and compete.

Q: What are the applications of Agentic AI in banking?

A: Agentic AI powers autonomous decision-making across the banking value chain.

Key use cases include:

- Autonomous customer onboarding

- Real-time fraud prevention

- Portfolio optimization for wealth clients

- Credit decisioning using alternative data

- Dynamic compliance monitoring

- Conversational AI agents that can think and act

Unlike traditional AI, which waits for input, agentic models initiate actions based on context and intent. They function more like intelligent teammates than static tools.

Q: Is AI safe to use in financial decision-making?

A: It can be, but only when governed properly. Agentic AI doesn’t just automate; it acts. And with that autonomy come new responsibilities: traceability, auditability, and fairness. Good intentions mean little if AI decisions are a black box. Use explainable frameworks so every action can be traced and trusted.

Q: How does Agentic AI improve customer experience in finance?

A: By turning banking into more of a relationship than a mere transaction. Agentic AI allows for hyper-personalization: tailored offers, timely notifications, adaptive spending analysis, and rapid support. It can predict customer wants, respond to choices, and even handle issues before the customer asks for help. An agentic system might recognize a customer’s international travel and immediately adjust fraud detection limits, notify them of foreign exchange rates, or recommend travel insurance, all automatically.

That’s not just good CX. That’s loyalty, built in.

Q: Could Agentic AI be the key to financial compliance efficiency?

A: Definitely, and it’s already in progress.

Agentic AI can analyze new regulations, align them with internal policies, and automatically initiate updates throughout systems. It continuously performs checks, identifies anomalies immediately, and produces audit-ready logs automatically.

Q: What does Agentic AI refer to?

A: Agentic AI denotes AI systems that work with a degree of freedom. They can establish objectives, make choices, adjust to new circumstances, and take action without needing human prompts. They don’t just adhere to rules; they find solutions.

This concept goes beyond automation. Agentic AI mimics human reasoning and initiative, allowing financial institutions to offload entire decision chains, not just isolated tasks.

Q: What’s the role of Agentic AI in banking?

A: The core function? Delegation with confidence.

Agentic AI in finance functions as a battalion of relentless junior analysts. It performs fraud reviews, optimizes investment tactics, and executes quick, data-informed choices. No delays. No burnout. Just consistent performance across millions of transactions. It cuts human bottlenecks and keeps things moving, fast and fair.

Q: What are the Agentic AI-related risks and challenges in finance?

A: Here’s the honest truth: agentic AI can be brilliant, and also brittle.

Decisions can lack transparency, making it tough to trace logic, which is bad news for compliance and reputation. If trained on biased data, agents may reinforce unfair practices in lending or fraud checks. They’re also vulnerable to attacks, especially in high-stakes financial environments. And the more we rely on them, the more human oversight can fade, which is dangerous when edge cases hit.

Best Practices to Begin with AI Agents in Finance

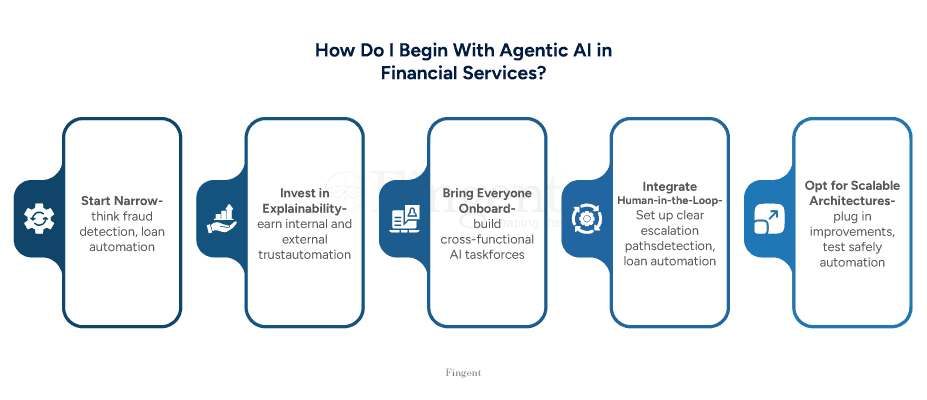

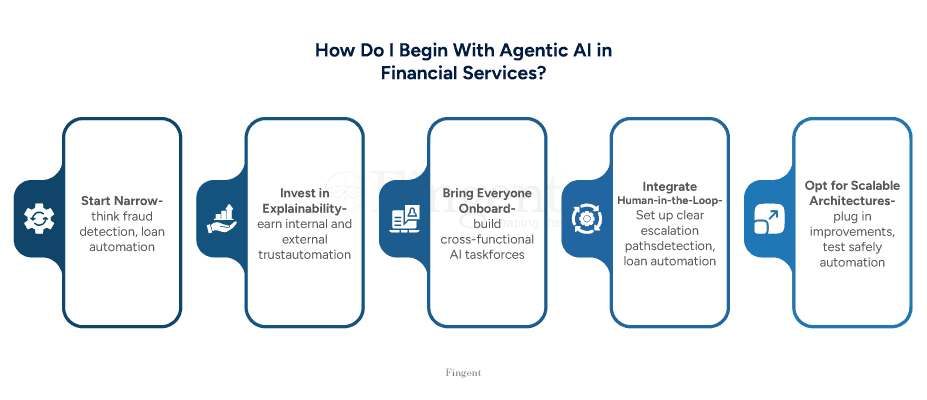

You don’t jump into agentic AI the way you’d test a chatbot. This isn’t just plug-and-play tech; it’s strategic infrastructure. Always keep the goals and strengths of Agentic AI at the forefront and play to those strengths. Think high-impact use cases. Establish cross-functional task forces. Build scalable, modular architectures. That is what will get you the most benefit from Agentic AI.

Here are the best practices that separate smart adopters from expensive mistakes:

1. Start with Narrow, High-Impact Use Cases

Don’t boil the ocean. Begin with agentic pilots where the business case is clear: think fraud detection, loan automation, or KYC. Prove value. Then scale.

2. Invest in Explainability from Day One

Agentic AI must earn internal and external trust. Ensure all decisions are auditable and interpretable. That’s not optional; it’s regulatory survival.
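One way to picture "auditable" in practice is a tamper-evident decision log, sketched below. Each entry records the agent, the action, and the reasons behind it, and is chained to the previous entry by a SHA-256 hash so that later edits become detectable. The helper names `append_entry` and `verify`, and the entry fields, are assumptions made for this example and not a regulatory standard. A real log would also carry timestamps and live in write-once storage.

```python
import hashlib
import json
from typing import Any, Dict, List


def _digest(body: Dict[str, Any]) -> str:
    """Hash an entry body deterministically (keys sorted before hashing)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(
    log: List[Dict[str, Any]], agent: str, action: str, reasons: List[str]
) -> Dict[str, Any]:
    """Append a decision record linked to the previous record's hash."""
    body = {
        "agent": agent,
        "action": action,
        "reasons": reasons,  # human-readable factors behind the decision
        "prev_hash": log[-1]["hash"] if log else "",
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: List[Dict[str, Any]]) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    audit_log: List[Dict[str, Any]] = []
    append_entry(audit_log, "credit-agent", "approve_loan", ["stable income", "low utilization"])
    append_entry(audit_log, "fraud-agent", "hold_payment", ["unusual amount"])
    print(verify(audit_log))
```

Storing the reasons next to each action is what lets a reviewer reconstruct why a decision was made, and the hash chain gives some assurance that the record was not rewritten afterwards.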

3. Build Cross-Functional AI Taskforces

Bring together data scientists, compliance officers, finance leads, and customer experience heads. Why? Because deploying AI agents is everyone’s job.

4. Combine Human-in-the-Loop Governance

Give AI agents autonomy, but within smart boundaries. Set up clear escalation paths for when agents hit a wall. Don’t leave them guessing.
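A minimal sketch of such boundaries is shown below: the agent executes on its own only when it is confident and the amount is within a set limit, and everything else is routed to a human reviewer with a stated reason. The `Decision` fields, the `route` function, and both thresholds are hypothetical values chosen for illustration; each institution would set its own.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str        # e.g. "approve_loan"
    amount: float      # monetary value at stake
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


def route(
    decision: Decision,
    max_auto_amount: float = 50_000.0,  # hypothetical autonomy limit
    min_confidence: float = 0.9,        # hypothetical confidence floor
) -> str:
    """Decide whether the agent may act alone or must escalate to a human."""
    if decision.confidence < min_confidence:
        return "escalate:low_confidence"
    if decision.amount > max_auto_amount:
        return "escalate:amount_over_limit"
    return "auto_execute"


if __name__ == "__main__":
    print(route(Decision("approve_loan", 12_000.0, 0.97)))
    print(route(Decision("approve_loan", 1_000_000.0, 0.99)))
    print(route(Decision("approve_loan", 12_000.0, 0.55)))
```

Returning a reason with every escalation gives the human reviewer context, so neither the agent nor the reviewer is left guessing.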

5. Opt for Scalable, Modular Architectures

Be sure to pick scalable, modular architectures. That way, you can plug in improvements, test safely, and grow without breaking what already works.

How Can Fingent Help?

Fingent brings more than AI capability; we bring business clarity.

Our approach to agentic AI in financial services is grounded in one principle: strategy before software. We don’t just throw models at problems. We diagnose what matters, design what scales, and deploy what works.

Here’s how we help you navigate and win with agentic AI:

- Use Case Identification with Measurable ROI

We work with your stakeholders to pinpoint the highest-leverage agentic opportunities: those that cut costs, improve margins, or elevate experience. Fast.

- Custom AI Agent Development

Need an agent that adapts to your risk models? Or one that acts on portfolio thresholds? We design and build autonomous agents that speak your business language, not generic code.

- Trust-First Architecture

All our deployments include explainability frameworks, fairness checks, and built-in compliance mapping, so your AI earns internal trust and passes external scrutiny.

- Integration with Your Existing Stack

Whether you’re on Salesforce, Temenos, or a custom core system, we integrate cleanly. No forklift upgrades. No system sprawl.

AI agents are the future. If you have yet to embrace them for your financial services, now is the time to act. Connect with our experts today and explore your opportunities with Agentic AI in financial services.