This post is co-written with Ranjit Rajan, Abdullahi Olaoye, and Abhishek Sawarkar from NVIDIA.

AI’s next frontier isn’t merely smarter chat-based assistants; it’s autonomous agents that reason, plan, and execute across entire systems. But to accomplish this, enterprise developers need to move from prototypes to production-ready AI agents that scale securely. This challenge grows as enterprise problems become more complex, requiring architectures where multiple specialized agents collaborate to accomplish sophisticated tasks.

Building AI agents in development differs fundamentally from deploying them at scale. Developers face a chasm between prototype and production, struggling with performance optimization, resource scaling, security implementation, and operational monitoring. Typical approaches leave teams juggling multiple disconnected tools and frameworks, making it difficult to maintain consistency from development through deployment with optimal performance. That’s where the powerful combination of Strands Agents, Amazon Bedrock AgentCore, and NVIDIA NeMo Agent Toolkit shines. You can use these tools together to design sophisticated multi-agent systems, orchestrate them, and scale them securely in production with built-in observability, agent evaluation, profiling, and performance optimization. This post demonstrates how to use this integrated solution to build, evaluate, optimize, and deploy AI agents on Amazon Web Services (AWS) from initial development through production deployment.

Foundation for enterprise-ready agents

The open source Strands Agents framework simplifies AI agent development through its model-driven approach. Developers create agents using three components:

- Foundation models (FMs) such as Amazon Nova, Claude by Anthropic, and Meta’s Llama

- Tools (over 20 built-in, plus custom tools using Python decorators)

- Prompts that guide agent behavior

The framework includes built-in integrations with AWS services such as Amazon Bedrock and Amazon Simple Storage Service (Amazon S3), local testing support, continuous integration and continuous delivery (CI/CD) workflows, multiple deployment options, and OpenTelemetry observability.

Amazon Bedrock AgentCore is an agentic platform for building, deploying, and operating effective agents securely at scale. It has composable, fully managed services:

- Runtime for secure, serverless agent deployment

- Memory for short-term and long-term context retention

- Gateway for secure tool access by transforming APIs and AWS Lambda functions into agent-compatible tools and connecting to existing Model Context Protocol (MCP) servers

- Identity for secure agent identity and access management

- Code Interpreter for secure code execution in sandbox environments

- Browser for fast, secure web interactions

- Observability for comprehensive operational insights to trace, debug, and monitor agent performance

- Evaluations for continuously inspecting agent quality based on real-world behavior

- Policy to keep agents within defined boundaries

These services, designed to work independently or together, abstract the complexity of building, deploying, and operating sophisticated agents. They work with open source frameworks and models while delivering enterprise-grade security and reliability.

Agent evaluation, profiling, and optimization with NeMo Agent Toolkit

NVIDIA NeMo Agent Toolkit is an open source framework designed to help developers build, profile, and optimize AI agents regardless of their underlying framework. Its framework-agnostic approach means it works seamlessly with Strands Agents, LangChain, LlamaIndex, CrewAI, and custom enterprise frameworks. In addition, different frameworks can interoperate when they’re connected in the NeMo Agent Toolkit.

The toolkit’s profiler provides complete agent workflow analysis that tracks token usage, timing, workflow-specific latency, throughput, and run times for individual agents and tools, enabling targeted performance improvements. Built on the toolkit’s evaluation harness, it includes Retrieval Augmented Generation (RAG)-specific evaluators (such as answer accuracy, context relevance, response groundedness, and agent trajectory) and supports custom evaluators for specialized use cases. The automated hyperparameter optimizer systematically discovers optimal settings for parameters such as temperature, top_p, and max_tokens, maximizing accuracy, groundedness, and context relevance while minimizing token usage and latency and optimizing for other custom metrics. This automated approach profiles your complete agent workflows, identifies bottlenecks, and uncovers optimal parameter combinations that manual tuning might miss. The toolkit’s GPU sizing calculator alleviates guesswork by simulating agent latency and concurrency scenarios and predicting GPU infrastructure requirements for production deployment.

The toolkit’s observability integration connects with popular monitoring services including Arize Phoenix, Weights & Biases Weave, Langfuse, and OpenTelemetry-supported systems such as Amazon Bedrock AgentCore Observability, creating a continuous feedback loop for ongoing optimization and maintenance.

Real-world implementation

This example demonstrates a knowledge-based agent that retrieves and synthesizes information from web URLs to answer user queries. Built using Strands Agents with integrated NeMo Agent Toolkit, the solution is containerized for quick deployment in Amazon Bedrock AgentCore Runtime and takes advantage of Bedrock AgentCore services, such as AgentCore Observability. Additionally, developers have the flexibility to integrate with fully managed models in Amazon Bedrock, models hosted in Amazon SageMaker AI, containerized models in Amazon Elastic Kubernetes Service (Amazon EKS), or other model API endpoints. The overall architecture is designed for a streamlined workflow, moving from agent definition and optimization to containerization and scalable deployment.

The following architecture diagram illustrates an agent built with Strands Agents integrating NeMo Agent Toolkit deployed in Amazon Bedrock AgentCore.

Agent development and evaluation

Start by defining your agent and workflows in Strands Agents, then wrap it with NeMo Agent Toolkit to configure components such as a large language model (LLM) for inference and tools. Refer to the Strands Agents and NeMo Agent Toolkit integration example in GitHub for a detailed setup guide. After configuring your environment, validate your agent logic by running a single workflow from the command line with an example prompt:

nat run --config_file examples/frameworks/strands_demo/configs/config.yml --input "How do I use the Strands Agents API?"

The following is the truncated terminal output:

Workflow Result:

['The Strands Agents API is a flexible system for managing prompts, including both

system prompts and user messages. System prompts provide high-level instructions to

the model about its role, capabilities, and constraints, while user messages are your

queries or requests to the agent. The API supports multiple techniques for prompting,

including text prompts, multi-modal prompts, and direct tool calls. For guidance on

how to write safe and responsible prompts, please refer to the Safety & Security -

Prompt Engineering documentation.']

Instead of executing a single workflow and exiting, you can simulate a real-world scenario by spinning up a long-running API server capable of handling concurrent requests with the serve command:

nat serve --config_file examples/frameworks/strands_demo/configs/config.yml

The following is the truncated terminal output:

INFO: Application startup complete.

INFO: Uvicorn running on http://localhost:8000 (Press CTRL+C to quit)

The agent is now running locally on port 8000. To interact with the agent, open a new terminal and execute the following cURL command. This generates output similar to the previous nat run step, but the agent runs continuously as a persistent service rather than executing one time and exiting. This simulates the production environment where Amazon Bedrock AgentCore will run the agent as a containerized service:

curl -X 'POST' 'http://localhost:8000/generate' -H 'accept: application/json' -H 'Content-Type: application/json' -d '{"inputs" : "How do I use the Strands Agents API?"}'

The following is the truncated terminal output:

{"value":"The Strands Agents API provides a flexible system for managing prompts,

including both system prompts and user messages. System prompts provide high-level

instructions to the model about its role, capabilities, and constraints, while user

messages are your queries or requests to the agent. The SDK supports multiple techniques

for prompting, including text prompts, multi-modal prompts, and direct tool calls.

For guidance on how to write safe and responsible prompts, please refer to the

Safety & Security - Prompt Engineering documentation."}
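The same request can be issued from Python. The following is a minimal sketch using only the standard library; it assumes the server started by nat serve is listening on http://localhost:8000 and that the response carries the answer in a "value" field, as in the output above. The function names are illustrative and are not part of the toolkit.

```python
import json
import urllib.request


def build_generate_request(question: str,
                           base_url: str = "http://localhost:8000") -> urllib.request.Request:
    """Build (but do not send) a POST request for the local /generate endpoint."""
    payload = json.dumps({"inputs": question}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/generate",
        data=payload,
        headers={"accept": "application/json", "Content-Type": "application/json"},
        method="POST",
    )


def ask_agent(question: str, timeout_s: float = 120.0) -> str:
    """Send the question to the running server and return the "value" field."""
    request = build_generate_request(question)
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["value"]
```

With the server running, calling ask_agent("How do I use the Strands Agents API?") should return the same kind of answer as the cURL command. Keeping request construction separate from sending makes the payload easy to inspect without a live server.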

Agent profiling and workflow performance monitoring

With the agent running, the next step is to establish a performance baseline. To illustrate the depth of insights available, in this example we use a self-managed Llama 3.3 70B Instruct NIM on an Amazon Elastic Compute Cloud (Amazon EC2) P4de.24xlarge instance powered by NVIDIA A100 Tensor Core GPUs (8xA100 80 GB GPU) running on Amazon EKS. We use the nat eval command to evaluate the agent and generate the analysis:

nat eval --config_file examples/frameworks/strands_demo/configs/eval_config.yml

The following is the truncated terminal output:

Evaluating Trajectory: 100%|████████████████████████████████████████████████████████████████████| 10/10 [00:10<00:00, 1.00s/it]

2025-11-24 16:59:18 - INFO - nat.profiler.profile_runner:127 - Wrote combined data to: .tmp/nat/examples/frameworks/strands_demo/eval/all_requests_profiler_traces.json

2025-11-24 16:59:18 - INFO - nat.profiler.profile_runner:146 - Wrote merged standardized DataFrame to .tmp/nat/examples/frameworks/strands_demo/eval/standardized_data_all.csv

2025-11-24 16:59:18 - INFO - nat.profiler.profile_runner:200 - Wrote inference optimization results to: .tmp/nat/examples/frameworks/strands_demo/eval/inference_optimization.json

2025-11-24 16:59:28 - INFO - nat.profiler.profile_runner:224 - Nested stack analysis complete

2025-11-24 16:59:28 - INFO - nat.profiler.profile_runner:235 - Concurrency spike analysis complete

2025-11-24 16:59:28 - INFO - nat.profiler.profile_runner:264 - Wrote workflow profiling report to: .tmp/nat/examples/frameworks/strands_demo/eval/workflow_profiling_report.txt

2025-11-24 16:59:28 - INFO - nat.profiler.profile_runner:271 - Wrote workflow profiling metrics to: .tmp/nat/examples/frameworks/strands_demo/eval/workflow_profiling_metrics.json

2025-11-24 16:59:28 - INFO - nat.eval.evaluate:345 - Workflow output written to .tmp/nat/examples/frameworks/strands_demo/eval/workflow_output.json

2025-11-24 16:59:28 - INFO - nat.eval.evaluate:356 - Evaluation results written to .tmp/nat/examples/frameworks/strands_demo/eval/rag_relevance_output.json

2025-11-24 16:59:28 - INFO - nat.eval.evaluate:356 - Evaluation results written to .tmp/nat/examples/frameworks/strands_demo/eval/rag_groundedness_output.json

2025-11-24 16:59:28 - INFO - nat.eval.evaluate:356 - Evaluation results written to .tmp/nat/examples/frameworks/strands_demo/eval/rag_accuracy_output.json

2025-11-24 16:59:28 - INFO - nat.eval.evaluate:356 - Evaluation results written to .tmp/nat/examples/frameworks/strands_demo/eval/trajectory_accuracy_output.json

2025-11-24 16:59:28 - INFO - nat.eval.utils.output_uploader:62 - No S3 config provided; skipping upload.

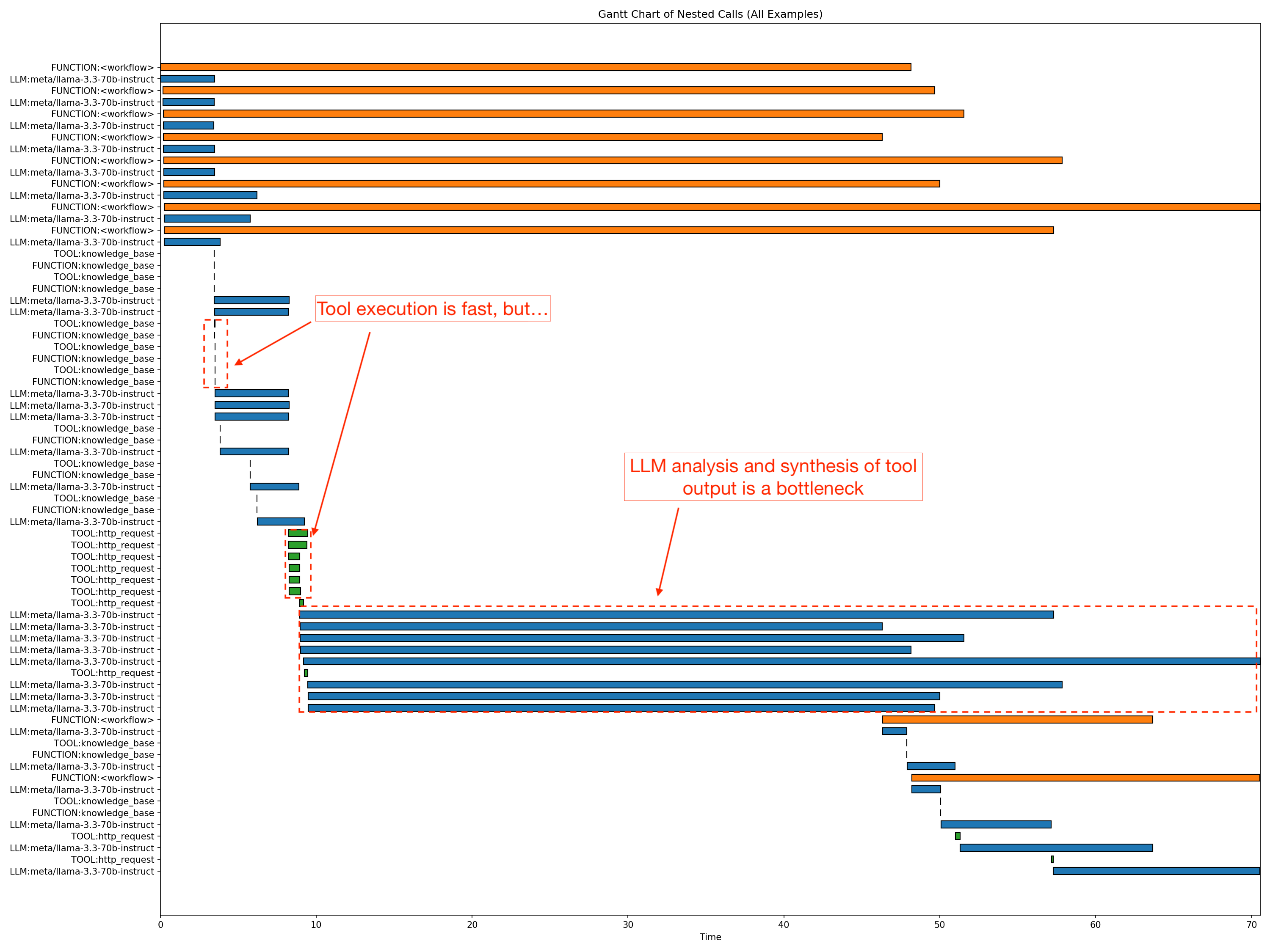

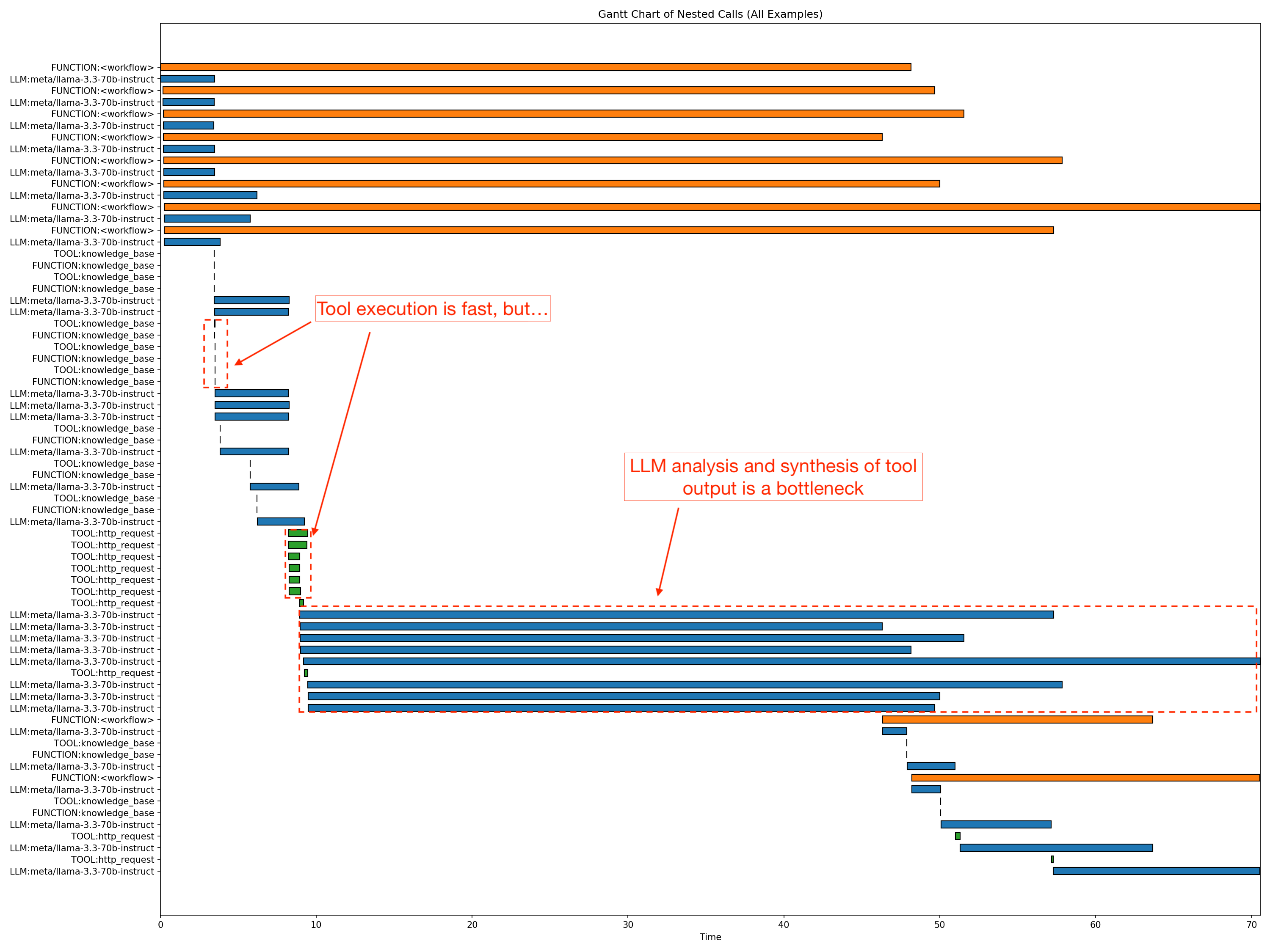

The command generates detailed artifacts that include JSON files per evaluation metric (such as accuracy, groundedness, relevance, and trajectory accuracy) showing scores from 0–1, reasoning traces, retrieved contexts, and aggregated averages. The generated artifacts also include workflow outputs, standardized tables, profile traces, and compact summaries for latency and token efficiency. This multi-metric sweep provides a holistic view of agent quality and behavior. The evaluation highlights that while the agent achieved consistent groundedness scores, meaning answers were reliably supported by sources, there’s still an opportunity to improve retrieval relevance. The profile trace output contains workflow-specific latency, throughput, and runtime at 90%, 95%, and 99% confidence intervals. The command generates a Gantt chart of the agent flow and a nested stack analysis to pinpoint exactly where bottlenecks exist, as seen in the accompanying figure. It also reports concurrency spikes and token efficiency so you can understand precisely how scaling impacts prompt and completion usage.

During profiling, nat spawns eight concurrent agent workflows (shown as orange bars in the chart), which is the default concurrency configuration during evaluation. The p90 latency for the workflow shown is approximately 58.9 seconds. Crucially, the data confirmed that response generation was the primary bottleneck, with the longest LLM segments taking approximately 61.4 seconds. Meanwhile, non-LLM overhead remained minimal: HTTP requests averaged only 0.7–1.2 seconds, and knowledge base access was negligible. With this level of granularity, you can identify and optimize specific bottlenecks in the agent workflows.
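To make figures such as a p90 latency concrete, the following minimal sketch computes nearest-rank percentiles over a list of per-request latencies. The sample values are made up for illustration, and the toolkit's own profiler may use a different percentile definition.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]


# Hypothetical per-request workflow latencies in seconds (illustrative only).
latencies_s = [12.1, 15.4, 18.0, 21.7, 25.3, 30.2, 36.8, 44.5, 52.0, 60.3]

summary = {f"p{p}": percentile(latencies_s, p) for p in (90, 95, 99)}
print(summary)
```

With only ten samples, p95 and p99 both resolve to the slowest request, which is one reason to profile with more requests before trusting tail-latency numbers.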

Agent performance optimization

After profiling, refine the agent’s parameters to balance quality, performance, and cost. Manual tuning of LLM settings like temperature and top_p is often a game of guesswork. The NeMo Agent Toolkit turns this into a data-driven process. You can use the built-in optimizer to perform a systematic sweep across your parameter search space:

nat optimize --config_file examples/frameworks/strands_demo/configs/optimizer_config.yml

The following is the truncated terminal output:

Evaluating Trajectory: 100%|██████████████████████████████████████████████████████████████| 10/10 [00:10<00:00, 1.00it/s]

2025-10-31 16:50:41 - INFO - nat.profiler.profile_runner:127 - Wrote combined data to: ./tmp/nat/strands_demo/eval/all_requests_profiler_traces.json

2025-10-31 16:50:41 - INFO - nat.profiler.profile_runner:146 - Wrote merged standardized DataFrame to: ./tmp/nat/strands_demo/eval/standardized_data_all.csv

2025-10-31 16:50:41 - INFO - nat.profiler.profile_runner:208 - Wrote inference optimization results to: ./tmp/nat/strands_demo/eval/inference_optimization.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:337 - Workflow output written to ./tmp/nat/strands_demo/eval/workflow_output.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/token_efficiency_output.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/llm_latency_output.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/rag_relevance_output.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/rag_groundedness_output.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/rag_accuracy_output.json

2025-10-31 16:50:41 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/trajectory_accuracy_output.json

2025-10-31 16:50:41 - INFO - nat.eval.utils.output_uploader:61 - No S3 config provided; skipping upload.

Evaluating Regex-Ex_Accuracy: 100%|████████████████████████████████████████████████████████| 10/10 [00:21<00:00, 2.15s/it]

2025-10-31 16:50:44 - INFO - nat.profiler.profile_runner:127 - Wrote combined data to: ./tmp/nat/strands_demo/eval/all_requests_profiler_traces.json

2025-10-31 16:50:44 - INFO - nat.profiler.profile_runner:146 - Wrote merged standardized DataFrame to: ./tmp/nat/strands_demo/eval/standardized_data_all.csv

2025-10-31 16:50:45 - INFO - nat.profiler.profile_runner:208 - Wrote inference optimization results to: ./tmp/nat/strands_demo/eval/inference_optimization.json

2025-10-31 16:50:46 - INFO - nat.eval.evaluate:337 - Workflow output written to ./tmp/nat/strands_demo/eval/workflow_output.json

2025-10-31 16:50:47 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/token_efficiency_output.json

2025-10-31 16:50:48 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/llm_latency_output.json

2025-10-31 16:50:49 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/rag_relevance_output.json

2025-10-31 16:50:50 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/rag_groundedness_output.json

2025-10-31 16:50:51 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/trajectory_accuracy_output.json

2025-10-31 16:50:52 - INFO - nat.eval.evaluate:348 - Evaluation results written to ./tmp/nat/strands_demo/eval/rag_accuracy_output.json

2025-10-31 16:50:53 - INFO - nat.eval.utils.output_uploader:61 - No S3 config provided; skipping upload.

[I 2025-10-31 16:50:53,361] Trial 19 finished with values: [0.6616666666666667, 1.0, 0.38000000000000007, 0.26800000000000006, 2.1433333333333333, 2578.222222222222] and parameters: {'llm_sim_llm.top_p': 0.8999999999999999, 'llm_sim_llm.temperature': 0.38000000000000006, 'llm_sim_llm.max_tokens': 5632}.

2025-10-31 16:50:53 - INFO - nat.profiler.parameter_optimization.parameter_optimizer:120 - Numeric optimization completed

2025-10-31 16:50:53 - INFO - nat.profiler.parameter_optimization.parameter_optimizer:162 - Generating Pareto front visualizations...

2025-10-31 16:50:53 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:320 - Creating Pareto front visualizations...

2025-10-31 16:50:53 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:330 - Total trials: 20

2025-10-31 16:50:53 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:331 - Pareto optimal trials: 14

2025-10-31 16:50:54 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:345 - Parallel coordinates plot saved to: ./tmp/nat/strands_demo/optimizer/plots/pareto_parallel_coordinates.png

2025-10-31 16:50:56 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:374 - Pairwise matrix plot saved to: ./tmp/nat/strands_demo/optimizer/plots/pareto_pairwise_matrix.png

2025-10-31 16:50:56 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:387 - Visualization complete!

2025-10-31 16:50:56 - INFO - nat.profiler.parameter_optimization.pareto_visualizer:389 - Plots saved to: ./tmp/nat/strands_demo/optimizer/plots

2025-10-31 16:50:56 - INFO - nat.profiler.parameter_optimization.parameter_optimizer:171 - Pareto visualizations saved to: ./tmp/nat/strands_demo/optimizer/plots

2025-10-31 16:50:56 - INFO - nat.profiler.parameter_optimization.optimizer_runtime:88 - All optimization phases complete.

This command launches an automated sweep across key LLM parameters, such as temperature, top_p, and max_tokens, as defined in the search space of the config (in this case, optimizer_config.yml). The optimizer runs 20 trials with three repetitions each, using weighted evaluation metrics to automatically discover optimal model settings. It might take up to 15–20 minutes for the optimizer to run 20 trials.

The toolkit evaluates each parameter set against a weighted multi-objective score, aiming to maximize quality (for example, accuracy, groundedness, or tool use) while minimizing token cost and latency. Upon completion, it generates detailed performance artifacts and summary tables so you can quickly identify and select the optimal configuration for production. The following is the hyperparameter optimizer configuration:

llms:
  nim_llm:
    _type: nim
    model_name: meta/llama-3.3-70b-instruct
    temperature: 0.5
    top_p: 0.9
    max_tokens: 4096
    # Enable optimization for these parameters
    optimizable_params:
      - temperature
      - top_p
      - max_tokens
    # Define search spaces
    search_space:
      temperature:
        low: 0.1
        high: 0.7
        step: 0.2 # Tests: 0.1, 0.3, 0.5, 0.7
      top_p:
        low: 0.7
        high: 1.0
        step: 0.1 # Tests: 0.7, 0.8, 0.9, 1.0
      max_tokens:
        low: 4096
        high: 8192
        step: 512 # Tests: 4096, 4608, 5120, 5632, 6144, 6656, 7168, 7680, 8192
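To see how large the search space defined by these low, high, and step values is, the following minimal sketch enumerates the grid in plain Python. The grid helper is illustrative and is not a toolkit function; it only mirrors the values listed in the configuration comments.

```python
from itertools import product


def grid(low: float, high: float, step: float, ndigits: int = 6) -> list[float]:
    """Inclusive low..high values spaced by step, rounded to avoid float drift."""
    count = int(round((high - low) / step)) + 1
    return [round(low + i * step, ndigits) for i in range(count)]


search_space = {
    "temperature": grid(0.1, 0.7, 0.2),
    "top_p": grid(0.7, 1.0, 0.1),
    "max_tokens": [int(v) for v in grid(4096, 8192, 512)],
}

# Every combination of the three parameters.
combinations = list(product(*search_space.values()))

for name, values in search_space.items():
    print(name, values)
print(f"{len(combinations)} grid points in total")
```

A full grid over these three parameters is considerably larger than a 20-trial budget, so a trial-limited optimizer necessarily evaluates only part of it.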

In this example, the NeMo Agent Toolkit optimizer systematically evaluated parameter configurations and identified temperature ≈ 0.7, top_p ≈ 1.0, and max_tokens ≈ 6k (6144) as the optimal configuration, yielding the highest accuracy across 20 trials. This configuration delivered a 35% accuracy improvement over baseline while simultaneously achieving 20% token efficiency gains compared to the 8192 max_tokens setting, maximizing both performance and cost efficiency for these production deployments.

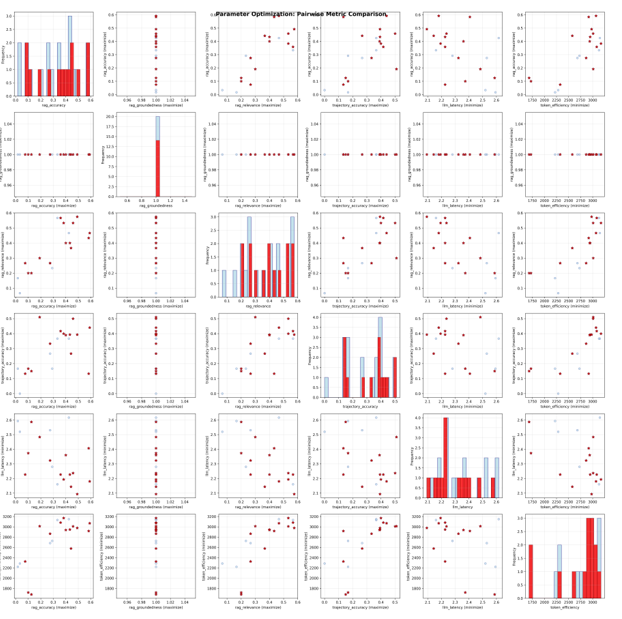

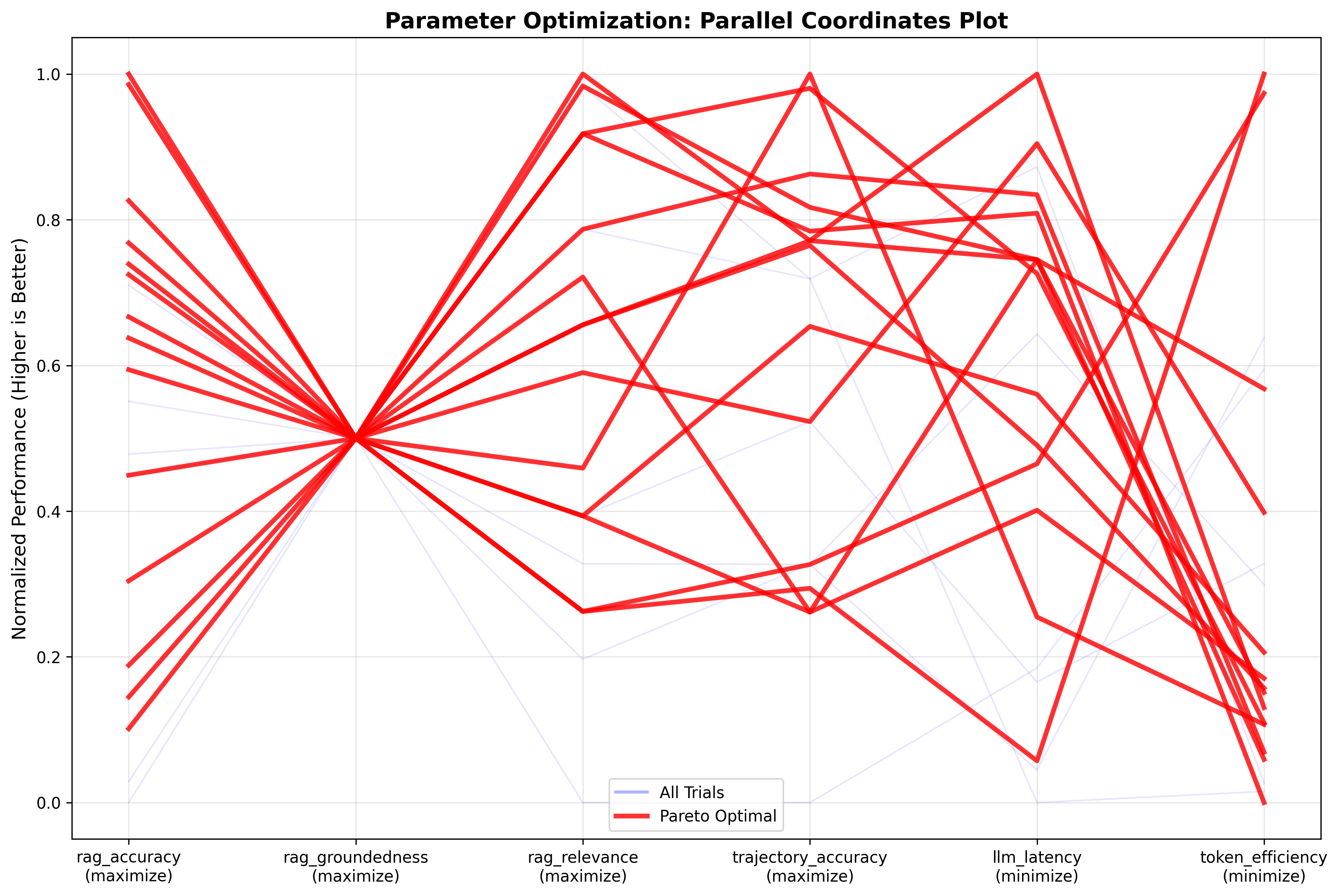

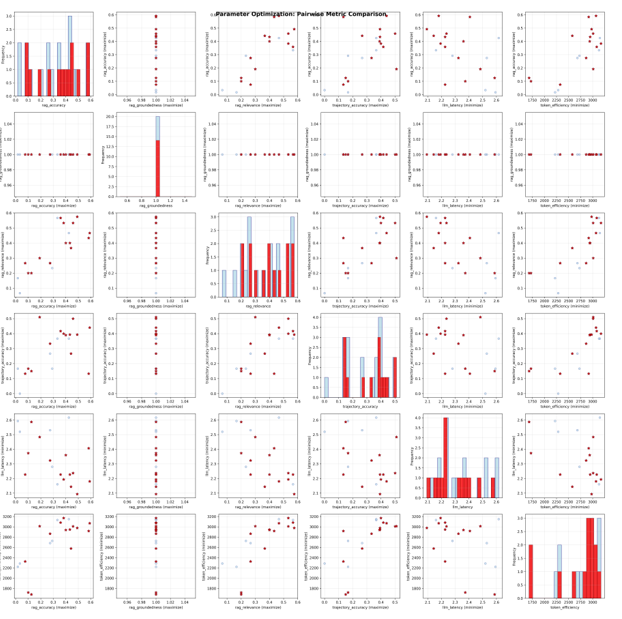

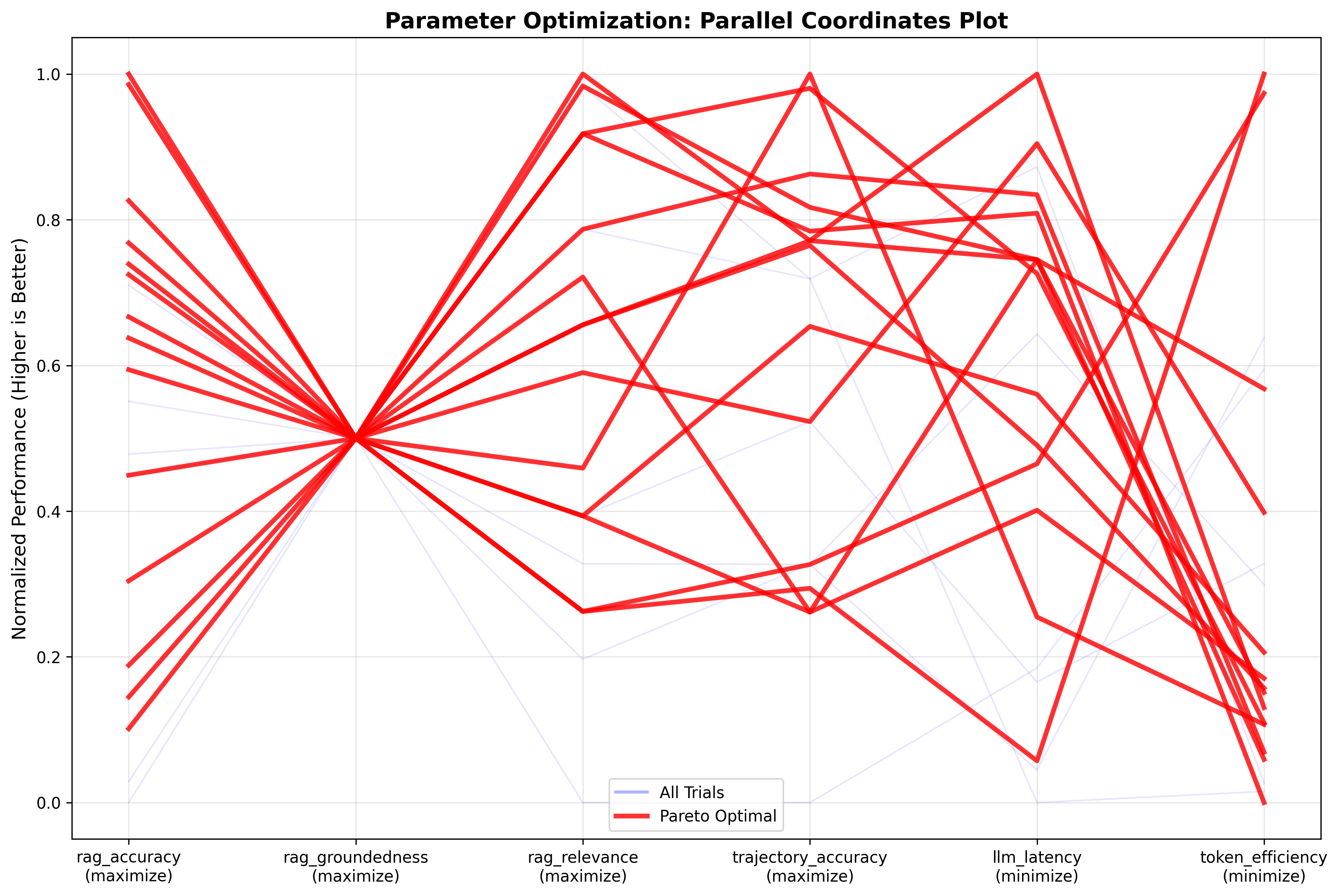

The optimizer plots pairwise Pareto curves, as shown in the following pairwise matrix comparison charts, to analyze trade-offs between different parameters. The parallel coordinates plot, which follows the matrix comparison chart, shows optimal trials (red lines) achieving high quality scores (0.8–1.0) across accuracy, groundedness, and relevance while trading off some efficiency as token usage and latency drop to 0.6–0.8 on the normalized scale. The pairwise matrix confirms strong correlations between quality metrics and reveals actual token consumption clustered tightly around 2,500–3,100 tokens across all trials. These results indicate that further gains in accuracy and token efficiency might be possible through prompt engineering. Development teams can pursue this using NeMo Agent Toolkit’s prompt optimization capabilities, helping reduce costs while maximizing performance.
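For readers unfamiliar with the term, a trial is Pareto optimal when no other trial is at least as good on every objective and strictly better on one. The following minimal sketch filters a Pareto front from a handful of made-up trials; the Trial fields and values are hypothetical, and the toolkit's own implementation is not shown here.

```python
from typing import NamedTuple


class Trial(NamedTuple):
    name: str
    accuracy: float   # higher is better
    latency_s: float  # lower is better
    tokens: float     # lower is better


def dominates(a: Trial, b: Trial) -> bool:
    """True if a is no worse than b on every objective and strictly better on at least one."""
    no_worse = a.accuracy >= b.accuracy and a.latency_s <= b.latency_s and a.tokens <= b.tokens
    better = a.accuracy > b.accuracy or a.latency_s < b.latency_s or a.tokens < b.tokens
    return no_worse and better


def pareto_front(trials: list[Trial]) -> list[Trial]:
    """Keep only the trials that no other trial dominates."""
    return [t for t in trials if not any(dominates(other, t) for other in trials)]


# Hypothetical trial results (illustrative only).
trials = [
    Trial("t0", 0.62, 2.4, 2900),
    Trial("t1", 0.66, 2.1, 2580),
    Trial("t2", 0.58, 1.9, 2500),
    Trial("t3", 0.60, 2.6, 3100),
]
print([t.name for t in pareto_front(trials)])
```

Here t1 and t2 both survive because neither beats the other on every objective: t1 is more accurate, while t2 is faster and cheaper. Choosing between such trials is where the weighted score comes in.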

The following image shows the pairwise matrix comparison:

The following image shows the parallel coordinates plot:

Right-sizing production GPU infrastructure

After your agent is optimized and you’ve finalized the runtime or inference configuration, you can shift your focus to assessing your model deployment infrastructure. If you’re self-managing your model deployment on a fleet of EC2 GPU-powered instances, one of the most difficult aspects of moving agents to production is predicting exactly what compute resources are necessary to support a target use case and number of concurrent users without overrunning the budget or causing timeouts. The NeMo Agent Toolkit GPU sizing calculator addresses this challenge by using your agent’s actual performance profile to determine the optimal cluster size for specific service level objectives (SLOs), enabling right-sizing that alleviates the trade-off between performance and cost. To generate a sizing profile, you run the sizing calculator across a range of concurrency levels (for example, 1–32 simultaneous users):

nat sizing calc --config_file examples/frameworks/strands_demo/configs/sizing_config.yml --calc_output_dir /tmp/strands_demo/sizing_calc_run1/ --concurrencies 1,2,4,8,12,20,24,28,32 --num_passes 2

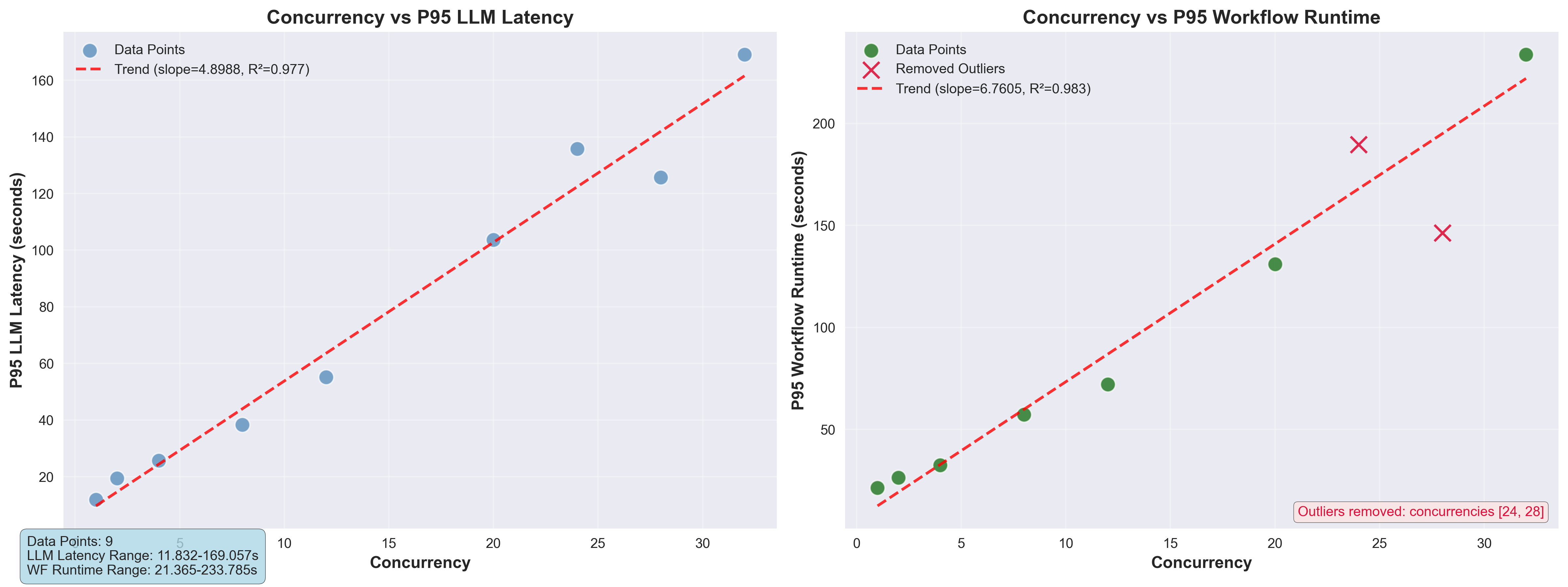

Executing this on our reference EC2 P4de.24xlarge instance powered by NVIDIA A100 Tensor Core GPUs running on Amazon EKS for a Llama 3.3 70B Instruct NIM produced the following capacity analysis:

Per concurrency results:

Alerts!: W = Workflow interrupted, L = LLM latency outlier, R = Workflow runtime outlier

| Alerts | Concurrency | p95 LLM Latency | p95 WF Runtime | Total Runtime |
|--------|-------------|-----------------|----------------|---------------|
| | 1 | 11.8317 | 21.3647 | 33.2416 |
| | 2 | 19.3583 | 26.2694 | 36.931 |
| | 4 | 25.728 | 32.4711 | 61.13 |
| | 8 | 38.314 | 57.1838 | 89.8716 |
| | 12 | 55.1766 | 72.0581 | 130.691 |
| | 20 | 103.68 | 131.003 | 202.791 |
| !R | 24 | 135.785 | 189.656 | 221.721 |
| !R | 28 | 125.729 | 146.322 | 245.654 |
| | 32 | 169.057 | 233.785 | 293.562 |

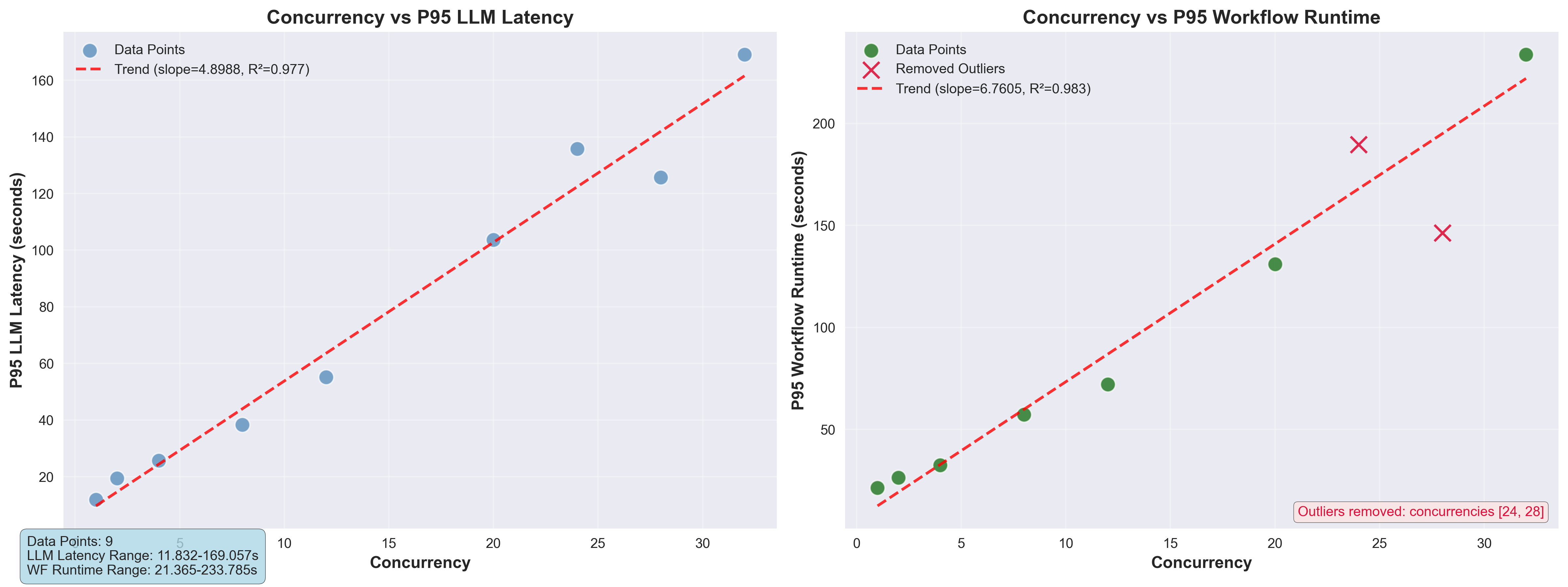

As shown in the chart, latency and end-to-end runtime scale almost linearly with concurrency, with p95 LLM latency and workflow runtime showing near-perfect trend fits (R² ≈ 0.977/0.983). Each additional concurrent request introduces a predictable latency penalty, suggesting the system operates within a linear capacity zone where throughput can be optimized by adjusting latency tolerance.
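To illustrate what such a trend fit involves, the following minimal sketch runs an ordinary least squares fit of p95 workflow runtime against concurrency, using the values from the capacity table above. It includes every row, including the two flagged as runtime outliers. The toolkit may treat outliers differently, so the slope, intercept, and R² printed here are not expected to match the reported figures exactly.

```python
def linear_fit(xs: list[float], ys: list[float]) -> tuple[float, float, float]:
    """Ordinary least squares for y = slope * x + intercept; returns (slope, intercept, r2)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equally sized series with at least two points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1 - ss_res / ss_tot


# Concurrency and p95 workflow runtime (seconds) from the capacity table.
concurrency = [1, 2, 4, 8, 12, 20, 24, 28, 32]
p95_wf_runtime_s = [21.3647, 26.2694, 32.4711, 57.1838, 72.0581,
                    131.003, 189.656, 146.322, 233.785]

slope, intercept, r2 = linear_fit(concurrency, p95_wf_runtime_s)
print(f"slope={slope:.3f} s per concurrent request, intercept={intercept:.3f} s, R^2={r2:.3f}")
```

The slope is the latency penalty per additional concurrent request, and the intercept approximates the runtime of an unloaded system.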

With the sizing metrics captured, you can estimate the GPU cluster size for a specific concurrency and latency. For example, to support 25 concurrent users with a target workflow runtime of 50 seconds, you can run the calculator:

nat sizing calc --offline_mode --calc_output_dir /tmp/strands_demo/sizing_calc_run1/ --test_gpu_count 8 --target_workflow_runtime 50 --target_users 25

This workflow analyzes existing performance metrics and generates a resource recommendation. In our example scenario, the tool calculates that to meet strict latency requirements for 25 simultaneous users, approximately 30 GPUs are required based on the following formulas:

gpu_estimate = (target_users / calculated_concurrency) * test_gpu_count

calculated_concurrency = (target_time_metric - intercept) / slope
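The two formulas translate directly into code. In the following minimal sketch, the slope and intercept are placeholder values chosen for easy arithmetic, not the coefficients fitted by the toolkit, so the printed estimate differs from the figure in this post.

```python
def calculated_concurrency(target_time_metric: float, intercept: float, slope: float) -> float:
    """Concurrency the test cluster can sustain while staying within the target time."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    return (target_time_metric - intercept) / slope


def gpu_estimate(target_users: int, concurrency: float, test_gpu_count: int) -> float:
    """Scale the test GPU count by the ratio of target users to sustainable concurrency."""
    if concurrency <= 0:
        raise ValueError("target time is at or below the fitted intercept")
    return target_users / concurrency * test_gpu_count


# Placeholder fit coefficients (assumptions for illustration only).
slope_s_per_request = 6.0
intercept_s = 8.0

supported = calculated_concurrency(50.0, intercept_s, slope_s_per_request)
print(f"sustainable concurrency on the test cluster: {supported:.2f}")
print(f"estimated GPUs for 25 users: {gpu_estimate(25, supported, 8):.1f}")
```

The guard on non-positive concurrency matters in practice: a target runtime at or below the fitted intercept cannot be met by adding GPUs under this linear model.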

The following is the output from the sizing estimation:

Targets: LLM Latency ≤ 0.0s, Workflow Runtime ≤ 50.0s, Users = 25

Test parameters: GPUs = 8

Per concurrency results:

Alerts!: W = Workflow interrupted, L = LLM latency outlier, R = Workflow runtime outlier

| Alerts | Concurrency | p95 LLM Latency | p95 WF Runtime | Total Runtime | GPUs (WF Runtime, Rough) |
|--------|-------------|-----------------|----------------|---------------|--------------------------|
| | 1 | 11.8317 | 21.3647 | 33.2416 | 85.4587 |
| | 2 | 19.3583 | 26.2694 | 36.931 | 52.5388 |
| | 4 | 25.728 | 32.4711 | 61.13 | 32.4711 |
| | 8 | 38.314 | 57.1838 | 89.8716 | |
| | 12 | 55.1766 | 72.0581 | 130.691 | |
| | 20 | 103.68 | 131.003 | 202.791 | |
| !R | 24 | 135.785 | 189.656 | 221.721 | |
| !R | 28 | 125.729 | 146.322 | 245.654 | |
| | 32 | 169.057 | 233.785 | 293.562 | |

=== GPU ESTIMATES ===

Estimated GPU count (Workflow Runtime): 30.5
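The rough per-row GPU column in this output appears to scale the test GPU count by both the user ratio and the runtime ratio. That relationship is inferred here from the numbers in the table rather than taken from the toolkit documentation, so treat the following sketch as an assumption. It reproduces the three populated entries to within rounding.

```python
def rough_gpu_estimate(concurrency: int,
                       p95_runtime_s: float,
                       target_users: int = 25,
                       target_runtime_s: float = 50.0,
                       test_gpu_count: int = 8) -> float:
    """Inferred per-row estimate: scale GPUs by the user ratio and by the runtime ratio."""
    return (target_users / concurrency) * test_gpu_count * (p95_runtime_s / target_runtime_s)


# (concurrency, p95 workflow runtime in seconds) for the rows with a rough estimate.
rows = [(1, 21.3647), (2, 26.2694), (4, 32.4711)]
for concurrency, runtime_s in rows:
    print(concurrency, round(rough_gpu_estimate(concurrency, runtime_s), 4))
```

The rows left blank in the output are the ones whose p95 workflow runtime already exceeds the 50-second target.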

Manufacturing agent deployment to Amazon Bedrock AgentCore

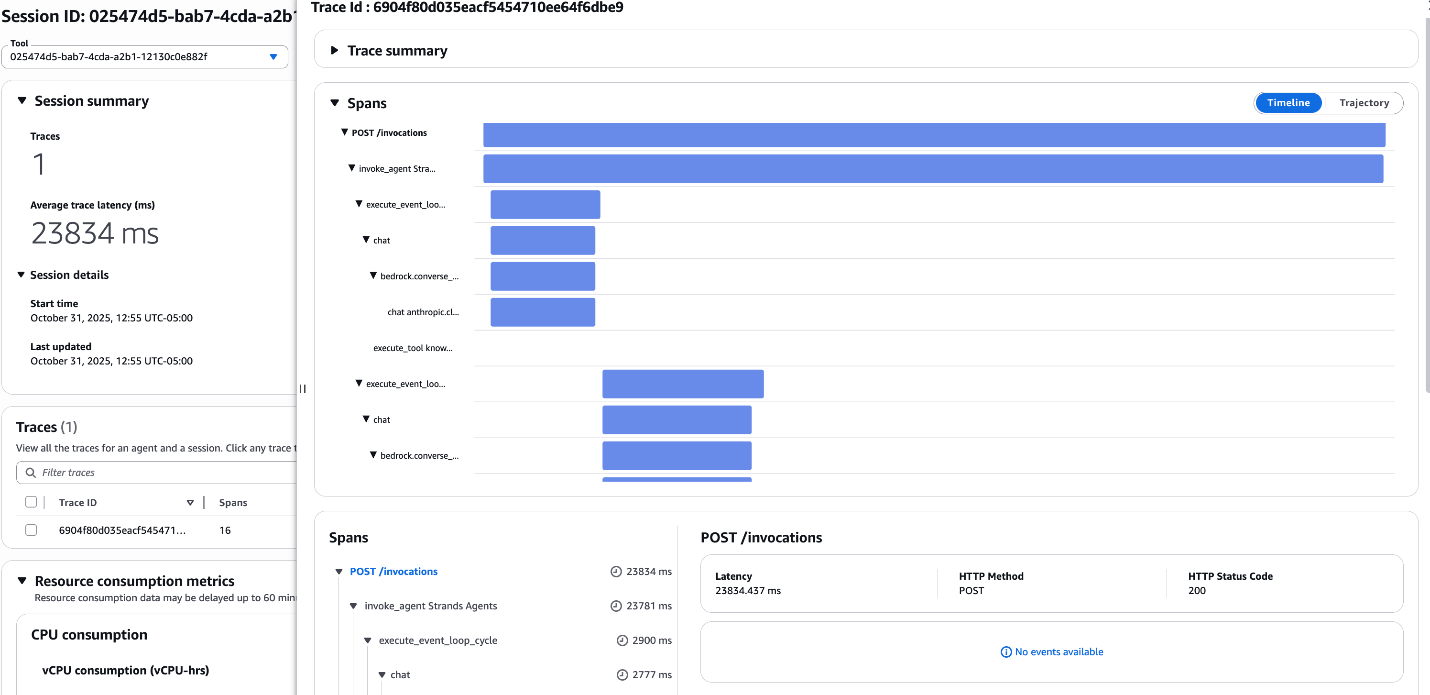

After evaluating, profiling, and optimizing your agent, deploy it to manufacturing. Though operating the agent regionally is ample for testing, enterprise deployment requires an agent runtime that helps present safety, scalability, and sturdy reminiscence administration with out the overhead of managing infrastructure. That is the place Amazon Bedrock AgentCore Runtime shines—offering enterprise-grade serverless agent runtime with out the infrastructure overhead. Discuss with the step-by-step deployment information within the NeMo Agent Toolkit Repository. By packaging your optimized agent in a container and deploying it to the serverless Bedrock AgentCore Runtime, you elevate your prototype agent to a resilient software for long-running duties and concurrent consumer requests. After you deploy the agent, visibility turns into vital. This integration creates a unified observability expertise, remodeling opaque black-box execution into deep visibility. You achieve actual traces, spans, and latency breakdowns for each interplay in manufacturing, built-in into Bedrock AgentCore Observability utilizing OpenTelemetry.

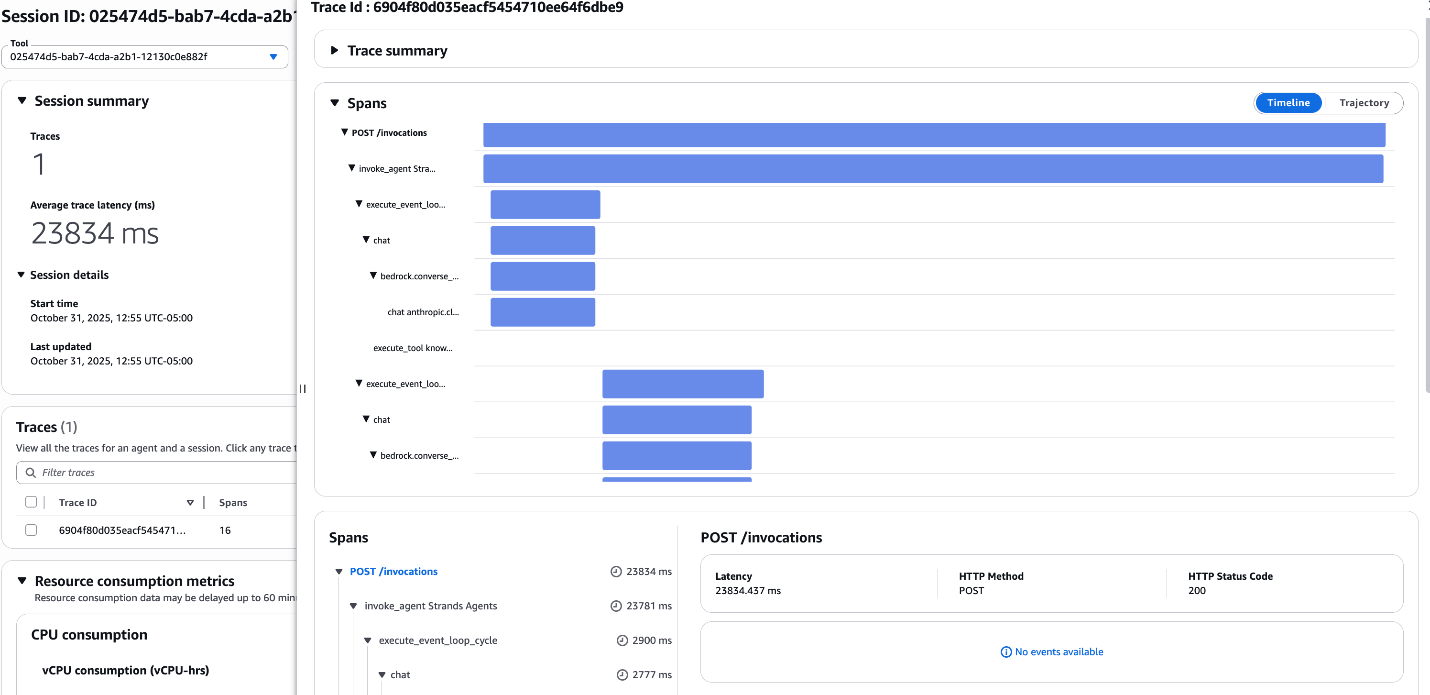

The next screenshot exhibits the Amazon CloudWatch dashboard displaying Amazon Bedrock AgentCore traces and spans, visualizing the execution path and latency of the deployed Strands agent.

Amazon Bedrock AgentCore companies lengthen properly past agent runtime administration and observability. Your deployed brokers can seamlessly use extra Bedrock AgentCore companies, together with Amazon Bedrock AgentCore Id for authentication and authorization, Amazon Bedrock AgentCore Gateway for instruments entry, Amazon Bedrock AgentCore Reminiscence for context-awareness, Amazon Bedrock AgentCore Code Interpreter for safe code execution, and Amazon Bedrock AgentCore Browser for internet interactions, to create enterprise-ready brokers.

Conclusion

Production AI agents need performance visibility, optimization, and reliable infrastructure. For the example use case, this integration delivered on all three fronts: 20% token efficiency gains, 35% accuracy improvements, and performance-tuned GPU infrastructure calibrated for target concurrency. By combining Strands Agents for foundational agent development and orchestration, the NVIDIA NeMo Agent Toolkit for deep agent profiling, optimization, and right-sizing production GPU infrastructure, and Amazon Bedrock AgentCore for secure, scalable agent infrastructure, developers can have an end-to-end solution that helps provide predictable outcomes. You can now build, evaluate, optimize, and deploy agents at scale on AWS with this integrated solution. To get started, check out the Strands Agents and NeMo Agent Toolkit integration example and deploying Strands Agents and NeMo Agent Toolkit to Amazon Bedrock AgentCore Runtime.

About the authors

Kosti Vasilakakis is a Principal PM at AWS on the Agentic AI team, where he has led the design and development of several Bedrock AgentCore services from the ground up, including Runtime, Browser, Code Interpreter, and Identity. He previously worked on Amazon SageMaker since its early days, launching AI/ML capabilities now used by thousands of companies worldwide. Earlier in his career, Kosti was a data scientist. Outside of work, he builds personal productivity automations, plays tennis, and enjoys life with his wife and kids.

Sagar Murthy is an agentic AI GTM leader at AWS, where he collaborates with frontier foundation model partners, agentic frameworks, startups, and enterprise customers to evangelize AI and data innovations, open-source solutions, and scale impactful partnerships. With collaboration experiences spanning data, cloud, and AI, he brings a blend of technical solutions background and business outcomes focus to delight developers and customers.

Chris Smith is a Solutions Architect at AWS specializing in AI-powered automation and enterprise AI agent orchestration. With over a decade of experience architecting solutions at the intersection of generative AI, cloud computing, and systems integration, he helps organizations design and deploy agent systems that transform emerging technologies into measurable business outcomes. His work spans technical architecture, security-first implementation, and cross-functional team leadership.

Ranjit Rajan is a Senior Solutions Architect at NVIDIA, where he helps customers design and build solutions spanning generative AI, agentic AI, and accelerated multi-modal data processing pipelines for pre-training and fine-tuning foundation models.

Abdullahi Olaoye is a Senior AI Solutions Architect at NVIDIA, specializing in integrating NVIDIA AI libraries, frameworks, and products with cloud AI services and open-source tools to optimize AI model deployment, inference, and generative AI workflows. He collaborates with AWS to enhance AI workload performance and drive adoption of NVIDIA-powered AI and generative AI solutions.

Abhishek Sawarkar is a product manager on the NVIDIA AI Enterprise team working on Agentic AI. He focuses on product strategy and the roadmap for integrating the Agentic AI library into partner platforms and enhancing the user experience on accelerated computing for AI agents.
