In my final submit, I demonstrated how one can use putexcel to recreate widespread Stata output in Microsoft Excel. Right now I need to present you how one can create customized reviews for arbitrary variables. I’m going to create tables that mix cell counts with row percentages, and means with commonplace deviations. However you may modify the examples beneath to incorporate column percentages, percentiles, commonplace errors, confidence intervals or any statistic. I’m additionally going to cross the variable names into my applications utilizing native macros. This can enable me to create the identical report for arbitrary variables by merely assigning new variable names to the macros. You possibly can prolong this concept by making a do-file for every report and passing the variable names into the do-files. That is one other essential step towards our aim of automating the creation of reviews in Excel.

Right now’s weblog submit is prolonged and comprises a number of massive code blocks. It’s because every instance comprises the code from the earlier instance together with new traces of code. This lets you see the brand new code within the context of the general program nevertheless it additionally makes the submit seem longer than it’s. A lot of the code within the code blocks is identical from instance to instance.

Instance 1: Writing returned outcomes to Excel

Let’s start by utilizing tabulate to create a matrix of cell counts for intercourse and race. I might sort

tabulate intercourse race, matcell(cellcounts)

however I would really like the power to tabulate any two categorical variables. So I first retailer intercourse within the native macro RowVar and the race within the native macro ColVar. Now I can tabulate intercourse and race utilizing their corresponding native macros.

. native RowVar = "intercourse"

. native ColVar = "race"

. tabulate `RowVar' `ColVar', matcell(cellcounts)

| Race

Intercourse | Black Different White | Complete

-----------+---------------------------------+----------

Feminine | 101 12 563 | 676

Male | 75 10 506 | 591

-----------+---------------------------------+----------

Complete | 176 22 1,069 | 1,267

The cell counts are saved within the matrix cellcounts.

. matrix listing cellcounts

cellcounts[2,3]

c1 c2 c3

r1 101 12 563

r2 75 10 506

I can sort return listing to see an inventory of scalars returned by tabulate. The whole variety of observations is saved within the scalar r(N), the variety of rows is saved in r(r), and the variety of columns is saved in r(c).

. return listing

scalars:

r(N) = 1267

r(r) = 2

r(c) = 3

I can retailer these scalars in native macros in order that I can use them later.

. native TotalCount = r(N)

. native RowCount = r(r)

. native ColCount = r(c)

Instance 2: Looping over rows and columns

I can use the saved row and column counts to loop over every cell of the matrix cellcounts.

. forvalues row = 1/`RowCount' {

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

show "cellcounts[`row',`col'] = `cellcount'"

}

}

cellcounts[1,1] = 101

cellcounts[1,2] = 12

cellcounts[1,3] = 563

cellcounts[2,1] = 75

cellcounts[2,2] = 10

cellcounts[2,3] = 506

Looping over every cell within the matrix permits us to format the quantity in every cell and/or use the quantity to calculate one other amount comparable to a share.

Instance 3: The char() perform

The rows and columns of matrices are listed with numbers. The rows of Excel tables are listed with numbers however the columns are listed with letters. I can translate the column variety of a matrix to a column letter in Excel utilizing the char() perform. The argument of the char() perform is an ASCII quantity and the perform returns the corresponding ASCII letter. For instance, char(65) returns the letter “A”, char(66) returns the letter “B”, and so forth.

. show char(65)

A

. show char(66)

B

. show char(67)

C

I can use the perform char(64 + `col’) in my loop to translate the column variety of the matrix to the column letter of the Excel desk. Line 4 in Code block 1 beneath shops the cell identify to the native macro Cell. I might have used “`row’” in line 4 relatively than string(`row’). However I’ll finally want the string() perform so I’m utilizing it right here for consistency. Line 5 then makes use of putexcel to jot down the worth of Cell to the corresponding cell in Excel.

Code block 1: Looping with char()

putexcel set putexcel3.xlsx, sheet(example3) exchange

forvalues row = 1/`RowCount' {

forvalues col = 1/`ColCount' {

native Cell = char(64 + `col') + string(`row')

putexcel `Cell' = "`Cell'", hcenter

}

}

Instance 4: Writing cell counts to Excel

Code block 2 demonstrates how one can write the cell counts from the matrix to the Excel desk. Line 4 of Code block 2 shops the cell depend from the matrix cellcounts to the native macro CellContents, Line 5 shops the vacation spot cell within the Excel desk to the native macro Cell, and Line 6 makes use of putexcel to jot down CellContents to Cell within the Excel desk.

Code block 2:

putexcel set putexcel3.xlsx, sheet(example4) modify

forvalues row = 1/`RowCount' {

forvalues col = 1/`ColCount' {

native CellContents = cellcounts[`row',`col']

native Cell = char(64 + `col') + string(`row')

putexcel `Cell' = "`CellContents'", hcenter

}

}

Instance 5: Writing row percentages to Excel

I’d want to write the row percentages to the Excel desk relatively than the cell counts. I’ll want each the cell counts and the row totals to calculate the row percentages. The tabulate command in Line 3 of Code block 3 beneath shops the row totals to the vector rowtotals. Line 11 shops the cell counts to the native macro cellcount. Line 12 calculates and codecs the cell share and shops it to the native macro cellpercent. Line 13 provides the “%” image to cellpercent and shops the ensuing string to the native macro CellContents.

Code block 3:

native RowVar = "intercourse"

native ColVar = "race"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

putexcel set putexcel3.xlsx, sheet(example5) modify

forvalues row = 1/`RowCount' {

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellpercent'%"

native Cell = char(64 + `col') + string(`row')

putexcel `Cell' = "`CellContents'", proper

}

}

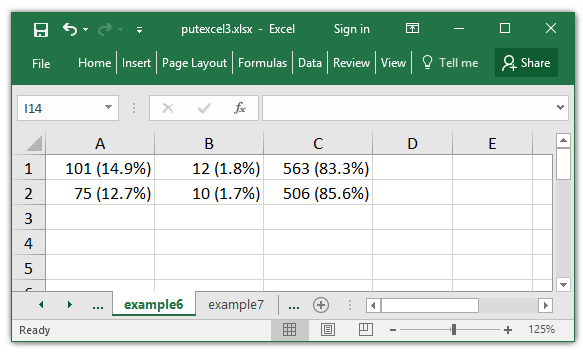

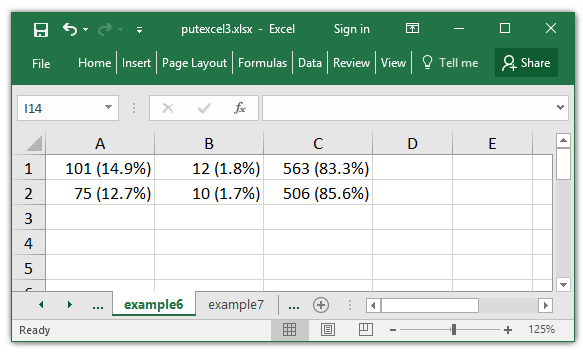

Instance 6: Writing cell counts and row percentages to Excel

I might write each the cell depend and the row share to every cell. I can do that by modifying Line 13 from Code block 3 above. Line 13 in Code block 4 beneath shops each cellcount and cellpercent to the native macro CellContents.

Code block 4:

native RowVar = "intercourse"

native ColVar = "race"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

putexcel set putexcel3.xlsx, sheet(example6) modify

forvalues row = 1/`RowCount' {

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col') + string(`row')

putexcel `Cell' = "`CellContents'", proper

}

}

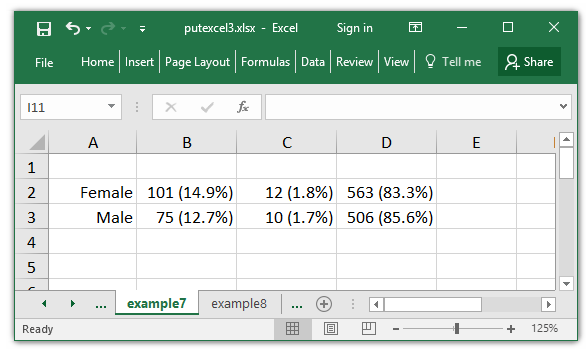

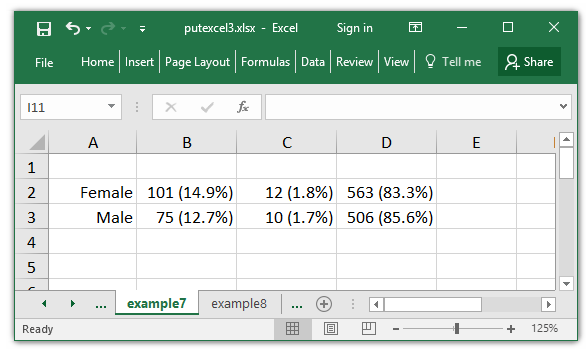

Instance 7: Including row labels to Excel tables

Subsequent I wish to add row labels to my Excel desk. I might sort “Feminine” and “Male” within the Excel desk however I would really like to have the ability to change the row variable on the prime of my program and label the rows robotically.

Worth labels comparable to “0=Feminine” and “1=Male” are outlined utilizing label outline and the labels are connected to a variable utilizing label values. If I sort describe intercourse, I can see that the worth label connected to intercourse is known as SexLabel.

. describe intercourse

storage show worth

variable identify sort format label variable label

------------------------------------------------------------------------------

intercourse byte %9.0g SexLabel Intercourse

And I can view the definition of SexLabel by typing label listing SexLabel.

. label listing SexLabel

SexLabel:

0 Feminine

1 Male

I can entry the entire details about the worth labels for a variable utilizing solely the variable identify. The identify of the present row variable, intercourse, is saved within the native macro RowVar. I can retailer the worth label for RowVar to the native macro RowValueLabel utilizing the macro listing perform beneath.

. native RowVar = "intercourse"

. native RowValueLabel : worth label `RowVar'

. show "`RowValueLabel'"

SexLabel

I can use levelsof to retailer the numeric classes of RowVar to the native macro RowLevels.

. levelsof `RowVar', native(RowLevels)

0 1

. show "`RowLevels'"

0 1

I can refer to every of the numeric classes in RowLevels utilizing the phrases() perform. For instance, the primary “phrase” within the native macro RowLevels is “0”. I can retailer this “phrase” within the native macro RowValueLabelNum utilizing the next macro listing perform.

. native RowValueLabelNum = phrase("`RowLevels'", 1)

. show "`RowValueLabelNum'"

0

I can then retailer the label related to “0” to the native macro RowLabel utilizing the next macro listing perform.

. native RowLabel : label `RowValueLabel' `RowValueLabelNum'

. show "`RowLabel'"

Feminine

I might use the identical strategy to retailer the second class of intercourse.

. native RowValueLabelNum = phrase("`RowLevels'", 2)

. show "`RowValueLabelNum'"

1

. native RowLabel : label `RowValueLabel' `RowValueLabelNum'

. show "`RowLabel'"

Male

Code block 5 beneath incorporates these instructions to extract the worth labels for RowVar and makes use of putexcel to jot down the labels to my Excel desk.

I start by shifting the cells of my Excel desk down one row and one column to the best. This can make room for the row labels, and the column labels I’ll add later. I shift the desk down and proper in traces 16 and 23 beneath by including 1 to the arguments of the char() and string() features.

Line 8 shops the worth label of RowVar to the native macro RowValueLabel. Line 9 retailer the numeric classes of RowVar to the native macro RowLevels.

Traces 14-17 extract the label related to every numeric class and writes it to my Excel desk.

Code block 5:

native RowVar = "intercourse"

native ColVar = "race"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

native RowValueLabel : worth label `RowVar'

levelsof `RowVar', native(RowLevels)

putexcel set putexcel3.xlsx, sheet(example7) modify

forvalues row = 1/`RowCount' {

native RowValueLabelNum = phrase("`RowLevels'", `row')

native CellContents : label `RowValueLabel' `RowValueLabelNum'

native Cell = char(64 + 1) + string(`row'+1)

putexcel `Cell' = "`CellContents'", proper

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col' + 1) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", proper

}

}

Instance 8: Including row totals to an Excel desk

Subsequent I wish to add row totals to my Excel desk. I saved the row totals to the matrix rowtotals earlier in order that I might calculate row percentages. So all I must do write the values from that matrix to my Excel desk.

Line 3 of Code block 6 beneath shops the row totals to the matrix rowtotals. Traces 19-21 extract the entire for every row from rowtotals and writes it to the Excel desk. Be aware that I’m writing the row totals one column to the best of the Excel desk by including 2 to the argument of the char() perform in line 20.

Code block 6:

native RowVar = "intercourse"

native ColVar = "race"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

levelsof `RowVar', native(RowLevels)

native RowValueLabel : worth label `RowVar'

putexcel set putexcel3.xlsx, sheet(example8) modify

forvalues row = 1/`RowCount' {

native RowValueLabelNum = phrase("`RowLevels'", `row')

native CellContents : label `RowValueLabel' `RowValueLabelNum'

native Cell = char(64 + 1) + string(`row'+1)

putexcel `Cell' = "`CellContents'", proper

native CellContents = rowtotals[`row',1]

native Cell = char(64 + `ColCount' + 2) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", hcenter

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col' + 1) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", proper

}

}

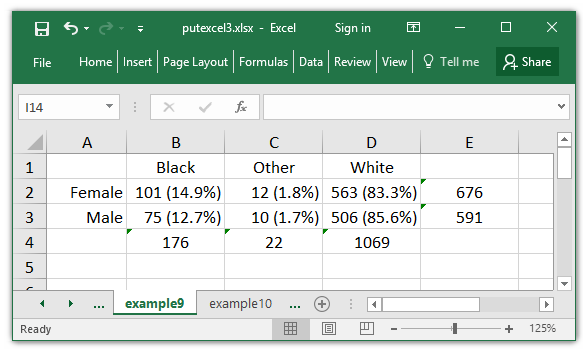

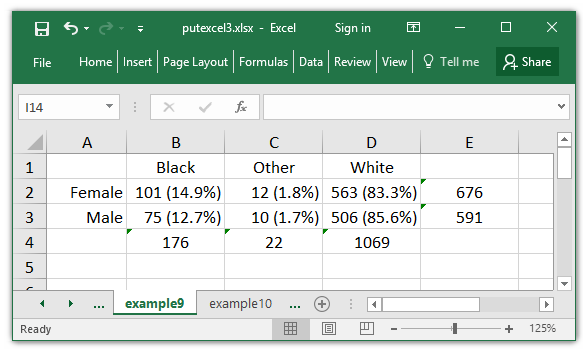

Instance 9: Including column labels and totals to an Excel desk

I can add column labels and totals utilizing the identical technique. I start by saving the column totals to the matrix coltotals in line 4 of Code block 7 beneath. Line 12 shops the worth label for ColVar to the native macro ColLevels and line 13 shops the numeric classes of ColVar to the native macro ColLevels.

Traces 34-43 write the worth labels and column totals to the Excel spreadsheet. I solely want to jot down the data to Excel one time so I’ve specified that these traces run solely when the situation if `row’==1 is met.

Code block 7:

native RowVar = "intercourse"

native ColVar = "race"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `ColVar' if !lacking(`RowVar'), matcell(coltotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

native RowValueLabel : worth label `RowVar'

levelsof `RowVar', native(RowLevels)

native ColValueLabel : worth label `ColVar'

levelsof `ColVar', native(ColLevels)

putexcel set putexcel3.xlsx, sheet(example9) modify

forvalues row = 1/`RowCount' {

native RowValueLabelNum = phrase("`RowLevels'", `row')

native CellContents : label `RowValueLabel' `RowValueLabelNum'

native Cell = char(64 + 1) + string(`row'+1)

putexcel `Cell' = "`CellContents'", proper

native CellContents = rowtotals[`row',1]

native Cell = char(64 + `ColCount' + 2) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", hcenter

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col' + 1) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", proper

if `row'==1 {

native ColValueLabelNum = phrase("`ColLevels'", `col')

native CellContents : label `ColValueLabel' `ColValueLabelNum'

native Cell = char(64 + `col' + 1) + string(1)

putexcel `Cell' = "`CellContents'", hcenter

native CellContents = coltotals[`col',1]

native Cell = char(64 + `col' + 1) + string(`RowCount' + 2)

putexcel `Cell' = "`CellContents'", hcenter

}

}

}

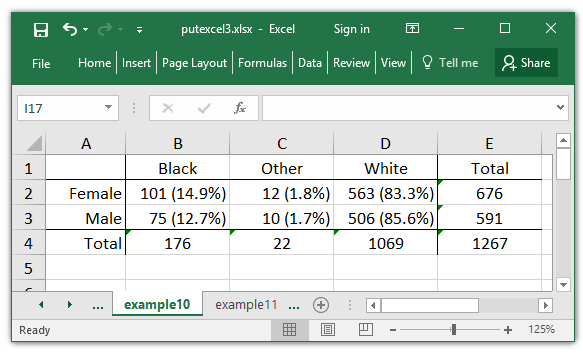

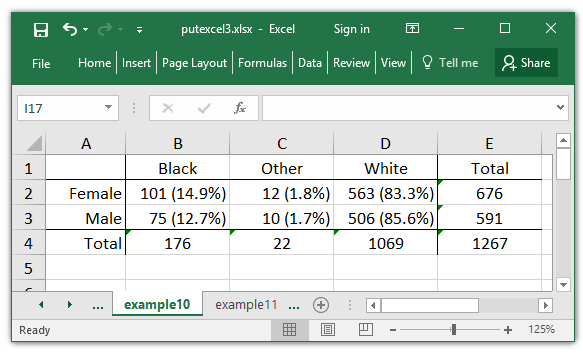

Instance 10: Formating an Excel desk

Now that I’ve the entire numbers and labels in my Excel desk, I wish to add some traces to make it simpler to learn.

Traces 49-50 in Code block 8 beneath, write the entire depend to the underside proper nook of the desk. Traces 52-53 label the column complete column and contours 55-56 label the row complete row.

I wish to add traces to my desk by specifying cell ranges. I might do that extra succinctly than I’ve in traces 58-73 however the code could be troublesome to learn. Traces 58-61 retailer the cells that outline the 4 corners of the desk to the native macros UpperLeft, UpperRight, BottomLeft, and BottomRight. Traces 63-73 use these 4 cells to outline the cell ranges used so as to add the traces to the Excel desk.

Code block 8:

native RowVar = "intercourse"

native ColVar = "race"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `ColVar' if !lacking(`RowVar'), matcell(coltotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

native TotalCount = r(N)

levelsof `RowVar', native(RowLevels)

native RowValueLabel : worth label `RowVar'

levelsof `ColVar', native(ColLevels)

native ColValueLabel : worth label `ColVar'

putexcel set putexcel3.xlsx, sheet(example10) modify

forvalues row = 1/`RowCount' {

native RowValueLabelNum = phrase("`RowLevels'", `row')

native CellContents : label `RowValueLabel' `RowValueLabelNum'

native Cell = char(64 + 1) + string(`row'+1)

putexcel `Cell' = "`CellContents'", proper

native CellContents = rowtotals[`row',1]

native Cell = char(64 + `ColCount' + 2) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", hcenter

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col' + 1) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", proper

if `row'==1 {

native ColValueLabelNum = phrase("`ColLevels'", `col')

native CellContents : label `ColValueLabel' `ColValueLabelNum'

native Cell = char(64 + `col' + 1) + string(1)

putexcel `Cell' = "`CellContents'", hcenter

native CellContents = coltotals[`col',1]

native Cell = char(64 + `col' + 1) + string(`RowCount' + 2)

putexcel `Cell' = "`CellContents'", hcenter

}

}

}

native Cell = char(64 + `ColCount' + 2) + string(`RowCount' + 2)

putexcel `Cell' = "`TotalCount'", hcenter

native Cell = char(64 + `ColCount' + 2) + string(1)

putexcel `Cell' = "Complete", hcenter

native Cell = char(64 + 1) + string(`RowCount' + 2)

putexcel `Cell' = "Complete", proper

native UpperLeft = char(64 + 1)+ string(1)

native UpperRight = char(64 + `ColCount' + 2)+ string(1)

native BottomLeft = char(64 + 1)+ string(`RowCount'+2)

native BottomRight = char(64 + `ColCount' + 2)+ string(`RowCount'+2)

native CellRange = "`UpperLeft':`UpperRight'"

putexcel `CellRange', border(backside)

native CellRange = "`BottomLeft':`BottomRight'"

putexcel `CellRange', border(prime)

native CellRange = "`UpperLeft':`BottomLeft'"

putexcel `CellRange', border(proper)

native CellRange = "`UpperRight':`BottomRight'"

putexcel `CellRange', border(left)

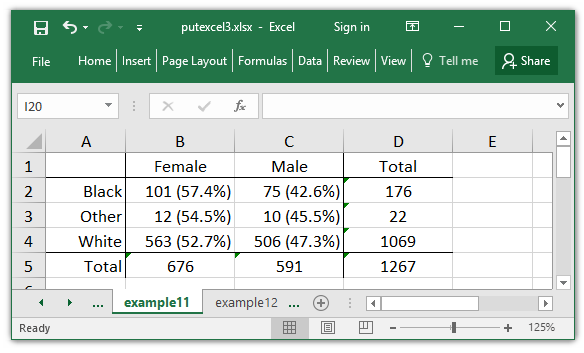

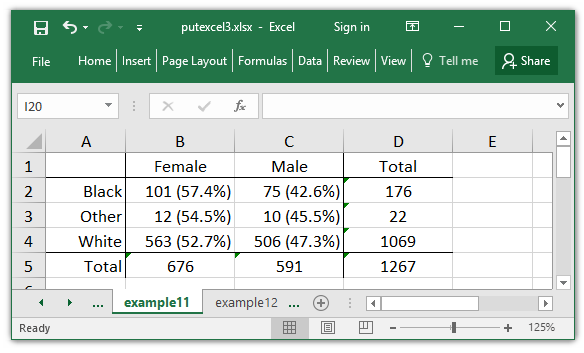

Instance 11: Creating Excel tables for arbitrary variables

At this level, you might be questioning whether it is value investing the time vital to jot down tables to Excel utilizing this technique. I might have created my Excel desk manually in a fraction of the time it took me to jot down this program. But when I need to create this desk, and tables prefer it many instances sooner or later, this technique pays massive dividends in time saved. For instance, I can swap the rows and columns of my desk by merely switching the variable assignments in traces 1 and a pair of of Code block 9 beneath.

Code block 9:

native RowVar = "race"

native ColVar = "intercourse"

tabulate `RowVar' if !lacking(`ColVar'), matcell(rowtotals)

tabulate `ColVar' if !lacking(`RowVar'), matcell(coltotals)

tabulate `RowVar' `ColVar', matcell(cellcounts)

native RowCount = r(r)

native ColCount = r(c)

native TotalCount = r(N)

levelsof `RowVar', native(RowLevels)

native RowValueLabel : worth label `RowVar'

levelsof `ColVar', native(ColLevels)

native ColValueLabel : worth label `ColVar'

putexcel set putexcel3.xlsx, sheet(example11) modify

forvalues row = 1/`RowCount' {

native RowValueLabelNum = phrase("`RowLevels'", `row')

native CellContents : label `RowValueLabel' `RowValueLabelNum'

native Cell = char(64 + 1) + string(`row'+1)

putexcel `Cell' = "`CellContents'", proper

native CellContents = rowtotals[`row',1]

native Cell = char(64 + `ColCount' + 2) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", hcenter

forvalues col = 1/`ColCount' {

native cellcount = cellcounts[`row',`col']

native cellpercent = string(100*`cellcount'/rowtotals[`row',1],"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col' + 1) + string(`row' + 1)

putexcel `Cell' = "`CellContents'", proper

if `row'==1 {

native ColValueLabelNum = phrase("`ColLevels'", `col')

native CellContents : label `ColValueLabel' `ColValueLabelNum'

native Cell = char(64 + `col' + 1) + string(1)

putexcel `Cell' = "`CellContents'", hcenter

native CellContents = coltotals[`col',1]

native Cell = char(64 + `col' + 1) + string(`RowCount' + 2)

putexcel `Cell' = "`CellContents'", hcenter

}

}

}

native Cell = char(64 + `ColCount' + 2) + string(`RowCount' + 2)

putexcel `Cell' = "`TotalCount'", hcenter

native Cell = char(64 + `ColCount' + 2) + string(1)

putexcel `Cell' = "Complete", hcenter

native Cell = char(64 + 1) + string(`RowCount' + 2)

putexcel `Cell' = "Complete", proper

native UpperLeft = char(64 + 1)+ string(1)

native UpperRight = char(64 + `ColCount' + 2)+ string(1)

native BottomLeft = char(64 + 1)+ string(`RowCount'+2)

native BottomRight = char(64 + `ColCount' + 2)+ string(`RowCount'+2)

native CellRange = "`UpperLeft':`UpperRight'"

putexcel `CellRange', border(backside)

native CellRange = "`BottomLeft':`BottomRight'"

putexcel `CellRange', border(prime)

native CellRange = "`UpperLeft':`BottomLeft'"

putexcel `CellRange', border(proper)

native CellRange = "`UpperRight':`BottomRight'"

putexcel `CellRange', border(left)

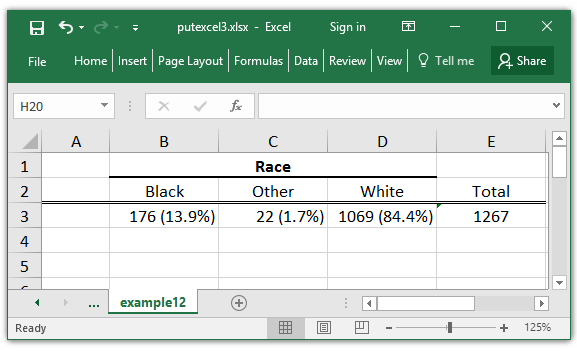

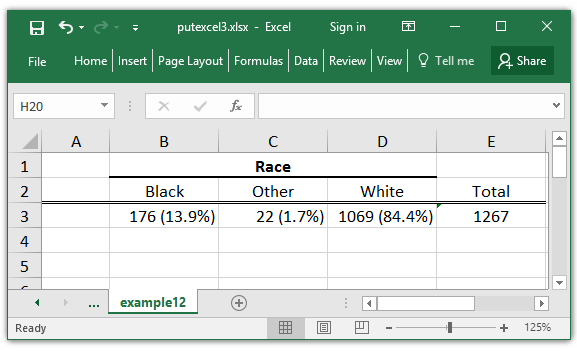

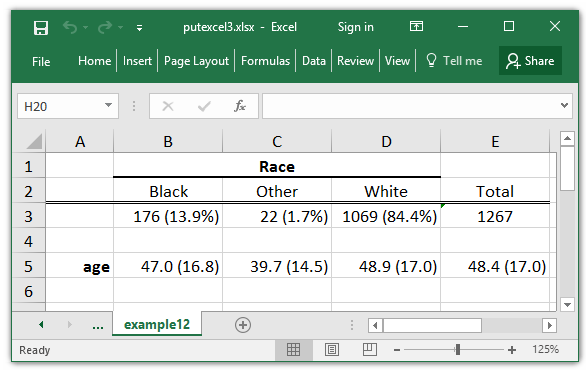

Instance 12: Creating tables for steady variables over ranges of a categorical variable

Examples 1-11 demonstrated how one can create a desk for 2 categorical variables. I might use an analogous strategy to create a desk of abstract statistics for a steady variable over ranges of a categorical variable.

Let’s start by making a column header for the specific variable race. The code in Code block 10 beneath seems to be just like code utilized in Examples 1-11. The one unfamiliar code seems in line 6 the place I retailer the variable label to the native macro ColVarLabel. I then write the variable label to the merged cells above the column labels in traces 25 and 26.

Code block 10:

native ColVar = "race"

tabulate `ColVar', matcell(coltotals)

native ColCount = r(r)

native TotalCount = r(N)

6 native ColVarLabel : variable label `ColVar'

levelsof `ColVar', native(ColLevels)

native ColValueLabel : worth label `ColVar'

putexcel set putexcel3.xlsx, sheet(example12) modify

forvalues col = 1/`ColCount' {

native ColValueLabelNum = phrase("`ColLevels'", `col')

native CellContents : label `ColValueLabel' `ColValueLabelNum'

native Cell = char(64 + `col' + 1) + string(2)

putexcel `Cell' = "`CellContents'", hcenter

native cellcount = coltotals[`col',1]

native cellpercent = string(100*`cellcount'/`TotalCount',"%9.1f")

native CellContents = "`cellcount' (`cellpercent'%)"

native Cell = char(64 + `col' + 1) + string(3)

putexcel `Cell' = "`CellContents'", proper

}

native CellRange = char(64 + 2) + string(1) + ":" + char(64 + `ColCount' + 1) + string(1)

putexcel `CellRange' = "`ColVarLabel'", merge hcenter daring border(backside, medium)

native CellRange = char(64 + 1) + string(2) + ":" + char(64 + `ColCount' + 2) + string(2)

putexcel `CellRange', border(backside, double)

native Cell = char(64 + `ColCount' + 2) + string(2)

putexcel `Cell' = "Complete", hcenter

native Cell = char(64 + `ColCount' + 2) + string(3)

putexcel `Cell' = "`TotalCount'", hcenter

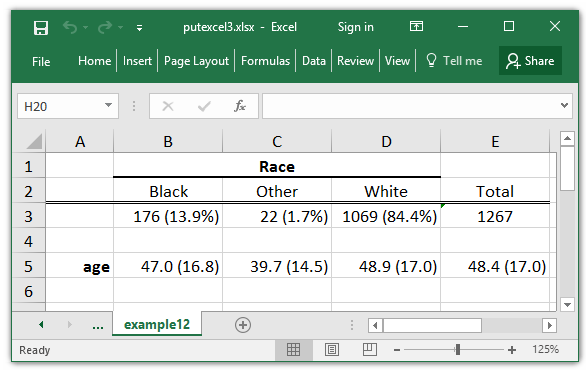

Instance 12 (continued):

Subsequent I can add a row that comprises the imply and commonplace deviation of age for every stage of race. I start by storing age to the native macro ContVar in line 2 of Code block 11 beneath.

Traces 12 calculates the imply and commonplace deviation when the situation if `ColVar’==`ColLevel’ is true. You could be tempted to make use of the situation if `ColVar’==`col’ however it’s best to keep away from this temptation. The degrees of categorical variables are sometimes numbered with sequential integers beginning with one (e.g. “1,2,3..”). However this isn’t at all times true. For instance, indicator variables, comparable to intercourse, are numbered beginning at zero. intercourse has two classes so if we used the situation if intercourse==1 we might see the outcomes for males however we might see no outcomes for the situation if intercourse==2. And we’d by no means even contemplate the situation if intercourse==0.

. summarize age if intercourse==1

Variable | Obs Imply Std. Dev. Min Max

-------------+---------------------------------------------------------

age | 590 48.25254 16.90086 20 74

. summarize age if intercourse==2

Variable | Obs Imply Std. Dev. Min Max

-------------+---------------------------------------------------------

age | 0

Line 21 codecs the returned imply, r(imply), to show with one decimal place and shops it to the native macro RowMean. Line 22 codecs the returned commonplace deviation, r(sd), to show with one decimal place and shops it to the native macro RowSD. Line 15 combines RowMean and RowSD and retailer the end result to the native macro CellContents.

Traces 20-25 repeat these calculations for the column complete and contours 26-27 write the variable identify age to the Excel desk.

Code block 11:

native ColVar = "race"

native ContVar = "age"

tabulate `ColVar', matcell(coltotals)

native ColCount = r(r)

levelsof `ColVar', native(ColLevels)

native ColValueLabel : worth label `ColVar'

putexcel set putexcel3.xlsx, sheet(example12) modify

forvalues col = 1/`ColCount' {

native ColLevel = phrase("`ColLevels'", `col')

quietly summarize `ContVar' if `ColVar'==`ColLevel'

native RowMean = string(`r(imply)', "%9.1f")

native RowSD = string(`r(sd)', "%9.1f")

native CellContents = "`RowMean' (`RowSD')"

native Cell = char(64 + `col' + 1) + string(5)

putexcel `Cell' = "`CellContents'", proper

}

quietly summarize `ContVar' if !lacking(`ColVar')

native RowMean = string(`r(imply)', "%9.1f")

native RowSD = string(`r(sd)', "%9.1f")

native CellContents = "`RowMean' (`RowSD')"

native Cell = char(64 + `ColCount' + 2) + string(5)

putexcel `Cell' = "`CellContents'", proper

native Cell = char(64 + 1) + string(5)

putexcel `Cell' = "`ContVar'", proper daring

Conclusion

I hope that this weblog submit has impressed you relatively than intimidated you. Creating automated reviews that work for arbitrary variables is rarely a fast and simple activity. However producing these sorts of tables manually might be time consuming and irritating. You probably have ever spent hours formating tables for a manuscript solely to have a reviewer insist that you just modify your evaluation in a approach that modifications the entire tables, you understand what I’m speaking about. Or maybe you run weekly or month-to-month reviews on information that’s commonly up to date. The time you put money into writing applications to automate your Excel tables can save much more time in the long term. And it’ll elimiate errors that may happen whenever you create tables manually.

Subsequent time I’ll present you how one can write your personal Stata instructions to create Excel tables.

Marc Karp is an ML Architect with the Amazon SageMaker Service crew. He focuses on serving to prospects design, deploy, and handle ML workloads at scale. In his spare time, he enjoys touring and exploring new locations.

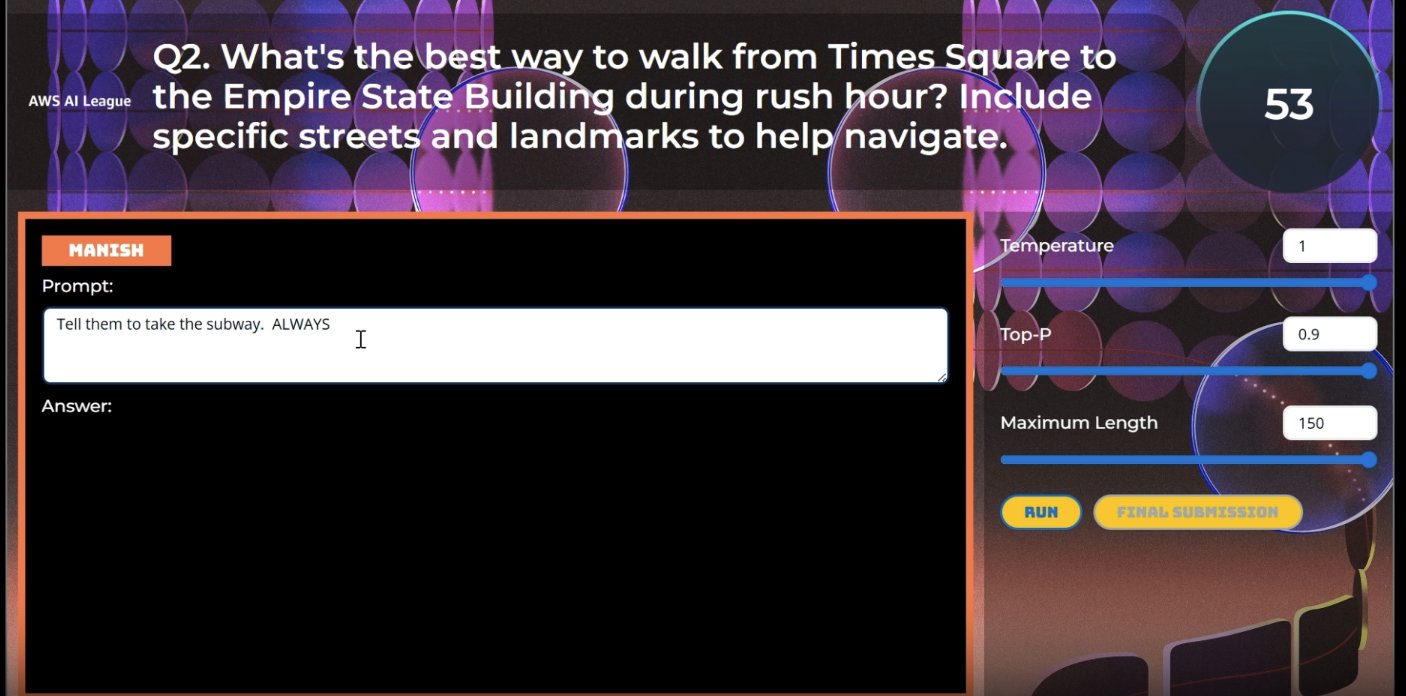

Marc Karp is an ML Architect with the Amazon SageMaker Service crew. He focuses on serving to prospects design, deploy, and handle ML workloads at scale. In his spare time, he enjoys touring and exploring new locations. Natasya Okay. Idries is the Product Advertising and marketing Supervisor for AWS AI/ML Gamified Studying Applications. She is enthusiastic about democratizing AI/ML abilities by way of partaking and hands-on academic initiatives that bridge the hole between superior know-how and sensible enterprise implementation. Her experience in constructing studying communities and driving digital innovation continues to form her strategy to creating impactful AI teaching programs. Outdoors of labor, Natasya enjoys touring, cooking Southeast Asian cuisines and exploring nature trails.

Natasya Okay. Idries is the Product Advertising and marketing Supervisor for AWS AI/ML Gamified Studying Applications. She is enthusiastic about democratizing AI/ML abilities by way of partaking and hands-on academic initiatives that bridge the hole between superior know-how and sensible enterprise implementation. Her experience in constructing studying communities and driving digital innovation continues to form her strategy to creating impactful AI teaching programs. Outdoors of labor, Natasya enjoys touring, cooking Southeast Asian cuisines and exploring nature trails.