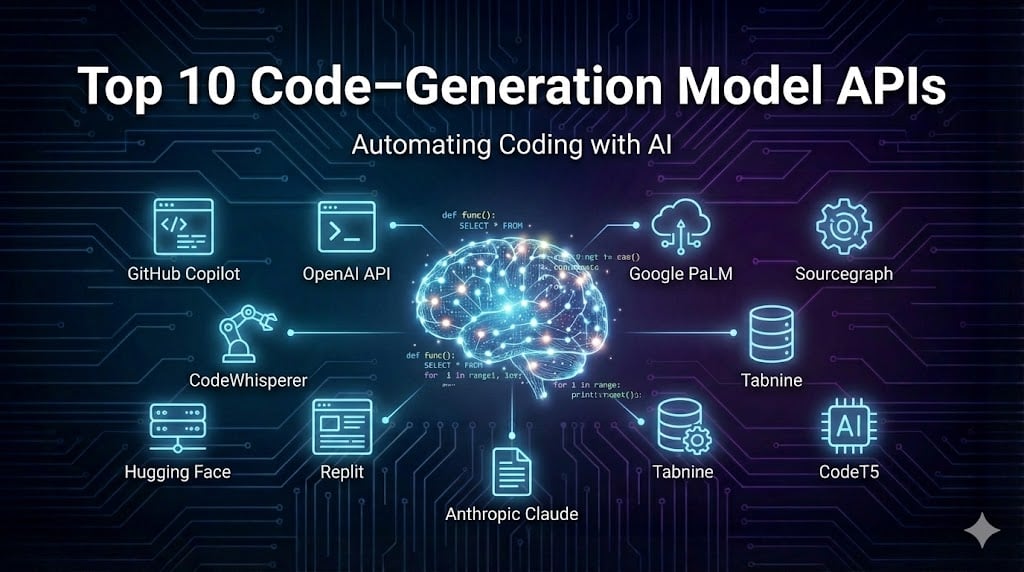

Quick summary – What are code‑generation model APIs and which ones should developers use in 2026?

Answer: Code‑generation APIs are AI services that generate, complete or refactor code when given natural‑language prompts or partial code. Modern models go beyond autocomplete; they can read entire repositories, call tools, run tests and even open pull requests. This guide compares leading APIs (OpenAI’s Codex/GPT‑5, Anthropic’s Claude, Google’s Gemini, Amazon Q, Mistral’s Codestral, DeepSeek R1, Clarifai’s StarCoder2, IQuest Coder, Meta’s open models and multi‑agent platforms like Stride 100×) on features such as context window, tool integration and cost. It also explores emerging research (diffusion language models, recursive language models and code‑flow training) and shows how to integrate these APIs into your IDE, agentic workflows and CI/CD pipelines. Each section includes expert insights to help you make informed decisions.

The explosion of AI coding assistants over the past few years has changed how developers write, test and deploy software. Instead of manually composing boilerplate or searching Stack Overflow, engineers now leverage code‑generation models that speak natural language and understand complex repositories. These services are available through APIs and IDE plug‑ins, making them accessible to freelancers and enterprises alike. As the landscape evolves, new models emerge with larger context windows, better reasoning and more efficient architectures. In this article we’ll compare the top 10 code‑generation model APIs for 2026, explain how to evaluate them, and highlight research trends shaping their future. As a market‑leading AI company, Clarifai believes in transparency, fairness and responsible innovation; we’ll integrate our own products where relevant and share practices that align with E‑E‑A‑T (Experience, Expertise, Authoritativeness and Trustworthiness). Let’s dive in.

Quick Digest – What You’ll Learn

- Definition and importance of code‑generation APIs and why they matter for IDEs, agents and automation.

- Evaluation criteria: supported languages, context windows, tool integration, benchmarks, cost and privacy.

- Comparative profiles for ten leading models, including proprietary and open‑source options.

- Step‑by‑step integration guide for IDEs, agentic coding and CI/CD pipelines.

- Emerging trends: diffusion models, recursive language models, code‑flow training, RLVR and on‑device models.

- Real‑world case studies and expert quotes to ground theoretical concepts in practice.

- FAQs addressing common concerns about adoption, privacy and the future of AI coding.

What Are Code‑Generation Model APIs and Why Do They Matter?

Quick summary – What do code‑generation APIs do?

These APIs allow developers to offload coding tasks to AI. Modern models can generate functions from natural‑language descriptions, refactor legacy modules, write tests, find bugs and even document code. They work through REST endpoints or IDE extensions, returning structured outputs that can be integrated into projects.

Coding assistants began as autocomplete tools but have evolved into agentic systems that read and edit entire repositories. They integrate with IDEs, command‑line interfaces and continuous‑integration pipelines. In 2026, the market offers dozens of models with different strengths: some excel at reasoning, others at scaling to millions of tokens, and some are open‑source for self‑hosting.

Why These APIs Are Transforming Software Development

- Time‑to‑market reduction: AI assistants automate repetitive tasks like scaffolding, documentation and testing, freeing engineers to focus on architecture and product features. Studies show that developers adopting AI tools reduce coding time and accelerate release cycles.

- Quality and consistency: The best models incorporate training data from diverse repositories and can spot errors, enforce style guides and suggest security improvements. Some even integrate vulnerability scanning into the generation process.

- Agentic workflows: Instead of writing code line by line, developers now orchestrate fleets of autonomous agents. In this paradigm, a conductor works with a single agent in an interactive loop, while an orchestrator coordinates multiple agents running concurrently. This shift empowers teams to tackle large projects with fewer engineers, but it requires new thinking around prompts, context management and oversight.

Expert Insights – What the Experts Are Saying

- Plan before you code. Google Chrome engineering manager Addy Osmani urges developers to start with a clear specification and break work into small, iterative tasks. He notes that AI coding is “difficult and unintuitive” without structure, recommending a mini waterfall process (planning in 15 minutes) before writing any code.

- Provide extensive context. Experienced users emphasize the need to feed AI models with all relevant files, documentation and constraints. Tools like Claude Code support importing entire repositories and summarizing them into manageable prompts.

- Mix models for best results. Clarifai’s industry guide underscores that there is no single “best” model; combining large general models with smaller domain‑specific ones can improve accuracy and reduce cost.

How to Evaluate Code‑Generation APIs (Key Criteria)

Supported Languages & Domains

Language coverage varies widely: StarCoder2 is trained on over 600 programming languages and Codestral on 80+, while other models specialize in Python, Java or JavaScript. Consider the languages your team uses, as models may handle dynamic typing differently or produce incorrect indentation in certain languages.

Context Window & Memory

A longer context means the model can analyze larger codebases and maintain coherence across multiple files. Leading models now offer context windows from 128 k tokens (Claude Sonnet, DeepSeek R1) up to 1 M tokens (Gemini 2.5 Pro). Clarifai’s experts note that contexts of 128 k–200 k tokens enable end‑to‑end documentation summarization and risk analysis.
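To get a feel for whether a codebase fits a given window, a rough estimate is often enough. The sketch below assumes about four characters per token, which is only a heuristic (real tokenizers differ by model), and the function names and the reserved output budget are illustrative choices, not part of any vendor SDK.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string (ceiling of chars / 4)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def repo_fits(root: str, context_window: int, reserve_for_output: int = 8_000,
              suffixes: tuple[str, ...] = (".py", ".md")) -> tuple[int, bool]:
    """Sum estimated tokens over matching files and compare with the window.

    Returns (estimated_tokens, fits), where fits leaves room for the reply.
    """
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total, total + reserve_for_output <= context_window
```

For example, `repo_fits("my_project", context_window=128_000)` returns the estimate together with a yes/no answer; for a real decision, count tokens with the tokenizer of the model you plan to use.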

Agentic Capabilities & Tool Integration

Basic completion models return a snippet given a prompt; advanced agentic models can run tests, open files, call external APIs and even search the web. For example, Claude Code’s Agent SDK can read and edit files, run commands and coordinate subagents for parallel tasks. Multi‑agent frameworks like Stride 100× map codebases, create tasks and open pull requests autonomously.
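Under the hood, tool use usually comes down to the model emitting a structured request that your code executes and answers. The following is a minimal, vendor‑neutral sketch of that dispatch step; the tool names, the JSON shape and the canned return values are all hypothetical and do not reflect any particular SDK.

```python
import json
from typing import Callable

# Hypothetical tool registry: names and behaviors are illustrative only.
TOOLS: dict[str, Callable[..., str]] = {
    "read_file": lambda path: f"<contents of {path}>",
    "run_tests": lambda target=".": f"ran tests in {target}: 0 failures",
}


def dispatch(tool_call_json: str) -> str:
    """Execute one model-requested tool call and return the result as text.

    Expects JSON of the assumed form {"name": ..., "arguments": {...}}.
    Errors are returned as text so they can be fed back to the model.
    """
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    try:
        return TOOLS[name](**call.get("arguments", {}))
    except TypeError as exc:  # wrong or missing arguments
        return f"error: {exc}"
```

Returning errors as plain text rather than raising lets the agent loop pass the message back to the model, which can then correct its own call.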

Benchmarks & Accuracy

Benchmarks help quantify performance across tasks. Common tests include:

- HumanEval/EvalPlus: Measures the model’s ability to generate correct Python functions from descriptions and handle edge cases.

- SWE‑Bench: Evaluates real‑world software engineering tasks by editing entire GitHub repositories and running unit tests.

- APPS: Assesses algorithmic reasoning with complex problem sets.

Note that a high score on one benchmark doesn’t guarantee general success; look at multiple metrics and user reviews.
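Scores on HumanEval‑style benchmarks are commonly reported as pass@k: the chance that at least one of k sampled solutions passes the tests. As a sketch, the standard unbiased estimator can be computed from n generated samples of which c were correct; the function name below is our own.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate.

    Probability that at least one of k samples, drawn without replacement
    from n generated samples of which c are correct, passes the tests.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)
```

Computing this per problem and averaging over the benchmark gives the headline number; comparing pass@1 with pass@10 shows how much a model relies on retries.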

Performance & Cost

Large proprietary models offer high accuracy but may be expensive; open‑source models provide control and cost savings. Clarifai’s compute orchestration lets teams spin up secure environments, test multiple models concurrently and run inference locally with on‑premises runners. This infrastructure helps optimize cost while maintaining security and compliance.

Expert Insights – Tips from Research

- Smaller models can outperform larger ones. MIT researchers developed a technique that guides small language models to produce syntactically valid code, allowing them to outperform larger models while being more efficient.

- Reasoning models dominate the future. DeepSeek R1’s use of Reinforcement Learning with Verifiable Rewards (RLVR) demonstrates that reasoning‑oriented training significantly improves performance.

- Diffusion models enable bidirectional context. JetBrains researchers show that diffusion language models can generate out of order by conditioning on past and future context, mirroring how developers revise code.

Quick summary – What should developers look for when choosing a model?

Look at supported languages, context window length, agentic capabilities, benchmarks and accuracy, cost/pricing, and privacy/security features. Balancing these factors helps match the right model to your workflow.

Which Code‑Generation APIs Are Best for 2026? (Top Models Reviewed)

Below we profile the ten most influential models and platforms. Each section includes a quick summary, key capabilities, strengths, limitations and expert insights. Remember to evaluate models in the context of your stack, budget and regulatory requirements.

1. OpenAI Codex & GPT‑5 – Powerful Reasoning and Large Context

Quick summary – Why consider Codex/GPT‑5?

OpenAI’s Codex models (the engine behind early GitHub Copilot) and the latest GPT‑5 family are highly capable across languages and frameworks. GPT‑5 offers context windows of up to 400 k tokens and strong reasoning, while GPT‑4.1 provides balanced instruction following with up to 1 M tokens in some variants. These models support function calling and tool integration via the OpenAI API, making them suitable for complex workflows.

What They Do Well

- Versatile generation: Supports a wide range of languages and tasks, from simple snippets to full application scaffolding.

- Agentic integration: The API allows function calling to access external services and run code, enabling agentic behaviors. The models can work through IDE plug‑ins (Copilot), ChatGPT and command‑line interfaces.

- Extensive ecosystem: Rich set of tutorials, plug‑ins and community tools. Copilot integrates directly into VS Code and JetBrains, offering real‑time suggestions and AI chat.

Limitations

- Cost: Pricing is higher than many open‑source alternatives, especially for large context usage. The pay‑as‑you‑go model can lead to unpredictable expenses without careful monitoring.

- Privacy: Code submitted to the API is processed by OpenAI’s servers, which may be a concern for regulated industries. Self‑hosting is not available.

Expert Insights

- Developers find success when they structure prompts as if they were pair‑programming with a human. Addy Osmani notes that you should treat the model like a junior engineer: provide context, ask it to write a spec first and then generate code piece by piece.

- Researchers emphasize that reasoning‑oriented post‑training, such as RLVR, enhances the model’s ability to explain its thought process and produce correct solutions.

2. Anthropic Claude Sonnet 4.5 & Claude Code – Safety and Instruction Following

Quick summary – How does Claude differ?

Anthropic’s Claude Sonnet models (v3.7 and v4.5) emphasize safe, polite and robust instruction following. They offer 128 k context windows and excel at multi‑file reasoning and debugging. The Claude Code API adds an Agent SDK that grants AI agents access to your file system, enabling them to read, edit and execute code.

What They Do Well

- Extended context: Supports large prompts, allowing analysis of entire repositories.

- Agent SDK: Agents can run CLI commands, edit files and search the web, coordinating subagents and managing context.

- Safety controls: Anthropic places strict alignment measures on outputs, reducing harmful or insecure suggestions.

Limitations

- Availability: Not all features (e.g., Claude Code SDK) are widely available. There may be waitlists or capacity constraints.

- Cost: Paid tiers can be expensive at scale.

Expert Insights

- Anthropic recommends giving agents enough context (whole files, documentation and tests) to achieve good results. Their SDK automatically compacts context to avoid hitting the token limit.

- When building agents, think about parallelism: subagents can handle independent tasks concurrently, speeding up workflows.
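The parallelism point can be illustrated without any agent SDK. The sketch below fans independent tasks out to a thread pool and collects results; `fake_agent` is a hypothetical stand‑in for a real model or SDK call, and the error handling policy (capture per task, keep going) is one reasonable choice among several.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def run_subagents(tasks: list[str], worker: Callable[[str], str],
                  max_workers: int = 4) -> dict[str, str]:
    """Run independent tasks concurrently and return results keyed by task.

    Errors are captured per task so one failure does not cancel the rest.
    """
    def safe(task: str) -> str:
        try:
            return worker(task)
        except Exception as exc:
            return f"failed: {exc}"

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(safe, tasks))  # map preserves input order
    return dict(zip(tasks, results))


def fake_agent(task: str) -> str:
    # Stand-in for a real model or SDK call (hypothetical).
    if "flaky" in task:
        raise RuntimeError("tool timeout")
    return f"done: {task}"
```

Threads suit this pattern because agent calls are mostly network‑bound; tasks that touch the same files should not be run this way without some form of coordination.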

3. Google Gemini Code Assist (Gemini 2.5 Pro) – 1 M Token Context & Multimodal Intelligence

Quick summary – What sets Gemini 2.5 Pro apart?

Gemini 2.5 Pro extends Google’s Gemini family into coding. It offers up to 1 M tokens of context and can process code, text and images. Gemini Code Assist integrates with Google Cloud’s CLI and IDE plug‑ins, providing conversational assistance, code completion and debugging.

What It Does Well

- Large context: The 1 M token window allows entire repositories and design docs to be loaded into a prompt, which is ideal for summarizing codebases or performing risk analysis.

- Multimodal capabilities: It can interpret screenshots, diagrams and user interfaces, which is valuable for UI development.

- Integration with Google’s ecosystem: Works seamlessly with Firebase, Cloud Build and other GCP services.

Limitations

- Private beta: Gemini 2.5 Pro may be in limited release; access may be restricted.

- Cost and data privacy: Like other proprietary models, data must be sent to Google’s servers.

Expert Insights

- Clarifai’s industry guide notes that multimodal intelligence and retrieval‑augmented generation are major trends in next‑generation models. Gemini leverages these innovations to contextualize code with documentation, diagrams and search results.

- JetBrains researchers suggest that models with bi‑directional context, like diffusion models, may better mirror how developers refine code; Gemini’s long context helps approximate this behavior.

4. Amazon Q Developer (Formerly CodeWhisperer) – AWS Integration & Security Scans

Quick summary – Why choose Amazon Q?

Amazon’s Q Developer (formerly CodeWhisperer) focuses on secure, AWS‑optimized code generation. It supports multiple languages and integrates deeply with AWS services. The tool suggests code snippets, infrastructure‑as‑code templates and even policy recommendations.

What It Does Well

- AWS integration: Provides context‑aware recommendations that automatically configure IAM policies, Lambda functions and other AWS resources.

- Security and licensing checks: Scans code for vulnerabilities and compliance issues, offering remediation suggestions.

- Free tier for individuals: Offers unlimited usage for one user in certain tiers, making it accessible to hobbyists and small startups.

Limitations

- Platform lock‑in: Best suited to developers deeply invested in AWS. Projects hosted elsewhere may see less benefit.

- Boilerplate bias: May emphasize AWS‑specific patterns over general solutions, and suggestions can feel generic.

Expert Insights

- Reviews emphasize using Amazon Q when you are already within the AWS ecosystem; it shines when you need to generate serverless functions, CloudFormation templates or manage IAM policies.

- Keep in mind the trade‑offs between convenience and vendor lock‑in; evaluate portability if you need multi‑cloud support.

5. Mistral Codestral – Open Weights and Fill‑in‑the‑Middle

Quick summary – What makes Codestral unique?

Codestral is a 22 B parameter model released by Mistral. It’s trained on 80+ programming languages, supports fill‑in‑the‑middle (FIM) and has a dedicated API endpoint with a generous beta period.

What It Does Well

- Open weights: Codestral’s weights are freely available, enabling self‑hosting and fine‑tuning.

- FIM capabilities: It excels at infilling missing code segments, making it ideal for refactoring and partial edits. Developers report high accuracy on benchmarks like HumanEval.

- Integration into popular tools: Supported by frameworks like LlamaIndex and LangChain and IDE extensions such as Continue.dev and Tabnine.
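Fill‑in‑the‑middle works by sending the code before and after a gap and asking the model for what belongs in between. The sketch below only builds such a request and splices the answer back in; the field names `prompt` and `suffix` and the model identifier are assumptions for illustration, so check the provider’s API reference before relying on them.

```python
def build_fim_request(source: str, cursor: int, model: str = "codestral-latest",
                      max_tokens: int = 128) -> dict:
    """Split source at the cursor and package prefix/suffix for an infill call."""
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor out of range")
    return {
        "model": model,             # assumed model identifier
        "prompt": source[:cursor],  # code before the gap (assumed field name)
        "suffix": source[cursor:],  # code after the gap (assumed field name)
        "max_tokens": max_tokens,
    }


def apply_completion(source: str, cursor: int, completion: str) -> str:
    """Insert the model's infill text at the cursor position."""
    return source[:cursor] + completion + source[cursor:]
```

Because the suffix is part of the request, the model can match the existing indentation and return type instead of guessing how the function ends.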

Limitations

- Context size: While solid, it may not match the 128 k+ windows of newer proprietary models.

- Documentation and support: Being a newer entrant, community resources are still developing.

Expert Insights

- Developers praise Codestral for offering open weights and competitive performance, enabling experimentation without vendor lock‑in.

- Clarifai recommends combining open models like Codestral with specialized models through compute orchestration to optimize cost and accuracy.

6. DeepSeek R1 & Chat V3 – Affordable Open‑Source Reasoning Models

Quick summary – Why choose DeepSeek?

DeepSeek R1 and Chat V3 are open‑source models renowned for introducing Reinforcement Learning with Verifiable Rewards (RLVR). R1 matches proprietary models on coding benchmarks while being cost‑effective.

What They Do Well

- Reasoning‑oriented training: RLVR enables the model to produce detailed reasoning and step‑by‑step solutions.

- Competitive benchmarks: DeepSeek R1 performs well on HumanEval, SWE‑Bench and APPS, often rivaling larger proprietary models.

- Cost and openness: The model is open weight, allowing for self‑hosting and modifications. Context windows of up to 128 k tokens support large codebases.

Limitations

- Ecosystem: While growing, DeepSeek’s ecosystem is smaller than those of OpenAI or Anthropic; plug‑ins and tutorials may be limited.

- Performance variance: Some developers report inconsistencies when moving between languages or domains.

Expert Insights

- Researchers emphasize that RLVR and similar techniques show that smaller, well‑trained models can compete with giants, thereby democratizing access to powerful coding assistants.

- Clarifai notes that open‑source models can be combined with domain‑specific models via compute orchestration to tailor solutions for regulated industries.

7. Clarifai StarCoder2 & Compute Orchestration Platform – Balanced Performance and Trust

Quick summary – Why pick Clarifai?

StarCoder2‑15B is Clarifai’s flagship code‑generation model. It’s trained on more than 600 programming languages and offers a large context window with robust performance. It’s accessible through Clarifai’s platform, which includes compute orchestration, local runners and fairness dashboards.

What It Does Well

- Performance and breadth: Handles diverse languages and tasks, making it a versatile choice for enterprise projects. The model’s API returns consistent results with secure handling.

- Compute orchestration: Clarifai’s platform allows teams to spin up secure environments, run multiple models in parallel and monitor performance. Local runners enable on‑premises inference, addressing data‑privacy requirements.

- Fairness and bias monitoring: Built‑in dashboards help detect and mitigate bias across outputs, supporting responsible AI development.

Limitations

- Parameter size: At 15 B parameters, StarCoder2 may not match the raw power of 40 B+ models, but it strikes a balance between capability and efficiency.

- Community visibility: As a newer entrant, it may not have as many third‑party integrations as older models.

Expert Insights

- Clarifai experts advocate for mixing models: using general models like StarCoder2 alongside domain‑specific small models to achieve optimal results.

- The company highlights emerging innovations such as multimodal intelligence, chain‑of‑thought reasoning, mixture‑of‑experts architectures and retrieval‑augmented generation, all of which the platform is designed to support.

8. IQuest Coder V1 – Code‑Flow Training and Efficient Architectures

Quick summary – What’s special about IQuest Coder?

IQuest Coder comes from the AI research arm of a quantitative hedge fund. Launched in January 2026, it introduces code‑flow training: training on commit histories and how code evolves over time. It offers Instruct, Thinking and Loop variants, with parameter sizes ranging from 7 B to 40 B.

What It Does Well

- High benchmarks with fewer parameters: The 40 B variant achieves 81.4 % on SWE‑Bench Verified and 81.1 % on LiveCodeBench, matching or beating models with 400 B+ parameters.

- Reasoning and efficiency: The Thinking variant employs reasoning‑driven reinforcement learning and a 128 k context window. The Loop variant uses a recurrent transformer architecture to reduce resource usage.

- Open source: Full model weights, training code and evaluation scripts are available for download.

Limitations

- New ecosystem: Being new, IQuest’s community support and integrations are still growing.

- Licensing constraints: The license includes restrictions on commercial use by large companies.

Expert Insights

- The success of IQuest Coder underscores that innovation in training methodology can outperform pure scaling. Code‑flow training teaches the model how code evolves, leading to more coherent suggestions during refactoring.

- It also highlights that industry outsiders, such as hedge funds, are now building state‑of‑the‑art models, hinting at a broader democratization of AI research.

9. Meta’s Code Llama & Llama 4 Code / Qwen & Other Open‑Source Alternatives – Large Context & Community

Quick summary – Where do open models like Code Llama and Qwen fit?

Meta’s Code Llama and Llama 4 Code offer open weights with context windows up to 10 M tokens, making them suitable for very large codebases. Qwen‑Code and similar models provide multilingual support and are freely available.

What They Do Well

- Scale: Extremely long contexts allow analysis of entire monorepos.

- Open ecosystem: Community‑driven development leads to new fine‑tunes, benchmarks and plug‑ins.

- Self‑hosting: Developers can deploy these models on their own hardware for privacy and cost control.

Limitations

- Lower performance on some benchmarks: While impressive, these models may not match the reasoning of proprietary models without fine‑tuning.

- Hardware requirements: Running models with 10 M‑token contexts demands significant VRAM and compute; not all teams can support this.

Expert Insights

- Clarifai’s guide highlights that edge and on‑device models are a growing trend. Self‑hosting open models like Code Llama may be essential for applications requiring strict data control.

- Using mixture‑of‑experts or adapter modules can extend these models’ capabilities without retraining the whole network.

10. Stride 100×, Tabnine, GitHub Copilot & Agentic Frameworks – Orchestrating Fleets of Models

Quick summary – Why consider agentic frameworks?

In addition to standalone models, multi‑agent platforms like Stride 100×, Tabnine, GitHub Copilot, Cursor, Continue.dev and others provide orchestration and integration layers. They connect models, code repositories and deployment pipelines, creating an end‑to‑end solution.

What They Do Well

- Task orchestration: Stride 100× maps codebases, creates tasks and generates pull requests automatically, allowing teams to manage technical debt and feature work.

- Privacy & self‑hosting: Tabnine offers on‑prem solutions for organizations that need full control over their code. Continue.dev and Cursor provide open‑source IDE plug‑ins that can connect to any model.

- Real‑time assistance: GitHub Copilot and similar tools offer inline suggestions, doc generation and chat functionality.

Limitations

- Ecosystem differences: Each platform ties into specific models or API providers. Some offer only proprietary integrations, while others support open‑source models.

- Subscription costs: Orchestration platforms often use seat‑based pricing, which can add up for large teams.

Expert Insights

- According to Qodo AI’s analysis, multi‑agent systems are the future of AI coding. They predict that developers will increasingly rely on fleets of agents that generate code, review it, create documentation and manage tests.

- Addy Osmani distinguishes between conductor tools (interactive, synchronous) and orchestrator tools (asynchronous, concurrent). The choice depends on whether you need interactive coding sessions or large automated refactors.

How to Integrate Code‑Generation APIs into Your Workflow

Quick summary – What’s the best way to use these APIs?

Start by planning your project, then choose a model that matches your languages and budget. Install the appropriate IDE extension or SDK, provide rich context and iterate in small increments. Use Clarifai’s compute orchestration to mix models and run them securely.

Step 1: Plan and Define Requirements

Before writing a single line of code, brainstorm your project and write a detailed specification. Document requirements, constraints and architecture decisions. Ask the AI model to help refine edge cases and create a project plan. This planning stage sets expectations for both human and AI partners.

Step 2: Choose the Right API and Set Up Credentials

Select a model based on the evaluation criteria above. Register for API keys, set usage limits and decide which model versions (e.g., GPT‑5 vs GPT‑4.1; Sonnet 4.5 vs 3.7) you’ll use.

Step 3: Install Extensions and SDKs

Most models offer IDE plug‑ins or command‑line interfaces. For example:

- Clarifai’s SDK lets you call StarCoder2 via REST and run inference on local runners; the local runner keeps your code on‑prem while enabling high‑speed inference.

- GitHub Copilot and Cursor integrate directly into VS Code; Claude Code and Gemini have CLI tools.

- Continue.dev and Tabnine support connecting to external models via API keys.

Step 4: Provide Context and Guidance

Upload or reference relevant files, functions and documentation. For multi‑file refactors, provide the entire module or repository; use retrieval‑augmented generation to bring in docs or related issues. Claude Code and similar agents can import full repos into context, automatically summarizing them.
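When you assemble context by hand, a simple approach is to pack files in priority order until a token budget is reached. The sketch below does that with the same rough four‑characters‑per‑token assumption used for estimates; the `### FILE:` header format and the function name are arbitrary illustrative choices.

```python
def pack_context(files: dict[str, str], budget_tokens: int,
                 chars_per_token: int = 4) -> tuple[str, list[str]]:
    """Concatenate files, in the given priority order, within a token budget.

    Files that would exceed the remaining budget are skipped, not truncated.
    Returns the prompt text and the list of skipped paths.
    """
    parts: list[str] = []
    skipped: list[str] = []
    used = 0
    for path, text in files.items():
        block = f"### FILE: {path}\n{text}\n"
        cost = -(-len(block) // chars_per_token)  # ceiling division
        if used + cost > budget_tokens:
            skipped.append(path)
            continue
        parts.append(block)
        used += cost
    return "".join(parts), skipped
```

Skipping whole files rather than cutting them mid‑function keeps every included file syntactically complete; the skipped list tells you what to summarize or retrieve separately.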

Step 5: Iterate in Small Chunks

Break the project into bite‑sized tasks. Ask the model to implement one function, fix one bug or write one test at a time. Review outputs carefully, run tests and provide feedback. If the model goes off track, revise the prompt or provide corrective examples.
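The generate, test and feed‑back cycle can be sketched as a small loop. Here `generate` stands in for any model call and `check` for your test runner; both are hypothetical callables you would supply, and the feedback prompt wording is only an example.

```python
from typing import Callable, Optional


def iterate(task: str, generate: Callable[[str], str],
            check: Callable[[str], Optional[str]],
            max_rounds: int = 3) -> tuple[str, int]:
    """Generate code, run a check, and feed any failure into the next prompt.

    check returns None on success or an error message on failure.
    Returns the accepted code and the number of rounds used.
    """
    prompt = task
    for round_no in range(1, max_rounds + 1):
        code = generate(prompt)
        error = check(code)
        if error is None:
            return code, round_no
        prompt = (f"{task}\n\nPrevious attempt:\n{code}\n\n"
                  f"It failed with:\n{error}\nFix it.")
    raise RuntimeError(f"no passing solution after {max_rounds} rounds")
```

Capping the number of rounds matters: if the model has not converged after a few attempts, a human rewrite of the task description usually helps more than another retry.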

Step 6: Automate in CI/CD

Integrate the API into continuous integration pipelines to automate code generation, testing and documentation. Multi‑agent frameworks like Stride 100× can generate pull requests, update READMEs and even perform code reviews. Clarifai’s compute orchestration enables running multiple models in a secure environment and capturing metrics for compliance.

Step 7: Monitor, Evaluate and Improve

Track model performance using unit tests, benchmarks and human feedback. Use Clarifai’s fairness dashboards to audit outputs for bias and adjust prompts accordingly. Consider mixing models (e.g., using GPT‑5 for reasoning and Codestral for infilling) to leverage strengths.
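Tracking does not need heavy tooling to start. As a minimal sketch, the class below records whether each model’s output passed your own checks and reports pass rates; the class and the model names used with it are placeholders, not part of any platform.

```python
from collections import defaultdict


class Scoreboard:
    """Track how often each model's output passed your own checks."""

    def __init__(self) -> None:
        # model name -> [passed, total]
        self._runs: dict[str, list[int]] = defaultdict(lambda: [0, 0])

    def record(self, model: str, passed: bool) -> None:
        stats = self._runs[model]
        stats[0] += int(passed)
        stats[1] += 1

    def pass_rate(self, model: str) -> float:
        passed, total = self._runs.get(model, (0, 0))
        return passed / total if total else 0.0

    def best(self) -> str:
        """Model with the highest pass rate; raises if nothing was recorded."""
        if not self._runs:
            raise ValueError("no runs recorded")
        return max(self._runs, key=self.pass_rate)
```

Keeping a separate scoreboard per task type (reasoning, infilling, test writing) is one way to decide which model to route each kind of request to.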

Emerging Trends & Future Directions in Code Generation

Quick summary – What’s next for AI coding?

Future models will improve how they edit code, manage context, reason about algorithms and run on edge devices. Research into diffusion models, recursive language models and new reinforcement learning techniques promises to reshape the landscape.

Diffusion Language Models – Out‑of‑Order Generation

Unlike autoregressive models that generate token by token, diffusion language models (d‑LLMs) condition on both past and future context. JetBrains researchers note that this aligns with how humans code: sketching functions, jumping ahead and then refining earlier parts. d‑LLMs can revisit and refine incomplete sections, enabling more natural infilling. They also support coordinated multi‑region updates: IDEs could mask several problematic regions and let the model regenerate them coherently.

Semi‑Autoregressive & Block Diffusion – Balancing Speed and Quality

Researchers are exploring semi‑autoregressive methods, such as Block Diffusion, which combine the efficiency of autoregressive generation with the flexibility of diffusion models. These approaches generate blocks of tokens in parallel while still allowing out‑of‑order adjustments.

Recursive Language Models – Self‑Managing Context

Recursive Language Models (RLMs) give LLMs a persistent Python REPL to manage their context. The model can inspect input data, call sub‑LLMs and store intermediate results. This approach addresses context rot by summarizing or externalizing information, enabling longer reasoning chains without exceeding context windows. RLMs may become the backbone of future agentic systems, allowing AI to manage its memory and reasoning.
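The core idea of delegating oversized input to sub‑calls can be shown with a much simpler toy than a real RLM. The sketch below is not the RLM method itself (there is no REPL and no model‑driven control flow); it only illustrates recursive splitting, with `ask` as a hypothetical stand‑in for a sub‑LLM call.

```python
from typing import Callable


def recursive_answer(text: str, ask: Callable[[str], str],
                     max_chars: int = 2_000) -> str:
    """Answer over text that may exceed a per-call character budget.

    If text fits, ask the sub-model directly. Otherwise split it in half,
    recurse on each half, and ask once more over the combined partial answers.
    Partial answers are truncated so the final call always fits the budget.
    """
    if max_chars < 4:
        raise ValueError("max_chars must be at least 4")
    if len(text) <= max_chars:
        return ask(text)
    mid = len(text) // 2
    half_budget = max_chars // 2 - 1
    partials = [recursive_answer(half, ask, max_chars)[:half_budget]
                for half in (text[:mid], text[mid:])]
    return ask("\n".join(partials))
```

No single call ever sees more than `max_chars` characters, which is the property that lets this pattern scale past a fixed context window, at the cost of losing detail in each summarization step.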

Code‑Flow Training & Evolutionary Data

IQuest Coder’s code‑flow training teaches the model how code evolves across commit histories, emphasizing dynamic patterns rather than static snapshots. This approach results in smaller models outperforming large ones on complex tasks, indicating that quality of data and training methodology can trump sheer scale.

Reinforcement Learning with Verifiable Rewards (RLVR)

RLVR allows models to learn from deterministic rewards for code and math problems, removing the need for human preference labels. This technique powers DeepSeek R1’s reasoning abilities and is likely to influence many future models.
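For code, a deterministic reward can be as simple as “did the candidate pass the tests?”. The sketch below shows such a binary reward; it is an illustration of the idea, not DeepSeek’s training setup, and because `exec` runs untrusted code it should only ever be used inside a sandbox.

```python
def verifiable_reward(candidate_src: str, func_name: str,
                      cases: list[tuple[tuple, object]]) -> float:
    """Return 1.0 if the candidate passes every test case, else 0.0.

    WARNING: exec runs untrusted code; use a sandbox in any real setting.
    """
    namespace: dict = {}
    try:
        exec(candidate_src, namespace)
        func = namespace[func_name]
        passed = all(func(*args) == expected for args, expected in cases)
        return 1.0 if passed else 0.0
    except Exception:
        # Syntax errors, a missing function, or runtime failures score zero.
        return 0.0
```

Because the reward is computed by running tests rather than by asking people for preferences, it is cheap to evaluate at scale and cannot be satisfied by code that merely looks plausible.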

Edge & On‑Device Models

Clarifai predicts significant growth in edge and domain‑specific models. Running code‑generation models on local hardware ensures privacy, reduces latency and enables offline development. Expect to see more slimmed‑down models optimized for mobile and embedded devices.

Multi‑Agent Orchestration

The future of coding will involve fleets of agents. Tools like Copilot Agent, Stride 100× and Tabnine orchestrate multiple models to handle tasks in parallel. Developers will increasingly act as conductors and orchestrators, guiding AI workflows rather than writing code directly.

Real‑World Case Studies & Expert Voices

Quick summary – What do real users and experts say?

Case studies show that integrating AI coding assistants can dramatically improve productivity, but success depends on planning, context and human oversight.

Stride 100× – Automating Tech Debt

In one case study, a mid‑sized fintech company adopted Stride 100× to tackle technical debt. Stride’s multi‑agent system scanned their repositories, mapped dependencies, created a backlog of tasks and generated pull requests with code fixes. The platform’s ability to open and review pull requests saved the team several weeks of manual work. Developers still reviewed the changes, but the AI handled the repetitive scaffolding and documentation.

Addy Osmani’s Coding Workflow

Addy Osmani reports that at Anthropic, around 90 percent of the code for their internal tools is now written by AI models. However, he cautions that success requires a disciplined workflow: start with a clear spec, break work into iterative chunks and provide abundant context. Without this structure, AI outputs can be chaotic; with it, productivity soars.

MIT Research – Small Models, Big Impact

MIT’s team developed a probabilistic method that guides small models to adhere to programming language rules, enabling them to beat larger models on code generation tasks. This research suggests that the future may lie in efficient, domain‑specialized models rather than ever‑larger networks.
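
This article does not describe the MIT method in detail, so the sketch below is not that method. It shows a much simpler related idea under stated assumptions: given several sampled candidates with model-assigned probabilities, discard those that violate Python's grammar and renormalize the rest. The function names and the candidate format are hypothetical.

```python
import ast


def is_valid_python(source: str) -> bool:
    """Check whether the source parses under Python's grammar."""
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        return False


def filter_and_renormalize(candidates: dict[str, float]) -> dict[str, float]:
    """Drop syntactically invalid candidates and rescale the remaining
    probabilities so they sum to 1. Returns {} if nothing survives."""
    valid = {src: p for src, p in candidates.items() if is_valid_python(src)}
    total = sum(valid.values())
    if total == 0:
        return {}
    return {src: p / total for src, p in valid.items()}


# Hypothetical samples from a small model, with made-up probabilities.
samples = {
    "x = 1": 0.2,
    "x = = 1": 0.5,       # most likely sample, but not valid Python
    "print('hi')": 0.3,
}
print(filter_and_renormalize(samples))
```

Even this crude filter shows why rule-aware decoding helps small models: the highest-probability sample here is invalid, and removing it shifts all the probability mass onto well-formed programs.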

Clarifai’s Platform – Fairness and Flexibility

Companies in regulated industries (finance, healthcare) have leveraged Clarifai’s compute orchestration and fairness dashboards to deploy code‑generation models securely. By running models on local runners and monitoring bias metrics, they were able to adopt AI coding assistants without compromising privacy or compliance.

IQuest Coder – Efficiency and Evolution

IQuest Coder’s release surprised many observers: a 40B‑parameter model beating much larger models by training on code evolution. Competitive programmers report that the Thinking variant explains algorithms step by step and suggests optimizations, while the Loop variant offers efficient inference for deployment. Its open‑source release democratizes access to cutting‑edge techniques.

Frequently Asked Questions (FAQs)

Q1. Are code‑generation APIs safe to use with proprietary code?

Yes, but choose models with strong privacy guarantees. Self‑hosting open‑source models or using Clarifai’s local runner ensures code never leaves your environment. For cloud‑hosted models, read the provider’s privacy policy and consider redacting sensitive data before sending prompts.
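
As a rough illustration of redacting sensitive data before a prompt leaves your machine, the Python sketch below masks string literals assigned to variables whose names look like credentials. The pattern and the `redact` helper are assumptions for illustration only; they are far from exhaustive, and a dedicated secret scanner would be a better choice in practice.

```python
import re

# Matches assignments such as  db_password = "hunter2"  or  API_KEY = 'abc'.
# Group 1: variable name, group 2: the "=" with spacing, group 3: quote char.
SECRET_ASSIGNMENT = re.compile(
    r"""(\w*(?:api_key|password|secret|token)\w*)(\s*=\s*)(["'])[^"']*\3""",
    re.IGNORECASE,
)


def redact(source: str) -> str:
    """Replace the quoted value of credential-like assignments with a
    placeholder, leaving variable names and other code untouched."""
    return SECRET_ASSIGNMENT.sub(r"\1\2\3<REDACTED>\3", source)


snippet = 'db_password = "hunter2"\nname = "alice"\nAPI_KEY = \'abc123\'\n'
print(redact(snippet))
```

Only the literal values are masked, so the model still sees the structure of the code it is asked to work on.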

Q2. How do I prevent AI from introducing bugs?

Treat AI suggestions as drafts. Plan tasks, provide context, run tests after every change and review generated code. Splitting work into small increments and using models with high benchmark scores reduces risk.

Q3. Which model is best for beginners?

Beginners may prefer tools with strong instruction following and safety, such as Claude Sonnet or Amazon Q. These models offer clearer explanations and guard against insecure patterns. However, always start with simple tasks and gradually increase complexity.

Q4. Can I combine multiple models?

Absolutely. Using Clarifai’s compute orchestration, you can run multiple models in parallel, for example GPT‑5 for design, StarCoder2 for implementation and Codestral for refactoring. Mixing models often yields better results than relying on one.
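
The division of labor in that example (design, then implementation, then refactoring) can be sketched as a staged pipeline in Python. This does not use Clarifai's API or any real model client: the three model functions are stand-in stubs, and `run_pipeline` is a hypothetical helper that simply feeds each stage's output to the next. The stages run sequentially here because each depends on the previous one; independent tasks could be dispatched in parallel instead.

```python
from typing import Callable

# A "model" here is any callable that maps a prompt to a response.
ModelFn = Callable[[str], str]


def run_pipeline(task: str, stages: list[tuple[str, ModelFn]]) -> dict[str, str]:
    """Pass the task through each stage in order and record every
    intermediate output under the stage's name."""
    outputs: dict[str, str] = {}
    current = task
    for name, model in stages:
        current = model(current)
        outputs[name] = current
    return outputs


# Stub models standing in for real API calls (assumptions, not real clients).
def design_model(prompt: str) -> str:
    return f"[design] {prompt}"


def implement_model(prompt: str) -> str:
    return f"[implement] {prompt}"


def refactor_model(prompt: str) -> str:
    return f"[refactor] {prompt}"


results = run_pipeline(
    "build a CLI",
    [("design", design_model),
     ("implement", implement_model),
     ("refactor", refactor_model)],
)
print(results["refactor"])
```

Keeping each stage behind a plain callable makes it easy to swap one model for another without changing the pipeline itself.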

Q5. What’s the future of code generation?

Research points toward diffusion models, recursive language models, code‑flow training and multi‑agent orchestration. The next generation of models will likely generate code more like humans: editing, reasoning and coordinating tasks across multiple agents.

Final Thoughts

Code‑generation APIs are transforming software development. The 2026 landscape offers a rich mix of proprietary giants, innovative open‑source models and multi‑agent frameworks. Evaluating models requires considering languages, context windows, agentic capabilities, benchmarks, costs and privacy. Clarifai’s StarCoder2 and compute orchestration provide a balanced, transparent solution with secure deployment, fairness monitoring and the ability to mix models for optimized results.

Emerging research suggests that future models will generate code more like humans, editing iteratively, managing their own context and reasoning about algorithms. At the same time, industry leaders emphasize that AI is a partner, not a replacement; success depends on clear planning, human oversight and ethical usage. By staying informed and experimenting with different models, developers and companies can harness AI to build robust, secure and innovative software while keeping trust and fairness at the core.