3D printing has come a long way since its invention in 1983 by Chuck Hull, who pioneered stereolithography, a technique that solidifies liquid resin into solid objects using ultraviolet lasers. Over the decades, 3D printers have evolved from experimental curiosities into tools capable of producing everything from custom prosthetics to complex food designs, architectural models, and even functioning human organs.

But as the technology matures, its environmental footprint has become increasingly difficult to set aside. The vast majority of consumer and industrial 3D printing still relies on petroleum-based plastic filament. And while “greener” alternatives made from biodegradable or recycled materials exist, they come with a serious trade-off: they’re often not as strong. These eco-friendly filaments tend to become brittle under stress, making them ill-suited for structural applications or load-bearing parts, exactly where strength matters most.

This trade-off between sustainability and mechanical performance prompted researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Hasso Plattner Institute to ask: Is it possible to build objects that are mostly eco-friendly, but still strong where it counts?

Their answer is SustainaPrint, a new software and hardware toolkit designed to help users strategically combine strong and weak filaments to get the best of both worlds. Instead of printing an entire object with high-performance plastic, the system analyzes a model through finite element analysis simulations, predicts where the object is most likely to experience stress, and then reinforces just those zones with stronger material. The rest of the part can be printed using greener, weaker filament, reducing plastic use while preserving structural integrity.

“Our hope is that SustainaPrint can be used in industrial and distributed manufacturing settings one day, where local material stocks may vary in quality and composition,” says MIT PhD student and CSAIL researcher Maxine Perroni-Scharf, who is a lead author on a paper presenting the project. “In these contexts, the testing toolkit could help ensure the reliability of available filaments, while the software’s reinforcement strategy could reduce overall material consumption without sacrificing function.”

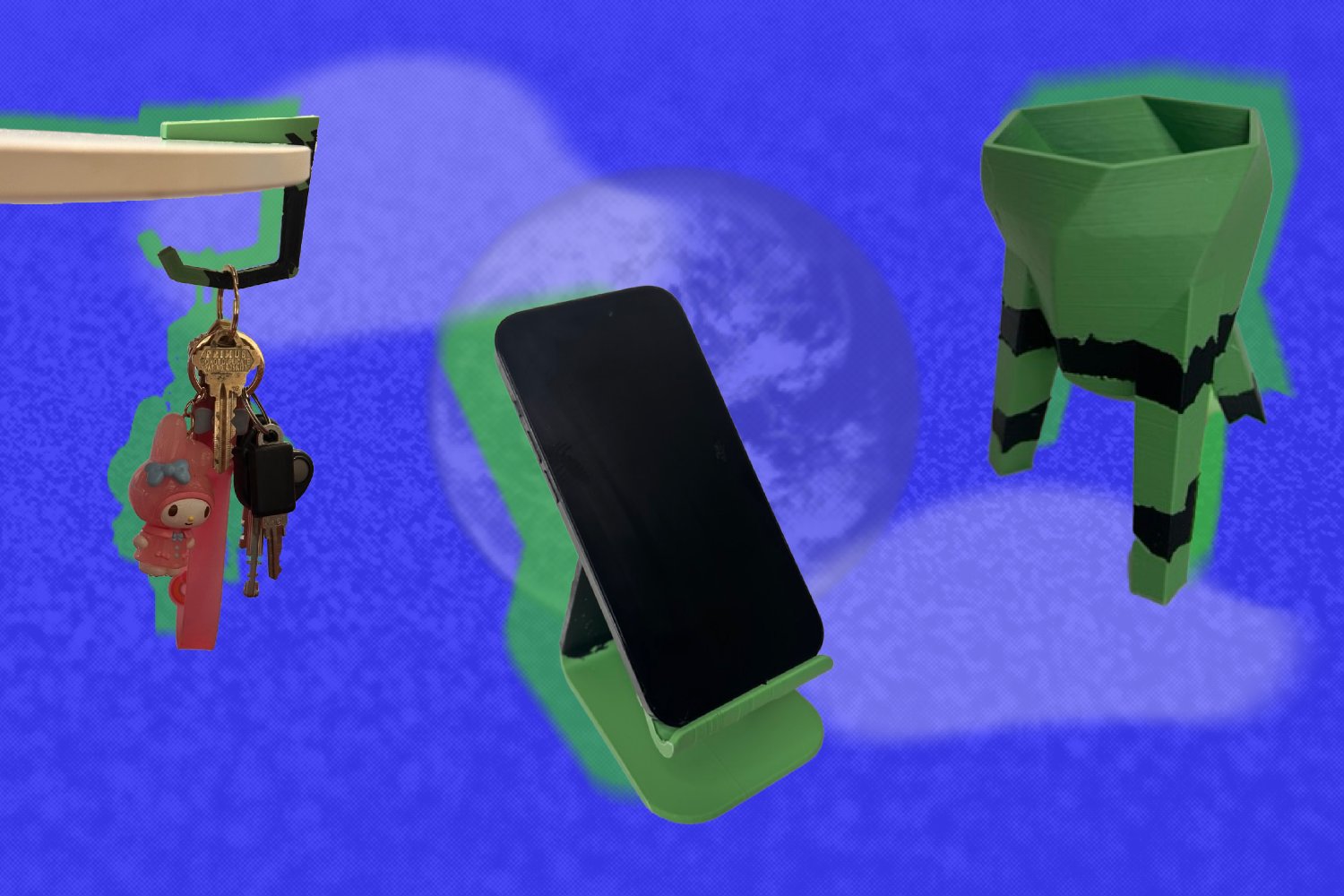

For their experiments, the team used Polymaker’s PolyTerra PLA as the eco-friendly filament, and standard or Tough PLA from Ultimaker for reinforcement. They used a 20 percent reinforcement threshold to show that even a small amount of strong plastic goes a long way. Using this ratio, SustainaPrint was able to recover up to 70 percent of the strength of an object printed entirely with high-performance plastic.

They printed dozens of objects, from simple mechanical shapes like rings and beams to more functional household items such as headphone stands, wall hooks, and plant pots. Each object was printed three ways: once using only eco-friendly filament, once using only strong PLA, and once with the hybrid SustainaPrint configuration. The printed parts were then mechanically tested by pulling, bending, or otherwise breaking them to measure how much force each configuration could withstand.

In many cases, the hybrid prints held up nearly as well as the full-strength versions. For example, in one test involving a dome-like shape, the hybrid version outperformed the version printed entirely in Tough PLA. The team believes this may be due to the reinforced version’s ability to distribute stress more evenly, avoiding the brittle failure sometimes caused by excessive stiffness.

“This means that in certain geometries and loading conditions, mixing materials strategically may very well outperform a single homogenous material,” says Perroni-Scharf. “It’s a reminder that real-world mechanical behavior is full of complexity, especially in 3D printing, where interlayer adhesion and tool path decisions can affect performance in unexpected ways.”

A lean, green, eco-friendly printing machine

SustainaPrint starts off by letting a user upload their 3D model into a custom interface. After the user selects fixed regions and areas where forces will be applied, the software uses an approach called “Finite Element Analysis” to simulate how the object will deform under stress. It then creates a map showing strain distribution inside the structure, highlighting areas under compression or tension, and applies heuristics to segment the object into two categories: those that need reinforcement, and those that don’t.
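As a rough illustration of the segmentation step, the sketch below ranks simulated elements by stress magnitude and marks the top fraction for reinforcement. The article does not spell out SustainaPrint’s actual heuristics, so the ranking rule, the function name `segment_by_stress`, the equal-volume assumption, and the sample values are all hypothetical; the 0.2 default simply mirrors the 20 percent reinforcement threshold used in the team’s experiments.

```python
def segment_by_stress(stresses, reinforce_fraction=0.2):
    """Return one boolean per element: True = print in strong filament.

    Assumes (hypothetically) that every element has the same volume, so
    reinforcing the top `reinforce_fraction` of elements by stress
    magnitude reinforces roughly that fraction of the part.
    """
    if not 0.0 <= reinforce_fraction <= 1.0:
        raise ValueError("reinforce_fraction must be between 0 and 1")
    n = len(stresses)
    k = round(n * reinforce_fraction)  # number of elements to reinforce
    # Rank by magnitude so that compression (negative) and tension
    # (positive) are treated alike.
    ranked = sorted(range(n), key=lambda i: abs(stresses[i]), reverse=True)
    strong = set(ranked[:k])
    return [i in strong for i in range(n)]


# Made-up per-element stress values standing in for a simulation result.
element_stress = [1.2, -8.5, 0.4, 3.3, 9.1, 0.9, -2.0, 0.1, 5.6, 0.7]
labels = segment_by_stress(element_stress)
print(labels)
```

A real slicer would also need to keep the reinforced regions contiguous and printable, which this sketch ignores.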

Recognizing the need for accessible and low-cost testing, the team also developed a DIY testing toolkit to help users assess strength before printing. The kit has a 3D-printable device with modules for measuring both tensile and flexural strength. Users can pair the device with common items like pull-up bars or digital scales to get rough, but reliable performance metrics. The team benchmarked their results against manufacturer data and found that their measurements consistently fell within one standard deviation, even for filaments that had undergone multiple recycling cycles.
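For readers who want to turn a scale reading into a strength figure, the following sketch applies the standard textbook formulas for tensile strength (force over cross-sectional area) and three-point flexural strength of a rectangular bar. It is not the toolkit’s own code: the function names, the three-point bending setup, and the specimen dimensions are assumptions made for illustration.

```python
G = 9.81  # standard gravity in m/s^2


def force_from_scale(reading_kg):
    """Convert a digital-scale reading in kilograms to force in newtons."""
    return reading_kg * G


def tensile_strength_mpa(force_n, width_mm, thickness_mm):
    """Breaking force divided by cross-section area (N/mm^2 equals MPa)."""
    return force_n / (width_mm * thickness_mm)


def flexural_strength_mpa(force_n, span_mm, width_mm, depth_mm):
    """Three-point bending of a rectangular bar: 3FL / (2 b d^2)."""
    return 3 * force_n * span_mm / (2 * width_mm * depth_mm ** 2)


# Hypothetical readings: the scale showed 20 kg when the sample broke.
break_force = force_from_scale(20.0)
tensile = tensile_strength_mpa(break_force, width_mm=5.0, thickness_mm=2.0)
flexural = flexural_strength_mpa(100.0, span_mm=60.0, width_mm=10.0, depth_mm=4.0)
print(f"tensile: {tensile:.2f} MPa, flexural: {flexural:.2f} MPa")
```

Because all lengths are in millimetres and forces in newtons, both results come out directly in megapascals, the unit filament datasheets usually report.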

Although the current system is designed for dual-extrusion printers, the researchers believe that with some manual filament swapping and calibration, it could be adapted for single-extruder setups, too. In its current form, the system simplifies the modeling process by allowing just one force and one fixed boundary per simulation. While this covers a wide range of common use cases, the team sees future work expanding the software to support more complex and dynamic loading conditions. The team also sees potential in using AI to infer the object’s intended use based on its geometry, which could allow for fully automated stress modeling without manual input of forces or boundaries.

3D for free

The researchers plan to release SustainaPrint open-source, making both the software and testing toolkit available for public use and modification. Another initiative they aspire to bring to life in the future: education. “In a classroom, SustainaPrint isn’t just a tool, it’s a way to teach students about materials science, structural engineering, and sustainable design, all in one project,” says Perroni-Scharf. “It turns these abstract concepts into something tangible.”

As 3D printing becomes more embedded in how we manufacture and prototype everything from consumer goods to emergency equipment, sustainability concerns will only grow. With tools like SustainaPrint, those concerns no longer need to come at the expense of performance. Instead, they can become part of the design process: built into the very geometry of the things we make.

Co-author Patrick Baudisch, who is a professor at the Hasso Plattner Institute, adds that “the project addresses a key question: What is the point of collecting material for the purpose of recycling, when there is no plan to actually ever use that material? Maxine presents the missing link between the theoretical/abstract idea of 3D printing material recycling and what it actually takes to make this idea relevant.”

Perroni-Scharf and Baudisch wrote the paper with CSAIL research assistant Jennifer Xiao; MIT Department of Electrical Engineering and Computer Science master’s student Cole Paulin ’24; master’s student Ray Wang SM ’25 and PhD student Ticha Sethapakdi SM ’19 (both CSAIL members); Hasso Plattner Institute PhD student Muhammad Abdullah; and Associate Professor Stefanie Mueller, leader of the Human-Computer Interaction Engineering Group at CSAIL.

The researchers’ work was supported by a Designing for Sustainability Grant from the Designing for Sustainability MIT-HPI Research Program. Their work will be presented at the ACM Symposium on User Interface Software and Technology in September.
