Question:

MoE models contain far more parameters than Transformers, yet they can run faster at inference. How is that possible?

Difference between Transformers & Mixture of Experts (MoE)

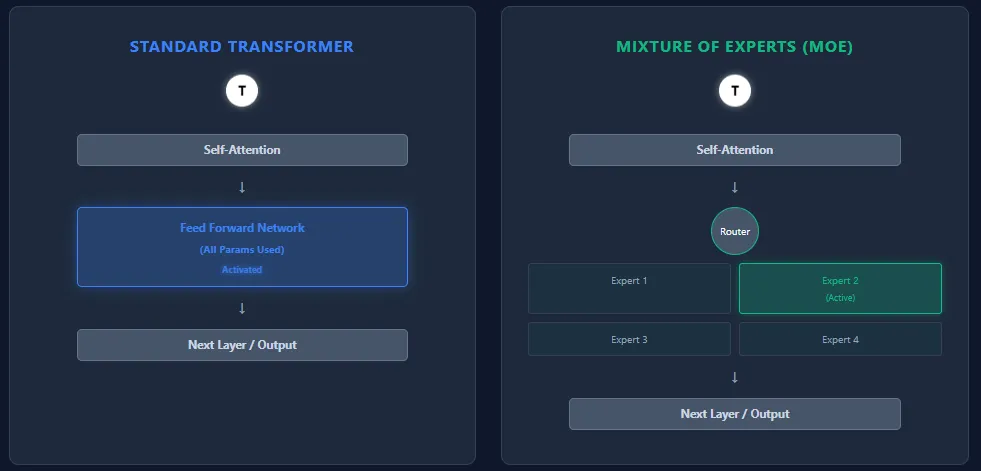

Transformers and Mixture of Experts (MoE) models share the same backbone architecture (self-attention layers followed by feed-forward layers), but they differ fundamentally in how they use parameters and compute.

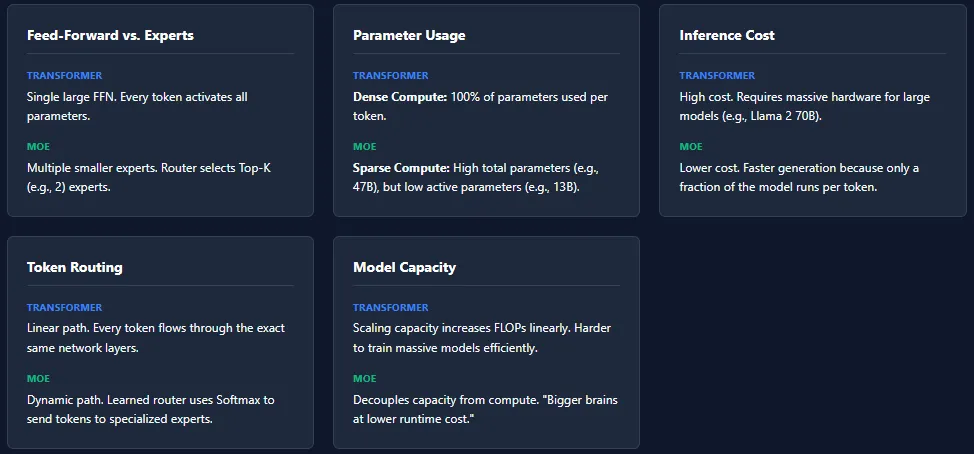

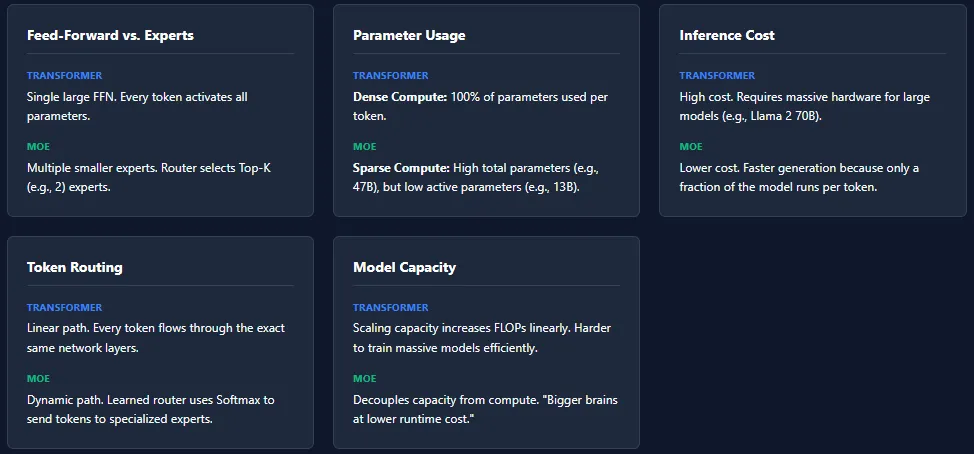

Feed-Forward Network vs Experts

- Transformer: Each block contains a single large feed-forward network (FFN). Every token passes through this FFN, activating all of its parameters during inference.

- MoE: Replaces the FFN with multiple smaller feed-forward networks, called experts. A routing network selects only a few experts (Top-K) per token, so only a small fraction of the total parameters is active.
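
The MoE bullet above can be made concrete with a minimal NumPy sketch of one sparse feed-forward layer. It is an illustration under assumptions, not a reference implementation: the names `moe_layer`, `router_w`, `experts_w1`, and `experts_w2` are hypothetical, each expert is a plain two-matrix ReLU network, and the gate weights are a softmax over the selected experts only, which is one common variant.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def moe_layer(tokens, router_w, experts_w1, experts_w2, top_k=2):
    """Sparse MoE feed-forward layer (illustrative sketch).

    tokens:     (n_tokens, d_model)
    router_w:   (d_model, n_experts)
    experts_w1: (n_experts, d_model, d_hidden)
    experts_w2: (n_experts, d_hidden, d_model)
    """
    logits = tokens @ router_w                          # (n_tokens, n_experts)
    top_idx = np.argsort(-logits, axis=-1)[:, :top_k]   # chosen experts per token
    top_logits = np.take_along_axis(logits, top_idx, axis=-1)
    gates = softmax(top_logits, axis=-1)                # weights over chosen experts only

    out = np.zeros_like(tokens)
    for e in range(router_w.shape[1]):
        # Rows of top_idx that picked expert e, and the slot it occupies.
        token_ids, slot = np.nonzero(top_idx == e)
        if token_ids.size == 0:
            continue  # no token selected this expert, so its weights stay idle
        h = np.maximum(tokens[token_ids] @ experts_w1[e], 0.0)  # ReLU
        out[token_ids] += gates[token_ids, slot][:, None] * (h @ experts_w2[e])
    return out, top_idx

# Toy sizes, chosen only for illustration.
rng = np.random.default_rng(0)
n_tokens, d_model, d_hidden, n_experts = 4, 8, 16, 4
tokens = rng.normal(size=(n_tokens, d_model))
router_w = rng.normal(size=(d_model, n_experts))
experts_w1 = rng.normal(size=(n_experts, d_model, d_hidden))
experts_w2 = rng.normal(size=(n_experts, d_hidden, d_model))

out, chosen = moe_layer(tokens, router_w, experts_w1, experts_w2, top_k=2)
print(out.shape)   # same shape as the input tokens
print(chosen)      # the two expert indices selected for each token
```

Each token touches only `top_k` of the `n_experts` weight sets, which is where the compute saving comes from.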

Parameter Usage

- Transformer: All parameters across all layers are used for every token → dense compute.

- MoE: Has more total parameters, but activates only a small portion per token → sparse compute. Example: Mixtral 8×7B has 46.7B total parameters, but uses only ~13B per token.
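
The Mixtral figures can be roughly reproduced with back-of-the-envelope arithmetic. The hyperparameters below are assumptions taken from the publicly reported Mixtral 8×7B configuration, not from the text above; the count ignores the small normalization weights and treats embeddings the same way in both totals.

```python
# Assumed Mixtral 8x7B hyperparameters (not stated in the article itself).
d_model = 4096
n_layers = 32
d_ffn = 14336
n_experts = 8
top_k = 2
n_heads = 32
n_kv_heads = 8
vocab = 32000

head_dim = d_model // n_heads
# Attention per layer: the query and output projections are d_model x d_model;
# the key and value projections are smaller because of grouped-query attention.
attn = 2 * d_model * d_model + 2 * d_model * (n_kv_heads * head_dim)
# One expert: three weight matrices of a gated feed-forward network.
expert = 3 * d_model * d_ffn
# Router: one score per expert for each token.
router = d_model * n_experts
# Input embedding plus output projection.
embeddings = 2 * vocab * d_model

total = n_layers * (attn + n_experts * expert + router) + embeddings
active = n_layers * (attn + top_k * expert + router) + embeddings

print(f"total:  {total / 1e9:.1f}B")   # about 46.7B
print(f"active: {active / 1e9:.1f}B")  # about 12.9B
```

Almost all of the gap between the two numbers comes from the six experts per layer that a given token never visits.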

Inference Cost

- Transformer: High inference cost due to full parameter activation. Scaling to models like GPT-4 or Llama 2 70B requires powerful hardware.

- MoE: Lower inference cost because only K experts per layer are active. This makes MoE models faster and cheaper to run, especially at large scales. The saving is in compute per token; all experts still have to be held in memory.

Token Routing

- Transformer: No routing. Every token follows the exact same path through all layers.

- MoE: A learned router assigns tokens to experts based on softmax scores. Different tokens select different experts, and different layers may activate different experts, which increases specialization and model capacity.

Model Capacity

- Transformer: To scale capacity, the only option is adding more layers or widening the FFN; both increase FLOPs heavily.

- MoE: Can scale total parameters massively without increasing per-token compute. This enables “bigger brains at lower runtime cost.”
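
A small calculation illustrates the capacity claim: with Top-K fixed, adding experts grows the parameter count while the feed-forward FLOPs per token stay flat. The layer sizes are hypothetical, each expert is assumed to be a two-matrix FFN, and the router's own (small) cost is ignored.

```python
def ffn_params(d_model, d_hidden):
    # Up projection plus down projection.
    return 2 * d_model * d_hidden

def ffn_flops_per_token(d_model, d_hidden):
    # Two matrix multiplies, counting 2 FLOPs per multiply-add.
    return 2 * ffn_params(d_model, d_hidden)

d_model, d_hidden, top_k = 1024, 4096, 2  # hypothetical sizes

rows = []
for n_experts in (2, 8, 32, 128):
    total_params = n_experts * ffn_params(d_model, d_hidden)
    # Only top_k experts run per token, so this does not depend on n_experts.
    flops = top_k * ffn_flops_per_token(d_model, d_hidden)
    rows.append((n_experts, total_params, flops))
    print(f"{n_experts:>4} experts: {total_params / 1e6:>6.0f}M params, "
          f"{flops / 1e6:.0f} MFLOPs per token")
```

A dense FFN with the same parameter budget would have to run all of those weights for every token, so its per-token FLOPs would grow in step with its size.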

While MoE architectures offer massive capacity with lower inference cost, they introduce several training challenges. The most common issue is expert collapse, where the router repeatedly selects the same experts, leaving others under-trained.

Load imbalance is another challenge: some experts may receive far more tokens than others, leading to uneven learning. To address this, MoE models rely on techniques like noise injection in routing, Top-K masking, and expert capacity limits.
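
The three techniques named above can be sketched in a toy router. The function `route_with_capacity` and its parameters are hypothetical, and the overflow policy (a token's slot is simply dropped when its expert is full) is an assumption for illustration; real systems handle overflow in different ways.

```python
import numpy as np

def route_with_capacity(logits, top_k=1, capacity=None, noise_std=0.0, rng=None):
    """Assign tokens to experts with optional noise and a per-expert cap.

    logits: (n_tokens, n_experts) router scores.
    Returns a list of (token, expert) assignments and the per-expert load.
    """
    n_tokens, n_experts = logits.shape
    if noise_std > 0.0:
        if rng is None:
            rng = np.random.default_rng()
        # Noise injection: perturb scores so near-ties are broken differently.
        logits = logits + rng.normal(scale=noise_std, size=logits.shape)
    # Top-K masking: keep only the k highest-scoring experts per token.
    top_idx = np.argsort(-logits, axis=-1)[:, :top_k]

    load = np.zeros(n_experts, dtype=int)
    assignments = []
    for t in range(n_tokens):
        for e in top_idx[t]:
            if capacity is not None and load[e] >= capacity:
                continue  # expert is full: this slot is dropped in this sketch
            load[e] += 1
            assignments.append((t, int(e)))
    return assignments, load

# A collapsed router: expert 0 always scores highest.
rng = np.random.default_rng(0)
logits = rng.normal(size=(16, 4))
logits[:, 0] = logits.max(axis=1) + 1.0

_, load_plain = route_with_capacity(logits, top_k=1)
_, load_capped = route_with_capacity(logits, top_k=1, capacity=4)
_, load_noisy = route_with_capacity(logits, top_k=1, noise_std=3.0, rng=rng)

print(load_plain)   # every token lands on expert 0
print(load_capped)  # expert 0 stops accepting tokens once it holds 4
print(load_noisy)   # noise can send some tokens to other experts
```

The capped run shows the trade-off: the limit stops one expert from absorbing everything, but tokens that overflow lose that expert's contribution unless the router itself learns to spread the load.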

These mechanisms help keep all experts active and balanced, but they also make MoE systems more complex to train than standard Transformers.