Black Forest Labs has released FLUX.2, its second generation image generation and editing system. FLUX.2 targets real world creative workflows such as marketing assets, product photography, design layouts, and complex infographics, with editing support up to 4 megapixels and strong control over layout, logos, and typography.

FLUX.2 product family and FLUX.2 [dev]

The FLUX.2 family spans hosted APIs and open weights:

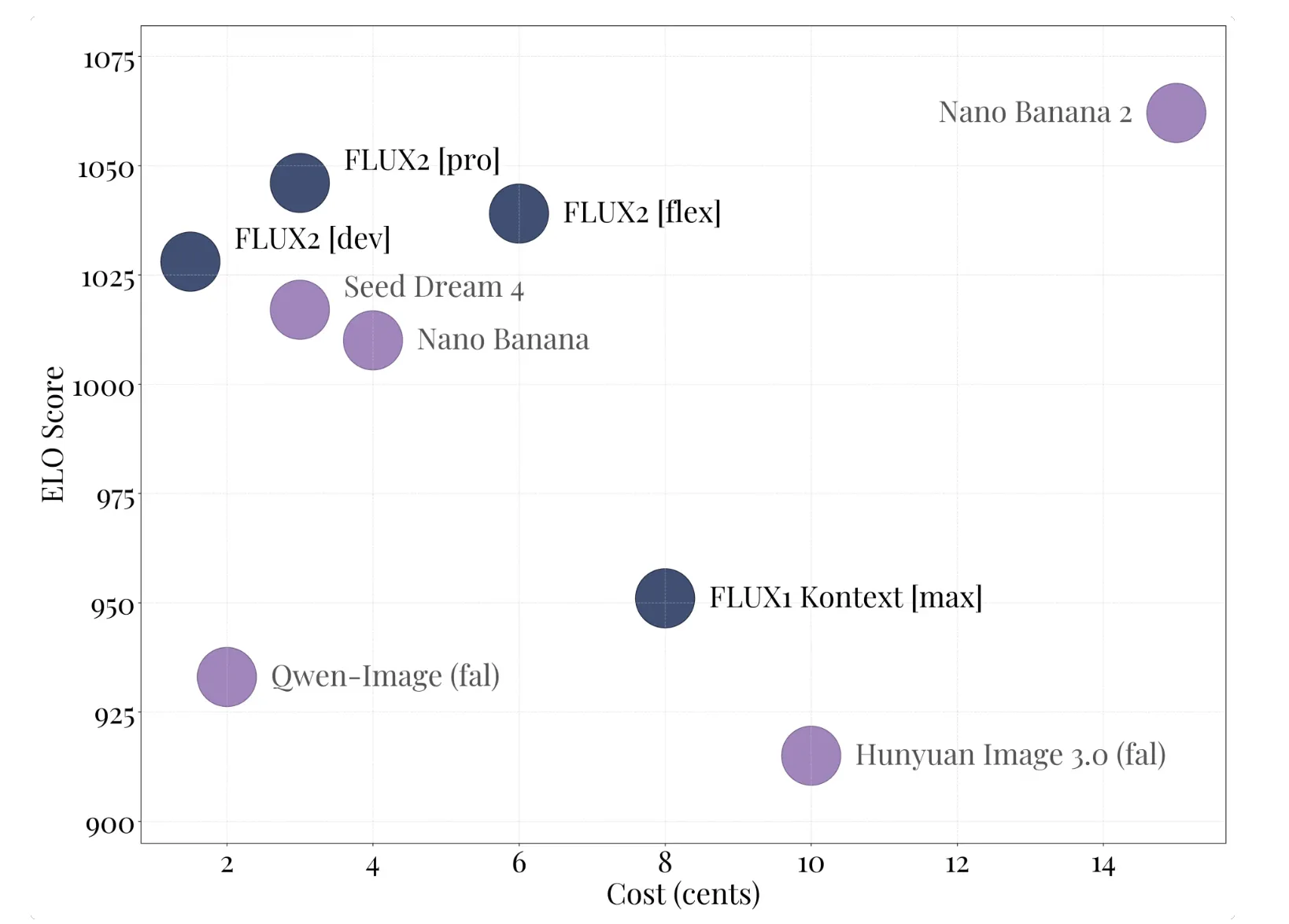

- FLUX.2 [pro] is the managed API tier. It targets state of the art quality relative to closed models, with high prompt adherence and low inference cost, and is available in the BFL Playground, the BFL API, and partner platforms.

- FLUX.2 [flex] exposes parameters such as the number of steps and the guidance scale, so developers can trade off latency, text rendering accuracy, and visual detail.

- FLUX.2 [dev] is the open weight checkpoint, derived from the base FLUX.2 model. It is described as the most powerful open weight image generation and editing model, combining text to image and multi image editing in a single checkpoint, with 32 billion parameters.

- FLUX.2 [klein] is an upcoming open source Apache 2.0 variant, size distilled from the base model for smaller setups, with many of the same capabilities.

All variants support image editing from text and multiple references in a single model, which removes the need to maintain separate checkpoints for generation and editing.

Architecture, latent flow, and the FLUX.2 VAE

FLUX.2 uses a latent flow matching architecture. The core design couples a Mistral-3 24B vision language model with a rectified flow transformer that operates on latent image representations. The vision language model provides semantic grounding and world knowledge, while the transformer backbone learns spatial structure, materials, and composition.

The model is trained to map noise latents to image latents under text conditioning, so the same architecture supports both text driven synthesis and editing. For editing, latents are initialized from existing images, then updated under the same flow process while preserving structure.
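
To make the noise to image mapping concrete, here is a toy sketch of rectified flow sampling in NumPy. It is not the FLUX.2 implementation: the learned transformer is replaced by a hypothetical closed-form velocity field, `oracle_velocity`, that points straight at one known target latent, and the time convention (t = 0 is noise, t = 1 is data) is an assumption chosen for readability. The `start_t` argument is likewise illustrative; it shows how the same loop can begin partway along the path from a latent derived from an existing image.

```python
import numpy as np


def oracle_velocity(x, t, target):
    """Hypothetical stand-in for the learned velocity network.

    On a straight path x_t = (1 - t) * noise + t * target, the velocity
    that carries x_t to the target at t = 1 is (target - x_t) / (1 - t).
    """
    return (target - x) / (1.0 - t)


def euler_sample(x_start, target, num_steps=8, start_t=0.0):
    """Integrate dx/dt = v(x, t) from start_t to 1 with fixed Euler steps."""
    x = np.asarray(x_start, dtype=np.float64).copy()
    dt = (1.0 - start_t) / num_steps
    for k in range(num_steps):
        t = start_t + k * dt  # always strictly below 1, so no division by zero
        x = x + dt * oracle_velocity(x, t, target)
    return x


rng = np.random.default_rng(0)
target_latent = rng.normal(size=(4, 4))  # pretend image latent
noise_latent = rng.normal(size=(4, 4))   # pure noise start (generation)
generated = euler_sample(noise_latent, target_latent)
```

Because the toy velocity field is constant along each straight path, even a handful of Euler steps lands on the target. A real model only approximates such a field, which is why the number of steps trades latency against quality.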

A new FLUX.2 VAE defines the latent space. It is designed to balance learnability, reconstruction quality, and compression, and is released separately on Hugging Face under an Apache 2.0 license. This autoencoder is the backbone for all FLUX.2 flow models and can also be reused in other generative systems.

Capabilities for production workflows

The FLUX.2 docs and Diffusers integration highlight several key capabilities:

- Multi reference support: FLUX.2 can combine up to 10 reference images to maintain character identity, product appearance, and style across outputs.

- Photoreal detail at 4MP: the model can edit and generate images up to 4 megapixels, with improved textures, skin, fabrics, hands, and lighting suitable for product shots and photo like use cases.

- Strong text and layout rendering: it can render complex typography, infographics, memes, and user interface layouts with small legible text, which is a common weakness in many older models.

- World knowledge and spatial logic: the model is trained for more grounded lighting, perspective, and scene composition, which reduces artifacts and the synthetic look.
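
The two numeric limits above (10 references, 4 megapixels) can be checked on the client side before a request is made. The helper below is a hypothetical sketch, not part of any FLUX.2 SDK. It assumes that "4 megapixels" means 4 × 2^20 pixels and that image sides should be multiples of 16. Both are assumptions to verify against the official documentation.

```python
import math

MAX_REFERENCES = 10            # "up to 10 reference images" per the text
MAX_PIXELS = 4 * 1024 * 1024   # assumption: 4 MP read as 4 * 2**20 pixels


def fit_to_pixel_budget(width, height, max_pixels=MAX_PIXELS, multiple=16):
    """Shrink (width, height) to fit max_pixels, keeping the aspect ratio.

    Sides are rounded down to a multiple of `multiple`; 16 is an assumed
    alignment, not a documented FLUX.2 requirement.
    """
    scale = min(1.0, math.sqrt(max_pixels / (width * height)))
    new_w = max(multiple, int(width * scale) // multiple * multiple)
    new_h = max(multiple, int(height * scale) // multiple * multiple)
    return new_w, new_h


def check_references(reference_paths, max_references=MAX_REFERENCES):
    """Raise if more references are supplied than the stated limit."""
    if len(reference_paths) > max_references:
        raise ValueError(
            f"{len(reference_paths)} references given, limit is {max_references}"
        )
    return list(reference_paths)
```

For example, `fit_to_pixel_budget(4096, 4096)` halves each side, while an image already inside the budget is returned unchanged apart from the rounding.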

Key Takeaways

- FLUX.2 is a 32B latent flow matching transformer that unifies text to image, image editing, and multi reference composition in a single checkpoint.

- FLUX.2 [dev] is the open weight variant, paired with the Apache 2.0 FLUX.2 VAE, while the core model weights use the FLUX.2-dev Non Commercial License with mandatory safety filtering.

- The system supports up to 4 megapixel generation and editing, robust text and layout rendering, and up to 10 visual references for consistent characters, products, and styles.

- Full precision inference requires more than 80GB VRAM, but 4 bit and FP8 quantized pipelines with offloading make FLUX.2 [dev] usable on 18GB to 24GB GPUs and even 8GB cards with sufficient system RAM.
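
The VRAM figures in the last point follow roughly from parameter counts. The back of the envelope sketch below counts weight memory only, for the 32B transformer and the 24B vision language model. It assumes that "full precision" means 16 bits per parameter and ignores activations, the VAE, and quantization overhead, so the results are rough lower bounds.

```python
def weight_memory_gb(params_billion, bits_per_param):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


# Parameter counts in billions, taken from the article.
COMPONENTS = {"transformer": 32, "vision language model": 24}

for label, bits in [("bf16", 16), ("fp8", 8), ("4-bit", 4)]:
    per_part = {
        name: weight_memory_gb(params, bits)
        for name, params in COMPONENTS.items()
    }
    total = sum(per_part.values())
    print(f"{label:>5}: {per_part} total={total:.0f} GB")
```

Under these assumptions the two large components total 112 GB at 16 bits, 56 GB at 8 bits, and 28 GB at 4 bits. That is consistent with the claim that 18GB to 24GB cards need both quantization and offloading, since the 4 bit transformer alone takes about 16 GB.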

Editorial Notes

FLUX.2 is an important step for open weight visual generation, as it combines a 32B rectified flow transformer, a Mistral 3 24B vision language model, and the FLUX.2 VAE into a single high fidelity pipeline for text to image and editing. The clear VRAM profiles, quantized variants, and strong integrations with Diffusers, ComfyUI, and Cloudflare Workers make it practical for real workloads, not only benchmarks. This release pushes open image models closer to production grade creative infrastructure.
