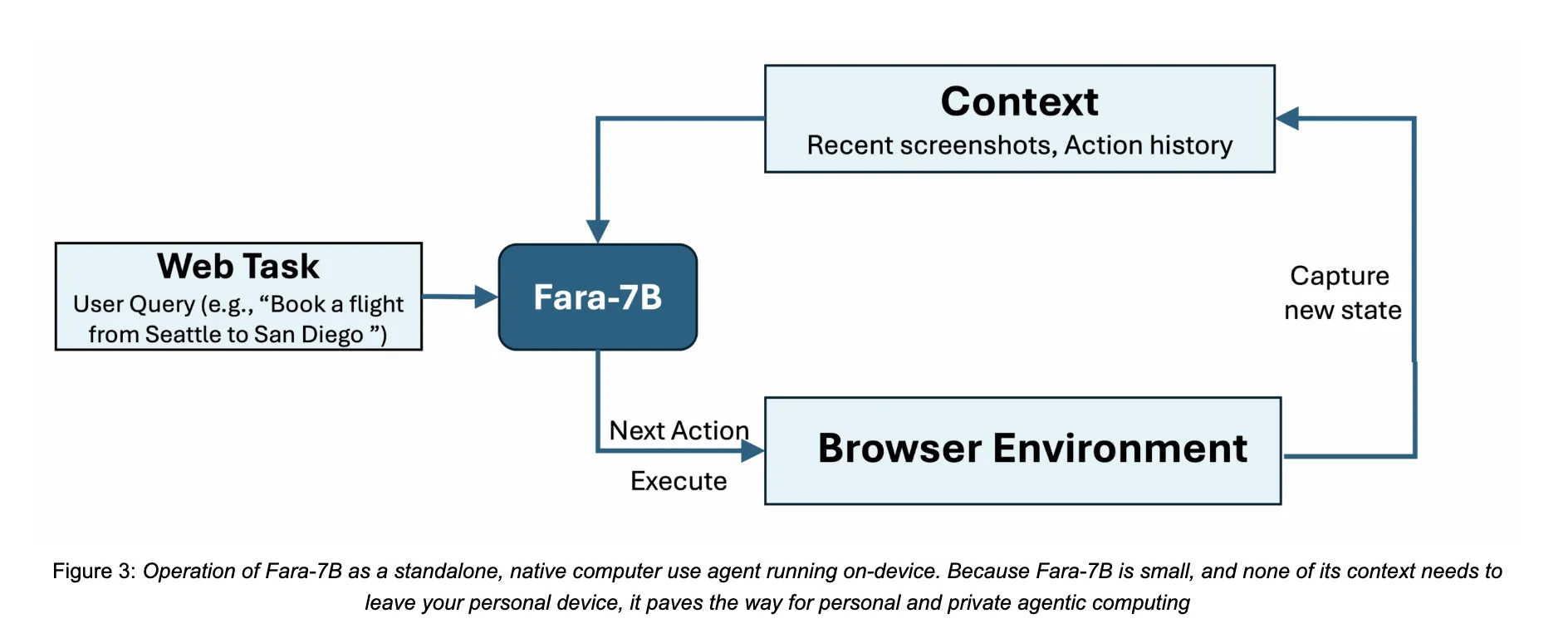

How can we safely let an AI agent handle real web tasks like booking, searching, and form filling directly on our own devices, without sending everything to the cloud? Microsoft Research has released Fara-7B, a 7 billion parameter agentic small language model designed specifically for computer use. It is an open-weight Computer Use Agent that runs from screenshots, predicts mouse and keyboard actions, and is small enough to run on a single user device, which reduces latency and keeps browsing data local.

From Chatbots to Computer Use Agents

Conventional chat-oriented LLMs return text. Computer Use Agents such as Fara-7B instead control the browser or desktop user interface to complete tasks like filling forms, booking travel, or comparing prices. They perceive the screen, reason about the page layout, and then emit low-level actions such as click, scroll, type, web_search, or visit_url.

Many existing systems rely on large multimodal models wrapped in complex scaffolding that parses accessibility trees and orchestrates multiple tools. This increases latency and often requires server-side deployment. Fara-7B compresses the behavior of such multi-agent systems into a single multimodal decoder-only model built on Qwen2.5-VL-7B. It consumes browser screenshots and text context, then directly outputs thought text followed by a tool call with grounded arguments such as coordinates, text, or URLs.
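To make the "thought, then tool call" output shape concrete, here is a minimal Python sketch that splits one raw model response into its two parts. The `<tool_call>` wrapper, the `computer_use` name, and the `action`/`coordinate` argument keys are assumptions chosen for illustration, not Fara-7B's documented output format; check the model card before parsing real outputs.

```python
import json
import re
from dataclasses import dataclass
from typing import Any

# Hypothetical format: free-form thought text, then a JSON tool call wrapped
# in <tool_call>...</tool_call>. The real Fara-7B format may differ.
TOOL_CALL_RE = re.compile(r"<tool_call>\s*(\{.*?\})\s*</tool_call>", re.DOTALL)


@dataclass
class AgentStep:
    thought: str
    action: str
    arguments: dict[str, Any]


def parse_agent_output(text: str) -> AgentStep:
    """Split raw model output into the thought and the grounded tool call."""
    match = TOOL_CALL_RE.search(text)
    if match is None:
        raise ValueError("no tool call found in model output")
    call = json.loads(match.group(1))
    # Everything before the tool call is treated as the thought text.
    thought = text[: match.start()].strip()
    args = dict(call.get("arguments", {}))
    action = args.pop("action")  # remaining keys are the grounded arguments
    return AgentStep(thought=thought, action=action, arguments=args)


raw = (
    "The search box is at the top of the page; I will click it.\n"
    '<tool_call>{"name": "computer_use", '
    '"arguments": {"action": "left_click", "coordinate": [640, 88]}}</tool_call>'
)
step = parse_agent_output(raw)
print(step.action, step.arguments)  # left_click {'coordinate': [640, 88]}
```

Treating everything before the tool call as the thought keeps the parser tolerant of free-form reasoning text of any length.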

FaraGen, Synthetic Trajectories for Web Interaction

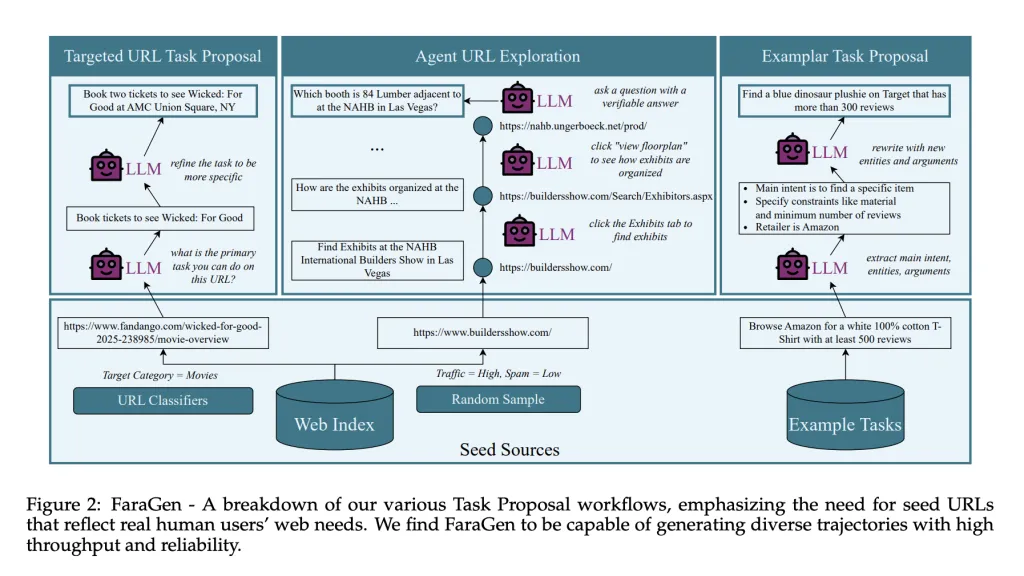

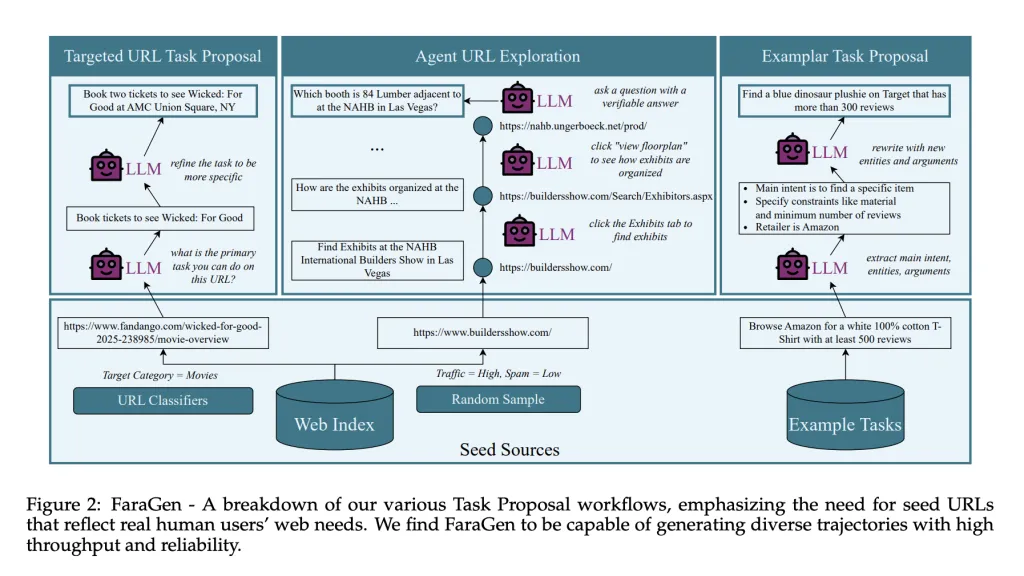

The key bottleneck for Computer Use Agents is data. High-quality logs of human web interaction with multi-step actions are rare and expensive to collect. The Fara project introduces FaraGen, a synthetic data engine that generates and filters web trajectories on live websites.

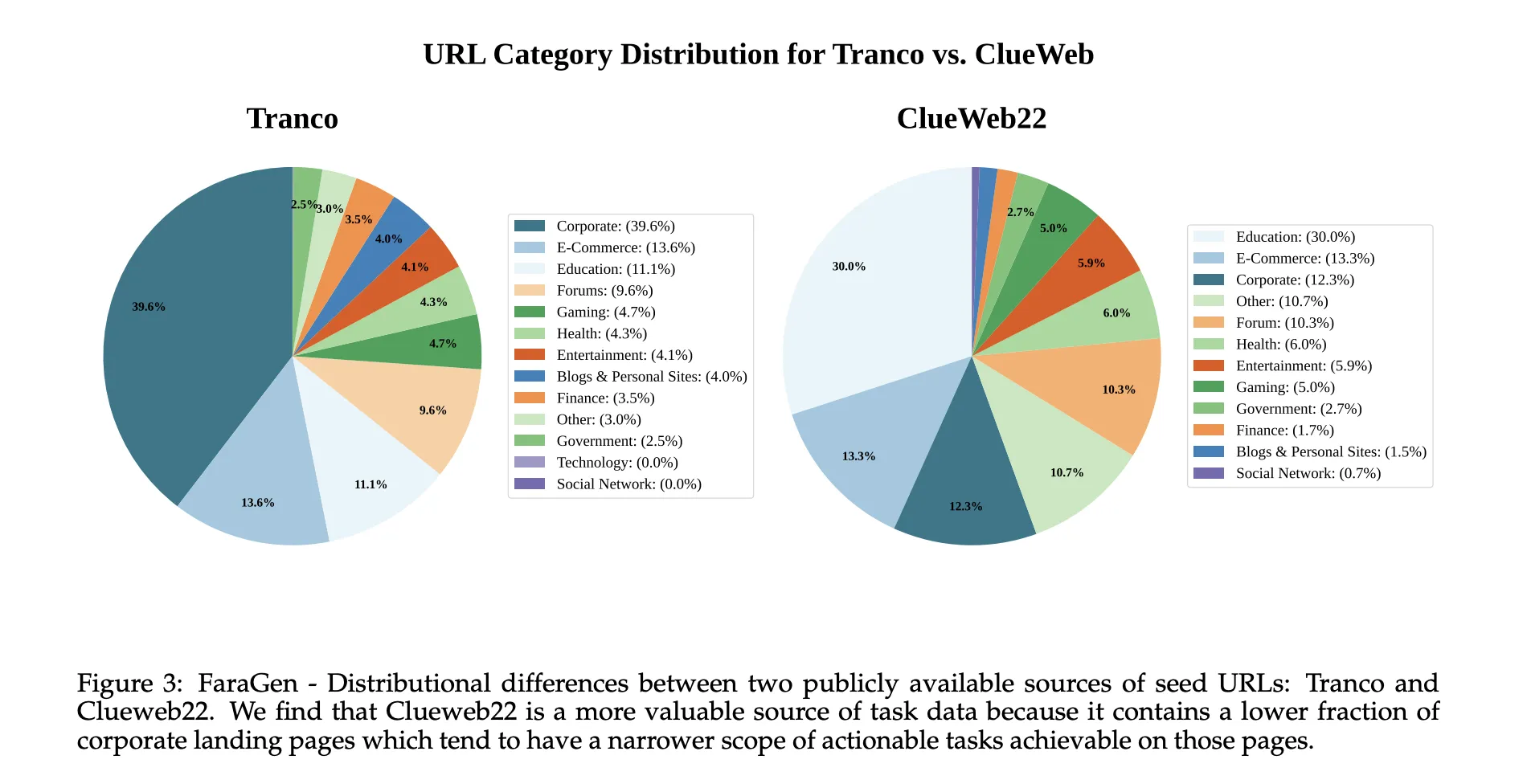

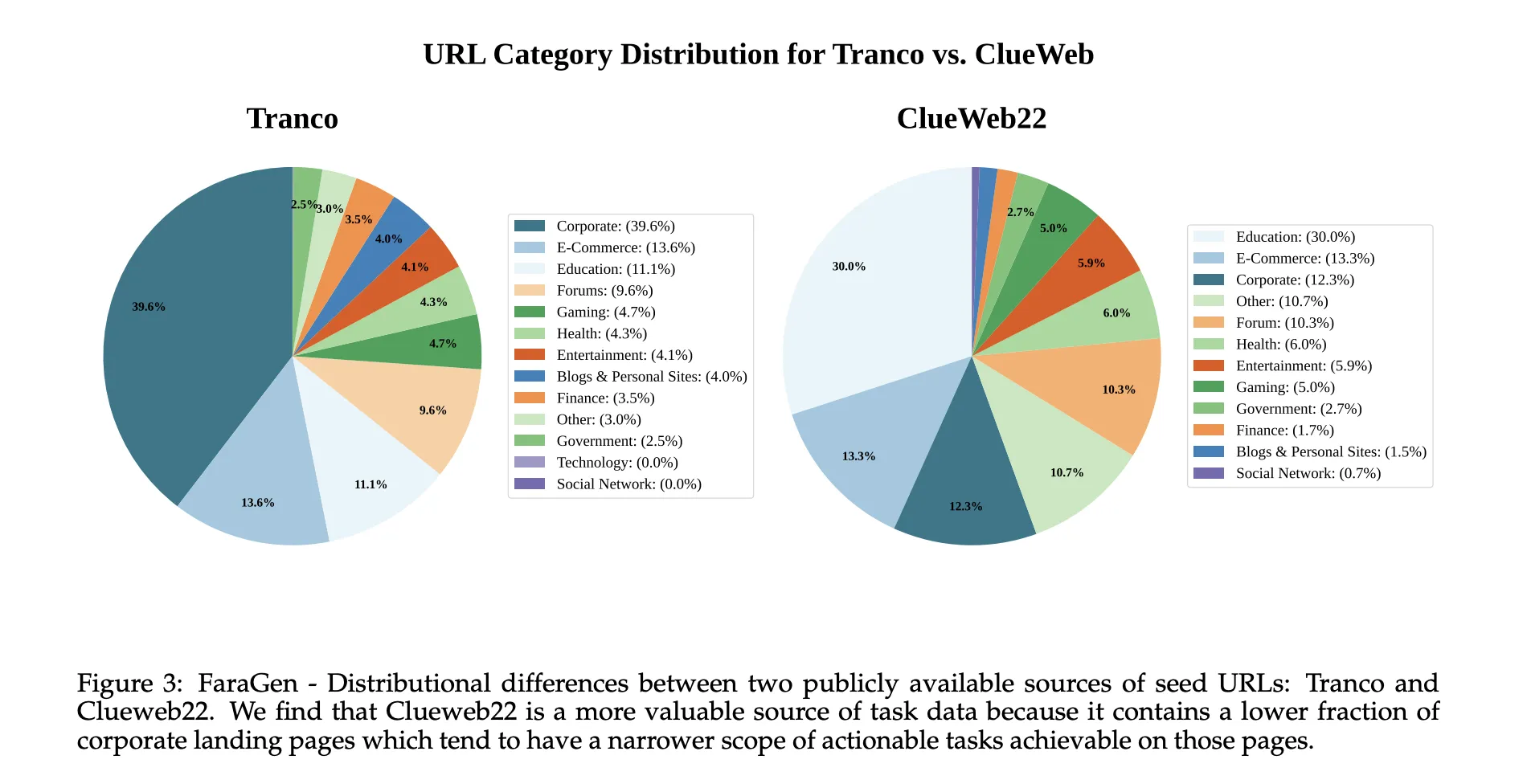

FaraGen uses a three-stage pipeline. Task Proposal starts from seed URLs drawn from public corpora such as ClueWeb22 and Tranco, which are categorized into domains like e-commerce, travel, entertainment, or forums. Large language models convert each URL into realistic tasks that users might attempt on that page, for example booking specific movie tickets or creating a shopping list with constraints on reviews and materials. Tasks must be achievable without a login or paywall, fully specified, useful, and automatically verifiable.
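The four proposal criteria act as a conjunctive filter: a task survives only if it passes every check. The sketch below assumes each criterion has already been judged and stored as a boolean; in FaraGen those judgments would come from LLMs, whereas here they are set by hand, and the `ProposedTask` fields and example URLs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProposedTask:
    """A candidate task proposed for a seed URL (hypothetical fields)."""
    seed_url: str
    category: str          # e.g. "e-commerce", "travel", "entertainment", "forums"
    description: str
    needs_login: bool
    behind_paywall: bool
    fully_specified: bool
    useful: bool
    auto_verifiable: bool


def accept_task(task: ProposedTask) -> bool:
    """Keep a task only if it satisfies every stated proposal criterion."""
    achievable = not (task.needs_login or task.behind_paywall)
    return (achievable and task.fully_specified
            and task.useful and task.auto_verifiable)


candidates = [
    ProposedTask("https://shop.example", "e-commerce",
                 "Build a shopping list of three cotton T-shirts rated 4 stars or higher",
                 False, False, True, True, True),
    ProposedTask("https://account.example", "e-commerce",
                 "Check my saved order history",
                 True, False, True, True, True),
]
kept = [t for t in candidates if accept_task(t)]
print([t.seed_url for t in kept])  # ['https://shop.example']
```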

Task Solving runs a multi-agent system based on Magentic-One and Magentic-UI. An Orchestrator agent plans the high-level strategy and keeps a ledger of task state. A WebSurfer agent receives accessibility trees and Set-of-Marks screenshots, then emits browser actions through Playwright, such as click, type, scroll, visit_url, or web_search. A UserSimulator agent supplies follow-up instructions when the task needs clarification.
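The division of labor between the three agents can be sketched as a simple loop. This is a toy simplification, not the Magentic-One or Magentic-UI API: the `Ledger` fields, the `orchestrate` function, and the stub surfer and user are hypothetical, and real WebSurfer actions would be executed in a browser through Playwright rather than returned as strings.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Ledger:
    """Orchestrator's record of task state (hypothetical, simplified fields)."""
    task: str
    plan: list[str]
    completed: list[str] = field(default_factory=list)
    actions: list[str] = field(default_factory=list)
    clarifications: list[str] = field(default_factory=list)


# A WebSurfer gets one subgoal and returns (browser actions, optional question).
WebSurfer = Callable[[str], tuple[list[str], Optional[str]]]
# A UserSimulator turns a clarification question into a follow-up instruction.
UserSimulator = Callable[[str], str]


def orchestrate(task: str, plan: list[str], surfer: WebSurfer,
                user: UserSimulator, max_rounds: int = 20) -> Ledger:
    """Walk the plan, delegating each subgoal and recording progress."""
    ledger = Ledger(task=task, plan=list(plan))
    pending = list(plan)
    for _ in range(max_rounds):
        if not pending:
            break
        subgoal = pending[0]
        actions, question = surfer(subgoal)
        ledger.actions.extend(actions)
        if question is not None:
            # Ask the simulated user, then retry the subgoal with the answer.
            answer = user(question)
            ledger.clarifications.append(answer)
            pending[0] = f"{subgoal} ({answer})"
            continue
        ledger.completed.append(pending.pop(0))
    return ledger


def toy_surfer(subgoal: str) -> tuple[list[str], Optional[str]]:
    if subgoal == "choose showtime":
        return [], "Which showtime do you prefer?"
    return [f"click: {subgoal}"], None


ledger = orchestrate("book two movie tickets",
                     ["search cinema", "choose showtime", "select 2 seats"],
                     toy_surfer, lambda q: "7:30 pm")
print(ledger.completed)
# ['search cinema', 'choose showtime (7:30 pm)', 'select 2 seats']
```

The `max_rounds` cap keeps a confused surfer from looping forever, a guard any real orchestrator needs in some form.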

Trajectory Verification uses three LLM-based verifiers. An Alignment Verifier checks that the actions and final answer match the task intent. A Rubric Verifier generates a rubric of subgoals and scores partial completion. A Multimodal Verifier inspects screenshots plus the final answer to catch hallucinations and confirm that visible evidence supports success. These verifiers agree with human labels on 83.3% of cases, with reported false positive and false negative rates of around 17 to 18%.
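The sketch below shows one way to combine the three verdicts and to measure agreement with human labels. The "all verifiers must pass" rule and the 0.8 rubric threshold are assumptions, not FaraGen's documented policy, and the false positive and false negative rates use the standard confusion-matrix definitions, which may differ from the ones in the paper.

```python
from dataclasses import dataclass


@dataclass
class Verdicts:
    alignment: bool        # actions and final answer match the task intent
    rubric_score: float    # fraction of rubric subgoals completed, in [0, 1]
    multimodal: bool       # screenshots support the final answer


def accept(v: Verdicts, rubric_threshold: float = 0.8) -> bool:
    # Hypothetical rule: keep a trajectory only if all three verifiers pass.
    return v.alignment and v.multimodal and v.rubric_score >= rubric_threshold


def agreement_stats(predicted: list[bool], human: list[bool]) -> dict[str, float]:
    """Agreement, false positive rate and false negative rate vs human labels."""
    if len(predicted) != len(human) or not predicted:
        raise ValueError("need two non-empty label lists of equal length")
    pairs = list(zip(predicted, human))
    tp = sum(p and h for p, h in pairs)
    tn = sum(not p and not h for p, h in pairs)
    fp = sum(p and not h for p, h in pairs)
    fn = sum(not p and h for p, h in pairs)
    return {
        "agreement": (tp + tn) / len(pairs),
        "fpr": fp / (fp + tn) if fp + tn else 0.0,   # failures wrongly accepted
        "fnr": fn / (fn + tp) if fn + tp else 0.0,   # successes wrongly rejected
    }


verifier = [accept(v) for v in [
    Verdicts(True, 0.9, True),
    Verdicts(True, 1.0, True),
    Verdicts(False, 0.5, True),
    Verdicts(True, 0.6, True),
    Verdicts(True, 0.85, True),
]]
human = [True, False, False, True, True]
stats = agreement_stats(verifier, human)
print(stats)
```

On this toy data the verifiers agree with the human labels on 3 of 5 trajectories; the reported 83.3% figure comes from the paper's much larger human-labeled set.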

After filtering, FaraGen yields 145,603 trajectories with 1,010,797 steps over 70,117 unique domains. The trajectories range from 3 to 84 steps, with an average of 6.9 steps and about 0.5 unique domains per trajectory, which indicates that many tasks involve sites not seen elsewhere in the dataset. Generating data with premium models such as GPT-5 and o3 costs roughly 1 dollar per verified trajectory.
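The averages follow directly from the reported totals. The last line is only a back-of-envelope figure that assumes the "roughly 1 dollar per verified trajectory" estimate applies across the whole dataset.

```python
TRAJECTORIES = 145_603
STEPS = 1_010_797
DOMAINS = 70_117
USD_PER_TRAJECTORY = 1.0  # "roughly 1 dollar" per verified trajectory


def dataset_stats(trajectories: int, steps: int, domains: int) -> dict[str, float]:
    """Per-trajectory averages derived from the dataset totals."""
    return {
        "steps_per_trajectory": steps / trajectories,
        "domains_per_trajectory": domains / trajectories,
    }


stats = dataset_stats(TRAJECTORIES, STEPS, DOMAINS)
print(f"{stats['steps_per_trajectory']:.1f}")    # 6.9
print(f"{stats['domains_per_trajectory']:.2f}")  # 0.48
# Rough total generation cost under the ~$1 per trajectory assumption.
print(f"~${TRAJECTORIES * USD_PER_TRAJECTORY:,.0f}")  # ~$145,603
```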

Model Architecture

Fara-7B is a multimodal decoder-only model that uses Qwen2.5-VL-7B as its base. It takes as input a user goal, the latest screenshots from the browser, and the full history of previous thoughts and actions. The context window is 128,000 tokens. At each step the model first generates a chain of thought describing the current state and the plan, then outputs a tool call that specifies the next action and its arguments.
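The per-step loop described above can be sketched as follows, with the model and browser passed in as plain callables so the control flow stays visible. In a real deployment `model` would call Fara-7B on the goal, recent screenshots, and history; the window of 3 screenshots, the `Step` and `AgentContext` types, and the scripted stand-ins are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    thought: str
    action: str
    args: dict


@dataclass
class AgentContext:
    goal: str
    history: list[Step] = field(default_factory=list)       # full thought/action history
    screenshots: list[bytes] = field(default_factory=list)
    max_screenshots: int = 3  # hypothetical window for "the latest screenshots"

    def observe(self, screenshot: bytes) -> None:
        self.screenshots.append(screenshot)
        # Drop older screenshots; the text history is never truncated here.
        overflow = len(self.screenshots) - self.max_screenshots
        if overflow > 0:
            del self.screenshots[:overflow]


Model = Callable[[AgentContext], Step]   # thinks, then picks one tool call
Browser = Callable[[Step], bytes]        # executes the action, returns a new screenshot


def run_agent(goal: str, model: Model, browser: Browser,
              first_screenshot: bytes, max_steps: int = 30) -> AgentContext:
    ctx = AgentContext(goal=goal)
    ctx.observe(first_screenshot)
    for _ in range(max_steps):
        step = model(ctx)               # think, then choose the next action
        ctx.history.append(step)
        if step.action == "terminate":
            break
        ctx.observe(browser(step))      # act, then observe the result
    return ctx


def scripted_model(ctx: AgentContext) -> Step:
    if len(ctx.history) < 5:
        return Step("Next result looks relevant.", "left_click", {"coordinate": [320, 240]})
    return Step("Found the price.", "terminate", {"status": "success"})


counter = iter(range(100))
ctx = run_agent("find the price", scripted_model,
                lambda step: f"shot-{next(counter)}".encode(), b"shot-initial")
print(len(ctx.history), ctx.screenshots)
```

Keeping only a short window of screenshots while retaining the full text history is one plausible way to stay within a 128,000-token budget, since images are far more token-hungry than thought and action text.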

The tool space matches the Magentic-UI computer_use interface. It includes key, type, mouse_move, left_click, scroll, visit_url, web_search, history_back, pause_and_memorize_fact, wait, and terminate. Coordinates are predicted directly as pixel positions on the screenshot, which allows the model to operate without access to the accessibility tree at inference time.
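Because the model emits raw pixel coordinates, a harness running it would typically validate each tool call before executing it. The sketch below encodes the eleven listed actions as an enum; the argument names in `REQUIRED_ARGS` (such as `coordinate`, `text`, `url`) are assumptions for illustration, not the documented Magentic-UI schema.

```python
from enum import Enum


class Action(str, Enum):
    KEY = "key"
    TYPE = "type"
    MOUSE_MOVE = "mouse_move"
    LEFT_CLICK = "left_click"
    SCROLL = "scroll"
    VISIT_URL = "visit_url"
    WEB_SEARCH = "web_search"
    HISTORY_BACK = "history_back"
    PAUSE_AND_MEMORIZE_FACT = "pause_and_memorize_fact"
    WAIT = "wait"
    TERMINATE = "terminate"


# Hypothetical required arguments per action; the real schema may differ.
REQUIRED_ARGS = {
    Action.KEY: {"keys"},
    Action.TYPE: {"text"},
    Action.MOUSE_MOVE: {"coordinate"},
    Action.LEFT_CLICK: {"coordinate"},
    Action.SCROLL: {"pixels"},
    Action.VISIT_URL: {"url"},
    Action.WEB_SEARCH: {"query"},
    Action.HISTORY_BACK: set(),
    Action.PAUSE_AND_MEMORIZE_FACT: {"fact"},
    Action.WAIT: {"time"},
    Action.TERMINATE: {"status"},
}


def validate(name: str, args: dict, width: int, height: int) -> Action:
    """Check that a tool call is in the tool space and grounded on the screenshot."""
    action = Action(name)  # raises ValueError for actions outside the tool space
    missing = REQUIRED_ARGS[action] - set(args)
    if missing:
        raise ValueError(f"{name} missing {sorted(missing)}")
    if "coordinate" in args:
        x, y = args["coordinate"]
        # Coordinates are pixel positions, so they must fall inside the screenshot.
        if not (0 <= x < width and 0 <= y < height):
            raise ValueError(f"coordinate {args['coordinate']} outside {width}x{height}")
    return action


action = validate("left_click", {"coordinate": [640, 88]}, width=1280, height=720)
print(action.value)  # left_click
```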

Training uses supervised fine-tuning over roughly 1.8 million samples that mix multiple data sources. These include the FaraGen trajectories broken into observe-think-act steps, grounding and UI localization tasks, screenshot-based visual question answering and captioning, and safety and refusal datasets.
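Breaking a trajectory into observe-think-act steps means each step becomes its own training sample, conditioned on the goal, the current screenshot, and everything that happened before it. The sketch below shows that split; the sample fields and the `thought\naction` target format are hypothetical, and the real pipeline's prompt templates are not described in the source.

```python
from dataclasses import dataclass


@dataclass
class TrajectoryStep:
    screenshot: str   # screenshot observed before acting (path or ID)
    thought: str
    action: str


@dataclass
class SFTSample:
    goal: str
    history: list[tuple[str, str]]   # (thought, action) pairs of earlier steps
    screenshot: str                  # current observation
    target: str                      # text the model learns to emit


def to_sft_samples(goal: str, steps: list[TrajectoryStep]) -> list[SFTSample]:
    """Turn one trajectory into one observe-think-act sample per step."""
    samples = []
    for i, step in enumerate(steps):
        history = [(s.thought, s.action) for s in steps[:i]]
        target = f"{step.thought}\n{step.action}"   # hypothetical target format
        samples.append(SFTSample(goal, history, step.screenshot, target))
    return samples


steps = [
    TrajectoryStep("s0.png", "Search for the product.", 'web_search(query="cotton t-shirt")'),
    TrajectoryStep("s1.png", "Open the first result.", "left_click(coordinate=[412, 300])"),
    TrajectoryStep("s2.png", "The price is listed; done.", 'terminate(status="success")'),
]
samples = to_sft_samples("Find a cotton T-shirt under $20", steps)
print(len(samples), len(samples[-1].history))  # 3 2
```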

Benchmarks and Efficiency

Microsoft evaluates Fara-7B on four live web benchmarks: WebVoyager, Online-Mind2Web, DeepShop, and the new WebTailBench, which focuses on underrepresented segments such as restaurant reservations, job applications, real estate search, comparison shopping, and multi-site compositional tasks.

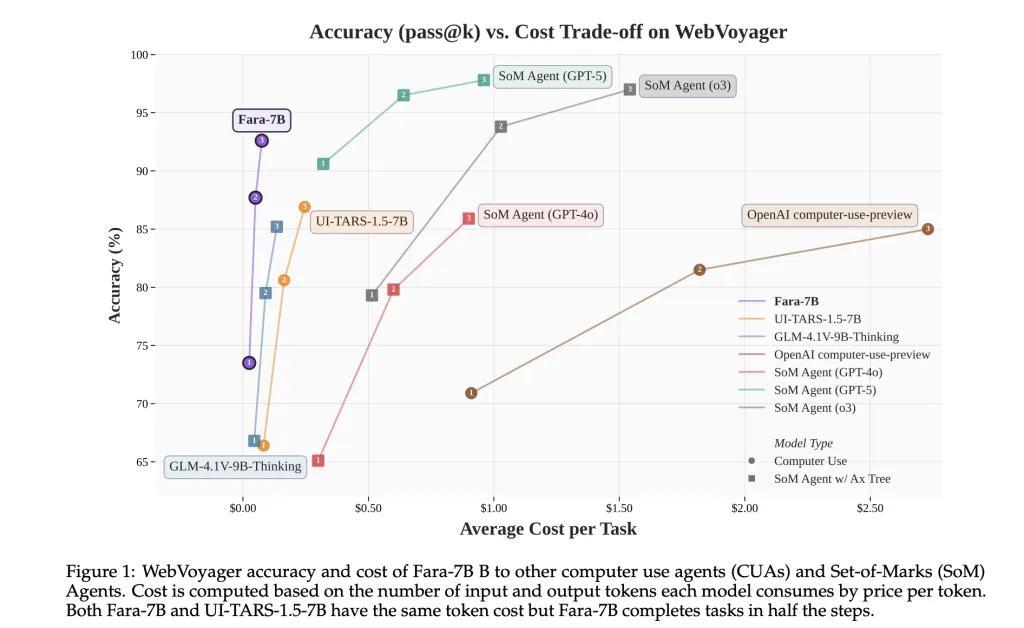

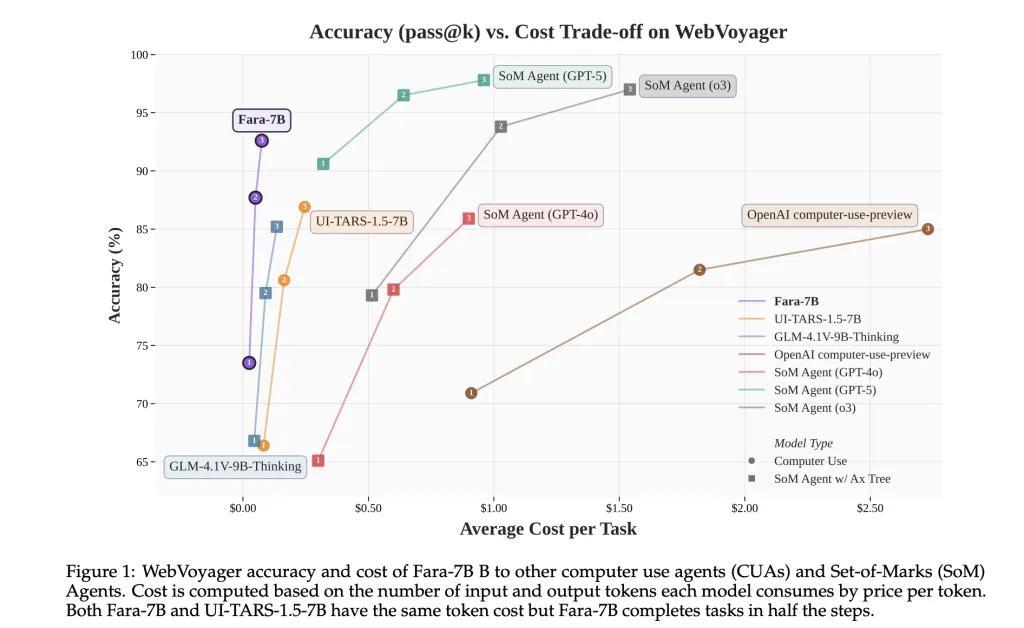

On these benchmarks, Fara-7B achieves 73.5% success on WebVoyager, 34.1% on Online-Mind2Web, 26.2% on DeepShop, and 38.4% on WebTailBench. This outperforms the 7B Computer Use Agent baseline UI-TARS-1.5-7B, which scores 66.4%, 31.3%, 11.6%, and 19.5% respectively, and compares favorably with larger systems such as OpenAI computer-use-preview and SoM Agent configurations built on GPT-4o.
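The size of the improvement over UI-TARS-1.5-7B varies a lot by benchmark. This short calculation uses only the success rates reported above to compute the absolute gain in points and the relative ratio for each one.

```python
FARA_7B = {"WebVoyager": 73.5, "Online-Mind2Web": 34.1,
           "DeepShop": 26.2, "WebTailBench": 38.4}
UI_TARS_1_5_7B = {"WebVoyager": 66.4, "Online-Mind2Web": 31.3,
                  "DeepShop": 11.6, "WebTailBench": 19.5}


def compare(a: dict[str, float], b: dict[str, float]) -> dict[str, tuple[float, float]]:
    """Absolute gain in points and relative ratio a/b for each shared benchmark."""
    return {name: (a[name] - b[name], a[name] / b[name]) for name in a if name in b}


for name, (gain, ratio) in compare(FARA_7B, UI_TARS_1_5_7B).items():
    print(f"{name:<16} +{gain:4.1f} pts  {ratio:.2f}x")
```

The gains range from 2.8 points on Online-Mind2Web to 18.9 points on WebTailBench, and DeepShop and WebTailBench roughly double.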

On WebVoyager, Fara-7B uses on average 124,000 input tokens and 1,100 output tokens per task, with about 16.5 actions. Using market token prices, the research team estimates an average cost of $0.025 per task, versus around $0.30 for SoM agents backed by proprietary reasoning models such as GPT-5 and o3. Fara-7B uses a similar number of input tokens but about one tenth the output tokens of these SoM agents.
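Per-task cost is a weighted sum of input and output tokens at per-million-token prices. The prices below are hypothetical and chosen only for illustration; they are not the market prices used in the paper. The point is structural: with roughly 113 input tokens per output token, input pricing dominates the bill unless output tokens cost more than about 113 times as much as input tokens.

```python
def cost_per_task(input_tokens: int, output_tokens: int,
                  usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Dollar cost of one task given per-million-token prices."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1e6


# Fara-7B on WebVoyager: ~124,000 input and ~1,100 output tokens per task.
IN_TOK, OUT_TOK = 124_000, 1_100
# Hypothetical prices in USD per million tokens, for illustration only.
PRICE_IN, PRICE_OUT = 0.20, 0.80

cost = cost_per_task(IN_TOK, OUT_TOK, PRICE_IN, PRICE_OUT)
input_share = IN_TOK * PRICE_IN / 1e6 / cost
print(f"${cost:.4f} per task, {input_share:.0%} from input tokens")
# Output spend exceeds input spend only above this output/input price ratio.
print(f"break-even price ratio: {IN_TOK / OUT_TOK:.0f}x")
```

Since the SoM agents consume a similar number of input tokens, the gap between about $0.025 and $0.30 per task reflects higher per-token prices for the proprietary models as well as their roughly tenfold larger output.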

Key Takeaways

- Fara-7B is a 7B parameter, open-weight Computer Use Agent built on Qwen2.5-VL-7B that operates directly from screenshots and text, then outputs grounded actions such as clicks, typing, and navigation, without relying on accessibility trees at inference time.

- The model is trained on 145,603 verified browser trajectories with 1,010,797 steps generated by the FaraGen pipeline, which uses multi-agent task proposal, task solving, and LLM-based verification on live websites across 70,117 domains.

- Fara-7B achieves 73.5% success on WebVoyager, 34.1% on Online-Mind2Web, 26.2% on DeepShop, and 38.4% on WebTailBench, improving over the 7B UI-TARS-1.5 baseline on all four benchmarks, with the largest gains on DeepShop and WebTailBench.

- On WebVoyager, Fara-7B uses about 124,000 input tokens and 1,100 output tokens per task, with an average of 16.5 actions, for an estimated cost of around $0.025 per task, compared with around $0.30 for SoM agents backed by GPT-5-class models, which also emit roughly ten times as many output tokens.

Editorial Notes

Fara-7B is a useful step toward practical Computer Use Agents that can run on local hardware at lower inference cost while preserving privacy. The combination of Qwen2.5-VL-7B, FaraGen synthetic trajectories, and WebTailBench gives a clear, well-instrumented path from multi-agent data generation to a single compact model that matches or exceeds larger systems on key benchmarks, while enforcing Critical Point safeguards (pausing for user approval before sensitive or irreversible steps) and refusal safeguards.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform has over 2 million monthly views, illustrating its popularity among audiences.