Algorithmic buying and selling methods are sometimes mentioned by way of fashions, indicators, and execution logic. Nonetheless, earlier than any of those elements may be developed, researchers should first remedy a extra elementary drawback: dependable entry to monetary information.

In skilled analysis environments, information infrastructure shouldn’t be a preliminary step. It’s a gating perform. If the info layer is inconsistent, each downstream mannequin inherits that instability. On this article, we concentrate on the foundational layer of systematic buying and selling: monetary information infrastructure. We’ll discover how monetary information APIs like FMP allow automated information ingestion, how they match into quantitative analysis pipelines, and the way builders can use them to construct scalable analysis workflows utilizing Python.

Why Information Infrastructure Issues in Algorithmic Buying and selling

Information infrastructure determines whether or not a quantitative workflow can function reliably at scale. Earlier than fashions may be evaluated or indicators may be examined, researchers should be certain that monetary information is on the market in a structured, constant, and scalable type. With out this basis, even well-designed methods change into tough to validate or reproduce.

Systematic Buying and selling Requires Dependable Information Inputs

In systematic buying and selling, information points are not often apparent at first. Small inconsistencies in inputs, corresponding to lacking values, misaligned timestamps, or formatting variations, propagate via the pipeline and deform characteristic calculations, mannequin outputs, and backtest outcomes.

Information Necessities in Quantitative Analysis

Quantitative workflows depend on a number of datasets that should combine seamlessly, together with value information, monetary statements, and event-driven info. As analysis expands throughout bigger universes and time intervals, managing these datasets turns into more and more advanced with out correct infrastructure.

Challenges with Handbook Information Assortment

Within the absence of automation, information assortment rapidly turns into inefficient.

Handbook workflows, corresponding to downloading spreadsheets or copying information from a number of sources, introduce a number of limitations. These strategies may fit for small analyses, however don’t scale effectively in a analysis surroundings.

Some frequent points embrace:

- Variations in information formatting throughout sources

- Issue in sustaining up to date datasets

- Restricted scalability when working with many securities

- Lowered reproducibility of outcomes

These challenges typically result in inconsistencies, making it tough to validate analysis outcomes over time.

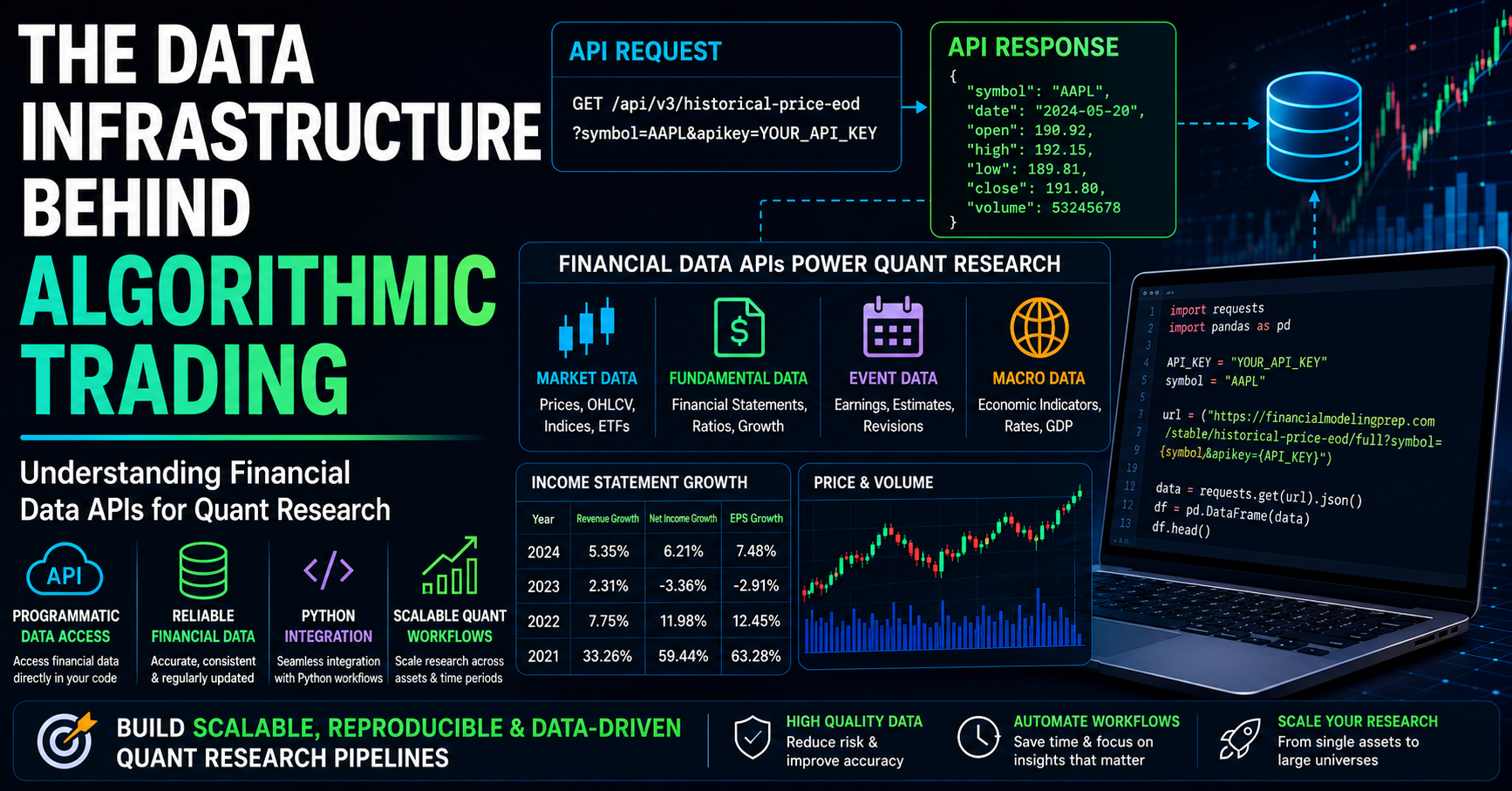

Monetary Information APIs because the Basis

Monetary information APIs allow a structured and automatic approach to retrieve datasets instantly right into a analysis surroundings, changing guide workflows with programmatic entry.

In quantitative analysis pipelines, APIs primarily energy the info ingestion layer, the place datasets corresponding to costs, monetary statements, and estimates are retrieved and built-in instantly into the workflow. This allows repeatable information entry, simpler integration with Python-based pipelines, and extra scalable analysis processes.

What Is a Monetary Information API

A monetary information API allows programmatic entry to monetary datasets, permitting information to be retrieved instantly inside code relatively than collected manually. It acts as an interface between an information supplier and a analysis surroundings, enabling information to be requested and consumed instantly inside code. In follow, builders additionally consider APIs primarily based on latency, fee limits, schema consistency, and the way reliably endpoints behave throughout large-scale requests.

In quantitative workflows, this implies a Python script or pocket book can retrieve datasets corresponding to historic costs, monetary statements, or analyst estimates with out counting on guide downloads.

How an API Works in Follow

The next diagram summarises how a typical API interplay flows from request to information utilization:

For instance, monetary information platforms expose endpoints for datasets corresponding to firm profiles, historic value information, and monetary statements, all of which comply with this request–response sample.

Handbook vs Programmatic Information Retrieval

The distinction between guide workflows and API-based workflows turns into clear when evaluating how information is collected

| Facet | Handbook Information Retrieval | Programmatic Information Retrieval |

|---|---|---|

| Effort | Excessive (repeated guide steps) | Low (automated via code) |

| Scalability | Restricted to small datasets | Scales throughout massive datasets |

| Consistency | Susceptible to formatting inconsistencies | Standardised and structured |

| Replace Course of | Requires guide updates | Robotically refreshable |

| Reproducibility | Tough to duplicate | Absolutely reproducible workflows |

For quantitative analysis, programmatic entry is considerably extra dependable as a result of it reduces human intervention and ensures consistency.

Authentication Utilizing API Keys

Most monetary information APIs require authentication via an API key, which is included with every request. It verifies consumer id and allows suppliers to handle entry and utilization limits.

Why APIs Are Central to Quant Workflows

Monetary information APIs remodel information entry right into a programmable element of the analysis pipeline. As an alternative of guide preparation, information retrieval turns into built-in instantly into code, enabling constant inputs, sooner iteration, and scalable workflows.

This makes APIs a core a part of fashionable quantitative analysis environments.

Sorts of Monetary Information Utilized in Algorithmic Buying and selling

As soon as information may be accessed programmatically, the following step is knowing what sorts of datasets are generally utilized in quantitative analysis. These datasets type the enter layer of most analysis pipelines and are sometimes retrieved via monetary information APIs.

| Kind of Information | Examples |

|---|---|

| Market Information | Historic value information (open, excessive, low, shut, quantity), intraday value collection, index and ETF costs |

| Basic Information | Earnings statements (income, internet revenue), steadiness sheets (property, liabilities), money circulate statements, and monetary ratios |

| Occasion & Macro Information | Earnings calendars, analyst estimates and revisions, financial indicators (inflation, rates of interest, GDP) |

These datasets type the enter layer of most quantitative analysis workflows and are sometimes accessed via monetary information APIs.

Constructing a Quant Analysis Pipeline Utilizing a Monetary Information API

To grasp how monetary information APIs assist real-world workflows, let’s stroll via a simplified analysis situation. The aim is to not construct a buying and selling technique, however to show how information flows via a structured pipeline utilizing dependable and repeatable information sources.

Situation

A researcher needs to guage whether or not enhancing fundamentals can function a constant cross-sectional sign throughout a big universe of equities. This requires combining a number of datasets and analysing how monetary efficiency traits relate to noticed value behaviour throughout totally different corporations.

Workflows like this are generally taught in structured quantitative finance packages, and mirror how structured analysis pipelines are in-built each tutorial {and professional} quant environments.

APIs Utilized in This Workflow

Earlier than constructing the pipeline, we outline the datasets and APIs required.

1. Historic Value Information: Retrieve end-of-day value information for time-series evaluation.

2. Earnings Assertion Progress Information: Retrieve progress metrics corresponding to income progress and earnings progress.

The way to Get Your API Key

To entry most monetary information APIs, customers sometimes register for an API key, which is included in requests for authentication and utilization monitoring. For instance, you’ll be able to register at Monetary Modeling Prep.

After registration, your API key shall be out there in your dashboard. Change “YOUR_API_KEY” within the code examples under along with your private key to authenticate requests.

Step 1: Information Ingestion

Step one within the pipeline is retrieving structured datasets utilizing monetary information APIs. As an alternative of manually amassing information, we fetch each market information and elementary information programmatically.

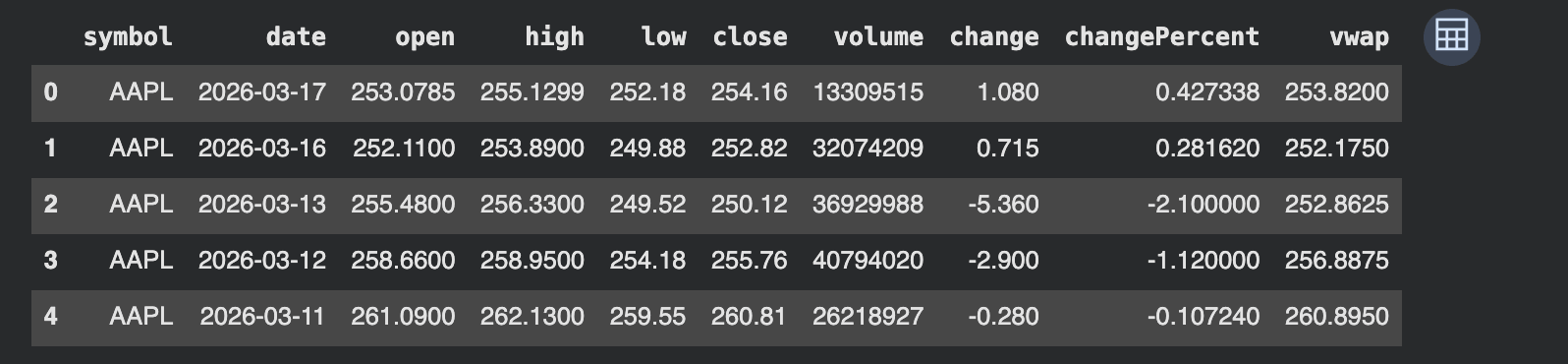

Fetching Historic Value Information

We start by retrieving end-of-day value information, which shall be used to analyse value behaviour over time.

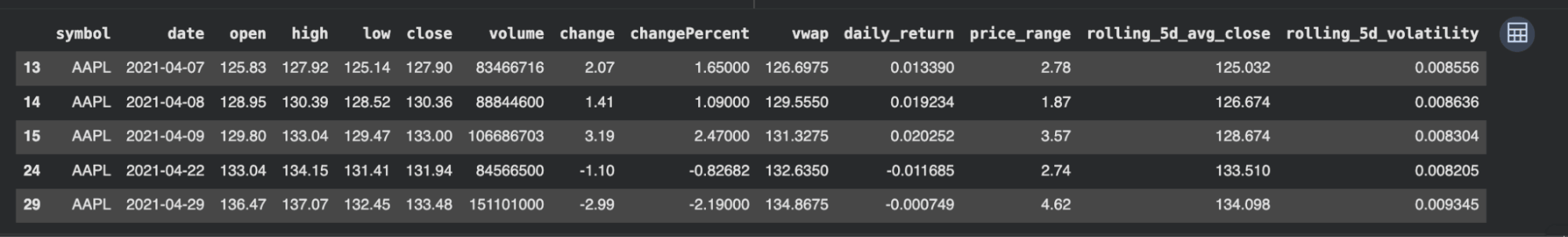

The output confirms that the API returned structured end-of-day value information for Apple in a clear tabular format. Every row represents one buying and selling day, whereas the columns seize key market fields corresponding to open, excessive, low, shut, quantity, every day value change, proportion change, and VWAP.

This issues as a result of the dataset is straight away usable inside a analysis surroundings with out extra guide formatting. A researcher can type by date, filter particular intervals, calculate rolling statistics, align costs with elementary occasions, or merge this desk with different datasets corresponding to revenue assertion progress or analyst estimates.

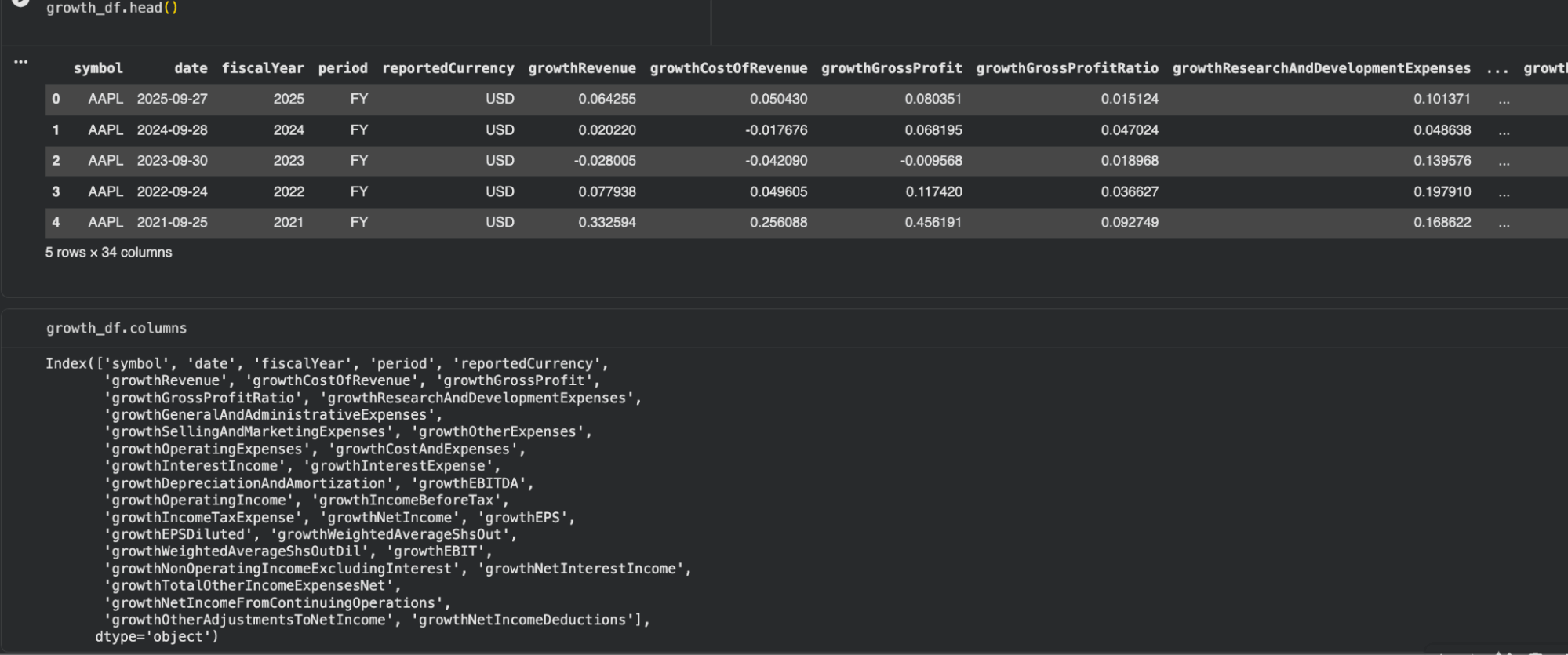

Fetching Basic Progress Information

To assist the analysis goal, we additionally retrieve elementary progress metrics. These datasets assist seize how an organization’s monetary efficiency is evolving over time.

Interpretation

The output exhibits that the API returned structured elementary progress information for Apple throughout a number of fiscal years. Every row represents a reporting interval, whereas the columns seize progress metrics throughout totally different elements of the revenue assertion.

Key observations from the dataset:

- growthRevenue displays how the corporate’s income has modified 12 months over 12 months

- growthGrossProfit and growthGrossProfitRatio present perception into profitability traits

- growthNetIncome and growthEPS point out how earnings are evolving

- Extra fields, corresponding to working bills, R&D, and EBITDA progress, present a deeper breakdown of enterprise efficiency

The dataset is already preprocessed, that means progress values are instantly out there with out requiring guide calculations. This reduces preprocessing effort and ensures consistency throughout corporations.

One other essential facet is the time granularity. In contrast to value information (every day), this dataset is reported at a monetary interval degree (annual on this case). This distinction turns into essential when combining datasets later within the pipeline.

Total, this dataset captures how the corporate’s fundamentals are evolving over time, which enhances the price-based dataset retrieved earlier.

Step 2: Function Engineering

As soon as each market information and elementary information can be found, the following step is to rework them into structured variables that may assist evaluation.

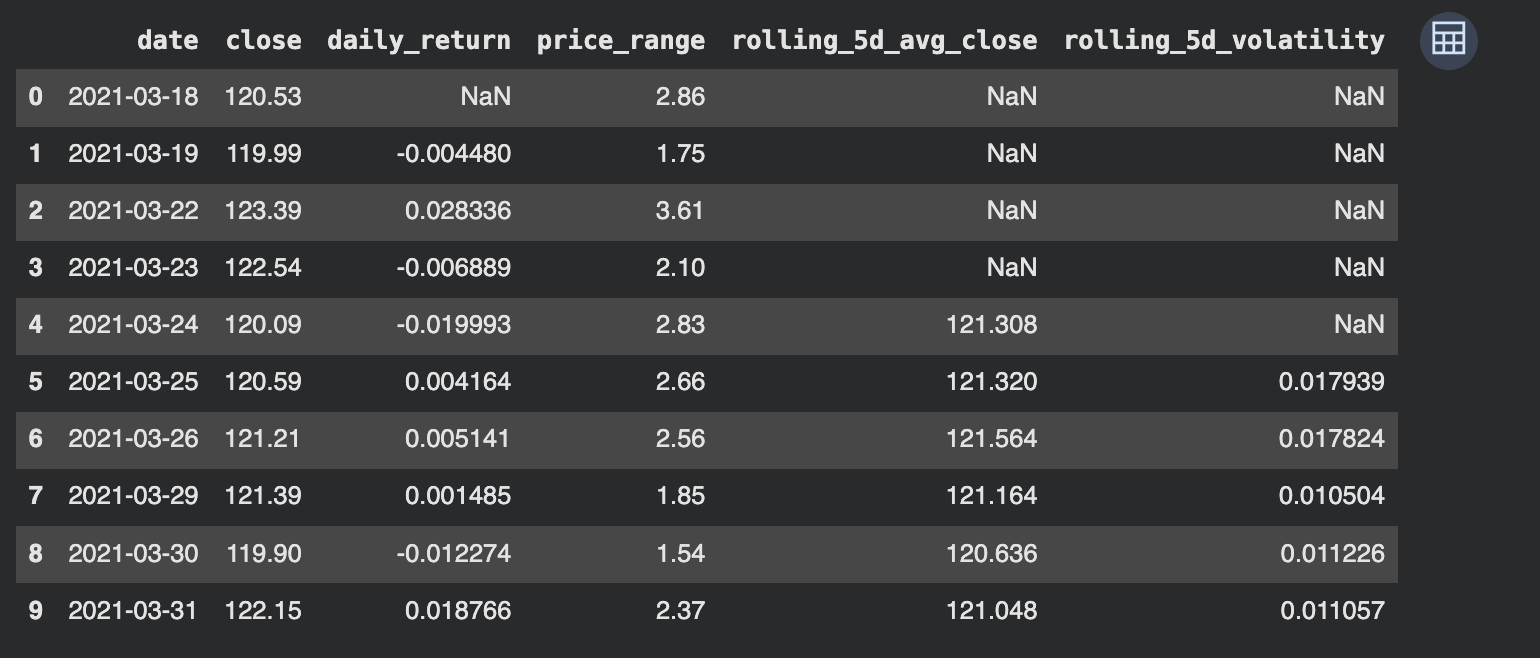

Engineering Value-Primarily based Options

We start by deriving options from the value dataset.

The engineered dataset exhibits how uncooked value information has been reworked into structured options that assist evaluation.

Every characteristic captures a distinct facet of market behaviour:

- daily_return measures short-term value motion

- price_range displays intraday volatility

- rolling_5d_avg_close captures short-term development course

- rolling_5d_volatility measures the steadiness of returns over time

Preliminary NaN values are anticipated, as rolling calculations require a minimal variety of observations.

This step highlights how uncooked market information is transformed into options that may be in contrast throughout time and mixed with different datasets, corresponding to monetary statements.

Working with Basic Progress Options

In contrast to value information, the basic dataset already gives engineered progress variables. These can be utilized instantly with out extra transformation.

Examples of accessible options embrace:

- Income progress (growthRevenue)

- Gross revenue progress (growthGrossProfit)

- Web revenue progress (growthNetIncome)

- Earnings per share progress (growthEPS)

These variables describe how the corporate’s monetary efficiency is altering over time, which is central to many analysis workflows.

Aligning Value and Basic Information

An essential step in characteristic engineering is aligning datasets that function at totally different frequencies. Incorrect alignment between reporting intervals and value information is without doubt one of the most typical sources of bias in quantitative analysis, significantly when elementary information is forward-filled improperly.

- Value information → every day

- Basic information → annual or quarterly

To mix them, researchers sometimes map elementary values to corresponding value intervals. This may be executed utilizing methods corresponding to:

- Ahead filling monetary information throughout every day rows (with warning to keep away from look-ahead bias)

- Merging datasets primarily based on reporting dates

- Creating time-aligned snapshots

Beneath is a simplified instance illustrating how these datasets may be aligned:

This alignment ensures that each market behaviour and enterprise efficiency may be analysed collectively inside a unified dataset.

Key End result of Function Engineering

At this stage, the dataset comprises:

- Value-based options describing market motion

- Basic options describing enterprise efficiency

This mix allows extra significant evaluation, the place value behaviour may be evaluated alongside adjustments in firm fundamentals.

Step 3: Speculation Testing

With each price-based options and elementary progress information out there, the researcher can now start evaluating relationships throughout the information in a structured means.

At this stage, the aim is to not construct a buying and selling technique, however to check whether or not observable patterns exist between market behaviour and underlying enterprise efficiency.

Defining the Analysis Query

A easy and significant speculation may very well be:

Do intervals of secure value behaviour systematically align with enhancing firm fundamentals?

This connects two key dimensions:

- Market behaviour → captured via value volatility

- Enterprise efficiency → captured via progress metrics

Structuring the Evaluation

To guage this speculation, the researcher can:

- Determine intervals the place short-term volatility is comparatively low

(utilizing rolling_5d_volatility) - Observe corresponding elementary progress traits, corresponding to:

- Income progress (growthRevenue)

- Web revenue progress (growthNetIncome)

- Examine whether or not:

- Steady value intervals coincide with enhancing fundamentals

- Or whether or not no constant relationship exists

Instance Workflow

A simplified analytical strategy may contain:

The filtered dataset highlights intervals the place short-term volatility is comparatively low, primarily based on the decrease quantile of the rolling 5-day volatility.

From the pattern:

- The rolling_5d_volatility values (~0.008–0.009) point out comparatively secure value actions

- Throughout these intervals, daily_return values are average and managed, with out excessive spikes

- The price_range stays inside a slim band, suggesting restricted intraday fluctuation

- The rolling_5d_avg_close strikes step by step, indicating clean short-term traits relatively than abrupt value adjustments

For instance:

- On 2021-04-07 to 2021-04-09, costs present regular upward motion with low volatility

- On 2021-04-22 and 2021-04-29, even when returns flip barely detrimental, volatility stays contained, suggesting managed corrections relatively than sharp declines

This output demonstrates that:

- Low volatility intervals will not be essentially flat markets

- They’ll symbolize secure development phases, though this relationship could range throughout property and market circumstances.

- These phases are structurally totally different from high-volatility intervals, which have a tendency to incorporate abrupt actions and uncertainty

This statement highlights how combining price-based options with elementary progress information allows a extra structured analysis of market behaviour, transferring the evaluation from descriptive patterns to testable relationships.

Step 4: Scaling the Analysis

In {most professional} environments, analysis pipelines are designed to function throughout a whole bunch or hundreds of securities, making scalability a core requirement relatively than an optimisation.

As soon as the workflow is validated for a single firm, the identical pipeline may be prolonged throughout a bigger universe of securities.

As a result of each information ingestion and have engineering are applied in code, the method may be repeated with minimal adjustments.

In follow, scaling could contain:

- Operating the pipeline throughout a listing of symbols as an alternative of a single inventory

- Combining outcomes right into a unified dataset for cross-sectional evaluation

- Refreshing the info at common intervals utilizing automated scripts

- Reusing the identical characteristic definitions throughout a number of analysis questions

For instance, the identical characteristic engineering logic utilized to Apple may be utilized to a whole bunch of shares utilizing a easy loop or batch course of.

That is the place monetary information APIs play a essential position. They permit researchers to maneuver from remoted examples to scalable analysis methods, the place information retrieval, transformation, and evaluation may be executed persistently throughout massive datasets.

Why Information High quality Issues in Systematic Buying and selling

At this stage, the analysis pipeline is structured and repeatable. Nonetheless, the reliability of any evaluation nonetheless will depend on the standard of the underlying information. Even well-designed workflows can produce deceptive outcomes if the enter information is incomplete, inconsistent, or incorrectly adjusted.

Information high quality is not only a technical concern. It instantly impacts how precisely a researcher can consider patterns, evaluate corporations, and validate hypotheses.

Widespread Information Points in Quantitative Analysis

Monetary datasets typically include points that aren’t instantly seen however can considerably have an effect on evaluation. Widespread challenges embrace survivorship bias, lookahead bias from incorrect timestamps, lacking historic information, and improper dealing with of company actions corresponding to splits or dividends.

These points change into extra pronounced when scaling evaluation throughout a number of securities or time intervals.

Impression on Analysis Outcomes

Information high quality instantly impacts each stage of the pipeline, from characteristic engineering to speculation testing. Inconsistent or incomplete information can result in distorted indicators, unreliable comparisons, and deceptive conclusions.

Position of Monetary Information APIs

Dependable monetary information APIs assist cut back many of those challenges by offering standardised and structured datasets. With constant schemas, preprocessed metrics, and common updates, APIs make it simpler to combine a number of datasets right into a unified analysis pipeline.

Why Information High quality Is Foundational

Information high quality instantly influences the credibility of quantitative analysis.

If the enter information is dependable:

- Outcomes are simpler to validate

- Experiments are reproducible

- Insights are extra constant

If the enter information is flawed:

- Patterns could seem the place none exist

- Comparisons throughout securities could also be inaccurate

- Analysis conclusions change into tough to belief

Because of this information high quality is taken into account a foundational layer in systematic buying and selling. Earlier than evaluating any speculation, researchers should be certain that the info getting used is correct, constant, and full.

Key Takeaways

- For practitioners, the important thing takeaway is that dependable information infrastructure is a prerequisite for significant backtesting, strong characteristic engineering, and scalable technique improvement.

- Platforms like Monetary information APIs allow constant and programmatic entry to datasets corresponding to costs, monetary statements, and analyst estimates.

- Integrating APIs into Python workflows improves automation, reduces guide effort, and ensures reproducibility.

- A well-designed information layer permits analysis pipelines to scale throughout a number of securities and time intervals.

- Information consistency and high quality instantly impression the reliability of options, indicators, and analysis conclusions.

- In follow, the reliability of a quantitative workflow relies upon extra on the energy of its information pipeline than on the complexity of its fashions.

Concerning the Contributor

Monetary Modeling Prep (FMP) gives structured monetary information APIs used throughout quantitative analysis, funding evaluation, and developer workflows. Its platform is designed to assist scalable entry to market information, monetary statements, estimates, and different datasets generally utilized in systematic analysis environments.

Disclaimer: All information and knowledge offered on this article are for informational functions solely. QuantInsti® makes no representations as to accuracy, completeness, currentness, suitability, or validity of any info on this article and won’t be responsible for any errors, omissions, or delays on this info or any losses, accidents, or damages arising from its show or use. All info is offered on an as-is foundation.