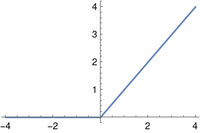

When a function is not differentiable in the classical sense there are multiple ways to compute a generalized derivative. This post will look at three generalizations of the classical derivative, each applied to the ReLU (rectified linear unit) function. The ReLU function is a commonly used activation function for neural networks. It’s also called the ramp function for obvious reasons.

The function is simply r(x) = max(0, x).

Pointwise derivative

The pointwise derivative would be 0 for x < 0, 1 for x > 0, and undefined at x = 0. So except at 0, the pointwise derivative of the ramp function is the Heaviside function H(x).
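As a rough numerical illustration of the pointwise derivative, the sketch below compares one-sided difference quotients of the ramp function. The names `relu`, `heaviside`, and `one_sided_slopes` are hypothetical helpers introduced here, and the step size `h` is an arbitrary choice.

```python
def relu(x: float) -> float:
    """Ramp function r(x) = max(0, x)."""
    return max(0.0, x)


def heaviside(x: float) -> float:
    """Pointwise derivative of relu away from 0.

    Raises at x == 0, where the pointwise derivative does not exist.
    """
    if x == 0:
        raise ValueError("pointwise derivative of ReLU is undefined at 0")
    return 1.0 if x > 0 else 0.0


def one_sided_slopes(f, x: float, h: float = 1e-6):
    """Return (left, right) difference quotients of f at x."""
    left = (f(x) - f(x - h)) / h
    right = (f(x + h) - f(x)) / h
    return left, right


# Away from 0 the two one-sided slopes agree (up to rounding) with heaviside(x).
print(one_sided_slopes(relu, 2.0), heaviside(2.0))
print(one_sided_slopes(relu, -2.0), heaviside(-2.0))

# At 0 the one-sided slopes disagree, so no pointwise derivative exists there.
print(one_sided_slopes(relu, 0.0))  # (0.0, 1.0)
```

The disagreement between the left and right slopes at 0 is the sharp corner of the ramp showing up numerically.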

In a real analysis course, you’d simply say r′(x) = H(x) because functions are only defined up to equivalence modulo sets of measure zero, i.e. the definition at x = 0 doesn’t matter.

Distributional derivative

In distribution theory you’d identify the function r(x) with the distribution whose action on a test function φ is

$$\langle r, \varphi \rangle = \int_{-\infty}^{\infty} r(x)\, \varphi(x)\, dx.$$

Then the derivative of r would be the distribution r′ satisfying

$$\langle r', \varphi \rangle = -\langle r, \varphi' \rangle = -\int_{-\infty}^{\infty} r(x)\, \varphi'(x)\, dx$$

for all smooth functions φ with compact support. You can prove using integration by parts that the above equals the integral of φ from 0 to ∞, which is the same as the action of H(x) on φ.
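The integration by parts step can be written out as follows. This is a sketch that assumes the standard definition of the distributional derivative, ⟨r′, φ⟩ = −⟨r, φ′⟩, and uses the fact that r(x) = 0 for x < 0 and r(x) = x for x ≥ 0.

$$
\begin{aligned}
\langle r', \varphi \rangle
&= -\int_{-\infty}^{\infty} r(x)\, \varphi'(x)\, dx
 = -\int_{0}^{\infty} x\, \varphi'(x)\, dx \\
&= -\Big[\, x\, \varphi(x) \Big]_{0}^{\infty} + \int_{0}^{\infty} \varphi(x)\, dx \\
&= \int_{0}^{\infty} \varphi(x)\, dx
 = \int_{-\infty}^{\infty} H(x)\, \varphi(x)\, dx .
\end{aligned}
$$

The boundary term vanishes because x φ(x) is zero at x = 0 and φ is zero outside a bounded set.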

In this case the distributional derivative of r is the same as the pointwise derivative of r interpreted as a distribution. This does not happen in general [1]. For example, the pointwise derivative of H is zero wherever it exists, but the distributional derivative of H is δ, the Dirac delta distribution.
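The claim about H can be checked numerically. The sketch below assumes the standard definition ⟨H′, φ⟩ = −⟨H, φ′⟩ and uses a particular bump function as the test function. The helpers `bump`, `bump_prime`, and `midpoint_integral` are hypothetical names chosen for illustration, and the midpoint rule with `n = 20000` is just one convenient quadrature choice.

```python
import math


def bump(x: float) -> float:
    """Smooth test function with compact support [-1, 1]."""
    if abs(x) >= 1.0:
        return 0.0
    return math.exp(-1.0 / (1.0 - x * x))


def bump_prime(x: float) -> float:
    """Derivative of bump, computed analytically via the chain rule."""
    if abs(x) >= 1.0:
        return 0.0
    u = 1.0 - x * x
    return bump(x) * (-2.0 * x) / (u * u)


def midpoint_integral(f, a: float, b: float, n: int = 20000) -> float:
    """Approximate the integral of f over [a, b] with the midpoint rule."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


# H is 1 on (0, inf) and bump vanishes outside [-1, 1], so
# -<H, phi'> reduces to minus the integral of phi' over [0, 1].
action_of_H_prime = -midpoint_integral(bump_prime, 0.0, 1.0)

# The delta distribution acts on phi by evaluation at 0.
action_of_delta = bump(0.0)

print(action_of_H_prime, action_of_delta)
```

The two printed numbers should agree to within quadrature error, whereas treating the pointwise derivative of H (zero away from the origin) as a distribution would give 0 for every test function.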

For more on distributional derivatives, see How to differentiate a non-differentiable function.

Subgradient

The subgradient of a function f at a point x, written ∂f(x), is the set of slopes of tangent lines to the graph of f at x. If f is differentiable at x, then there is only one slope, namely f′(x), and we typically say the subgradient of f at x is simply f′(x) when strictly speaking we should say it is the one-element set {f′(x)}.

A line tangent to the graph of the ReLU function at a negative value of x has slope 0, and a tangent line at a positive x has slope 1. But because there’s a sharp corner at x = 0, a tangent at this point could have any slope between 0 and 1, so ∂r(0) is the interval [0, 1].
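One way to make the set-valued nature concrete is to represent the subgradient of the ramp function as a closed interval. The sketch below does that and tests candidate slopes against the usual convex-analysis inequality r(y) ≥ r(x) + g·(y − x), which is assumed here as the formal version of "tangent line lying below the graph". The function names and the sample grid are hypothetical.

```python
from typing import Iterable, Tuple


def relu(x: float) -> float:
    """Ramp function r(x) = max(0, x)."""
    return max(0.0, x)


def relu_subgradient(x: float) -> Tuple[float, float]:
    """Return the subgradient of relu at x as a closed interval (lo, hi)."""
    if x < 0:
        return (0.0, 0.0)  # single slope 0
    if x > 0:
        return (1.0, 1.0)  # single slope 1
    return (0.0, 1.0)      # every slope between 0 and 1 at the corner


def is_subgradient(g: float, x: float, points: Iterable[float]) -> bool:
    """Check r(y) >= r(x) + g * (y - x) at each sample point y."""
    tol = 1e-12  # small slack for floating-point rounding
    return all(relu(y) >= relu(x) + g * (y - x) - tol for y in points)


samples = [k / 10.0 for k in range(-30, 31)]  # grid on [-3, 3]

print(relu_subgradient(0.0))              # (0.0, 1.0)
print(is_subgradient(0.5, 0.0, samples))  # slope inside [0, 1]
print(is_subgradient(1.5, 0.0, samples))  # slope outside [0, 1]
```

Returning an interval rather than a number keeps the set-valued nature explicit, at the cost of making callers decide which element to use at x = 0.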

My dissertation was full of subgradients of convex functions. This made me uneasy because subgradients are not real-valued functions; they’re set-valued functions. Most of the time you can blithely ignore this distinction, but there’s always a nagging suspicion that it’s going to bite you unexpectedly.

[1] When is the pointwise derivative of f as a function equal to the derivative of f as a distribution? It’s not enough for f to be continuous, but it is sufficient for f to be absolutely continuous.