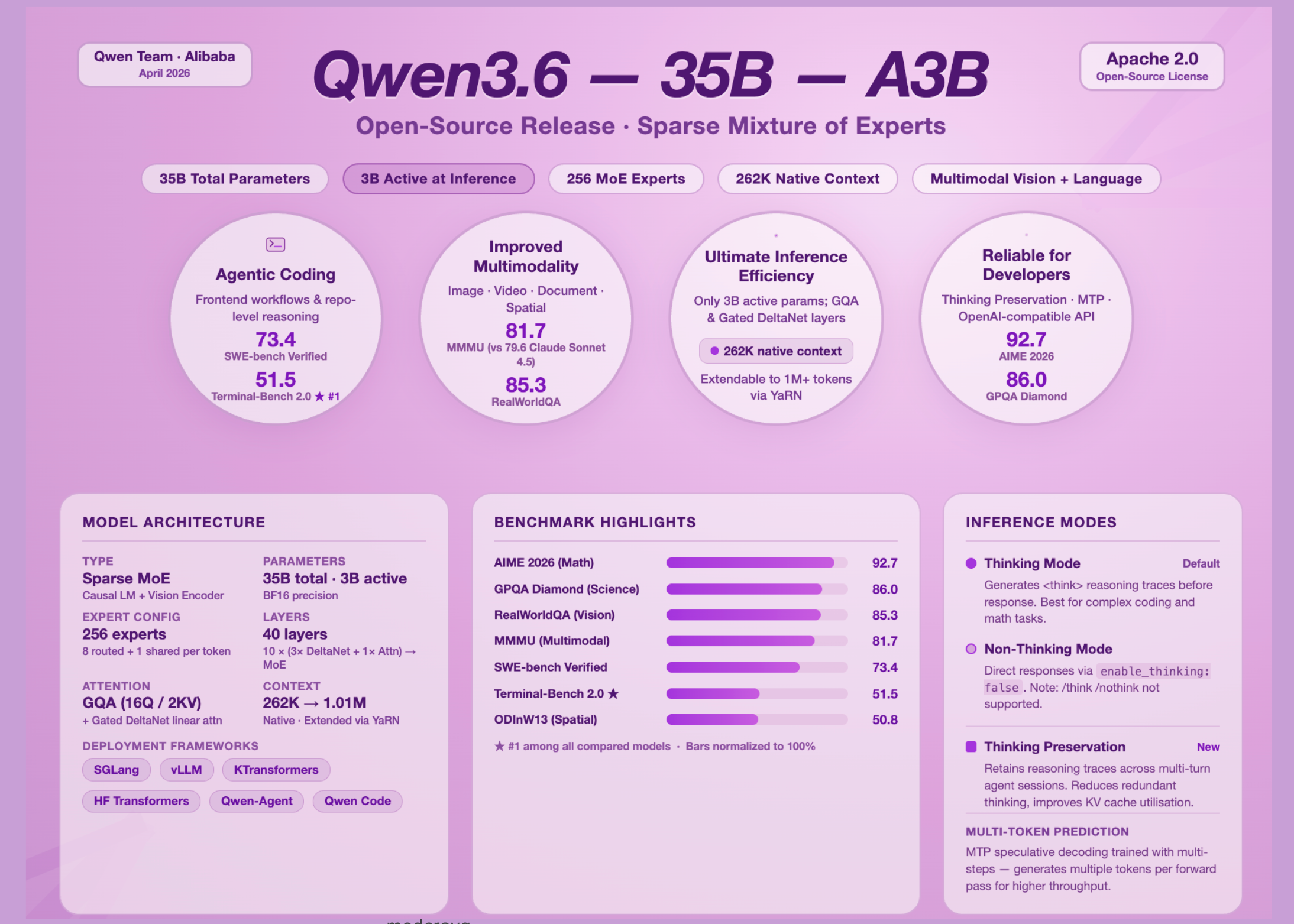

The open-source AI panorama has a brand new entry value listening to. The Qwen group at Alibaba has launched Qwen3.6-35B-A3B, the primary open-weight mannequin from the Qwen3.6 technology, and it’s making a compelling argument that parameter effectivity issues way over uncooked mannequin dimension. With 35 billion complete parameters however solely 3 billion activated throughout inference, this mannequin delivers agentic coding efficiency aggressive with dense fashions which are ten instances its lively dimension.

What’s a Sparse MoE Mannequin, and Why Does it Matter Right here?

A Combination of Consultants (MoE) mannequin doesn’t run all of its parameters on each ahead move. As a substitute, the mannequin routes every enter token via a small subset of specialised sub-networks referred to as ‘specialists.’ The remainder of the parameters sit idle. This implies you’ll be able to have an unlimited complete parameter depend whereas protecting inference compute — and subsequently inference value and latency — proportional solely to the lively parameter depend.

Qwen3.6-35B-A3B is a Causal Language Mannequin with Imaginative and prescient Encoder, skilled via each pre-training and post-training levels, with 35 billion complete parameters and three billion activated. Its MoE layer accommodates 256 specialists in complete, with 8 routed specialists and 1 shared professional activated per token.

The structure introduces an uncommon hidden format value understanding: the mannequin makes use of a sample of 10 blocks, every consisting of three situations of (Gated DeltaNet → MoE) adopted by 1 occasion of (Gated Consideration → MoE). Throughout 40 complete layers, the Gated DeltaNet sublayers deal with linear consideration — a computationally cheaper various to plain self-attention — whereas the Gated Consideration sublayers use Grouped Question Consideration (GQA), with 16 consideration heads for Q and solely 2 for KV, considerably lowering KV-cache reminiscence stress throughout inference. The mannequin helps a local context size of 262,144 tokens, extensible as much as 1,010,000 tokens utilizing YaRN (Yet one more RoPE extensioN) scaling.

Agentic Coding is The place This Mannequin Will get Critical

On SWE-bench Verified — the canonical benchmark for real-world GitHub problem decision — Qwen3.6-35B-A3B scores 73.4, in comparison with 70.0 for Qwen3.5-35B-A3B and 52.0 for Gemma4-31B. On Terminal-Bench 2.0, which evaluates an agent finishing duties inside an actual terminal surroundings with a three-hour timeout, Qwen3.6-35B-A3B scores 51.5 — the very best amongst all in contrast fashions, together with Qwen3.5-27B (41.6), Gemma4-31B (42.9), and Qwen3.5-35B-A3B (40.5).

Frontend code technology exhibits the sharpest enchancment. On QwenWebBench, an inside bilingual front-end code technology benchmark masking seven classes together with Net Design, Net Apps, Video games, SVG, Information Visualization, Animation, and 3D, Qwen3.6-35B-A3B achieves a rating of 1397 — effectively forward of Qwen3.5-27B (1068) and Qwen3.5-35B-A3B (978).

On STEM and reasoning benchmarks, the numbers are equally putting. Qwen3.6-35B-A3B scores 92.7 on AIME 2026 (the complete AIME I & II), and 86.0 on GPQA Diamond — a graduate-level scientific reasoning benchmark — each aggressive with a lot bigger fashions.

Multimodal Imaginative and prescient Efficiency

Qwen3.6-35B-A3B is just not a text-only mannequin. It ships with a imaginative and prescient encoder and handles picture, doc, video, and spatial reasoning duties natively.

On MMMU (Huge Multi-discipline Multimodal Understanding), a benchmark that exams university-level reasoning throughout photos, Qwen3.6-35B-A3B scores 81.7, outperforming Claude-Sonnet-4.5 (79.6) and Gemma4-31B (80.4). On RealWorldQA, which exams visible understanding in real-world photographic contexts, the mannequin achieves 85.3, forward of Qwen3.5-27B (83.7) and considerably above Claude-Sonnet-4.5 (70.3) and Gemma 4-31B (72.3).

Spatial intelligence is one other space of measurable achieve. On ODInW13, an object detection benchmark, Qwen3.6-35B-A3B scores 50.8, up from 42.6 for Qwen3.5-35B-A3B. For video understanding, it achieves 83.7 on VideoMMMU, outperforming Claude-Sonnet-4.5 (77.6) and Gemma4-31B (81.6).

Considering Mode, Non-Considering Mode, and a Key Behavioral Change

One of many extra virtually helpful design selections in Qwen3.6 is express management over the mannequin’s reasoning conduct. Qwen3.6 fashions function in considering mode by default, producing reasoning content material enclosed inside "enable_thinking": False within the chat template kwargs. Nevertheless, AI professionals migrating from Qwen3 ought to notice an necessary behavioral change: Qwen3.6 doesn’t formally assist the tender swap of Qwen3, i.e., /assume and /nothink. Mode switching have to be finished via the API parameter relatively than inline immediate tokens.

The extra novel addition is a function referred to as Considering Preservation. By default, solely the considering blocks generated for the most recent consumer message are retained; Qwen3.6 has been moreover skilled to protect and leverage considering traces from historic messages, which may be enabled by setting the preserve_thinking possibility. This functionality is especially helpful for agent eventualities, the place sustaining full reasoning context can improve choice consistency, scale back redundant reasoning, and enhance KV cache utilization in each considering and non-thinking modes.

Key Takeaways

- Qwen3.6-35B-A3B is a sparse Combination of Consultants mannequin with 35 billion complete parameters however solely 3 billion activated at inference time, making it considerably cheaper to run than its complete parameter depend suggests — with out sacrificing efficiency on complicated duties.

- The mannequin’s agentic coding capabilities are its strongest swimsuit, with a rating of 51.5 on Terminal-Bench 2.0 (the very best amongst all in contrast fashions), 73.4 on SWE-bench Verified, and a dominant 1,397 on QwenWebBench masking frontend code technology throughout seven classes together with Net Apps, Video games, and Information Visualization.

- Qwen3.6-35B-A3B is a natively multimodal mannequin, supporting picture, video, and doc understanding out of the field, with scores of 81.7 on MMMU, 85.3 on RealWorldQA, and 83.7 on VideoMMMU — outperforming Claude-Sonnet-4.5 and Gemma4-31B on every of those.

- The mannequin introduces a brand new Considering Preservation function that permits reasoning traces from prior dialog turns to be retained and reused throughout multi-step agent workflows, lowering redundant reasoning and enhancing KV cache effectivity in each considering and non-thinking modes.

- Launched below Apache 2.0, the mannequin is absolutely open for business use and is appropriate with the key open-source inference frameworks — SGLang, vLLM, KTransformers, and Hugging Face Transformers — with KTransformers particularly enabling CPU-GPU heterogeneous deployment for resource-constrained environments.

Try the Technical particulars and Mannequin Weights. Additionally, be happy to observe us on Twitter and don’t overlook to hitch our 130k+ ML SubReddit and Subscribe to our E-newsletter. Wait! are you on telegram? now you’ll be able to be part of us on telegram as effectively.

Have to associate with us for selling your GitHub Repo OR Hugging Face Web page OR Product Launch OR Webinar and so on.? Join with us