We’re going to go slow in this series. The goal is two things. One, study the continuous diff-in-diff paper by Callaway, Goodman-Bacon and Sant’Anna (CBS), conditionally accepted at AER. Two, I’m going to take a stab at building something I can use again: a package. Brantly Callaway already has an R package, so we will see what we can pull off. My sense is that if we can make the package do some things that aren’t already in his package (like calculating the TWFE weights, including covariates, or who knows what else), then it’ll help me, because I think I need to personally build something if I’m going to understand this paper. I’m also looking for exercises that will help me deepen my skills with Claude Code, and trying to sketch out a package seems like a good one. Since this package is for myself, it’s beta only. My goal is for us first and foremost to learn the paper, and then see if we can use Claude Code to help me get there.

But today is going to be basic. Our only goal is to set up the structure of our local project directory using a variety of new /skills. Specifically, we will be using the /beautiful_deck skill, the /split-pdf skill, the /tikz skill and the /referee2 skill. Not all of these skills have been discussed on here before (though I use them a lot), so I explain what each one does and provide the prompts as well. We will be creating a beautiful deck covering the first step of the CBS decomposition of the TWFE coefficient in settings with continuous doses.

Thanks again for supporting this substack, as well as my book, Causal Inference: The Mixtape, my workshops at Mixtape Sessions with Kyle Butts and others, and my podcast. Helping people gain skills and access to applied econometric tools through a variety of creative efforts is kind of my passion. It’s a labor of love. If you aren’t a paying subscriber, consider becoming one today! I keep the price as low as Substack lets me: $5/month and $50 for an annual subscription (and $250 for founding members). The Claude Code posts will continue to be free at release for a while, though that may change in the future, since after 6 months there are so many resources out there. Mine will continue to focus on practical research applications, or what I call “AI Agents for Research Workers”. Thanks!

I have a theory. My theory is that nobody actually wanted to learn the new diff-in-diff estimators (under differential timing) until Andrew Goodman-Bacon’s paper, ultimately published in the Journal of Econometrics in 2021, showed very clearly that the vanilla TWFE specification was biased. It was biased even with parallel trends.

My theory is that, writ large, most applied people don’t care about new estimators until they are convincingly shown that something is wrong with the estimator they already use, and until they are shown that it’s problematic even under the assumptions they thought were sufficient. So when Bacon’s paper came out showing that TWFE (then thought to be a synonym for difference-in-differences, not an estimator) was biased, it really shook people.

Now you can disagree with my theory, but that’s my working hypothesis, and I’m using it to motivate this series. And here is my conjecture. I don’t think people really, deep down, want to learn this new continuous diff-in-diff paper. I think many people are on the other side of the diff-in-diff Laffer curve. They want to see less diff-in-diff stuff, not more. The only way they will voluntarily choose to learn another diff-in-diff estimator is if you can help them understand that their estimator of choice, TWFE, is biased. Otherwise, we have bills to pay, mouths to feed, miles to run, and classes to teach.

So the plan for getting there is to study the TWFE decomposition that Callaway, Goodman-Bacon and Sant’Anna derive. I texted Bacon the other day and asked whether I could just call this paper CBS, because CGBS doesn’t roll off the tongue. He said I could, since everyone calls him Bacon anyway, so I’m calling it CBS. Let’s get started then.

Pedro Sant’Anna presented yesterday at Harvard, and he noted that there are two ways econometricians have approached causal inference. The first is what he calls “backwards engineering”. Backwards engineering is where you run a regression and then, using a tool like Frisch-Waugh-Lovell, crack open the regression coefficient to figure out which causal estimand, if any, you just calculated. Sometimes the weights are so weird and poorly behaved that you didn’t calculate a meaningful one at all. That’s backwards engineering.
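To make “crack open the coefficient” concrete, here’s a minimal Python sketch of the Frisch-Waugh-Lovell step on simulated data. It runs the regression with a full set of unit and time dummies. Then it residualizes the regressor on those dummies and shows that the same coefficient is a weighted sum of the outcomes, one weight per observation. The simulated panel, the dose, and the helper names (`fe_design`, `twfe_dummy_ols`, `implicit_weights`, `fwl_coefficient`) are all hypothetical, made up for illustration.

```python
import numpy as np


def fe_design(unit, time):
    """Unit dummies plus time dummies (first period dropped to avoid collinearity)."""
    units, times = np.unique(unit), np.unique(time)
    unit_d = (unit[:, None] == units[None, :]).astype(float)
    time_d = (time[:, None] == times[None, 1:]).astype(float)
    return np.column_stack([unit_d, time_d])


def twfe_dummy_ols(y, unit, time, x):
    """Coefficient on x from OLS of y on x plus unit and time fixed effects."""
    design = np.column_stack([x, fe_design(unit, time)])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef[0]


def implicit_weights(unit, time, x):
    """FWL: residualize x on the fixed effects; then beta = sum_it w_it * y_it with these w."""
    fe = fe_design(unit, time)
    gamma, *_ = np.linalg.lstsq(fe, x, rcond=None)
    x_tilde = x - fe @ gamma
    return x_tilde / (x_tilde @ x_tilde)


def fwl_coefficient(y, unit, time, x):
    """The same coefficient, computed as a weighted sum of the outcomes."""
    return implicit_weights(unit, time, x) @ y


# Simulated balanced panel (hypothetical numbers, not Lu and Yu's data).
rng = np.random.default_rng(0)
n_units, n_periods = 50, 6
unit = np.repeat(np.arange(n_units), n_periods)
time = np.tile(np.arange(n_periods), n_units)
dose = rng.uniform(0.0, 1.0, n_units)   # one continuous dose per unit
post = (time >= 3).astype(float)        # common treatment date at period 3
x = dose[unit] * post                   # the D_i * Post_t regressor
y = rng.normal(size=n_units)[unit] + 0.1 * time + 0.5 * x + rng.normal(size=unit.size)

w = implicit_weights(unit, time, x)
print(twfe_dummy_ols(y, unit, time, x), fwl_coefficient(y, unit, time, x))
```

The two printed numbers should agree up to floating-point error. The vector `w` is the raw object backwards engineering stares at.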

Pedro prefers the second approach, which he calls “forwards engineering”. Forwards engineering is where you state the causal estimand you are interested in and note the assumptions you think are realistic for your data. You then do a particular calculation that, once you invoke those assumptions, equals that population estimand (or rather, whose sampling distribution has that estimand as its mean).

Well, we’re going to do both of these, but today we’re going to do backwards engineering. We’re doing it first because of what I said above. I think people need to be taught what the coefficient they love means first. If they don’t like hearing what it is, they may actually be willing to spend their time learning a new one.

But we want to use Claude Code to help us here, so let’s start with the regression itself.

$$Y_{i,t} = \theta_t + \eta_i + \beta^{twfe} D_i \cdot Post_t + v_{i,t}$$

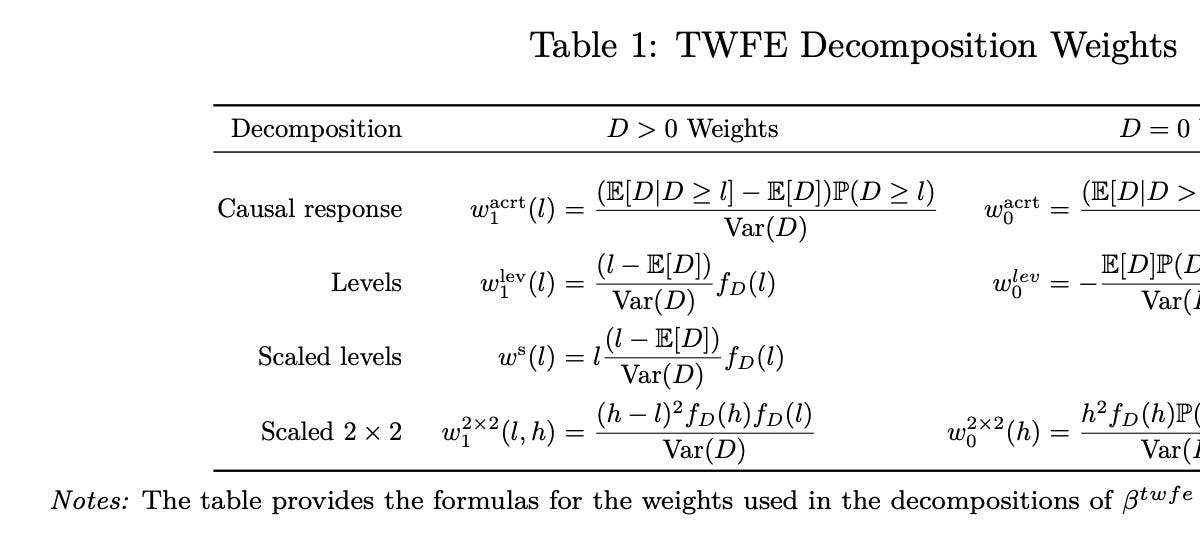
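Before getting to the weights, here is one fact I find helpful. It’s standard algebra, and I’m checking it numerically here rather than quoting the paper. In a balanced panel where every unit’s $Post_t$ switches on at the same date, $\beta^{twfe}$ from this regression equals the OLS slope from regressing each unit’s change in average outcomes on its dose: $\hat\beta^{twfe} = \sum_i (D_i - \bar D)\,\Delta\bar Y_i \big/ \sum_i (D_i - \bar D)^2$, where $\Delta\bar Y_i$ is unit $i$’s post-period mean minus its pre-period mean. Below is a minimal sketch with simulated data. The dose scale, timing, effect size, and helper names are all hypothetical.

```python
import numpy as np


def twfe_two_way_demeaned(y, x):
    """beta_twfe in a balanced N x T panel: with unit and time fixed effects,
    FWL reduces to two-way demeaning the regressor."""
    x_tilde = x - x.mean(axis=1, keepdims=True) - x.mean(axis=0, keepdims=True) + x.mean()
    return (x_tilde * y).sum() / (x_tilde ** 2).sum()


def slope_of_change_on_dose(y, dose, post):
    """OLS slope (with intercept) of each unit's post-minus-pre mean outcome on its dose."""
    delta_y = y[:, post].mean(axis=1) - y[:, ~post].mean(axis=1)
    d_centered = dose - dose.mean()
    return (d_centered @ delta_y) / (d_centered @ d_centered)


rng = np.random.default_rng(1)
n_units, n_periods = 40, 8
dose = rng.uniform(0.0, 0.3, n_units)        # hypothetical continuous dose
post = np.arange(n_periods) >= 4             # boolean: hypothetical common treatment date
x = dose[:, None] * post[None, :]            # D_i * Post_t as an N x T array
y = (rng.normal(size=(n_units, 1))           # unit effects
     + 0.05 * np.arange(n_periods)           # common time trend
     - 2.0 * x                               # a made-up constant effect of the dose
     + rng.normal(scale=0.1, size=(n_units, n_periods)))

print(twfe_two_way_demeaned(y, x), slope_of_change_on_dose(y, dose, post))
```

The two numbers match. That is why, with a single treatment date, it is natural to study the continuous-dose TWFE coefficient as a cross-sectional regression of $\Delta Y$ on $D$.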

And then we’ll note from the paper what the beta means. Here are the CBS weights.

Bleh, right? That’s gnarly looking. So this is where Claude Code is going to help us. We’re going to write a package together that, when invoked, will actually calculate these weights.
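As a warm-up for that package, here’s a minimal Python sketch of one standard way to crack open an OLS slope of $\Delta Y$ on a dose. It writes the slope as a weighted average of slopes between adjacent dose levels, using Yitzhaki-style weights $w_k = (d_k - d_{k-1})\,P(D \ge d_k)\,\big(E[D \mid D \ge d_k] - E[D]\big) / \mathrm{Var}(D)$. This is my own illustration and assumes a discrete dose. Whether it lines up term by term with a particular row of CBS’s Table 1 is something to check against the paper. The function name `slope_weights` and the simulated data are hypothetical.

```python
import numpy as np


def slope_weights(dose, delta_y):
    """Decompose the OLS slope of delta_y on dose into adjacent-level slopes and weights.

    Returns (levels, weights, slopes) with sum(weights * slopes) equal to the OLS slope
    and weights that are nonnegative and sum to one.
    """
    levels, inverse, counts = np.unique(dose, return_inverse=True, return_counts=True)
    p = counts / counts.sum()                               # share of units at each dose level
    m = np.bincount(inverse, weights=delta_y) / counts      # mean outcome change at each level
    d_bar = dose.mean()
    var_d = np.mean((dose - d_bar) ** 2)                    # variance with an n denominator
    # tail[k] = sum_{j >= k} p_j (d_j - d_bar) = P(D >= d_k) * (E[D | D >= d_k] - E[D])
    tail = np.cumsum((p * (levels - d_bar))[::-1])[::-1]
    gaps = np.diff(levels)                                  # d_k - d_{k-1}
    weights = gaps * tail[1:] / var_d
    slopes = np.diff(m) / gaps                              # (m_k - m_{k-1}) / (d_k - d_{k-1})
    return levels, weights, slopes


rng = np.random.default_rng(2)
dose = rng.choice([0.0, 0.05, 0.10, 0.20, 0.30], size=500)                  # hypothetical doses
delta_y = 4.0 * dose ** 2 - dose + rng.normal(scale=0.05, size=dose.size)  # made-up response

levels, weights, slopes = slope_weights(dose, delta_y)
d_c = dose - dose.mean()
ols_slope = (d_c @ delta_y) / (d_c @ d_c)
for lo, hi, w, s in zip(levels[:-1], levels[1:], weights, slopes):
    print(f"{lo:.2f} -> {hi:.2f}: weight {w:.3f}, slope {s:.3f}")
print("weighted sum:", weights @ slopes, "OLS slope:", ols_slope)
```

Each weight says how much the comparison between two neighboring doses counts toward the slope. In this kind of decomposition the weights are never negative. They are not simple population shares, though: they depend on where each pair of doses sits relative to the mean dose. Reporting that is the sort of thing I’d want the package to do.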

But to do it, we’re going to need an application. Kyle Butts developed one for our CodeChella, so I’m going to use it. It’s “Trade Liberalization and Markup Dispersion: Evidence from China’s WTO Accession” by Yi Lu and Linhui Yu, published in the American Economic Journal: Applied Economics in 2015. Here’s what the paper is about.

Lu and Yu’s paper is about China’s 2001 WTO accession, which forced industries with pre-WTO tariffs above 10% to cut them to the ceiling. Lu and Yu treat the size of that predicted tariff cut as a continuous “dose”. They run an industry-level DiD on 3-digit SIC panels from 1998–2005 to ask whether trade liberalization reduced within-industry dispersion of firm markups, measured by the Theil index and four other dispersion statistics. Their answer was yes. Industries hit with larger mandated tariff cuts saw larger declines in markup dispersion, which they interpret as trade liberalization reducing resource misallocation. So that will be our application.

So the first thing I did was read the paper a lot. That was over several years, but I encourage you to read the paper too. The paper follows the team’s philosophy of forwards engineering, but Table 1 gets into backwards engineering the TWFE coefficient like I said. I understand why you’d forwards engineer. But when the world already uses one estimator, I think it’s actually more pedagogically useful to backwards engineer, so we will. First, though, we’ll use my tool /split-pdf and this prompt:

Please use /newproject in here, move the pdf that’s already in this folder into the readings or articles folder, then use /split-pdf on it. Write a summary of each split in markdown. And then write a summary of the whole thing. Pay careful attention to precisely how to calculate the twfe weights in table 1, also. That’s what we're going to focus on ourselves.

So what I did was create a new empty folder and put the paper in it. Then I had Claude Code split the paper into smaller pdfs and write markdown summaries of each one. Once it was done with that, it wrote a markdown summary of all the markdowns. Then we do the same for the China-WTO article. Here was my prompt, again using /split-pdf.
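I won’t reproduce the skill itself, but for readers curious about the mechanical splitting step, here’s a minimal Python sketch of cutting a PDF into fixed-size chunks with the third-party `pypdf` library (`PdfReader`, `PdfWriter`). The four-pages-per-split default and the function names are my own assumptions for illustration, not necessarily what /split-pdf does. The summarizing step is Claude’s job and isn’t shown.

```python
def page_ranges(n_pages: int, pages_per_split: int = 4) -> list:
    """0-based, end-exclusive (start, stop) page ranges that cover the whole document."""
    if n_pages < 0 or pages_per_split < 1:
        raise ValueError("need n_pages >= 0 and pages_per_split >= 1")
    return [(start, min(start + pages_per_split, n_pages))
            for start in range(0, n_pages, pages_per_split)]


def split_pdf(path: str, out_prefix: str, pages_per_split: int = 4) -> list:
    """Write each chunk of `path` to <out_prefix>_partNN.pdf and return the file names."""
    # pypdf is third-party (pip install pypdf); importing it here keeps page_ranges usable without it.
    from pypdf import PdfReader, PdfWriter

    reader = PdfReader(path)
    outputs = []
    for i, (start, stop) in enumerate(page_ranges(len(reader.pages), pages_per_split), start=1):
        writer = PdfWriter()
        for page_number in range(start, stop):
            writer.add_page(reader.pages[page_number])
        out_path = f"{out_prefix}_part{i:02d}.pdf"
        with open(out_path, "wb") as handle:
            writer.write(handle)
        outputs.append(out_path)
    return outputs


print(page_ranges(10))  # three chunks: pages 0-3, 4-7, 8-9
```

Keeping the page arithmetic in its own small function makes it easy to test without touching any PDF at all.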

Use /split-pdf on Lu-Yu, make summaries of each split in markdown, then create one big summary markdown of those smaller splits. Make the goal a deep understanding of their TWFE estimation strategy, and a deep understanding of dose and outcomes, in addition to whatever you ordinarily do.

I’ve now copied all of this to my website so you can see it: both manuscripts and both markdown summaries from /split-pdf. Here they are.

And now I’m going to wrap up. Next, we make a “beautiful deck” using my rhetoric of decks essay as a guide. We have my /referee2 skill critique its overall organization. Then we use a new skill I created recently, /tikz, to comb through the deck and fix any TikZ-related compile errors. All of these can be found in my MixtapeTools repo. You can either clone it locally, or just have Claude Code (or Codex, whatever) read it on your side. Let me briefly explain what each does.

The rhetoric of decks essay is something I’ve worked on here and there. Essentially, it rests on three premises. Decks have their own rhetoric. Claude has been trained on more or less every deck ever created (as well as every other piece of writing). And the tacit knowledge involved in making good, bad, mediocre and great decks can be, and has been, extracted by the large language model. “Rhetoric” here is meant in the classical sense, following Aristotle’s three principles of rhetoric, which are as follows:

- Ethos (credibility): The speaker’s authority and trustworthiness, earned through demonstrated expertise and honest acknowledgment of uncertainty. In decks, it shows up as methodology diagrams, citations, and openly naming the alternatives you considered, which signals you’ve done the work.

- Pathos (emotion): Appeal to the audience’s feelings, values, and aspirations, including what they fear, hope for, or recognize from experience. In decks, it means opening with a problem the audience feels, validating their frustrations, and showing what success looks like, although pathos without logos collapses into demagoguery.

- Logos (logic): Reasoned argument grounded in evidence, structure, and acknowledgment of counterarguments. In decks, it appears as data visualizations, comparison tables, and a clear flow from problem to solution, but logos without pathos is just a lecture that produces disengagement.

For this exercise, I finally got around to turning my rhetoric of decks essay into a skill called /beautiful_deck. You can call it now, and if you want to read more about it, just go here to my /skills directory. By default it creates a beautiful deck in LaTeX’s beamer package that follows my explanations of what I’m going for in decks:

- data visualization and quantification
- beautiful slides, tables, and figures
- no walls of words, sentences, or equations
- minimal cognitive density on each slide, with at most one idea per slide (two, tops)
- TikZ for graphics and/or .png files from software like R, Python, or Stata
- intuition, narrative, and finally technically rigorous exposition

I’ve also made it so you can ask for a different format, like Quarto or markdown. Your call. /beautiful_deck also checks the compile log for overfull and underfull box warnings and tries to eliminate them, no matter how cosmetic they are.

Since we’re going to make a beautiful deck in beamer using the /beautiful_deck skill, it will use TikZ by default. TikZ is a powerful graphics package with nearly impenetrable and very complicated (to me anyway) LaTeX syntax. You can find an example of it here.

Well, by default the /beautiful_deck skill checks for all the compile problems where a slide’s content spills past its margins. For instance, words run below the bottom of the slide and become unreadable.

But not all visual problems are caught by the /beautiful_deck skill itself. For instance, when labels on graphics are placed purely automatically, they will routinely collide with other objects. Words will overlap boxes or lines. Things will cross one another. This is probably related to the fact that large language models have poor spatial reasoning.

So the /tikz skill is used to verify that words, equations, and other labels aren’t blocking or crossing other objects. One of the things /tikz works with to correct this in the decks is Bézier curves, which are parametric curves defined by control points. My /tikz skill applies depth formulas to them to detect and fix arrow/label collisions so that they don’t happen.
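For intuition about what such a check involves, here’s a minimal sketch, in Python rather than TikZ, of testing a label’s bounding box against a cubic Bézier curve. This is the kind of curve TikZ draws with `(a) .. controls (b) and (c) .. (d)`. The sketch samples points along the curve and nudges the label upward until it clears. It’s a simple sampling approach for illustration, not the /tikz skill’s actual method (I’m not reproducing its depth formulas here). The coordinates, padding, step size, and helper names are hypothetical.

```python
from typing import Optional, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax)


def cubic_bezier(p0: Point, p1: Point, p2: Point, p3: Point, t: float) -> Point:
    """Point on the cubic Bezier curve at parameter t in [0, 1] (Bernstein form)."""
    u = 1.0 - t
    b0, b1, b2, b3 = u ** 3, 3 * u ** 2 * t, 3 * u * t ** 2, t ** 3
    return (b0 * p0[0] + b1 * p1[0] + b2 * p2[0] + b3 * p3[0],
            b0 * p0[1] + b1 * p1[1] + b2 * p2[1] + b3 * p3[1])


def curve_hits_box(ctrl, box: Box, samples: int = 200, pad: float = 0.05) -> bool:
    """True if any sampled curve point falls inside the label box grown by `pad` on each side."""
    xmin, ymin, xmax, ymax = box
    for i in range(samples + 1):
        x, y = cubic_bezier(*ctrl, i / samples)
        if xmin - pad <= x <= xmax + pad and ymin - pad <= y <= ymax + pad:
            return True
    return False


def nudge_label_up(ctrl, box: Box, step: float = 0.1, max_steps: int = 50) -> Optional[Box]:
    """Shift the label box upward in small steps until it no longer touches the curve."""
    xmin, ymin, xmax, ymax = box
    for k in range(max_steps + 1):
        shifted = (xmin, ymin + k * step, xmax, ymax + k * step)
        if not curve_hits_box(ctrl, shifted):
            return shifted
    return None  # give up; a human (or a different placement rule) has to decide


# Arrow from (0,0) to (3,0) bowed upward, as in \draw (0,0) .. controls (1,2) and (2,2) .. (3,0);
arrow = ((0.0, 0.0), (1.0, 2.0), (2.0, 2.0), (3.0, 0.0))
label = (1.3, 1.3, 1.7, 1.7)  # hypothetical label box sitting on top of the arrow
print(curve_hits_box(arrow, label), nudge_label_up(arrow, label))
```

Sampling can miss a crossing that falls entirely between two sample points when the label is tiny relative to the curve. That is one reason exact, formula-based checks are attractive.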

It’s not perfect, but it can help. I strongly encourage you to check your decks closely, and if you still see things you don’t like, get rid of them. These days, we should not tolerate any cosmetic errors like badly placed labels. From here on, our goal is to make beautiful decks, and beautiful decks have beautiful pictures and no errors.

The last skill is my /referee2 skill, which writes referee reports critiquing code and decks. /referee2 is my adversarial audit skill, and it has two modes. Code mode performs a full five-audit cross-language replication protocol on empirical pipelines. Deck mode reviews Beamer presentations for rhetorical quality, visual cleanliness, and compile hygiene against the Rhetoric of Decks principles.

In deck mode it systematically checks every slide for:

- titles-as-assertions
- one idea per slide
- no walls of sentences
- minimized cognitive density and balance across the deck
- correct TikZ coordinate placement (using the same measurement rules as /tikz)
- zero Overfull/Underfull vbox/hbox warnings in the compile log

When it’s done, it files a formal slide-by-slide report with accept / minor-revision / major-revision verdicts. It’s meant to be run in a fresh terminal by a Claude instance that has never seen the deck, so the review is structurally independent of whoever built it. It can also audit code. For now it isn’t really set up for reviewing papers, though I’m sure you could use it for that if you wanted (and I have).
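The “zero Overfull/Underfull warnings” check is the most mechanical part of this, so here’s a minimal Python sketch of it: scan a LaTeX `.log` for box warnings and report them. It’s my illustration of the idea, not /referee2’s code. The regular expression assumes the standard `Overfull \hbox (...) in paragraph at lines 42--43` wording, and the function names and sample log lines are hypothetical.

```python
import re
from collections import Counter

# Matches e.g. "Overfull \hbox (15.0pt too wide) in paragraph at lines 42--43"
BOX_WARNING = re.compile(
    r"^(Overfull|Underfull) \\([hv])box \(([^)]*)\)(?:.*?at lines (\d+)--(\d+))?"
)


def box_warnings(log_text: str) -> list:
    """Return one dict per Overfull/Underfull box warning found in a LaTeX log."""
    found = []
    for line in log_text.splitlines():
        match = BOX_WARNING.match(line)
        if match:
            kind, axis, detail, start, end = match.groups()
            found.append({
                "kind": kind,
                "box": axis + "box",
                "detail": detail,
                "lines": (int(start), int(end)) if start else None,
            })
    return found


def summarize(log_text: str) -> Counter:
    """Count warnings by (kind, box type), e.g. ("Overfull", "hbox")."""
    return Counter((w["kind"], w["box"]) for w in box_warnings(log_text))


sample_log = "\n".join([
    r"Overfull \hbox (15.0pt too wide) in paragraph at lines 42--43",
    r"Underfull \vbox (badness 10000) has occurred while \output is active []",
    "LaTeX Warning: Reference `fig:weights' on page 3 undefined on input line 88.",
])

for w in box_warnings(sample_log):
    print(w)
print(summarize(sample_log))
```

In practice you’d feed it your deck’s compiled `.log` file and treat any non-empty result as a failed check. TeX wraps log lines at 79 characters by default, so a long warning can spill onto the next line, and this simple line-by-line parse may then miss the line numbers.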

And now we’re ready. We’re going to ask Claude Code to make a beautiful deck that explains the TWFE decomposition in CBS. The goal is to take someone unfamiliar with their decomposition from knowing absolutely nothing to knowing absolutely something.

But our goal is extremely narrow. The TWFE coefficient can always be written with this formula, which is numerically identical to what you get from the usual sum-of-squared-residuals calculation that estimates the parameters a different way. So we will not focus on its causal interpretation just yet. Our goal is solely to understand the algebra of the decomposition, divorced entirely from causal inference and diff-in-diff. So here it goes. It’s long, but that’s mostly user error. I tend to over-explain things to Claude, which admittedly uses a lot of tokens, so feel free to change this if you want.

Please make a beautiful deck of the CBS paper we summarized, using the rhetoric of decks essay and /beautiful_deck. Use the markdowns only. And you can apply it to the AEJ WTO paper that we also marked down. But today’s goal for this deck is extremely narrow. I want you to focus on TWFE estimation with a continuous dose in diff-in-diff, showing the equation and its historical interpretation. Explain the context in which it's used in the AEJ paper. Then I want you to ONLY focus on the algebraic decomposition of the formula that is in Table 1 of the CBS paper. There are several decompositions associated with several different things, so slowly take us through each one. Do so using the rhetoric of decks, applied to a group of people who need to go slowly and who find the notation confusing at first glance. So be heavy on the application first and the intuition second, always within narrative and Aristotle’s rhetoric principles. Include the actual data application (so produce R, python, or Stata code that does this replicably), .tex files from that analysis, heavy TikZ graphics and quantification, .png files, and so on. I want the audience to go from knowing zero about the CBS decomposition to understanding it by the end. But no causal inference. Only the algebraic calculation of the weighting schemes involved, and the interpretation of each input using shading, highlights, little brackets under equations, building-block graphics, and so on. Our goal in this is PURELY to understand the weighting involved in TWFE under the various measures contained in Table 1 only. And remember-- beauty first!

After it ran, I asked it to use /tikz to fix the labeling and so on, and then /referee2 to critique it. Once those were done, I told Claude to do everything /referee2 said to do. This isn’t instantaneous, and it will most likely spend about 20k to 25k tokens all said and done. So not cheap. But at the same time, it wouldn’t get you on the Meta leaderboard, a contest they ran to see who could use the most tokens. The winner essentially cost the company over a million dollars in 30 days by using something like 1 billion tokens.

There is almost certainly a more efficient workflow, and someday I’m going to spend time seeing what I can do about that, but you get the gist. If you have ideas for how to improve it, please leave them, and I’ll review them and talk them over with my Claude to see if I should adopt them. Here is the final product.

For today, I’m just going to post what I have, though I suspect I’ll tinker more with the deck later. I figure seeing the initial output is fine for the substack, since who knows, maybe the first draft will land. That said, I tend to need things reframed repeatedly until they fit how my brain works. I typically go round and round with Claude on a deck until it’s exactly how I like it, so I may have to do that once I start reading it.

That’s it for today. This was a lot. In the next entry, we will start unpacking the content of the deck. In the meantime, I encourage you to experiment with all of this on your end so you can catch up on your own time. When we return, we will start trying to decipher the decomposition formula. You are encouraged to read the paper closely, but we will be narrowly focused on the decomposition formula for the TWFE estimator in the continuous-dose diff-in-diff case.

Thanks again for reading. This substack is a labor of love, and for now I’m going to keep the explicitly Claude Code posts free at first so that all readers can learn a bit about how I use it. I do encourage you to share this substack with others, particularly applied researchers who want to learn Claude Code while learning something they’re primarily interested in. That means applied statistics, causal inference, data science, program evaluation, and econometrics for their own research. And if you aren’t already a paying subscriber, consider becoming one!