In response to Stack Overflow and Atlassian, builders lose between 6 and 10 hours each week looking for info or clarifying unclear documentation. For a 50-developer staff, that provides as much as $675,000–$1.1 million in wasted productiveness yearly. This isn’t only a tooling subject. It’s a retrieval drawback.

Enterprises have loads of knowledge however lack quick, dependable methods to search out the precise info. Conventional search fails as techniques develop advanced, slowing onboarding, selections, and assist. On this article, we discover how fashionable enterprise search solves these gaps.

Why Conventional Enterprise Search Falls Quick

Most enterprise search techniques had been constructed for a special period. They assume comparatively static content material, predictable bugs and queries, and guide tuning to remain related. In fashionable knowledge setting none of these assumptions maintain significance.

Groups work throughout quickly altering datasets. Queries are ambiguous and conversational. Context issues as a lot as Key phrases. But many search instruments nonetheless depend on brittle guidelines and precise matches, forcing customers guess the precise phrasing reasonably than expressing actual intent.

The result’s acquainted. Individuals search repeatedly, refine queries manually or abandon search altogether. In AI-powered purposes, the issue turns into extra severe. Poor retrieval doesn’t simply gradual customers down. It typically feeds incomplete or irrelevant context into language fashions, growing the danger of low-quality or deceptive outputs.

The Change to Hybrid Retrieval

The subsequent technology of enterprise search is constructed on hybrid retrieval. As an alternative of selecting between key phrase search and semantic search, fashionable techniques mix each of them.

Key phrase search excels at precision. Vector search captures which means and intent. Collectively, they allow search experiences which might be quick, versatile and resilient throughout a variety of queries.

Cortex Search is designed orienting this hybrid method from the beginning. It offers low latency, high-quality fuzzy search instantly over Snowflake knowledge, with out requiring groups to handle embeddings and tune relevance parameters or preserve customized infrastructure. The retrieval layer adapts to the info, not the opposite method round.

Fairly than treating search as an add on characteristic, Coretx Search makes it a foundational functionality that scales with enterprise knowledge complexity.

Cortex Search because the Retrieval Layer for AI and Enterprise Search

Cortex Search helps two major use instances which might be more and more central to fashionable knowledge methods.

First is Retrieval Augmented Technology. Cortex Search acts because the retrieval engine that provides massive language fashions with correct, up-to-date enterprise context. This grounding layer is what permits AI chat purposes to ship responses which might be particular, related and aligned with proprietary knowledge reasonably than generic patterns.

Second is Enterprise Search. Cortex Search can energy high-quality search experiences embedded instantly into purposes, instruments and workflows. Customers ask questions in pure language and obtain outcomes ranked by each semantic relevance and key phrase precision.

Below the hood, cortex search indexes textual content knowledge, applies hybrid retrieval and makes use of semantic reranking to floor probably the most related outcomes. Refreshes are automated and incremental, so search outcomes keep aligned with the present state of the info with out guide intervention.

This issues as a result of retrieval high quality instantly shapes consumer belief. When search works persistently, folks depend on it. When it doesn’t, they cease utilizing it and fall again to slower, dearer paths.

How Cortex Search Works in Apply

At a excessive stage, Cortex Search abstracts away the toughest elements of constructing a contemporary retrieval system.

Instance: Powering RAG Functions with Cortex Search

What we’ll Construct: A buyer assist AI assistant that solutions consumer questions by retrieving grounded context from historic assist tickets and transcripts: then passing that context to a Snowflake Cortex LLM to generate correct, particular solutions.

Stipulations

| Requirement | Particulars |

| Snowflake Account | Free trial at trial.snowflake.com — Enterprise tier or above |

| Snowflake Position | SYSADMIN or a task with CREATE DATABASE, CREATE WAREHOUSE, CREATE CORTEX SEARCH SERVICE privileges |

| Python | 3.9+ |

| Packages | snowflake-snowpark-python, snowflake-core |

Establishing Snowflake account

- Head over to trial.snowflake.com and Join the Enterprise account

- Now you will note one thing like this:

Step 1 — Set Up Snowflake Atmosphere

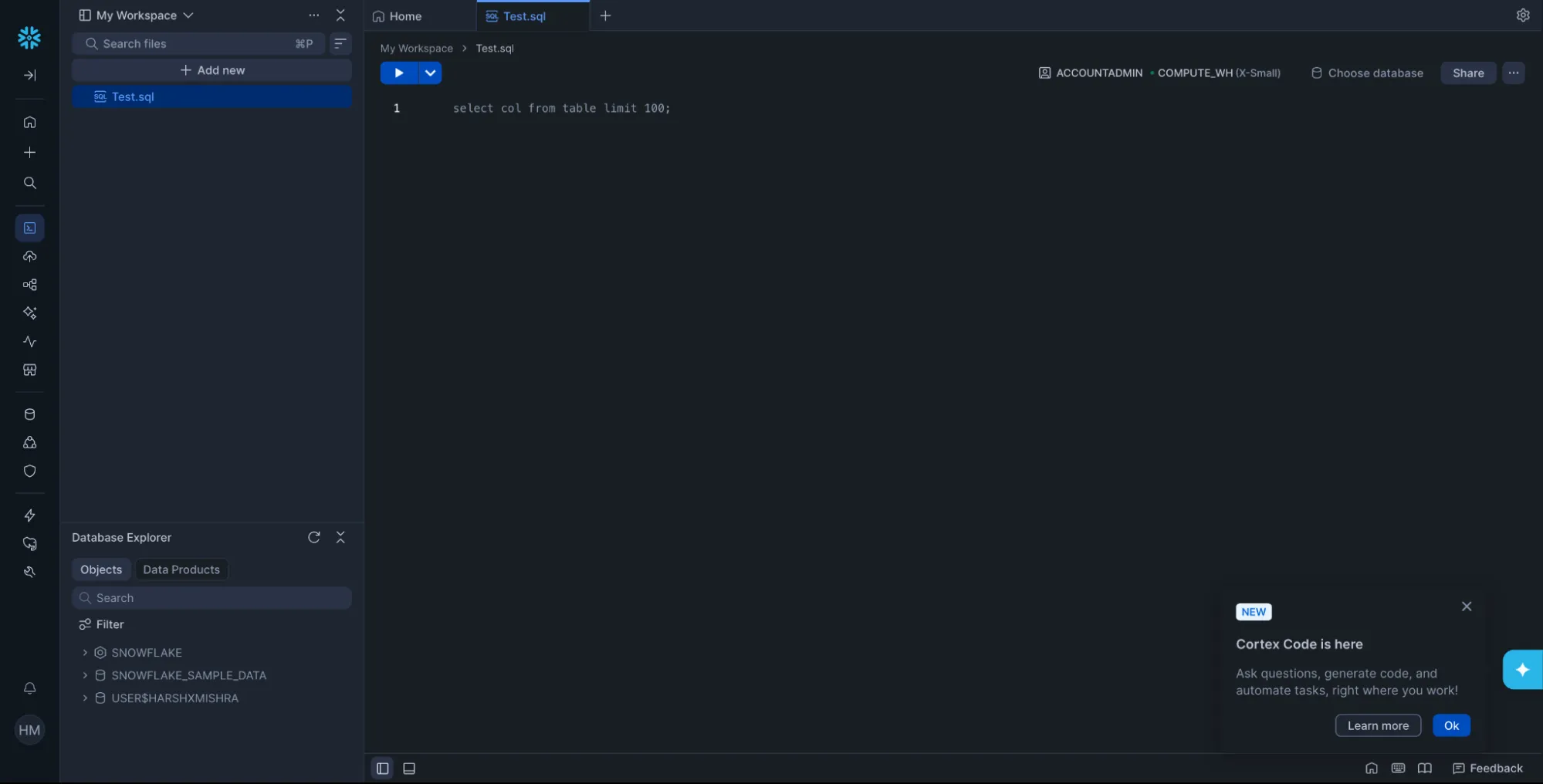

Run the next in a Snowflake Worksheet to create the database, schema

First create a brand new sql file.

CREATE DATABASE IF NOT EXISTS SUPPORT_DB;

CREATE WAREHOUSE IF NOT EXISTS COMPUTE_WH

WAREHOUSE_SIZE = 'X-SMALL'

AUTO_SUSPEND = 60

AUTO_RESUME = TRUE;

USE DATABASE SUPPORT_DB;

USE WAREHOUSE COMPUTE_WH;Step 2 — Create and Populate the Supply Desk

This desk simulates historic assist tickets. In manufacturing, this might be a stay desk synced out of your CRM, ticketing system, or knowledge pipeline.

CREATE TABLE IF NOT EXISTS SUPPORT_DB.PUBLIC.support_tickets (

ticket_id VARCHAR(20),

issue_category VARCHAR(100),

user_query TEXT,

decision TEXT,

created_at TIMESTAMP_NTZ DEFAULT CURRENT_TIMESTAMP()

);

INSERT INTO SUPPORT_DB.PUBLIC.support_tickets (ticket_id, issue_category, user_query, decision) VALUES

('TKT-001', 'Connectivity',

'My web retains dropping each couple of minutes. The router lights look regular.',

'Agent checked line diagnostics. Discovered intermittent sign degradation on the coax line. Dispatched technician to exchange splitter. Problem resolved after {hardware} swap.'),

('TKT-002', 'Connectivity',

'Web may be very gradual throughout evenings however high quality within the morning.',

'Community congestion detected in buyer section throughout peak hours (6–10 PM). Upgraded buyer to a much less congested node. Speeds normalized inside 24 hours.'),

('TKT-003', 'Billing',

'I used to be charged twice for a similar month. Want a refund.',

'Duplicate billing confirmed because of fee gateway retry error. Refund of $49.99 issued. Buyer notified through e-mail. Root trigger patched in billing system.'),

('TKT-004', 'Machine Setup',

'My new router just isn't exhibiting up within the Wi-Fi listing on my laptop computer.',

'Router was broadcasting on 5GHz solely. Buyer laptop computer had outdated Wi-Fi driver that didn't assist 5GHz. Guided buyer to replace driver. Each 2.4GHz and 5GHz bands now seen.'),

('TKT-005', 'Connectivity',

'Frequent packet loss throughout video calls. Wired connection additionally affected.',

'Packet loss traced to defective ethernet port on modem. Changed modem beneath guarantee. Buyer confirmed secure connection post-replacement.'),

('TKT-006', 'Account',

'Can't log into the client portal. Password reset emails aren't arriving.',

'E-mail supply blocked by SPF report misconfiguration on buyer area. Suggested buyer to offer assist area. Reset e-mail delivered efficiently.'),

('TKT-007', 'Connectivity',

'Web unstable solely when microwave is working within the kitchen.',

'2.4GHz Wi-Fi interference brought on by microwave proximity to router. Beneficial switching router channel from 6 to 11 and enabling 5GHz band. Problem eradicated.'),

('TKT-008', 'Pace',

'Marketed pace is 500Mbps however I solely get round 120Mbps on speedtest.',

'Pace check confirmed 480Mbps at node. Buyer router restricted to 100Mbps because of Quick Ethernet port. Beneficial router improve. Put up-upgrade pace confirmed at 470Mbps.');Step 3 — Create the Cortex Search Service

This single SQL command handles embedding technology, indexing, and hybrid retrieval setup routinely. The ON clause specifies which column to index for full-text and semantic search. ATTRIBUTES defines filterable metadata columns.

CREATE OR REPLACE CORTEX SEARCH SERVICE SUPPORT_DB.PUBLIC.support_search_svc

ON decision

ATTRIBUTES issue_category, ticket_id

WAREHOUSE = COMPUTE_WH

TARGET_LAG = '1 minute'

AS (

SELECT

ticket_id,

issue_category,

user_query,

decision

FROM SUPPORT_DB.PUBLIC.support_tickets

);What occurs right here: Snowflake routinely generates vector embeddings for the decision column, builds each a key phrase index and a vector index, and exposes a unified hybrid retrieval endpoint. No embedding mannequin administration, no separate vector database.

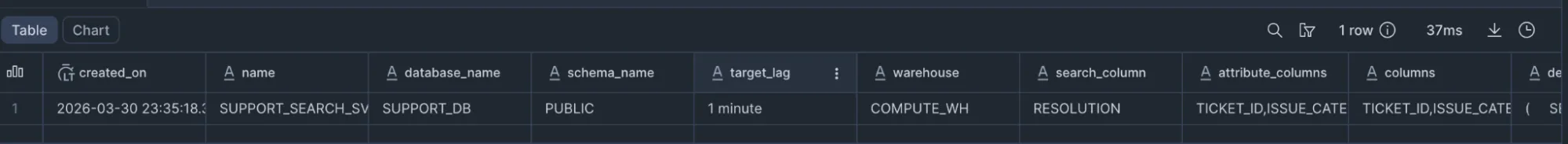

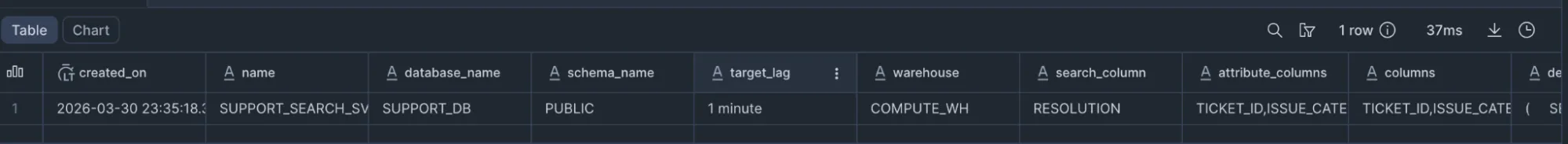

You may confirm the service is energetic:

SHOW CORTEX SEARCH SERVICES IN SCHEMA SUPPORT_DB.PUBLIC;Output:

Step 4 — Question the Search Service from Python

Hook up with Snowflake and use the snowflake-core SDK to question the service:

First Set up required packages:

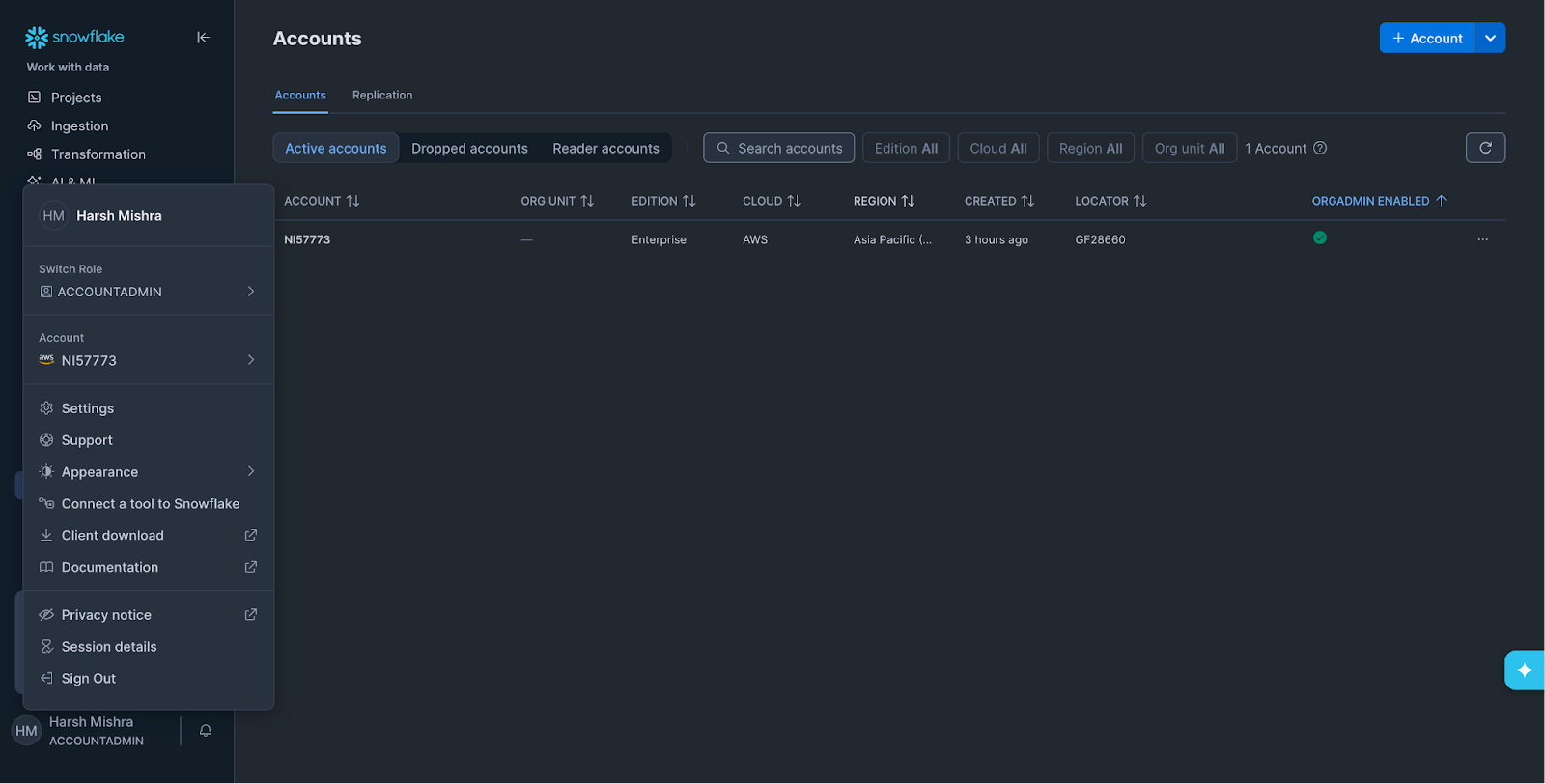

pip set up snowflake-snowpark-python snowflake-coreNow to search out your account particulars go to your account and click on on “Join a device to Snowflake”

from snowflake.snowpark import Session

from snowflake.core import Root

# --- Connection config ---

connection_params = {

"account": "YOUR_ACCOUNT_IDENTIFIER", # e.g. abc12345.us-east-1

"consumer": "YOUR_USERNAME",

"password": "YOUR_PASSWORD",

"function": "SYSADMIN",

"warehouse": "COMPUTE_WH",

"database": "SUPPORT_DB",

"schema": "PUBLIC",

}

# --- Create Snowpark session ---

session = Session.builder.configs(connection_params).create()

root = Root(session)

# --- Reference the Cortex Search service ---

search_svc = (

root.databases["SUPPORT_DB"]

.schemas["PUBLIC"]

.cortex_search_services["SUPPORT_SEARCH_SVC"]

)

def retrieve_context(question: str, category_filter: str = None, top_k: int = 3):

"""Run hybrid search towards the Cortex Search service."""

filter_expr = {"@eq": {"issue_category": category_filter}} if category_filter else None

response = search_svc.search(

question=question,

columns=["ticket_id", "issue_category", "user_query", "resolution"],

filter=filter_expr,

restrict=top_k,

)

return response.outcomes

# --- Take a look at retrieval ---

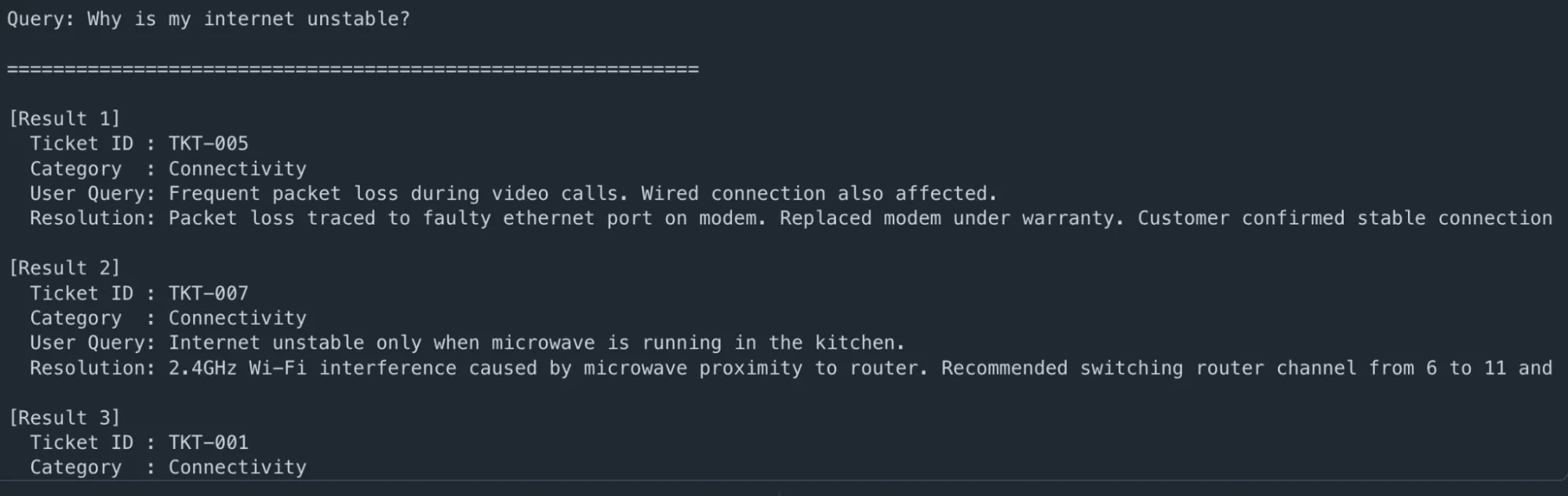

user_question = "Why is my web unstable?"

outcomes = retrieve_context(user_question, top_k=3)

print(f"n🔍 Question: {user_question}n")

print("=" * 60)

for i, r in enumerate(outcomes, 1):

print(f"n[Result {i}]")

print(f" Ticket ID : {r['ticket_id']}")

print(f" Class : {r['issue_category']}")

print(f" Consumer Question: {r['user_query']}")

print(f" Decision: {r['resolution'][:200]}...")Output:

Step 5 — Construct the Full RAG Pipeline

Now move the retrieved context into Snowflake Cortex LLM (mistral-large or llama3.1-70b) to generate a grounded reply:

import json

def build_rag_prompt(user_question: str, retrieved_results: listing) -> str:

"""Format retrieved context into an LLM-ready immediate."""

context_blocks = []

for r in retrieved_results:

context_blocks.append(

f"- Ticket {r['ticket_id']} ({r['issue_category']}): "

f"Buyer reported '{r['user_query']}'. "

f"Decision: {r['resolution']}"

)

context_str = "n".be part of(context_blocks)

return f"""You're a useful buyer assist assistant. Use ONLY the context under

to reply the client's query. Be particular and concise.

CONTEXT FROM HISTORICAL TICKETS:

{context_str}

CUSTOMER QUESTION: {user_question}

ANSWER:"""

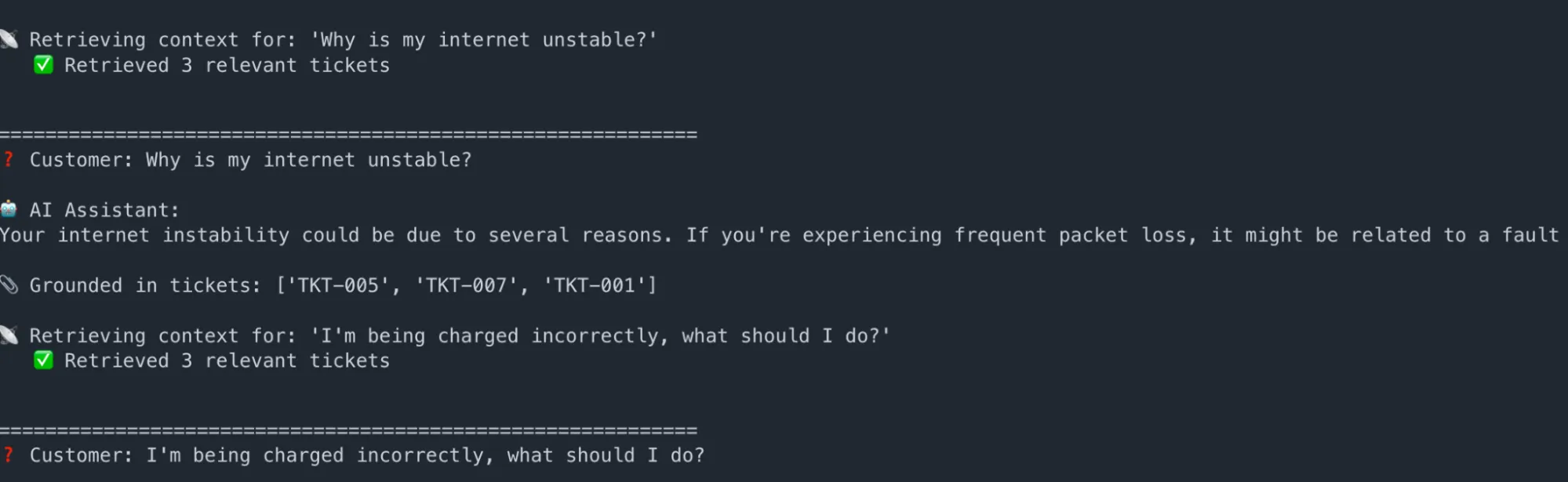

def ask_rag_assistant(user_question: str, mannequin: str = "mistral-large2"):

"""Full RAG pipeline: retrieve → increase → generate."""

print(f"n📡 Retrieving context for: '{user_question}'")

outcomes = retrieve_context(user_question, top_k=3)

print(f" ✅ Retrieved {len(outcomes)} related tickets")

immediate = build_rag_prompt(user_question, outcomes)

safe_prompt = immediate.exchange("'", "'")

sql = f"""

SELECT SNOWFLAKE.CORTEX.COMPLETE(

'{mannequin}',

'{safe_prompt}'

) AS reply

"""

end result = session.sql(sql).gather()

reply = end result[0]["ANSWER"]

return reply, outcomes

# --- Run the assistant ---

questions = [

"Why is my internet unstable?",

"I'm being charged incorrectly, what should I do?",

"My router is not visible on my devices",

]

for q in questions:

reply, ctx = ask_rag_assistant(q)

print(f"n{'='*60}")

print(f"❓ Buyer: {q}")

print(f"n🤖 AI Assistant:n{reply.strip()}")

print(f"n📎 Grounded in tickets: {[r['ticket_id'] for r in ctx]}")Output:

Key takeaway: The AI by no means generates generic solutions. Each response is traceable to particular historic tickets, dramatically decreasing hallucination threat and making outputs auditable.

Instance: Constructing Enterprise Search into Functions

What we’ll construct:

A pure language assist ticket search interface — embedded instantly into an software — that lets brokers and clients search historic tickets utilizing plain English. No new infrastructure is required: this instance reuses the very same support_tickets desk and support_search_svc Cortex Search service created within the RAG part above.

This exhibits how the identical Cortex Search service can energy two completely completely different surfaces: an AI assistant on one hand, and a browsable search UI on the opposite.

Step 1 — Verify the Current Service is Lively

Confirm the service created within the earlier part remains to be working:

USE DATABASE SUPPORT_DB;

USE SCHEMA PUBLIC;

SHOW CORTEX SEARCH SERVICES IN SCHEMA RAG_SCHEMA;Output:

Step 2 — Construct the Enterprise Search Shopper

This module connects to the identical Snowpark session and support_search_svc service, and exposes a search perform with class filtering and ranked end result show — the form of interface you’d embed right into a assist portal, an inner data device, or an agent dashboard.

# enterprise_search.py

from snowflake.snowpark import Session

from snowflake.core import Root

# --- Connection config ---

connection_params = {

"account": "YOUR_ACCOUNT_IDENTIFIER", # e.g. abc12345.us-east-1

"consumer": "YOUR_USERNAME",

"password": "YOUR_PASSWORD",

"function": "SYSADMIN",

"warehouse": "COMPUTE_WH",

"database": "SUPPORT_DB",

"schema": "PUBLIC",

}

session = Session.builder.configs(connection_params).create()

root = Root(session)

# --- Identical service because the RAG instance — no new service wanted ---

search_svc = (

root.databases["SUPPORT_DB"]

.schemas["RAG_SCHEMA"]

.cortex_search_services["SUPPORT_SEARCH_SVC"]

)

def search_tickets(question: str, class: str = None, top_k: int = 5) -> listing:

"""Pure language ticket search with non-compulsory class filter."""

filter_expr = {"@eq": {"issue_category": class}} if class else None

response = search_svc.search(

question=question,

columns=["ticket_id", "issue_category", "user_query", "resolution"],

filter=filter_expr,

restrict=top_k,

)

return response.outcomes

def display_tickets(question: str, outcomes: listing, filter_label: str = None):

"""Render search outcomes as a formatted ticket listing."""

label = f" [{filter_label}]" if filter_label else ""

print(f"n🔎 Search{label}: "{question}"")

print(f" {len(outcomes)} ticket(s) foundn")

print("-" * 72)

for i, r in enumerate(outcomes, 1):

print(f" #{i} {r['ticket_id']} | Class: {r['issue_category']}")

print(f" Buyer: {r['user_query']}")

print(f" Decision: {r['resolution'][:160]}...n")Step 3 — Run Pure Language Ticket Searches

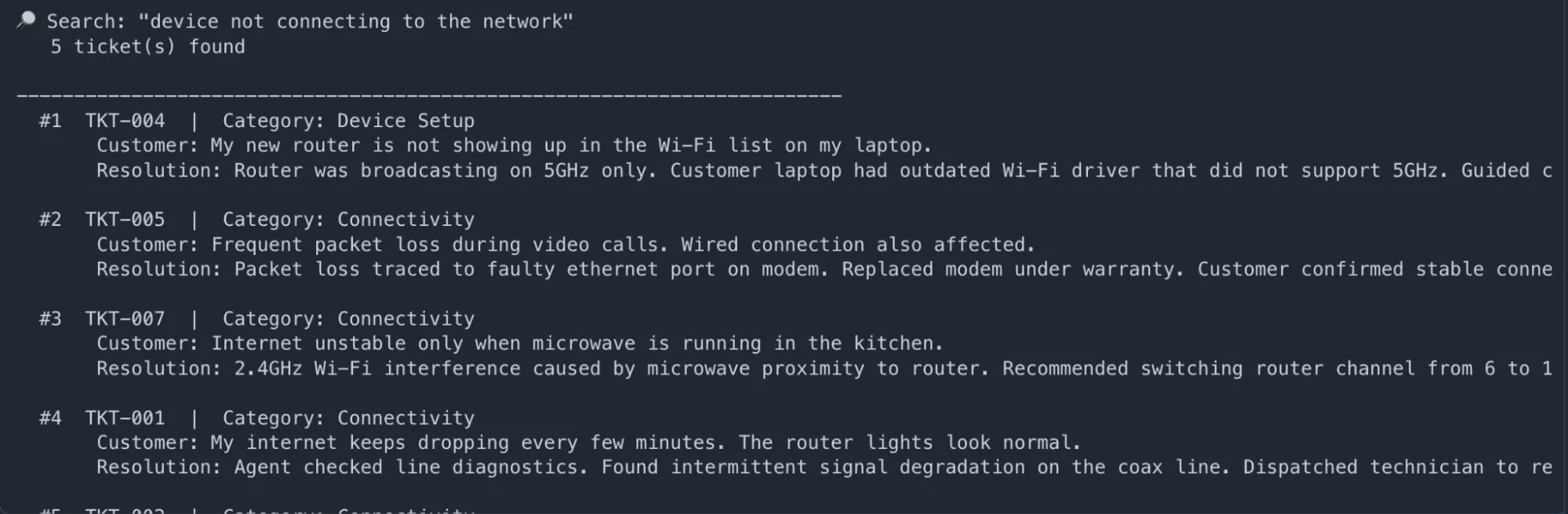

# --- Search 1: Semantic question — no precise match wanted ---

outcomes = search_tickets("machine not connecting to the community")

display_tickets("machine not connecting to the community", outcomes)Output:

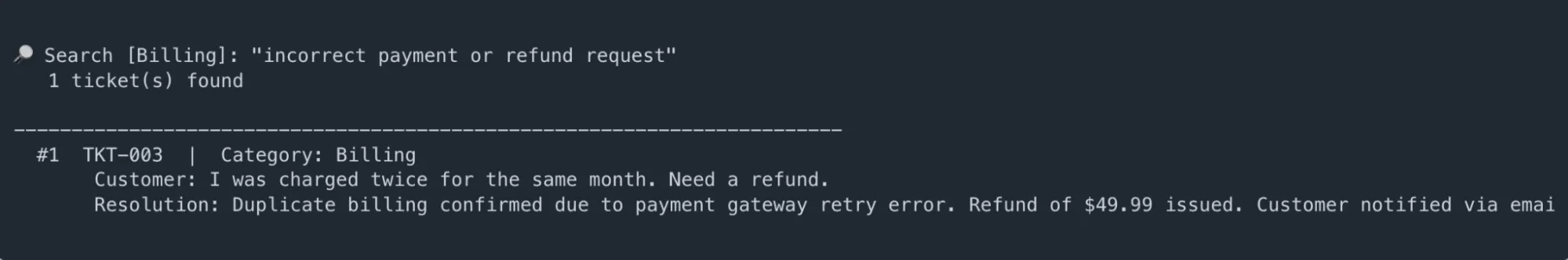

# --- Search 2: Class-filtered search (Billing solely) ---

outcomes = search_tickets(

question="incorrect fee or refund request",

class="Billing"

)

display_tickets(

"incorrect fee or refund request",

outcomes,

filter_label="Billing"

)2nd Output:

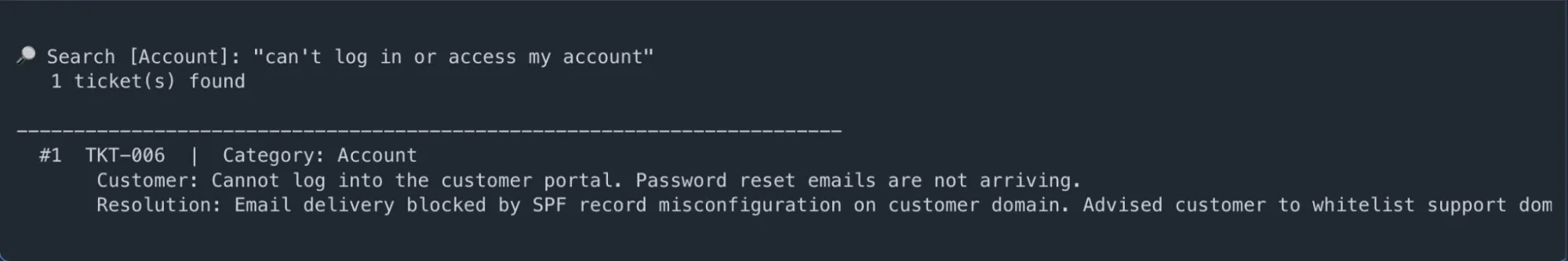

# --- Search 3: Account & entry points ---

outcomes = search_tickets("cannot log in or entry my account", class="Account")

display_tickets("cannot log in or entry my account", outcomes, filter_label="Account")Output:

Step 4 — Expose as a Flask Search API (Optionally available)

Wrap the search perform in a REST endpoint to embed it into any assist portal, inner device, or chatbot backend:

# app.py

from flask import Flask, request, jsonify

from enterprise_search import search_tickets

app = Flask(__name__)

@app.route("/tickets/search", strategies=["GET"])

def ticket_search():

question = request.args.get("q", "")

class = request.args.get("class") # non-compulsory filter

top_k = int(request.args.get("restrict", 5))

if not question:

return jsonify({"error": "Question parameter 'q' is required"}), 400

outcomes = search_tickets(question, class=class, top_k=top_k)

return jsonify({

"question": question,

"class": class,

"rely": len(outcomes),

"outcomes": outcomes,

})

if __name__ == "__main__":

app.run(port=5001, debug=True)Take a look at with curl:

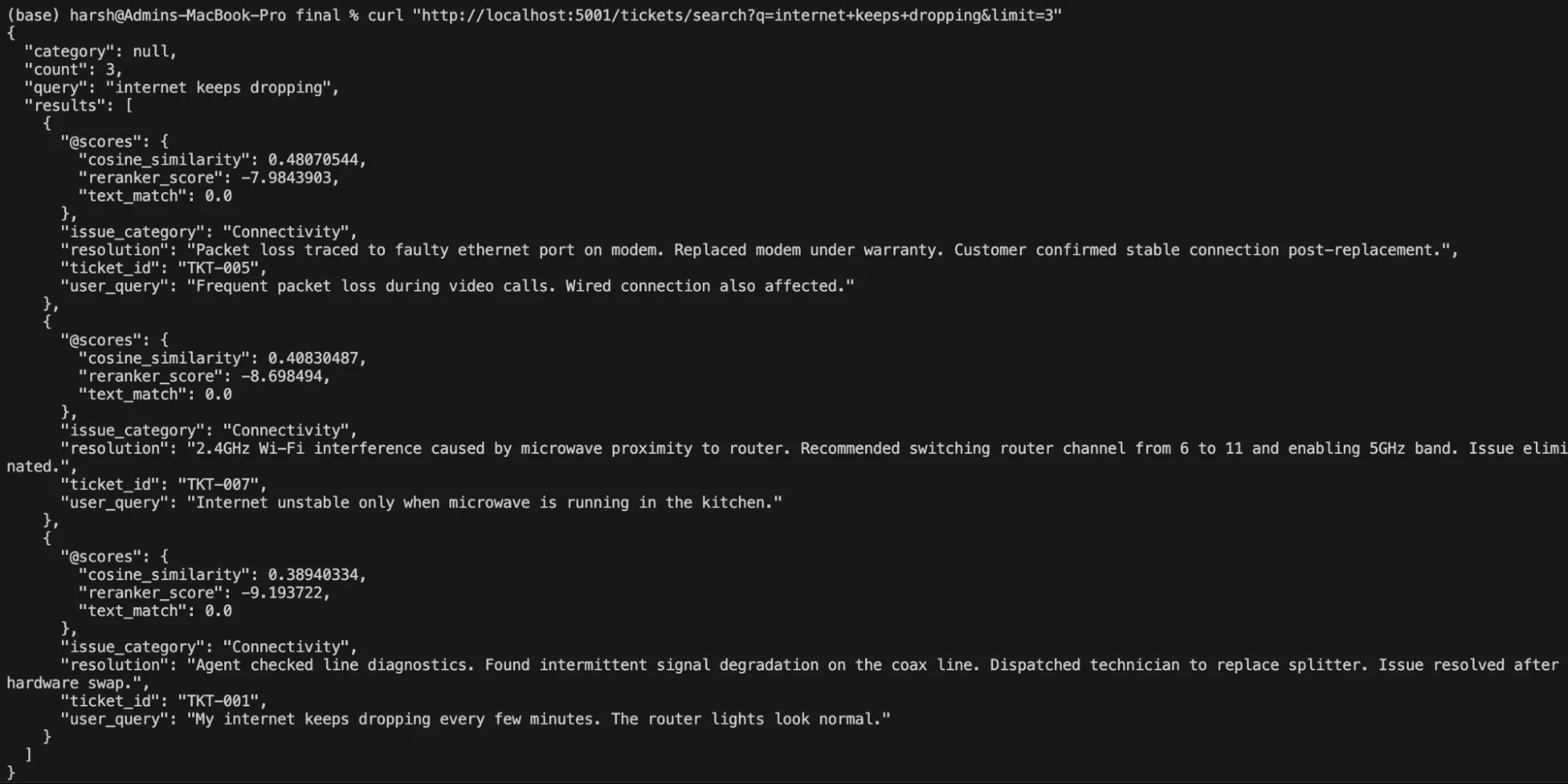

# Free-text pure language search

curl "http://localhost:5001/tickets/search?q=web+retains+dropping&restrict=3"Output:

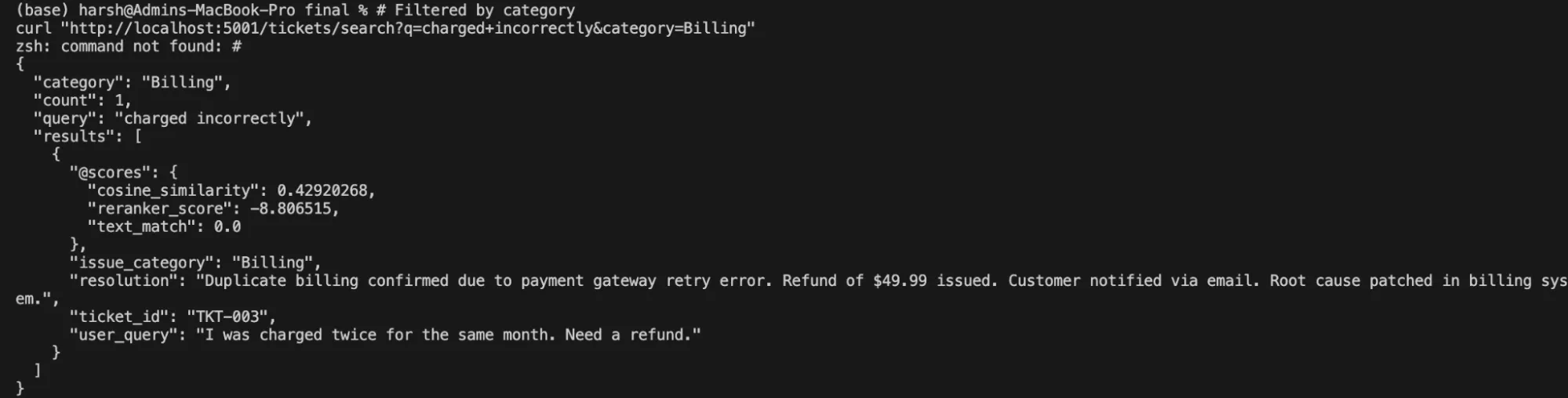

# Filtered by class

curl "http://localhost:5001/tickets/search?q=charged+incorrectly&class=Billing"Output:

Key takeaway: The identical Cortex Search service that grounds the RAG assistant additionally powers a completely useful enterprise search UI — no duplication of infrastructure, no second index to keep up. One service definition delivers each experiences, and each keep routinely in sync as tickets are added or up to date.

The Enterprise Impression of Higher Retrieval

Poor knowledge search strategies quietly erode enterprise efficiency. Time is misplaced to repeated queries and rework. However, assist groups get entangled in resolving questions that ought to have been self-served within the first place. New hires and clients take longer to succeed in productiveness. AI initiatives stall when outputs can’t be trusted.

In contrast, robust retrieval adjustments how organizations function.

Groups transfer quicker as a result of solutions are simpler to search out. AI purposes carry out higher as a result of they’re grounded in related, present knowledge. Characteristic adoption improves as a result of customers can uncover and perceive capabilities with out friction. Help prices decline as search absorbs routine questions.

Cortex Search turns retrieval from a background utility right into a strategic lever. It helps enterprises unlock the worth already current of their knowledge by making it accessible, searchable and usable at scale.

Steadily Requested Questions

A. It depends on key phrase matching and static indexes, which fail to seize intent and sustain with dynamic, distributed knowledge environments.

A. It combines key phrase precision with semantic understanding, enabling quicker, extra related outcomes even for ambiguous or conversational queries.

A. It offers correct, real-time retrieval that grounds AI responses and powers search experiences with out advanced infrastructure or guide tuning.

Login to proceed studying and luxuriate in expert-curated content material.