I wrote this post last week. A few days after I published it, Jason Fletcher left a comment that I couldn’t ignore. I couldn’t ignore it because I literally couldn’t stop thinking about it, and because I couldn’t understand why his question would matter. He asked me about the heaping in the constructed t-statistic at 1 and 3, and not just at 2.

“Can you say something about rounding vs. p-hacking? What do you make of the large spike at t-stat=1 in your first figure?”

I read it and could just tell he knew something I didn’t know. So after spending about five hours on Sunday night going back and forth, shipping more and more things to OpenAI for analysis, I feel confident I need to post this now, as I don’t want to add noise to what David Yanagizawa-Drott is doing at the Social Catalyst Lab with their APE project.

Let me start by explaining what I was doing in the first place. The last post was about the Social Catalyst Lab’s APE project. As of this writing, there are around 750 papers, but when I pulled it last week, it was 651 economics papers written entirely by AI agents. David has said that his team will stop at 1,000, and then they’ll do a p-hacking analysis on it (which I didn’t realize, or else I probably wouldn’t have written it up at all).

So as I said, I used Claude Code to send 651 manuscripts to GPT-4o at OpenAI and extract coefficients and standard errors from the results tables. I did this for a fairly simple reason: economists usually don’t report t-statistics. They report coefficients and standard errors. The t-statistic is their ratio, so having both of them (in my mind) should be just as good. And in my mind, anyway, all I could see was the Brodeur et al. histogram with a big heap at 1.96, and since I would be getting a t-statistic, I should be able to check what they did.

So, I got it. It took maybe less than 5 minutes for OpenAI to analyze 3500 papers and extract those coefficients and standard errors. I then divided them to get t-statistics, plotted the distribution, and when I noticed what looked like bunching just above t = 1.96, I became focused on trying to figure out where it was, and whether it was there at all. It for sure was, and so I just tried to describe it all, even though I absolutely couldn’t figure out how it was even possible that it would be there.

I called it evidence of p-hacking. I even cited a Brodeur ratio of 1.52. In their work, that ratio means 52% more t-stats just above the threshold than just below it, which is a large number. The original Brodeur et al. (2020) paper found ratios around 1.3-1.4 in top economics journals.

I’m not saying that Jason Fletcher saw the problem immediately, so much as that Jason asked me the kind of question someone asks when they are quite familiar with this literature. But I ended up spending five hours working through it with Claude Code before I fully understood what had happened. And while I’m not sure what David Yanagizawa-Drott is going to find, I’m definitely sure that you can’t go about this the way I did. The AI writers, like human writers, are rounding their regression coefficients and standard errors. Given the units of these outcomes and the nature of the treatment, that rounding will typically happen on the trailing digits in ways that guarantee a compression onto a small set of values.

This is the part I hadn’t thought through. And frankly, now I’ll probably never unlearn it. But ironically this was part of the thing I was trying to study in the first place, which was the rhetoric of AI-written papers.

When an author rounds a coefficient to, say, three decimal places and rounds the standard error to three decimal places, both numbers become discrete. They’re not continuous. And since they’re not continuous, they’re not unique. Two regressions whose coefficients and standard errors are merely “near each other”, without being exactly identical, can end up with exactly the same rounded coefficient and exactly the same rounded standard error. That’s because the probability that any two units have the same continuous value is zero, but the probability that any two units have the same discrete value isn’t zero, or doesn’t have to be zero.

So, when you divide two discrete numbers, the result is also discrete, since after rounding it’s a ratio of two integers (each counted in units of the last reported digit). So even though you aren’t rounding the t-statistic, you rounded the inputs, which then shifts the t-statistic away from its true value. Consider this example.

The coefficient is 3.521 and the standard error is 2.109. The t-statistic is 1.6695116169. But if you round to the hundredths, you get 3.52 and 2.11, which gives 1.6682464455. Okay, not much different.

But what if the coefficient is 0.035 and the standard error is 0.021? That ratio is 1.6666666667. But if you round to hundredths, that’s 0.04 and 0.02. And that’s now 2.

So notice that when the coefficient is “large” (most likely because the outcome units are also large), rounding is inconsequential. But when the coefficients are “small” (most likely because the units of the outcome are small), then suddenly ratios can become 2 even though the true statistic is considerably less (1.67).
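
The two examples above can be checked with a few lines of Python. This is only a sketch: the helper name `rounded_t` is made up for illustration, and it assumes half-up rounding to hundredths on the numbers as they would be typed in a table, which is why it uses `Decimal` on strings rather than binary floats.

```python
from decimal import Decimal, ROUND_HALF_UP

def rounded_t(coef: str, se: str, places: str = "0.01"):
    """Return (true t, t rebuilt from rounded inputs).

    Hypothetical helper: inputs are strings so that 0.035 is exactly 0.035,
    and rounding is half-up to the grid given by `places` (an assumption).
    """
    b, s = Decimal(coef), Decimal(se)
    step = Decimal(places)
    b_rounded = b.quantize(step, rounding=ROUND_HALF_UP)
    s_rounded = s.quantize(step, rounding=ROUND_HALF_UP)
    # Note: a standard error that rounds to 0.00 would raise a division error.
    return float(b / s), float(b_rounded / s_rounded)

large = rounded_t("3.521", "2.109")   # large units: rounding barely matters
small = rounded_t("0.035", "0.021")   # small units: 1.67 becomes exactly 2
print(large)
print(small)
```

The point of the comparison is that the same rounding rule is harmless in the first pair and moves the second pair across 1.96.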

In order for there to be a lot of t-statistics at 2 after rounding the inputs, there have to be a lot of values near there in the first place. You need a lot of “almost 2s” or “close to 2s”, though frankly the true value can still be very insignificant and, just through rounding, give the appearance otherwise. Which is probably why it’s not the worst idea in the world to show asterisks. If you are going to round, which you will, then it is probably a good idea to put stars on there, as usually we don’t care about the exact value of the t-statistic, but rather its position relative to some critical value, like 1.96.

But then why would there be an unusually large number of regression coefficients and standard errors with a ratio near 2? Because in labor economics, proportions, log outcomes, employment rates and so forth are very common. And that space of outcomes gives us small numbers when you’re working mostly with treatment indicators. If it’s a linear probability model, then coefficients have to be relatively small, for instance. If the outcomes are scaled to per capita, that will bring them down. If the mean of the outcome is 5, then a regression coefficient can’t feasibly be a 1,527 change in its value. And if you take the log of earnings, that will shrink the values too.

Well, the space of small rounded integers has a lot of 2-to-1 pairs in it. Not because of anything suspicious, but because 2:1 is the simplest multiplicative relationship between small numbers. (I keep getting bit in the butt by things I don’t know about the properties of large numbers with computers, and apparently small numbers too.)
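
One way to see this is to count ratios over a small grid. The sketch below is a pure counting exercise, not the APE data: it assumes (hypothetically) that rounded coefficients and standard errors each take values from 0.01 to 0.20, treats them as integers in hundredths, and weights every pair equally, which real tables would not do.

```python
from collections import Counter
from fractions import Fraction

N = 20  # hypothetical grid: rounded values 0.01 ... 0.20, counted in hundredths

# Count how many (coefficient, standard error) pairs produce each exact ratio.
counts = Counter(
    Fraction(b, s) for b in range(1, N + 1) for s in range(1, N + 1)
)

# Look only at ratios above 1, most common first (ties broken by ratio size).
above_one = {ratio: c for ratio, c in counts.items() if ratio > 1}
top = sorted(above_one.items(), key=lambda rc: (-rc[1], rc[0]))[:5]
for ratio, count in top:
    print(f"t = {float(ratio):.3f} ({ratio}): {count} pairs")
```

On this grid, more pairs land on a ratio of exactly 2 than on any other ratio above 1, simply because a ratio p/q in lowest terms can be formed in fewer ways as p and q get bigger.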

I think that is why the spike appeared at 2, not 1.96. That didn’t fully register with me at all, probably because I’m a visual thinker, and so the visual was all I could see in my mind. But Jason and another person noticed spikes at 1 and 3, and for some reason in my mind, I said those were noise, but at 2, it was signal. Which is something I’m going to have to think about more: why I would form that framing so easily, I mean. Anyway, the point is that rounding creates heaps at all simple integer ratios, from what I now understand, such as 1, 2, 3, 3/2, 5/2, and so on. The heap at 2 is the largest one near the significance threshold because that’s where the density of true t-stats is highest. There’s more raw material to collapse onto that value.

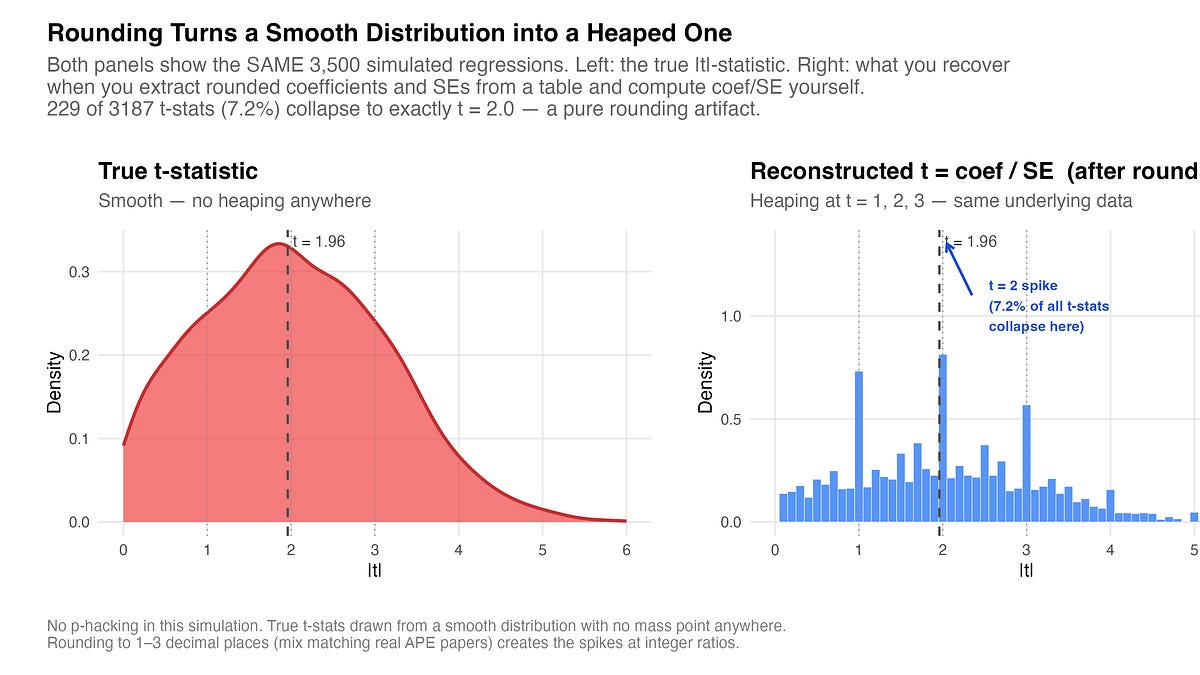

I’ll get more into this “that’s where the density of true t-stats is highest” later, but first, here’s a simulation I had Claude Code make for me. It’s 3500 fake regressions drawn from a completely smooth underlying distribution. No bunching, no manipulation, nothing. The left panel is the “true” distribution and the right panel is what you get when you extract the rounded (in other words, imprecisely reported in a paper) coefficients and SEs and then compute the t-statistic yourself by taking the ratio of those rounded values.

This isn’t the APE data; like I said, this is simulated. But it was done, at my request, to create values that were more likely in that neighborhood. And what I got was 229 of 3,187 t-stats, which is 7.2%, collapsing onto exactly t = 2.0. The underlying process has no mass point anywhere. The spike is entirely a consequence of dividing two rounded numbers.
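
For readers who want to try the idea themselves, here is a minimal sketch of that kind of simulation. It is not the code Claude Code wrote for the figure: the distribution of true t-stats, the range of standard errors, and the seed are all assumptions picked for illustration, so the share it produces will not match the 7.2% above.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible
n = 3500

true_t = []           # t-stats from the smooth underlying process
rounded_t_stats = []  # t-stats rebuilt from inputs rounded to hundredths

for _ in range(n):
    t = abs(random.gauss(1.5, 1.2))   # smooth "true" t-stat (assumed shape)
    se = random.uniform(0.005, 0.05)  # small standard errors (assumed range)
    coef = t * se
    coef_h = round(coef * 100)        # coefficient as it would print, in hundredths
    se_h = round(se * 100)            # standard error as it would print, in hundredths
    if se_h == 0:                     # a printed SE of 0.00 cannot be divided by
        continue
    true_t.append(t)
    rounded_t_stats.append(coef_h / se_h)

share_exact_2_true = sum(t == 2.0 for t in true_t) / len(true_t)
share_exact_2_rounded = sum(t == 2.0 for t in rounded_t_stats) / len(rounded_t_stats)
print(f"exactly 2.0 before rounding: {share_exact_2_true:.1%}")
print(f"exactly 2.0 after rounding:  {share_exact_2_rounded:.1%}")
```

Nothing in the data-generating step knows about 2 or 1.96; any mass at exactly 2.0 in the second list comes from the rounding step alone.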

What kills me is that I really have never thought about this before. I don’t report t-statistics. I report coefficients, standard errors and p-values. More recently, I report confidence intervals. But I always have software packages produce them for me. I only asked OpenAI to extract the coefficient and standard error because I knew I couldn’t get the t-statistic. But see, the t-statistic that software reports is never based on the kind of deeply coarsened numbers I was using.

But now it’s obvious. The rhetoric of human-written papers is to round the displayed numbers at some common precision (e.g., hundredths), but all statistics based on them are calculated using the non-rounded values. I just had never thought about this, because I had always put some weird format on the statistic, like %9.2 or something, to say “round to hundredths, let digits before be as large as 9 digits”.

The Brodeur et al. (2020) p-hacking test doesn’t use reported coefficients and standard errors from published papers to then compute t-statistics. Rather, they used the t-statistic from software output. Brodeur et al. were more careful about this than I had been. They extracted t-stats directly from regression tables that printed them, or converted reported p-values to z-scores. They specifically avoided reconstructing t-stats from rounded coefficients and standard errors, precisely because of what I just described and have now learned the hard way.
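
As a rough illustration of what converting a p-value to a z-score involves, here is one standard way to do it in Python. This is a sketch that assumes two-sided p-values from a normal approximation; it is not taken from Brodeur et al.’s code, and the function name `p_to_z` is made up.

```python
from statistics import NormalDist

def p_to_z(p_value: float) -> float:
    """Absolute z-score implied by a two-sided p-value (normal approximation).

    Hypothetical helper; p_value must be strictly between 0 and 1.
    """
    return NormalDist().inv_cdf(1 - p_value / 2)

z_05 = p_to_z(0.05)
print(round(z_05, 2))  # 1.96
```

A reported p-value is of course rounded too, so this route has its own coarsening, just of a different kind than dividing two rounded numbers.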

The test is to count t-statistics in a narrow window just below 1.96 and compare them to the count in the window just above. It’s a kind of RDD / bunching style approach to forensic science. Under the null, the distribution of t-statistics should be smooth around the critical value. There’s no reason for more mass above the threshold than below. But if there is such an asymmetry, particularly more just above than just below, it suggests something is nudging results across the line.
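
The counting step can be sketched in a few lines. The function name `caliper_ratio` and the window width of 0.2 are assumptions for illustration, not the exact window used in my earlier post or in Brodeur et al.

```python
def caliper_ratio(t_stats, threshold=1.96, width=0.2):
    """Ratio of counts just above the threshold to counts just below it.

    Hypothetical helper: `width` is an assumed window size on each side.
    A ratio near 1 is what a smooth distribution would give.
    """
    below = sum(threshold - width <= abs(t) < threshold for t in t_stats)
    above = sum(threshold <= abs(t) < threshold + width for t in t_stats)
    return above / below if below else float("nan")

# Toy numbers, invented for the example: two just below, three just above.
example_ratio = caliper_ratio([1.8, 1.9, 2.0, 2.0, 2.1, 3.4, 0.2])
print(example_ratio)  # 1.5
```

A ratio of 1.5 here would read as “50% more t-stats just above than just below”, which is the same way the 1.52 in my earlier post was meant to be read.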

But I don’t have the R code or the data; just the manuscripts. So everything I had was whatever made it into the LaTeX tables, which means rounded coefficients and rounded standard errors. And since the papers don’t consistently report t-statistics (or even p-values), I just pulled the coefficients and standard errors, thinking that was the same thing, not even remotely remembering that %9.2 thing I mentioned, which is that I round constantly. I do it for display purposes.

Anyway, when I do a donut hole approach, and drop the 68 cases of exact 2s from the bunching window, the ratio drops from 1.52 to 1.02. In other words, it becomes flat, no bunching, suggesting that my original finding was entirely driven by the extraction method I used. David will be doing this soon, probably in a couple of weeks, and my guess is that when he displays the raw t-statistic, there will not be any sign of p-hacking. My initial disbelief was probably warranted: it’s very hard to wrap one’s head around how it could happen realistically without just waving one’s hand and playing the “it’s in the training data” card.
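
Here is a minimal sketch of that donut hole idea on invented numbers. The function name `donut_ratio`, the window width, and the toy data are all assumptions; the toy list is not the APE extraction and will not reproduce the 1.52 and 1.02 above.

```python
def donut_ratio(t_stats, hole=None, threshold=1.96, width=0.2):
    """Above/below count ratio around the threshold, optionally dropping a heap.

    Hypothetical helper: if `hole` is given, t-stats equal to it (up to
    floating-point tolerance) are removed before counting.
    """
    if hole is not None:
        t_stats = [t for t in t_stats if abs(abs(t) - hole) > 1e-12]
    below = sum(threshold - width <= abs(t) < threshold for t in t_stats)
    above = sum(threshold <= abs(t) < threshold + width for t in t_stats)
    return above / below if below else float("nan")

# Toy data: a smooth-looking window plus three exact 2.0s from rounding.
toy = [1.8, 1.85, 1.9, 2.0, 2.0, 2.0, 2.05, 2.1, 2.12]
with_heap = donut_ratio(toy)
without_heap = donut_ratio(toy, hole=2.0)
print(with_heap, without_heap)  # 2.0 1.0
```

If the excess above the threshold disappears once the exact 2s are removed, the asymmetry was coming from the heap itself rather than from mass spread across the window.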

I’ll be honest. I think that graph completely took over my mind. I wasn’t intending to write about p-hacking; just rhetoric. I keep being interested in how humans write in science, and in this idea that AI can extract those rhetorical principles even when they are not written down. But when I saw that graphic, all I could see was p-hacking, while a couple of other readers immediately sensed that it was probably a mirage created by rounding the inputs to a ratio.

I guess it’s good I learned something new, and so I thank others for pointing this out to me. I’m not saying that p-hacking doesn’t happen with AI agents, though frankly I find it borderline impossible that it could. If it does, that is absolutely a fascinating and important result, and may even be the app killer for the whole thing. If AI-agent-written papers are not p-hacking, then that’s going to be a major result too, and I look forward to reading David’s team’s paper on this. But they’ll have the real t-statistic to do it.