Richard Feynman said that almost everything becomes interesting if you look into it deeply enough. Looking up numbers in a table is certainly not interesting, but it becomes more interesting when you dig into how well you can fill in the gaps.

If you want to know the value of a tabulated function between the values of x given in the table, you have to use interpolation. Linear interpolation is often adequate, but you can get more accurate results using higher-order interpolation.

Suppose you have a function f(x) tabulated at x = 3.00, 3.01, 3.02, …, 3.99, 4.00 and you want to approximate the value of the function at π. You could approximate f(π) using the values of f(3.14) and f(3.15) with linear interpolation, but you could also take advantage of more points in the table. For example, you could use cubic interpolation to calculate f(π) using f(3.13), f(3.14), f(3.15), and f(3.16). Or you could use 29th degree interpolation with the values of f at 3.00, 3.01, 3.02, …, 3.29.
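Here is a minimal sketch of the linear and cubic cases. The choice of f = ln is only an illustration (the table in question could hold any smooth function), and the helper names `lagrange_interpolate` and `interpolate_from_table` are made up for this example.

```python
import math

def lagrange_interpolate(xs, ys, x):
    """Evaluate at x the polynomial passing through the points (xs[i], ys[i])."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        weight = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                weight *= (x - xj) / (xi - xj)
        total += yi * weight
    return total

# Assumed example function; stands in for whatever the table holds.
f = math.log

# Table at x = 3.00, 3.01, ..., 4.00 (index k corresponds to 3 + k/100).
table_x = [3 + k / 100 for k in range(101)]
table_y = [f(x) for x in table_x]

def interpolate_from_table(first, last, x):
    """Interpolate at x using table entries with indices first..last inclusive."""
    return lagrange_interpolate(table_x[first:last + 1], table_y[first:last + 1], x)

exact = f(math.pi)
linear_err = abs(interpolate_from_table(14, 15, math.pi) - exact)  # 3.14, 3.15
cubic_err = abs(interpolate_from_table(13, 16, math.pi) - exact)   # 3.13 to 3.16

print(f"linear error: {linear_err:.1e}")
print(f"cubic error:  {cubic_err:.1e}")
```

The table values here are double-precision floats, so this only illustrates the effect of the gap between x values, not the effect of rounding in a printed table.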

The Lagrange interpolation theorem lets you compute an upper bound on your interpolation error. However, the theorem assumes the values at each of the tabulated points are exact. And for ordinary use, you can assume the tabulated values are exact. The biggest source of error is typically the size of the gap between tabulated x values, not the precision of the tabulated values. Tables were designed so this is true [1].

The bound on the error of order n interpolation has the form

$$c\, h^{n+1} + \lambda\, \delta$$

where h is the spacing between interpolation points and δ is the error in the tabulated values. The value of c depends on the derivatives of the function you’re interpolating [2]. The value of λ is at least 1 since λδ is the “interpolation” error at the tabulated points.

The accuracy of an interpolated value cannot be better than δ in general, and so you pick the value of n that makes $c\, h^{n+1}$ less than δ. Any higher value of n is not helpful. And in fact higher values of n are harmful since λ grows exponentially as a function of n [3].
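To get a feel for how fast λ can grow, here is a rough sketch. It assumes λ is the Lebesgue constant, that is, the maximum over the interval of the sum of the absolute values of the Lagrange basis polynomials; the text above does not spell out that definition, so treat this as one plausible reading. The function names and the choice of the interval [-1, 1] are illustrative.

```python
import math

def lebesgue_constant(nodes, a=-1.0, b=1.0, samples=1000):
    """Approximate the max over [a, b] of sum_i |l_i(x)| on a uniform grid.

    l_i is the Lagrange basis polynomial for nodes[i]. A finite grid can
    only underestimate the true maximum.
    """
    best = 0.0
    for k in range(samples + 1):
        x = a + (b - a) * k / samples
        total = 0.0
        for i, xi in enumerate(nodes):
            basis = 1.0
            for j, xj in enumerate(nodes):
                if j != i:
                    basis *= (x - xj) / (xi - xj)
            total += abs(basis)
        best = max(best, total)
    return best

def equispaced(n):
    """n + 1 evenly spaced nodes on [-1, 1], as in a table."""
    return [-1 + 2 * k / n for k in range(n + 1)]

def chebyshev(n):
    """n + 1 Chebyshev nodes (roots of the Chebyshev polynomial T_{n+1})."""
    return [math.cos((2 * k + 1) * math.pi / (2 * n + 2)) for k in range(n + 1)]

for n in (5, 10, 15, 20):
    even = lebesgue_constant(equispaced(n))
    cheb = lebesgue_constant(chebyshev(n))
    print(f"n = {n:2d}  evenly spaced: {even:10.1f}  Chebyshev: {cheb:.2f}")
```

This takes the maximum over the whole interval spanned by the nodes. When interpolating from a table you would normally evaluate near the middle of the nodes you chose, where the amplification is smaller than this worst case.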

See the next post for mathematical details regarding the λs.

Examples

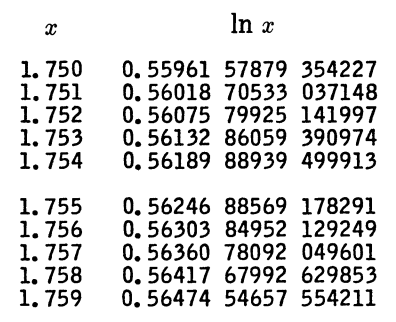

Let’s look at a specific example. Here’s a piece of a table of natural logarithms from Abramowitz and Stegun (A&S).

Here $h = 10^{-3}$, and so linear interpolation would give you an error on the order of $h^2 = 10^{-6}$. You’re never going to get error less than $10^{-15}$ since that’s the error in the tabulated values, so 4th order interpolation gives you about as much precision as you’re going to get. Carefully bounding the error would require using the values of c and λ above that are specific to this context. In fact, the interpolation error is on the order of $10^{-8}$ using 5th order interpolation, and that’s the best you can do.

I’ll briefly mention a couple more examples from A&S. The book includes a table of sine values, tabulated to 23 decimal places, in increments of h = 0.001 radians. A rough estimate would suggest 7th order interpolation is as high as you should go, and in fact the book indicates that 7th order interpolation will give you 9 figures of accuracy.
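The rough estimates for the logarithm and sine tables can be reproduced with a few lines of Python. This sketch assumes c = 1, which is a simplification since c depends on the function, and it reads “less than δ” as “no larger than δ”. The function name `smallest_useful_order` is made up for illustration.

```python
from fractions import Fraction

def smallest_useful_order(h, delta, c=1):
    """Smallest n >= 1 with c * h**(n + 1) <= delta.

    Uses exact rational arithmetic so that borderline cases such as
    (10**-3)**5 == 10**-15 are not decided by floating point rounding.
    Requires 0 < h < 1, otherwise the loop never terminates.
    """
    n = 1
    while c * h ** (n + 1) > delta:
        n += 1
    return n

# Natural log table: h = 10^-3, tabulated values good to about 10^-15.
log_order = smallest_useful_order(Fraction(1, 10**3), Fraction(1, 10**15))

# Sine table: h = 10^-3, values tabulated to 23 decimal places.
sine_order = smallest_useful_order(Fraction(1, 10**3), Fraction(1, 10**23))

print(log_order, sine_order)
```

With these inputs the estimate comes out to 4 for the logarithm table and 7 for the sine table, matching the orders mentioned above. The estimate is only as good as the assumption c = 1, so it should not be expected to work for every table.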

Another table from A&S gives values of the Bessel function $J_0$ as 15-digit values in increments of h = 0.1. It says that 11th order interpolation will give you four decimal places of precision. In this case, fairly high-order interpolation is useful and even necessary. A large number of decimal places are needed in the tabulated values relative to the output precision because the spacing between points is so wide.

[1] I say were because of course people rarely look up function values in tables anymore. Tables and interpolation are still widely used, just not directly by people; computers do the lookup and interpolation on their behalf.

[2] For functions like sine, the value of c doesn’t grow with n, and in fact decreases slowly as n increases. But for other functions, c can grow with n, which can cause problems like the Runge phenomenon.

[3] The constant λ grows exponentially with n for evenly spaced interpolation points, and values in a table are evenly spaced. The constant λ grows only logarithmically for Chebyshev spacing, but this isn’t practical for a general purpose table.