As your conversational AI initiatives evolve, developing Amazon Lex assistants becomes increasingly complex. Multiple developers working on the same shared Lex instance leads to configuration conflicts, overwritten changes, and slower iteration cycles. Scaling Amazon Lex development requires isolated environments, version control, and automated deployment pipelines. By adopting well-structured continuous integration and continuous delivery (CI/CD) practices, organizations can reduce development bottlenecks, accelerate innovation, and deliver smoother intelligent conversational experiences powered by Amazon Lex.

In this post, we walk through a multi-developer CI/CD pipeline for Amazon Lex that enables isolated development environments, automated testing, and streamlined deployments. We show you how to set up the solution and share real-world results from teams using this approach.

Transforming development through scalable CI/CD practices

Traditional approaches to Amazon Lex development often rely on single-instance setups and manual workflows. While these methods work for small, single-developer projects, they can introduce friction when multiple developers need to work in parallel, leading to slower iteration cycles and higher operational overhead. A modern multi-developer CI/CD pipeline changes this dynamic by enabling automated validation, streamlined deployment, and intelligent version control. The pipeline minimizes configuration conflicts, improves resource utilization, and empowers teams to deliver new features faster and more reliably. With continuous integration and delivery, Amazon Lex developers can focus less on managing processes and more on creating engaging, high-quality conversational AI experiences for customers. Let’s explore how this solution works.

Solution architecture

The multi-developer CI/CD pipeline transforms Amazon Lex from a limited, single-user development tool into an enterprise-grade conversational AI platform. This approach addresses the fundamental collaboration challenges that slow down conversational AI development. The following diagram illustrates the multi-developer CI/CD pipeline architecture:

Using infrastructure as code (IaC) with AWS Cloud Development Kit (AWS CDK), each developer runs cdk deploy to provision their own dedicated Lex assistant and AWS Lambda instances in a shared Amazon Web Services (AWS) account. This approach eliminates the overwriting issues common in traditional Amazon Lex development and enables true parallel work streams with full version control capabilities.

Developers use lexcli, a custom AWS Command Line Interface (AWS CLI) tool, to export Lex assistant configurations from the shared AWS account to their local workstations for editing. Developers then test and debug locally using lex_emulator, a custom tool providing integrated testing for both assistant configurations and AWS Lambda functions with real-time validation to catch issues before they reach cloud environments. This local capability transforms the development experience by providing immediate feedback and reducing the need for time-consuming cloud deployments during iterations.

When developers push changes to version control, this pipeline automatically deploys ephemeral test environments for each merge request through GitLab CI/CD. The pipeline runs in Docker containers, providing a consistent build environment that ensures reliable Lambda function packaging and reproducible deployments. Automated tests run against these temporary stacks, and merges are only enabled if all tests are successful. Ephemeral environments are automatically destroyed after merge, ensuring cost efficiency while maintaining quality gates. Failed tests block merges and notify developers, preventing broken code from reaching shared environments.

Changes that pass testing in ephemeral environments are promoted to shared environments (Development, QA, and Production) with manual approval gates between stages. This structured approach maintains high-quality standards while accelerating the delivery process, enabling teams to deploy new features and improvements with confidence.

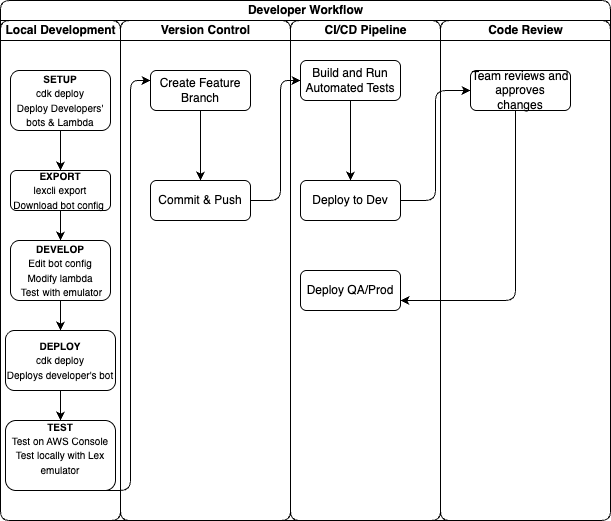

The following graphic illustrates the developer workflow organized by phases: local development, version control, and automated deployment. Developers work in isolated environments before changes flow through the CI/CD pipeline to shared environments.

Business impact

By enabling parallel development workflows, this solution delivers substantial time and efficiency improvements for conversational AI teams. Internal evaluations show teams can parallelize much of their development work, driving measurable productivity gains. Results vary based on team size, project scope, and implementation approach, but some teams have reduced development cycles significantly. The acceleration has enabled teams to deliver features in weeks rather than months, improving time-to-market. The time savings allow teams to handle larger workloads within existing development cycles, freeing capacity for innovation and quality improvement.

Real-world success stories

This multi-developer CI/CD pipeline for Amazon Lex has supported enterprise teams in improving their development efficiency. One organization used it to migrate their platform to Amazon Lex, enabling multiple developers to collaborate simultaneously without conflicts. Isolated environments and automated merge capabilities helped maintain consistent progress during complex development efforts.

A large enterprise adopted the pipeline as part of its broader AI strategy. By using validation and collaboration features within the CI/CD process, their teams enhanced coordination and accountability across environments. These examples illustrate how structured workflows can contribute to improved efficiency, smoother migrations, and reduced rework.

Overall, these experiences demonstrate how the multi-developer CI/CD pipeline helps organizations of varying scales strengthen their conversational AI initiatives while maintaining consistent quality and development velocity.

See the solution in action

To better understand how the multi-developer CI/CD pipeline works in practice, watch this demonstration video that walks through the key workflows. It shows how developers work in parallel on the same Amazon Lex assistant, resolve conflicts automatically, and deploy changes through the pipeline.

Getting started with the solution

The multi-developer CI/CD pipeline for Amazon Lex is available as an open source solution through our GitHub repository. Standard AWS service charges apply for the resources you deploy.

Prerequisites and environment setup

To follow along with this walkthrough, you need:

Core components and architecture

The framework consists of several key components that work together to enable collaborative development: infrastructure as code with AWS CDK, the Amazon Lex CLI tool called lexcli, and the GitLab CI/CD pipeline configuration.

The solution uses AWS CDK to define infrastructure components as code, including:

Deploy each developer’s environment using:

This creates a complete, isolated environment that mirrors the shared configuration but allows for independent modifications.
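Because every developer deploys into the same AWS account, each stack needs a name that cannot collide with a colleague's. The following is a minimal sketch of one way to derive such a name; the function `developer_stack_name` and the `lex-assistant` prefix are hypothetical and are not taken from the solution's repository. It relies only on the CloudFormation naming rules (letters, digits, and hyphens, starting with a letter, at most 128 characters).

```python
import re


def developer_stack_name(prefix: str, owner: str, max_len: int = 128) -> str:
    """Build a CloudFormation-safe stack name for one developer (hypothetical helper)."""
    # Collapse every run of characters that is not a letter or digit into one hyphen.
    slug = re.sub(r"[^A-Za-z0-9]+", "-", owner).strip("-").lower()
    if not slug:
        raise ValueError("owner must contain at least one letter or digit")
    name = f"{prefix}-{slug}"
    # CloudFormation stack names must begin with an alphabetic character.
    if not re.match(r"[A-Za-z]", name):
        raise ValueError("stack name must start with a letter")
    # Enforce the length limit without leaving a trailing hyphen.
    return name[:max_len].rstrip("-")
```

A name produced this way could be passed to the CDK app as a stack ID or context value, so that two developers deploying from the same code base never touch each other's resources.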

The lexcli tool exports Amazon Lex assistant configuration from the console into version-controlled JSON files. When you run lexcli export, it will:

- Connect to your deployed assistant using the Amazon Lex API

- Download the complete assistant configuration as a .zip file

- Extract and standardize identifiers to make configurations environment-agnostic

- Format JSON files for review during merge requests

- Provide interactive prompts to selectively export only changed intents and slots

This tool transforms the manual, error-prone process of copying assistant configurations into an automated, reliable workflow that maintains configuration integrity across environments.
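To make the "standardize identifiers" and "format JSON" steps more concrete, here is a small sketch of the general idea. It is not lexcli's implementation: the key names in `ENVIRONMENT_SPECIFIC_KEYS`, the placeholder string, and both function names are assumptions chosen for illustration, and the real export format may differ.

```python
import json
from typing import Any

# Hypothetical key names whose values differ between environments.
ENVIRONMENT_SPECIFIC_KEYS = {"botId", "roleArn"}
PLACEHOLDER = "<ENVIRONMENT_SPECIFIC>"


def standardize(node: Any) -> Any:
    """Return a copy of node with environment-specific values replaced by a placeholder."""
    if isinstance(node, dict):
        return {
            key: PLACEHOLDER if key in ENVIRONMENT_SPECIFIC_KEYS else standardize(value)
            for key, value in node.items()
        }
    if isinstance(node, list):
        return [standardize(item) for item in node]
    return node


def format_for_review(config: dict) -> str:
    """Serialize with sorted keys and fixed indentation so diffs stay stable."""
    return json.dumps(standardize(config), indent=2, sort_keys=True, ensure_ascii=False) + "\n"
```

Sorting keys and fixing the indentation means two exports of the same configuration produce byte-identical files, so a merge request diff shows only the intents and slots that actually changed.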

The .gitlab-ci.yml file orchestrates the entire development workflow:

- Ephemeral environment creation – Automatically creates and destroys a temporary dynamic environment for each merge request

- Automated testing – Runs comprehensive tests including intent validation, slot verification, and performance benchmarks

- Quality gates – Enforces code linting and automated testing with 40% minimum coverage; requires manual approval for all environment deployments

- Environment promotion – Enables controlled deployment progression through dev, staging, and production with manual approval at each stage

The pipeline ensures only validated, tested changes progress through deployment stages, maintaining quality while enabling rapid iteration.
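The quality gate described above can be thought of as a simple predicate over the results of a pipeline run. The sketch below illustrates that logic under stated assumptions: the `PipelineResult` fields and the function names are hypothetical, and only the 40% coverage threshold comes from this post. In the actual solution these checks are enforced by GitLab CI/CD jobs rather than by a Python function.

```python
from dataclasses import dataclass
from typing import List

MIN_COVERAGE_PERCENT = 40.0  # minimum coverage stated in this post


@dataclass(frozen=True)
class PipelineResult:
    """Hypothetical summary of one merge request pipeline run."""
    lint_passed: bool
    tests_failed: int
    coverage_percent: float


def gate_failures(result: PipelineResult) -> List[str]:
    """Return a human-readable reason for every gate that did not pass."""
    failures = []
    if not result.lint_passed:
        failures.append("lint failed")
    if result.tests_failed > 0:
        failures.append(f"{result.tests_failed} test(s) failed")
    if result.coverage_percent < MIN_COVERAGE_PERCENT:
        failures.append(
            f"coverage {result.coverage_percent:.1f}% is below {MIN_COVERAGE_PERCENT:.0f}%"
        )
    return failures


def merge_allowed(result: PipelineResult) -> bool:
    """A merge is allowed only when no gate reports a failure."""
    return not gate_failures(result)
```

Returning the list of reasons, rather than a bare boolean, makes it straightforward to include the cause in the notification sent to the developer when a merge is blocked.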

Step-by-step implementation guide

To create a multi-developer CI/CD pipeline for Amazon Lex, complete the steps in the following sections. Implementation follows five phases:

- Repository and GitLab setup

- AWS authentication setup

- Local development environment

- Development workflow

- CI/CD pipeline execution

Repository and GitLab setup

To set up your repository and configure GitLab variables, follow these steps:

- Clone the sample repository and create your own project:

- To configure GitLab CI/CD variables, navigate to your GitLab project and choose Settings. Then choose CI/CD and Variables. Add the following variables:

- For AWS_REGION, enter us-east-1

- For AWS_DEFAULT_REGION, enter us-east-1

- Add the other environment-specific secrets your application requires

- Set up branch protection rules to protect your main branch. Proper workflow enforcement prevents direct commits to the production code.

AWS authentication setup

The pipeline requires appropriate permissions to deploy AWS CDK changes within your environment. This can be achieved through various methods, such as assuming a specific IAM role within the pipeline, using a hosted runner with an attached IAM role, or enabling another approved form of access. The exact setup depends on your organization’s security and access management practices. The detailed configuration of these permissions is outside the scope of this post, but it’s essential to properly authorize your runners and roles to perform CDK deployments.

Local development environment

To set up your local development environment, complete the following steps:

- Install dependencies

- Deploy your personal assistant environment:

This creates your isolated assistant instance for independent modifications.

Development workflow

To follow the development workflow, complete the following steps:

- Create a feature branch:

- To make assistant changes, follow these steps:

- Access your personal assistant in the Amazon Lex console

- Modify intents, slots, or assistant configurations as needed

- Test your changes directly in the console

- Export changes to code:

The tool will interactively prompt you to select which changes to export so you only commit the changes you intended.

- Review and commit changes:

CI/CD pipeline execution

To execute the CI/CD pipeline, full the next steps:

- Create merge request – The pipeline automatically creates an ephemeral environment for your branch

- Automated testing – The pipeline runs comprehensive tests against your changes

- Code review – Team members can review both the code changes and test results

- Merge to main – After the changes are approved, they’re merged and automatically deployed to development

- Environment promotion – Manual approval gates control promotion to QA and production
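The promotion flow in the steps above amounts to a small state machine. The following sketch models it under the assumption that a merge to main deploys to development automatically, while QA and production each need a recorded manual approval. The stage names and both functions are illustrative only; in the real pipeline, GitLab's manual jobs play this role.

```python
from typing import Optional, Set

# Ordered shared environments, as described in this post.
STAGES = ("development", "qa", "production")


def next_stage(current: Optional[str]) -> Optional[str]:
    """Return the stage after current, or None once production has been reached."""
    if current is None:
        return STAGES[0]
    index = STAGES.index(current)  # raises ValueError for an unknown stage
    return STAGES[index + 1] if index + 1 < len(STAGES) else None


def can_promote(current: Optional[str], approvals: Set[str]) -> bool:
    """Check whether a change may move from current to the next stage.

    Assumption: development is deployed automatically after merge;
    every later stage requires its name to be present in approvals.
    """
    target = next_stage(current)
    if target is None:
        return False
    return target == STAGES[0] or target in approvals
```

Because each stage checks only for its own approval, an approval granted for QA never implicitly authorizes a production deployment.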

What’s next?

After implementing this multi-developer pipeline, consider these next steps:

- Scale your testing – Add more comprehensive test suites for intent validation

- Enhance monitoring – Integrate Amazon CloudWatch dashboards for assistant performance

- Explore hybrid AI – Combine Amazon Lex with Amazon Bedrock for generative AI capabilities

For more information about Amazon Lex, refer to the Amazon Lex Developer Guide.

Conclusion

In this post, we showed how implementing multi-developer CI/CD pipelines for Amazon Lex addresses critical operational challenges in conversational AI development. By enabling isolated development environments, local testing capabilities, and automated validation workflows, teams can work in parallel without sacrificing quality, helping to accelerate time-to-market for complex conversational AI solutions.

You can start implementing this approach today using the AWS CDK prototype and Amazon Lex CLI tool available in our GitHub repository. For organizations looking to enhance their conversational AI capabilities further, consider exploring the Amazon Lex integration with Amazon Bedrock for hybrid solutions using both structured dialog management and large language models (LLMs).

We’d love to hear about your experience implementing this solution. Share your feedback in the comments or reach out to AWS Professional Services for implementation guidance.

About the authors