Image by Author

# Introduction

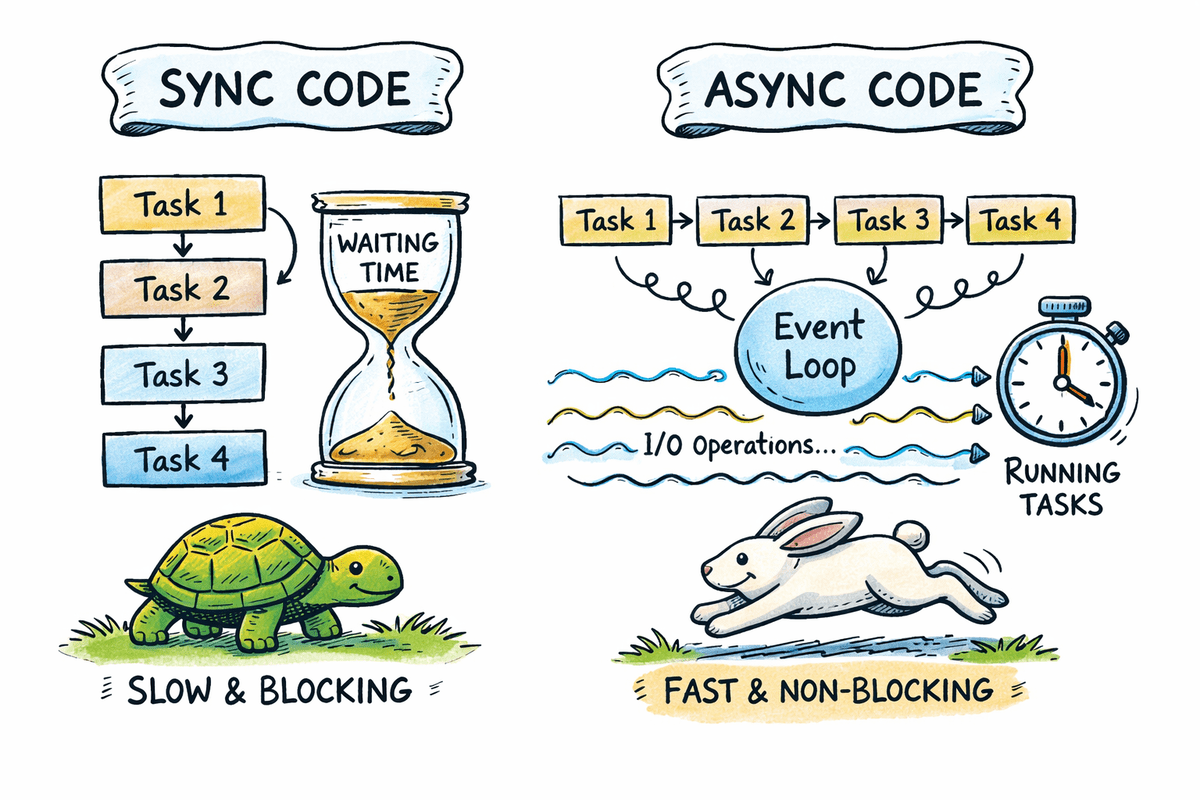

Most Python applications spend significant time waiting on APIs, databases, file systems, and network services. Async programming allows a program to pause while waiting for I/O operations and continue executing other tasks instead of blocking.

In this tutorial, you’ll learn the fundamentals of async programming in Python using clear code examples. We’ll compare synchronous and asynchronous execution, explain how the event loop works, and apply async patterns to real-world scenarios such as concurrent API requests and background tasks.

By the end of this guide, you’ll understand when async programming is useful, how to use `async` and `await` correctly, and how to write scalable and reliable async Python code.

# Defining Async Programming in Python

Async programming allows a program to pause execution while waiting for an operation to complete and continue executing other tasks in the meantime.

Core building blocks include:

- `async def` for defining coroutines
- `await` for non-blocking waits
- The event loop for task scheduling

Note: Async programming improves throughput, not raw computation speed.
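The three building blocks can be seen together in a few lines. The following is a minimal sketch, not code from the original tutorial; the `greet` coroutine and its short delay are hypothetical and chosen only for illustration.

```python
import asyncio


async def greet(name):
    # `async def` makes this a coroutine function: calling it builds a
    # coroutine object but does not run the body yet.
    await asyncio.sleep(0.1)  # `await` hands control back to the event loop
    return f"Hello, {name}"


coro = greet("async")                      # nothing has executed so far
is_coroutine = asyncio.iscoroutine(coro)   # True: we only hold a coroutine object
message = asyncio.run(coro)                # the event loop drives it to completion

print(is_coroutine, message)
```

The body of `greet` only runs once the event loop, started here by `asyncio.run`, takes over the coroutine object.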

# Understanding the Async Event Loop in Python

The event loop is responsible for managing and executing asynchronous tasks.

Key responsibilities include:

- Tracking paused and ready tasks
- Switching execution when tasks await I/O
- Coordinating concurrency without threads

Python uses the `asyncio` library as its standard async runtime.
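To make the switching behaviour concrete, here is a small sketch under stated assumptions: the `worker` coroutine and the shared `log` list are hypothetical helpers, and `asyncio.sleep(0)` is used only as a way to yield to the event loop without an actual delay.

```python
import asyncio
import threading


async def worker(name, steps, log):
    for step in range(steps):
        # Record which worker ran, at which step, and on which thread
        log.append((name, step, threading.get_ident()))
        # sleep(0) yields to the event loop so another ready task can run
        await asyncio.sleep(0)


async def main():
    log = []
    await asyncio.gather(worker("a", 2, log), worker("b", 2, log))
    return log


log = asyncio.run(main())
order = [(name, step) for name, step, _ in log]
thread_ids = {thread_id for _, _, thread_id in log}

print(order)
print(f"threads used: {len(thread_ids)}")
```

The log alternates between the two workers, and every entry carries the same thread ID. The event loop interleaves tasks at each `await` on a single thread rather than running them on separate threads.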

# Comparing Sequential vs. Async Execution in Python

This section demonstrates how blocking sequential code compares to asynchronous concurrent execution and how async reduces total waiting time for I/O-bound tasks.

// Examining a Sequential Blocking Example

Sequential execution runs tasks one after another. If a task performs a blocking operation, the entire program waits until that operation completes. This approach is simple but inefficient for I/O-bound workloads where waiting dominates execution time.

This function simulates a blocking task. The call to `time.sleep` pauses the entire program for the specified number of seconds.

```python
import time


def download_file(name, seconds):
    print(f"Starting {name}")
    time.sleep(seconds)  # blocks the whole program while "downloading"
    print(f"Finished {name}")
```

The timer starts before the function calls and stops after all three calls complete. Each function runs only after the previous one finishes.

```python
start = time.perf_counter()

download_file("file-1", 2)
download_file("file-2", 2)
download_file("file-3", 2)

end = time.perf_counter()
print(f"[TOTAL SYNC] took {end - start:.4f} seconds")
```

Output:

- `file-1` starts and blocks the program for two seconds
- `file-2` starts only after `file-1` finishes
- `file-3` starts only after `file-2` finishes

Total runtime is the sum of all delays, approximately six seconds.

```
Starting file-1
Finished file-1
Starting file-2
Finished file-2
Starting file-3
Finished file-3
[TOTAL SYNC] took 6.0009 seconds
```

// Examining an Asynchronous Concurrent Example

Asynchronous execution allows tasks to run concurrently. When a task reaches an awaited I/O operation, it pauses and allows other tasks to continue. This overlapping of waiting time significantly improves throughput.

This async function defines a coroutine. The `await asyncio.sleep` call pauses only the current task, not the entire program.

```python
import asyncio
import time


async def download_file(name, seconds):
    print(f"Starting {name}")
    await asyncio.sleep(seconds)  # pauses only this task
    print(f"Finished {name}")
```

`asyncio.gather` schedules all three coroutines to run concurrently on the event loop.

```python
async def main():
    start = time.perf_counter()

    await asyncio.gather(
        download_file("file-1", 2),
        download_file("file-2", 2),
        download_file("file-3", 2),
    )

    end = time.perf_counter()
    print(f"[TOTAL ASYNC] took {end - start:.4f} seconds")
```

Calling `asyncio.run(main())` starts the event loop and executes the async program.

Output:

- All three tasks start almost at the same time
- Each task waits independently for two seconds
- While one task is waiting, others continue executing
- Total runtime is close to the longest single delay, approximately two seconds

```
Starting file-1
Starting file-2
Starting file-3
Finished file-1
Finished file-2
Finished file-3
[TOTAL ASYNC] took 2.0005 seconds
```
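One point worth checking is that `async def` alone does not make code concurrent: awaiting coroutines one after another still adds up their delays. The sketch below illustrates this with shortened, hypothetical delays of 0.1 seconds; `run_one_by_one` and `run_together` are names introduced here for the comparison.

```python
import asyncio
import time

NAMES = ("file-1", "file-2", "file-3")


async def download_file(name, seconds):
    await asyncio.sleep(seconds)
    return name


async def run_one_by_one():
    # Each await completes before the next coroutine even starts
    start = time.perf_counter()
    for name in NAMES:
        await download_file(name, 0.1)
    return time.perf_counter() - start


async def run_together():
    # gather starts all coroutines, so their waits overlap
    start = time.perf_counter()
    await asyncio.gather(*(download_file(name, 0.1) for name in NAMES))
    return time.perf_counter() - start


one_by_one = asyncio.run(run_one_by_one())
together = asyncio.run(run_together())

print(f"one by one: {one_by_one:.2f}s, together: {together:.2f}s")
```

The first timing lands near the sum of the delays and the second near a single delay. The speedup comes from overlapping the waits, not from the `async` keyword itself.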

# Exploring How Await Works in Python Async Code

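The `await` keyword tells Python that a coroutine may pause and allow other tasks to run.

Incorrect usage:

```python
async def task():
    asyncio.sleep(1)  # missing await: the coroutine is created but never runs
```

Correct usage:

```python
async def task():
    await asyncio.sleep(1)
```

Failing to use `await` prevents concurrency and may cause runtime warnings. Without it, `asyncio.sleep(1)` only creates a coroutine object that never runs, and Python typically reports `RuntimeWarning: coroutine 'sleep' was never awaited`.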
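The difference can be measured. This is a minimal sketch with hypothetical function names (`without_await`, `with_await`) and a shortened 0.2-second delay; the un-awaited coroutine is closed explicitly so the example runs without emitting the warning.

```python
import asyncio
import time


async def without_await():
    start = time.perf_counter()
    coro = asyncio.sleep(0.2)            # creates a coroutine object, nothing runs
    created = asyncio.iscoroutine(coro)
    coro.close()                         # closed explicitly to avoid the "never awaited" warning
    return created, time.perf_counter() - start


async def with_await():
    start = time.perf_counter()
    await asyncio.sleep(0.2)             # actually pauses this coroutine
    return time.perf_counter() - start


created, skipped_elapsed = asyncio.run(without_await())
awaited_elapsed = asyncio.run(with_await())

print(f"without await: {skipped_elapsed:.3f}s, with await: {awaited_elapsed:.3f}s")
```

Without `await`, the function returns almost immediately because the sleep never happens. With `await`, the elapsed time reflects the full delay.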

# Running Multiple Async Tasks Using asyncio.gather

`asyncio.gather` allows multiple coroutines to run concurrently and collects their results once all tasks have completed. It’s commonly used when multiple independent async operations can be executed at the same time.

The `job` coroutine simulates an asynchronous task. It prints a start message, waits for one second using a non-blocking sleep, then prints a finish message and returns a result.

```python
import asyncio
import time


async def job(job_id, delay=1):
    print(f"Job {job_id} started")
    await asyncio.sleep(delay)  # non-blocking wait
    print(f"Job {job_id} finished")
    return f"Completed job {job_id}"
```

`asyncio.gather` schedules all three jobs to run concurrently on the event loop. Each job starts execution immediately and runs until it reaches an awaited operation.

```python
async def main():
    start = time.perf_counter()

    results = await asyncio.gather(
        job(1),
        job(2),
        job(3),
    )

    end = time.perf_counter()

    print("\nResults:", results)
    print(f"[TOTAL WALL TIME] {end - start:.4f} seconds")


asyncio.run(main())
```

Output:

- All three jobs start almost at the same time
- Each job waits independently for one second
- While one job is waiting, others continue running
- The results are returned in the same order the tasks were passed to `asyncio.gather`
- Total execution time is close to one second, not three

```
Job 1 started
Job 2 started
Job 3 started
Job 1 finished
Job 2 finished
Job 3 finished

Results: ['Completed job 1', 'Completed job 2', 'Completed job 3']
[TOTAL WALL TIME] 1.0013 seconds
```

This pattern is foundational for concurrent network requests, database queries, and other I/O-bound operations.
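The ordering guarantee is easier to see when the jobs finish at different times. In this sketch the delays and the `finished` list are assumptions added for illustration; they are not part of the original example.

```python
import asyncio


async def job(job_id, delay, finished):
    await asyncio.sleep(delay)
    finished.append(job_id)          # record the real completion order
    return f"Completed job {job_id}"


async def main():
    finished = []
    results = await asyncio.gather(
        job(1, 0.3, finished),       # slowest job is passed first
        job(2, 0.1, finished),
        job(3, 0.2, finished),
    )
    return finished, results


finished, results = asyncio.run(main())

print("Completion order:", finished)
print("Results order:", results)
```

Job 2 finishes first and job 1 last, yet `results` still lists job 1 first. `asyncio.gather` returns results in argument order, not completion order.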

# Making Concurrent HTTP Requests

Async HTTP requests are a common real-world use case where async programming provides immediate benefits. When multiple APIs are called sequentially, total execution time becomes the sum of all response delays. Async allows these requests to run concurrently.

This list contains three URLs that intentionally delay their responses by one, two, and three seconds.

```python
import asyncio
import time
import urllib.request
import json

URLS = [
    "https://httpbin.org/delay/1",
    "https://httpbin.org/delay/2",
    "https://httpbin.org/delay/3",
]
```

This function performs a blocking HTTP request using the standard library. It cannot be awaited directly.

```python
def fetch_sync(url):
    """Blocking HTTP request using standard library"""
    with urllib.request.urlopen(url) as response:
        return json.loads(response.read().decode())
```

The `fetch` coroutine measures execution time and logs when a request starts. The blocking HTTP request is offloaded to a background thread using `asyncio.to_thread`. This prevents the event loop from blocking.

```python
async def fetch(url):
    start = time.perf_counter()
    print(f"Fetching {url}")

    # Run blocking IO in a thread
    data = await asyncio.to_thread(fetch_sync, url)

    elapsed = time.perf_counter() - start
    print(f"Finished {url} in {elapsed:.2f} seconds")

    return data
```

All requests are scheduled concurrently using `asyncio.gather`.

```python
async def main():
    start = time.perf_counter()

    results = await asyncio.gather(
        *(fetch(url) for url in URLS)
    )

    total = time.perf_counter() - start

    print(f"\nFetched {len(results)} responses")
    print(f"[TOTAL WALL TIME] {total:.2f} seconds")


asyncio.run(main())
```

Output:

- All three HTTP requests start almost immediately
- Each request completes after its own delay
- The longest request determines the total wall time
- Total runtime is approximately three and a half seconds, not the sum of all delays

```
Fetching https://httpbin.org/delay/1
Fetching https://httpbin.org/delay/2
Fetching https://httpbin.org/delay/3
Finished https://httpbin.org/delay/1 in 1.26 seconds
Finished https://httpbin.org/delay/2 in 2.20 seconds
Finished https://httpbin.org/delay/3 in 3.52 seconds

Fetched 3 responses
[TOTAL WALL TIME] 3.52 seconds
```

This approach significantly improves performance when calling multiple APIs and is a common pattern in modern async Python services.
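The thread-offloading pattern can also be tried without network access. The sketch below is an illustration only: `blocking_call` is a hypothetical stand-in for `fetch_sync` that blocks with `time.sleep` instead of making an HTTP request, and the delays are shortened. `asyncio.to_thread` requires Python 3.9 or later.

```python
import asyncio
import time


def blocking_call(label, seconds):
    # Hypothetical stand-in for fetch_sync: blocks its thread like a slow request
    time.sleep(seconds)
    return {"label": label, "delay": seconds}


async def fetch(label, seconds):
    # The blocking function runs in a worker thread, so the event loop stays free
    return await asyncio.to_thread(blocking_call, label, seconds)


async def main():
    start = time.perf_counter()
    results = await asyncio.gather(
        fetch("a", 0.1),
        fetch("b", 0.2),
        fetch("c", 0.3),
    )
    return results, time.perf_counter() - start


results, total = asyncio.run(main())

print([r["label"] for r in results])
print(f"[TOTAL WALL TIME] {total:.2f} seconds")
```

The total stays close to the longest single delay even though each call blocks its own thread, which mirrors the timing seen with the real HTTP requests.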

# Implementing Error Handling Patterns in Async Python Applications

Robust async applications must handle failures gracefully. In concurrent systems, a single failing task should not cause the entire workflow to fail. Proper error handling ensures that successful tasks complete while failures are reported cleanly.

This list includes two successful endpoints and one endpoint that returns an HTTP 404 error.

```python
import asyncio
import urllib.request
import urllib.error  # imported explicitly because HTTPError is referenced below
import json
import socket

URLS = [
    "https://httpbin.org/delay/1",
    "https://httpbin.org/delay/2",
    "https://httpbin.org/status/404",
]
```

This function performs a blocking HTTP request with a timeout. It may raise exceptions such as timeouts or HTTP errors.

```python
def fetch_sync(url, timeout):
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode())
```

This function wraps a blocking HTTP request in a safe asynchronous interface. The blocking operation is executed in a background thread using `asyncio.to_thread`, which prevents the event loop from stalling while the request is in progress.

Common failure cases such as timeouts and HTTP errors are caught and converted into structured responses. This ensures that errors are handled predictably and that a single failing request does not interrupt the execution of other concurrent tasks.

```python
async def safe_fetch(url, timeout=5):
    try:
        return await asyncio.to_thread(fetch_sync, url, timeout)

    except socket.timeout:
        return {"url": url, "error": "timeout"}

    except urllib.error.HTTPError as e:
        return {"url": url, "error": "http_error", "status": e.code}

    except Exception as e:
        return {"url": url, "error": "unexpected_error", "message": str(e)}
```

All requests are executed concurrently using `asyncio.gather`.

```python
async def main():
    results = await asyncio.gather(
        *(safe_fetch(url) for url in URLS)
    )

    for result in results:
        print(result)


asyncio.run(main())
```

Output:

- The first two requests complete successfully and return parsed JSON data
- The third request returns a structured error instead of raising an exception
- All results are returned together without interrupting the workflow

```
{'args': {}, 'data': '', 'files': {}, 'form': {}, 'headers': {'Accept-Encoding': 'identity', 'Host': 'httpbin.org', 'User-Agent': 'Python-urllib/3.11', 'X-Amzn-Trace-Id': 'Root=1-6966269f-1cd7fc7821bc6bc469e9ba64'}, 'origin': '3.85.143.193', 'url': 'https://httpbin.org/delay/1'}
{'args': {}, 'data': '', 'files': {}, 'form': {}, 'headers': {'Accept-Encoding': 'identity', 'Host': 'httpbin.org', 'User-Agent': 'Python-urllib/3.11', 'X-Amzn-Trace-Id': 'Root=1-6966269f-5f59c151487be7094b2b0b3c'}, 'origin': '3.85.143.193', 'url': 'https://httpbin.org/delay/2'}
{'url': 'https://httpbin.org/status/404', 'error': 'http_error', 'status': 404}
```

This pattern ensures that a single failing request does not break the entire async operation and is essential for production-ready async applications.
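A related option, not used in the example above, is the `return_exceptions=True` argument of `asyncio.gather`, which returns raised exceptions as ordinary values in the result list. The sketch below assumes a hypothetical `flaky` coroutine that fails for one task ID; it illustrates the argument and is not a replacement for the structured wrapper.

```python
import asyncio


async def flaky(task_id):
    await asyncio.sleep(0.05)
    if task_id == 2:
        # Simulated failure for illustration
        raise ValueError(f"task {task_id} failed")
    return f"task {task_id} ok"


async def main():
    # With return_exceptions=True, exceptions are collected instead of propagated
    return await asyncio.gather(
        *(flaky(task_id) for task_id in (1, 2, 3)),
        return_exceptions=True,
    )


results = asyncio.run(main())

for result in results:
    if isinstance(result, Exception):
        print("failed:", result)
    else:
        print("ok:", result)
```

A wrapper like `safe_fetch` gives finer control over how each failure is reported. `return_exceptions=True` is a lighter-weight choice when inspecting the exception objects afterwards is enough.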

# Using Async Programming in Jupyter Notebooks

Jupyter notebooks already run an active event loop. Because of this, `asyncio.run` cannot be used inside a notebook cell, as it attempts to start a new event loop while one is already running.

This async function simulates a simple non-blocking task using `asyncio.sleep`.

```python
import asyncio


async def main():
    await asyncio.sleep(1)
    print("Async task completed")
```

Incorrect usage in notebooks: `asyncio.run(main())`

Correct usage in notebooks: `await main()`

Understanding this distinction ensures async code runs correctly in Jupyter notebooks and prevents common runtime errors when experimenting with asynchronous Python.
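The same error can be reproduced in a plain script by calling `asyncio.run` from inside a coroutine that is already running on an event loop. This is a rough sketch of that situation; `notebook_like_cell` and `inner` are hypothetical names, and the coroutine stands in for a notebook cell rather than using Jupyter itself.

```python
import asyncio


async def inner():
    return "done"


async def notebook_like_cell():
    # An event loop is already running here, much like inside a notebook cell
    coro = inner()
    try:
        asyncio.run(coro)            # fails: cannot start a second loop
        error = None
    except RuntimeError as exc:
        error = str(exc)
    finally:
        coro.close()                 # avoid a "never awaited" warning

    result = await inner()           # awaiting directly works
    return error, result


error, result = asyncio.run(notebook_like_cell())

print("asyncio.run inside a running loop:", error)
print("await inside a running loop:", result)
```

The nested `asyncio.run` call raises a `RuntimeError`, while awaiting the coroutine directly succeeds, which is why `await main()` is the form that works in a notebook cell.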

# Controlling Concurrency with Async Semaphores

External APIs and services often enforce rate limits, which makes it unsafe to run too many requests at the same time. Async semaphores allow you to control how many tasks execute concurrently while still benefiting from asynchronous execution.

The semaphore is initialized with a limit of two, meaning only two tasks can enter the protected section at the same time.

```python
import asyncio
import time

semaphore = asyncio.Semaphore(2)  # allow only 2 tasks at a time
```

The `task` function represents an asynchronous unit of work. Each task must acquire the semaphore before executing, and if the limit has been reached, it waits until a slot becomes available.

Once inside the semaphore, the task records its start time, prints a start message, and awaits a two-second non-blocking sleep to simulate an I/O-bound operation. After the sleep completes, the task calculates its execution time, prints a completion message, and releases the semaphore.

```python
async def task(task_id):
    async with semaphore:
        start = time.perf_counter()
        print(f"Task {task_id} started")

        await asyncio.sleep(2)

        elapsed = time.perf_counter() - start
        print(f"Task {task_id} finished in {elapsed:.2f} seconds")
```

The `main` function schedules four tasks to run concurrently using `asyncio.gather`, but the semaphore ensures that they execute in two waves of two tasks.

Finally, `asyncio.run` starts the event loop and runs the program, resulting in a total execution time of approximately four seconds.

```python
async def main():
    start = time.perf_counter()

    await asyncio.gather(
        task(1),
        task(2),
        task(3),
        task(4),
    )

    total = time.perf_counter() - start
    print(f"\n[TOTAL WALL TIME] {total:.2f} seconds")


asyncio.run(main())
```

Output:

- Tasks 1 and 2 start first due to the semaphore limit
- Tasks 3 and 4 wait until a slot becomes available
- Tasks execute in two waves, each lasting two seconds
- Total wall time is approximately four seconds

```
Task 1 started
Task 2 started
Task 1 finished in 2.00 seconds
Task 2 finished in 2.00 seconds
Task 3 started
Task 4 started
Task 3 finished in 2.00 seconds
Task 4 finished in 2.00 seconds

[TOTAL WALL TIME] 4.00 seconds
```

Semaphores provide an effective way to enforce concurrency limits and protect system stability in production async applications.
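One way to check that a limit is actually enforced is to count how many tasks are inside the protected section at once. The sketch below is an assumption-laden illustration: `limited_task` and the `state` dictionary are hypothetical, the delay is shortened, and the semaphore is created inside `main` so that it belongs to the running event loop.

```python
import asyncio


async def limited_task(task_id, semaphore, state):
    async with semaphore:
        # Track how many tasks hold the semaphore right now
        state["active"] += 1
        state["peak"] = max(state["peak"], state["active"])

        await asyncio.sleep(0.05)    # simulated I/O

        state["active"] -= 1


async def main(limit=2, count=4):
    semaphore = asyncio.Semaphore(limit)
    state = {"active": 0, "peak": 0}
    await asyncio.gather(
        *(limited_task(i, semaphore, state) for i in range(count))
    )
    return state["peak"]


peak = asyncio.run(main())
print(f"Peak concurrent tasks: {peak}")
```

The peak never exceeds the configured limit, no matter how many tasks are scheduled.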

# Concluding Remarks

Async programming is not a universal solution. It is not suitable for CPU-intensive workloads such as machine learning training, image processing, or numerical simulations. Its strength lies in handling I/O-bound operations where waiting time dominates execution.
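The limitation with CPU-intensive work can be observed directly: code that never reaches an `await` gives the event loop no chance to run anything else. The following is a minimal sketch; `ticker` and `busy` are hypothetical helpers, and the busy loop merely simulates a computation.

```python
import asyncio
import time


async def ticker(ticks, stop):
    # A lightweight background task that should tick every 10 ms
    while not stop.is_set():
        ticks.append(time.perf_counter())
        await asyncio.sleep(0.01)


def busy(seconds):
    # Simulated CPU-bound work: spins without ever yielding to the event loop
    deadline = time.perf_counter() + seconds
    while time.perf_counter() < deadline:
        pass


async def main():
    ticks = []
    stop = asyncio.Event()
    background = asyncio.create_task(ticker(ticks, stop))

    await asyncio.sleep(0.05)        # the ticker runs freely here
    before = len(ticks)

    busy(0.2)                        # the event loop is blocked here
    during = len(ticks) - before

    stop.set()
    await background
    return before, during


before, during = asyncio.run(main())
print(f"ticks while awaiting: {before}, ticks during CPU work: {during}")
```

The ticker makes progress while `main` is awaiting but records nothing during the busy loop, because the CPU-bound function holds the only thread until it returns.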

When used correctly, async programming improves throughput by allowing tasks to make progress while others are waiting. Proper use of `await` is essential for concurrency, and async patterns are especially effective in API-driven and service-based systems.

In production environments, controlling concurrency and handling failures explicitly are critical to building reliable and scalable async Python applications.

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master’s degree in technology management and a bachelor’s degree in telecommunication engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.